AI agents get serious about trust and control

Codenotary, Klient PSA, Georgia Tech, and Samsara show AI agents are moving into monitored, explainable, human-led business workflows.

AI agents are getting pulled into real business work. AI Agent Store highlighted four developments today: a monitoring tool from Codenotary, a hybrid delivery model from Klient PSA, a trust study from Georgia Tech, and a physical AI demo from Samsara. The common thread is simple: companies want agents that do useful work without creating security, billing, or trust headaches.

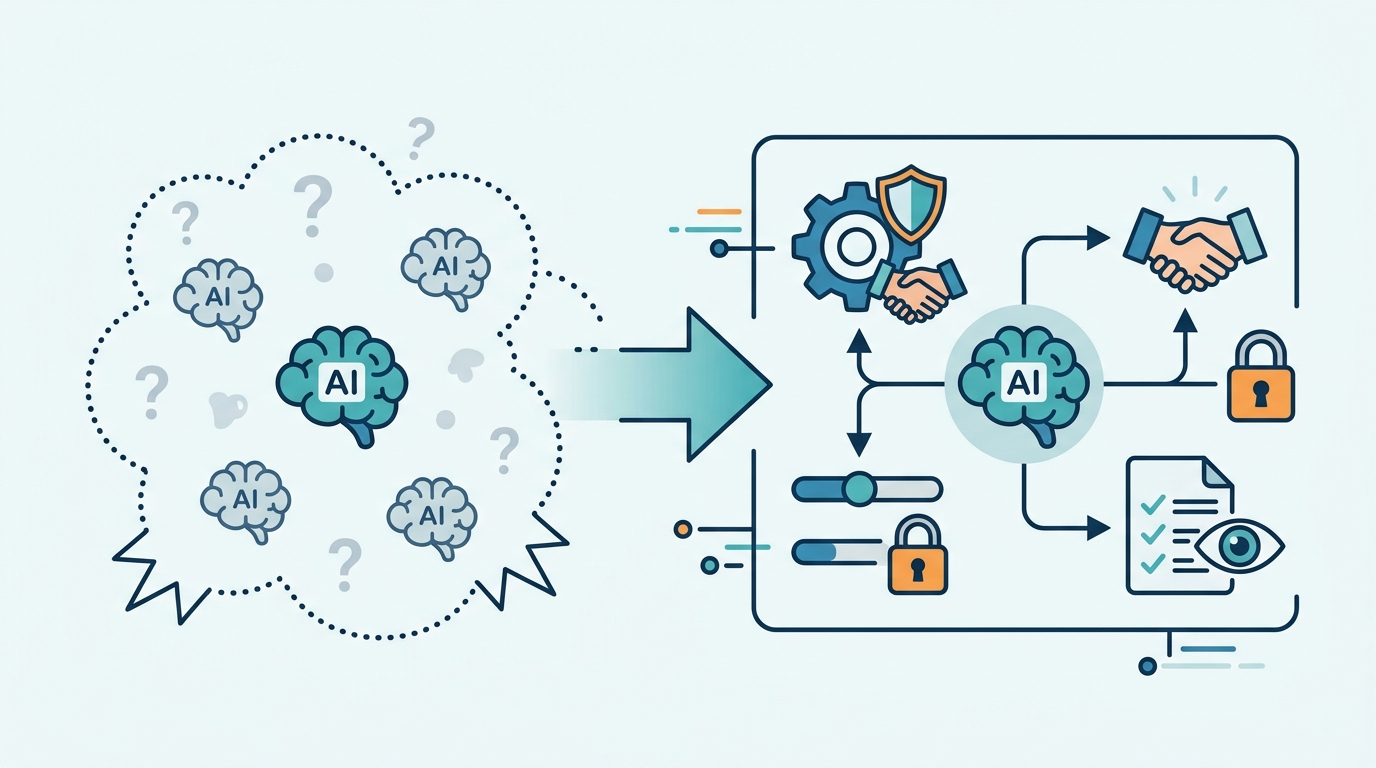

This is the stage where AI stops being a demo and starts acting like infrastructure. Once agents touch files, make decisions, and coordinate with people, the real question is no longer whether they can do the task. It is whether anyone can explain what they did, stop them when they go off script, and prove they did not leak data along the way.

Codenotary’s AgentMon is a sign of the times

AgentMon is built for the problem every enterprise AI team eventually hits: visibility. Codenotary says the tool tracks what AI agents do across systems, including file access, behavior patterns, and data movement. That matters because agentic systems can make a lot of small decisions very quickly, and one bad permission or prompt injection can turn into a messy incident.

The timing is telling. Businesses are adding AI agents to workflows faster than their security teams can write policies for them. Monitoring is becoming a product category because old software logs were designed for humans and simple apps, not autonomous software that can browse, write, call tools, and trigger actions on its own.

- AgentMon watches agent behavior across systems

- It tracks file access and data patterns

- It is aimed at data leaks, cost overruns, and policy violations

- It targets companies deploying agents in production, not hobby projects

That focus on observability is a big deal. If an agent can touch customer records, internal docs, or cloud services, then security teams need more than a transcript. They need a trail that shows what the agent saw, what it changed, and why it made the move.
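To make the idea of a trail concrete, here is a minimal sketch of an audit record that captures what an agent saw, what it changed, and why. It is not AgentMon's data model or API, which the announcement does not describe; every class and field name here (`AuditEvent`, `AuditTrail`, `inputs_seen`, and so on) is a hypothetical chosen for illustration.

```python
import json
from dataclasses import dataclass, field, asdict
from datetime import datetime, timezone
from typing import List


@dataclass
class AuditEvent:
    """One agent action: what it saw, what it changed, and why (hypothetical schema)."""
    agent_id: str
    action: str
    inputs_seen: List[str]
    resources_changed: List[str]
    reason: str
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


class AuditTrail:
    """Append-only, in-memory log; a real deployment would need durable storage."""

    def __init__(self) -> None:
        self._events: List[AuditEvent] = []

    def record(self, event: AuditEvent) -> None:
        self._events.append(event)

    def changes_to(self, resource: str) -> List[AuditEvent]:
        """Return every recorded event that modified the given resource."""
        return [e for e in self._events if resource in e.resources_changed]

    def to_json_lines(self) -> str:
        """Serialize the trail as one JSON object per line."""
        return "\n".join(json.dumps(asdict(e)) for e in self._events)
```

A reviewer could then ask `trail.changes_to("crm/customer-42")` and get back each action that touched that record, along with the inputs and stated reason behind it, which is more than a chat transcript offers.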

For developers, this is also a hint about where the market is heading. The next wave of AI tools will not just sell better agents. They will sell audit trails, policy controls, and cost guardrails around those agents.

Klient PSA is betting on human-plus-agent delivery

Klient PSA introduced Hybrid Project Delivery, a setup built around eight specialized AI agents working with human consultants. Each agent handles a narrow function such as project planning or software development. The company says pricing starts at $15 per user per month, plus a one-time $1,000 fee per AI agent, with launch planned in three weeks.

That pricing model is interesting because it separates the recurring seat cost from the cost of the agents. Most SaaS tools charge per seat or by usage. Klient PSA is charging for the human interface and the agent layer separately, which tells you the company thinks the agent itself is a billable unit. That is a very different way to package automation.
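The announced pricing can be worked through with a little arithmetic. The sketch below assumes the starting price of $15 per user per month applies as listed and that the $1,000 fee is charged once per agent with no other charges; the team size and time span are hypothetical.

```python
USER_FEE_PER_MONTH = 15      # dollars, the announced starting price
AGENT_ONE_TIME_FEE = 1_000   # dollars per AI agent, charged once


def total_cost(users: int, agents: int, months: int) -> int:
    """Total spend over `months`: recurring seat fees plus one-time agent fees."""
    if min(users, agents, months) < 0:
        raise ValueError("users, agents, and months must be non-negative")
    return users * USER_FEE_PER_MONTH * months + agents * AGENT_ONE_TIME_FEE


# Hypothetical team: 10 users, all eight agents, first 12 months.
first_year = total_cost(users=10, agents=8, months=12)
print(first_year)  # 10 * 15 * 12 + 8 * 1000 = 9800
```

Under those assumptions the agent fees ($8,000) dominate the first year, while the seat fees ($1,800) are the only part that recurs.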

“The future of AI is not about replacing humans, it’s about augmenting human capabilities,” Satya Nadella said at Microsoft Build 2017.

Nadella’s line gets quoted a lot because it maps cleanly onto what Klient PSA is selling here. The agents are not replacing consultants; they are being inserted into a managed delivery system where humans still own the relationship, the judgment calls, and the final sign-off.

That model may be easier to sell than fully autonomous AI. Buyers in IT services and project delivery already know how to pay for people. Adding agents into that billing structure gives them something they can understand, budget for, and audit.

- Eight AI agents are included in the hybrid delivery model

- Base pricing starts at $15 per user per month

- Each AI agent adds a one-time $1,000 cost

- Launch is scheduled in about three weeks

There is also a quiet operational lesson here. If a vendor can name the job each agent performs, customers can assign ownership, set expectations, and measure output. That is much easier than buying a vague “AI assistant” and hoping it behaves.

Trust is becoming a product feature, not a soft skill

The Georgia Tech research points to a problem many teams have been underestimating: people do not trust AI agents just because the model sounds confident. Researchers found that older adults trusted AI agents more when the systems explained how they reached a decision. Simple confidence scores like “92% sure” backfired because they did not answer the real question: what information did the agent use?

That insight matters far beyond accessibility. If a system is making recommendations about health, money, scheduling, or customer service, users want a reason they can inspect. A percentage score may look scientific, but it does not tell a person whether the AI read the right file, used stale data, or guessed from weak signals.

Georgia Tech’s finding also exposes a design mistake that shows up everywhere in AI products. Teams often think certainty language is enough. It is not. Users want evidence, source references, and a short explanation of the path from input to answer.

- Older adults trusted AI agents more with clear explanations

- Confidence scores like “92% sure” reduced trust

- Users wanted to know what data drove the decision

- Explainability mattered more than raw certainty wording

This has practical consequences for product teams. If your agent is customer-facing, the UI should expose the why behind the answer, not just the answer itself. If your agent is internal, logs and citations matter because the person reviewing the output needs to verify it fast.
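One way to picture that design choice is an answer object that carries its reasoning and sources with it, instead of a bare confidence score. This is a hedged sketch, not something taken from the Georgia Tech study; `Evidence`, `ExplainedAnswer`, and `render` are hypothetical names, and the refusal to render without evidence is an illustrative policy.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Evidence:
    source: str   # e.g. a file name or record ID the agent actually read
    excerpt: str  # the passage that supports the answer


@dataclass
class ExplainedAnswer:
    answer: str
    reasoning: str
    evidence: List[Evidence]

    def render(self) -> str:
        """Format the answer together with its reasoning and sources."""
        if not self.evidence:
            raise ValueError("refusing to render an answer with no evidence")
        lines = [self.answer, f"Why: {self.reasoning}", "Based on:"]
        lines += [f'- {e.source}: "{e.excerpt}"' for e in self.evidence]
        return "\n".join(lines)
```

The point of the structure is that the user, or an internal reviewer, can check which file or record the agent relied on, which a percentage alone cannot show.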

It also explains why some AI products feel impressive in a demo and brittle in real use. A polished answer is nice. A traceable answer is what gets adopted.

Physical AI is moving from slides to warehouses and roads

Samsara is set to showcase physical AI at the HumanX 2026 conference on April 8, with autonomous trucks and robots designed to work alongside human operators. That is a different class of agent work from document handling or customer support. Here, the software is tied to sensors, vehicles, and safety-critical workflows.

The comparison with the other announcements is useful. AgentMon is about watching digital behavior. Klient PSA is about packaging digital labor. Georgia Tech is about trust in decision-making. Samsara is about putting AI into motion in the physical world, where mistakes can hit schedules, equipment, and people.

- AgentMon focuses on monitoring digital actions

- Klient PSA packages eight task-specific agents into service delivery

- Georgia Tech found explainability matters more than confidence scores

- Samsara is demoing autonomous trucks and robots at HumanX 2026 on April 8

Put together, these updates show a pattern that is hard to ignore. AI agents are moving into production, but the winning products are the ones that make humans more comfortable with them. That means better monitoring, clearer explanations, and tighter control over where the agent can act.

If you are building in this space, the takeaway is direct: ship the guardrails with the agent, not after the incident. The next buyers will ask where the logs are, who can override the system, and how the output can be explained in plain language. The teams that answer those questions first will have an easier path into real deployments.
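As a rough illustration of shipping the guardrails with the agent, the sketch below wraps every agent action in an allow-list check, an optional human approval step, and a log entry. It is a minimal sketch under stated assumptions, not any vendor's implementation; `Guardrail`, `needs_approval`, and the `approve` callback are hypothetical names.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Set


@dataclass
class Guardrail:
    allowed_actions: Set[str]             # actions the agent may attempt at all
    needs_approval: Set[str]              # allowed actions that need human sign-off
    approve: Callable[[str, str], bool]   # hypothetical human-review hook
    log: List[str] = field(default_factory=list)

    def run(self, action: str, target: str, execute: Callable[[], str]) -> str:
        """Run `execute` only if policy and, where required, a reviewer allow it."""
        if action not in self.allowed_actions:
            self.log.append(f"DENIED {action} {target}")
            raise PermissionError(f"{action} is not permitted")
        if action in self.needs_approval and not self.approve(action, target):
            self.log.append(f"REJECTED {action} {target}")
            raise PermissionError(f"{action} on {target} was rejected by a reviewer")
        result = execute()
        self.log.append(f"OK {action} {target}")
        return result
```

Because every outcome is logged, including denials and rejections, the same object answers the three buyer questions: where the logs are, who can override the system, and what the agent was actually allowed to do.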

My bet is that the next 12 months will reward agent products that treat trust as a feature and observability as a default. If your AI agent cannot explain itself, cannot be audited, and cannot be constrained, it will struggle to move past pilots. The real question now is which vendors can prove control before the first serious failure forces them to.
