AI Models in 2026: Which One to Use

Gemini 3.1 Pro leads reasoning, Claude writes best, and Grok tops some coding tests, so the right pick depends on the task.

In 2026, the right AI model depends on the task; there is no single winner.

By 2026, the model race has split into specialties. OpenAI, Anthropic, Google DeepMind, and xAI all have models that win at different jobs, and the numbers make that clear.

The source article points to four models that matter most: GPT-5.4, Claude Opus 4.6, Gemini 3.1 Pro, and Grok 4. Each one leads somewhere, but none owns every category.

| Model | Coding | Reasoning | Writing | API price per 1M tokens (input / output) |
|---|---|---|---|---|
| GPT-5.4 | 74.9% SWE-bench | 92.8% GPQA | Canvas editor | $2.50 / $15 |
| Claude Opus 4.6 | 74%+ SWE-bench | 91.3% GPQA | 128K output, natural prose | $15 / $75 |
| Gemini 3.1 Pro | 63.8% SWE-bench | 94.3% GPQA | Docs integration | $2 / $12 |
| Grok 4 | 75% SWE-bench | Competitive | Uncensored style | $2 / $15 |

The 2026 story is specialization

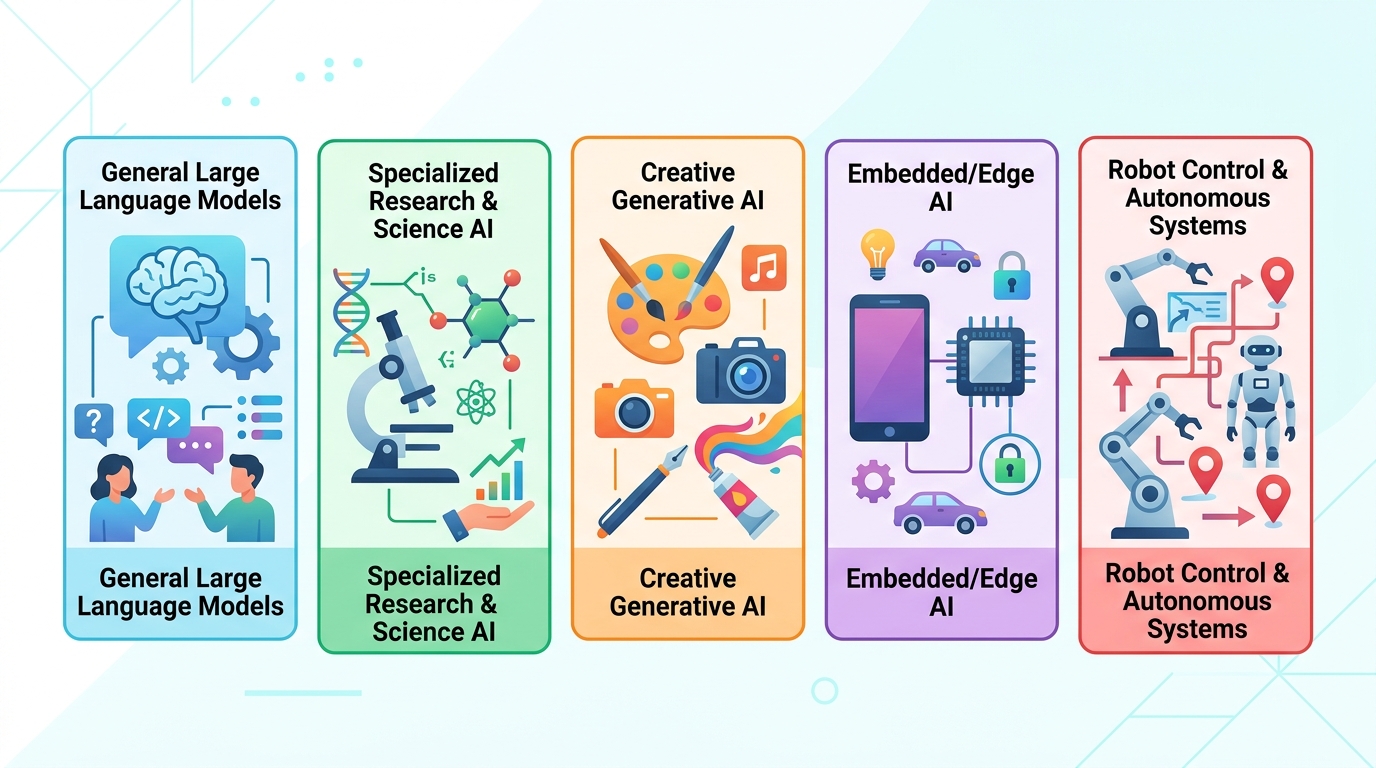

The biggest shift in AI this year is simple: the best model depends on what you ask it to do. That sounds obvious, but it matters because older buying habits were built around one assistant doing everything well enough. In 2026, that assumption breaks down.

For coding, Grok 4 leads the source’s raw benchmark table with 75% on SWE-bench. GPT-5.4 follows at 74.9%, while Claude Opus 4.6 sits at 74%+. Those three scores sit within about a percentage point of each other, so workflow matters almost as much as the benchmark.

For reasoning, Gemini 3.1 Pro pulls ahead with 94.3% on GPQA. GPT-5.4 posts 92.8%, and Claude Opus 4.6 reaches 91.3%. If you care about hard science, math-heavy analysis, or structured thinking, a lead of 1.5 points over GPT-5.4 and 3 points over Claude Opus 4.6 is enough to notice.

- Grok 4: 75% SWE-bench for coding

- GPT-5.4: 92.8% GPQA for reasoning

- Gemini 3.1 Pro: 94.3% GPQA for reasoning

- Claude Opus 4.6: 128K output for long writing tasks

Claude wins when the output has to read well

If your work lives in long documents, reports, proposals, or polished drafts, Claude is the model most people will want to test first. The article’s claim is plain: Claude produces the most natural prose, and Opus 4.6 can output 128K tokens in one pass.

That matters because writing quality is not just style. Long-context writing changes how teams draft internal docs, research summaries, and customer-facing material. A model that can hold a huge draft together without losing tone or structure saves editing time later.

Anthropic has also become deeply tied to developer workflows. The article notes that Claude powers Cursor and Windsurf, two of the most popular AI coding editors. That ecosystem effect matters because model quality is only part of the experience.

“Claude is the best model for writing and coding assistants.” — Andrew Ng, speaking on his personal site and in public talks about AI workflows

Business buyers should care about the system, not the chatbot

The article makes its strongest point in the business section: the model matters less than the orchestration around it. That is the part many comparison posts miss. A support bot that can route questions, retrieve knowledge base answers, and hand off to a human will outperform a raw chatbot, even if the chatbot uses a stronger base model.

GuruSup’s own framing is that AI agents can handle customer support, sales, and internal help desks at automation rates of 40% to 60%. The important detail is the architecture. The model is only one layer in a larger workflow.

That is why companies should compare products on four practical questions: can it retrieve the right data, can it escalate cleanly, can it track intent across multiple turns, and can it fit the budget when volume rises? If the answer to all four is yes, the exact model becomes less important than the system design.

- 40% to 60% automation rates are possible in support workflows

- Routing and retrieval matter more than raw model size

- Human escalation still matters for messy edge cases

- ROI depends on workflow design, not benchmark bragging rights
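The routing, retrieval, and escalation layers described above can be sketched in a few lines. This is a minimal illustration, not GuruSup’s architecture or any vendor’s API: the knowledge base, the keyword table, and the names `route`, `handle`, and `automation_rate` are all hypothetical, and a production system would likely replace the keyword match with a classifier or a model call.

```python
from dataclasses import dataclass
from typing import List, Optional

# Hypothetical knowledge base (intent -> answer). Illustrative data only.
KNOWLEDGE_BASE = {
    "reset_password": "Use the 'Forgot password' link on the sign-in page.",
    "billing_cycle": "Invoices are issued on the first day of each month.",
}

# Hypothetical keyword routing table; stands in for a real intent classifier.
INTENT_KEYWORDS = {
    "reset_password": ("password", "login"),
    "billing_cycle": ("invoice", "billing"),
}


@dataclass
class Reply:
    text: str
    escalated: bool


def route(message: str) -> Optional[str]:
    """Return the first intent whose keywords appear in the message, else None."""
    lowered = message.lower()
    for intent, keywords in INTENT_KEYWORDS.items():
        if any(word in lowered for word in keywords):
            return intent
    return None


def handle(message: str) -> Reply:
    """Route, then retrieve; hand off to a human if either step comes up empty."""
    intent = route(message)
    answer = KNOWLEDGE_BASE.get(intent) if intent else None
    if answer is None:
        return Reply("Connecting you with a human agent.", escalated=True)
    return Reply(answer, escalated=False)


def automation_rate(messages: List[str]) -> float:
    """Share of messages answered without escalation (0.0 for an empty list)."""
    if not messages:
        return 0.0
    replies = [handle(m) for m in messages]
    return sum(not r.escalated for r in replies) / len(replies)
```

In this sketch the automation rate is set entirely by how well routing and retrieval cover the incoming messages; no model choice appears in the code at all, which is the point the section makes.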

What each model is best at in practice

The article’s decision tree is useful because it maps models to real jobs instead of abstract scores. If you code all day, Claude Opus 4.6 and Grok 4 are the names to check first. If you need deep reasoning, Gemini 3.1 Pro is the one that jumps out. If you write a lot, Claude is the safest bet.

GPT-5.4 is the broadest pick. It does not top every category, but it stays competitive across coding, reasoning, and writing, and it has the biggest ecosystem around it. For many teams, that ecosystem is the real product.
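The mapping above is small enough to write down as a lookup. The sketch below only restates the article’s recommendations as data; the names `FIRST_PICKS`, `DEFAULT_PICK`, and `models_to_test` are hypothetical, and the task labels are an assumption about how a team might categorize its work.

```python
from typing import List

# Task -> models to trial first, as recommended in the text above.
# These are editorial picks, not benchmark output.
FIRST_PICKS = {
    "coding": ["Claude Opus 4.6", "Grok 4"],
    "reasoning": ["Gemini 3.1 Pro"],
    "writing": ["Claude Opus 4.6"],
}

# Fallback for anything else: the article's "broadest pick".
DEFAULT_PICK = ["GPT-5.4"]


def models_to_test(task: str) -> List[str]:
    """Return the models to evaluate first for a task label (case-insensitive)."""
    return FIRST_PICKS.get(task.strip().lower(), DEFAULT_PICK)


if __name__ == "__main__":
    for task in ("coding", "reasoning", "writing", "general"):
        print(task, "->", ", ".join(models_to_test(task)))
```

Keeping the picks in a plain dictionary makes it easy to revise the shortlist as new benchmark numbers or integrations appear.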

For real-time information, Grok 4 gets a boost from live X data, while Perplexity brings a search-first approach that still feels distinct from the main frontier models. For low API cost, Gemini 3.1 Pro is the cheapest in the source table at $2 in and $12 out per 1 million tokens. Grok 4 matches the $2 input price but charges $15 for output.
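To see what the per-token prices mean for a budget, the following sketch computes a monthly bill from the source table’s prices. The workload of 50 million input tokens and 10 million output tokens is a made-up example, and `monthly_cost` is a hypothetical helper; real bills may also depend on caching, batching, or tiered pricing that the table does not cover.

```python
# USD per 1M tokens as (input, output), copied from the article's table.
PRICES = {
    "GPT-5.4": (2.50, 15.00),
    "Claude Opus 4.6": (15.00, 75.00),
    "Gemini 3.1 Pro": (2.00, 12.00),
    "Grok 4": (2.00, 15.00),
}


def monthly_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Cost in USD for a given token volume at the listed per-1M-token prices."""
    price_in, price_out = PRICES[model]
    return (input_tokens * price_in + output_tokens * price_out) / 1_000_000


if __name__ == "__main__":
    # Hypothetical workload: 50M input tokens and 10M output tokens per month.
    tokens_in, tokens_out = 50_000_000, 10_000_000
    for name in sorted(PRICES, key=lambda m: monthly_cost(m, tokens_in, tokens_out)):
        print(f"{name}: ${monthly_cost(name, tokens_in, tokens_out):,.2f}")
```

For that assumed workload the listed prices work out to $220 for Gemini 3.1 Pro, $250 for Grok 4, $275 for GPT-5.4, and $1,500 for Claude Opus 4.6, so the ranking depends on the input-to-output mix as well as the headline price.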

What I would pick if I were buying today

If I needed one model for general work, I would start with GPT-5.4 because the ecosystem matters and the model is strong across the board. If I were building a writing-heavy product, I would test Claude first. If I were doing research or scientific analysis, Gemini 3.1 Pro would be my default trial.

For coding tools, I would care less about benchmark headlines and more about where the model is already integrated. Cursor, Windsurf, and Claude Code give Claude an advantage that pure scores do not capture. For support automation, I would ignore the chatbot pitch and ask how the agent handles retrieval, routing, and escalation.

The practical takeaway is blunt: do not buy an AI model like it is a phone spec sheet. Pick the model that matches the job, then measure how well the workflow around it handles your data, your users, and your edge cases. If you want a shortcut, ask one question before you choose: is this task about code, reasoning, writing, or operations?

For a deeper comparison, read the related OraCore.dev guides on Perplexity vs ChatGPT and Claude vs ChatGPT.
