Why Distributed Systems Solve Business Problems

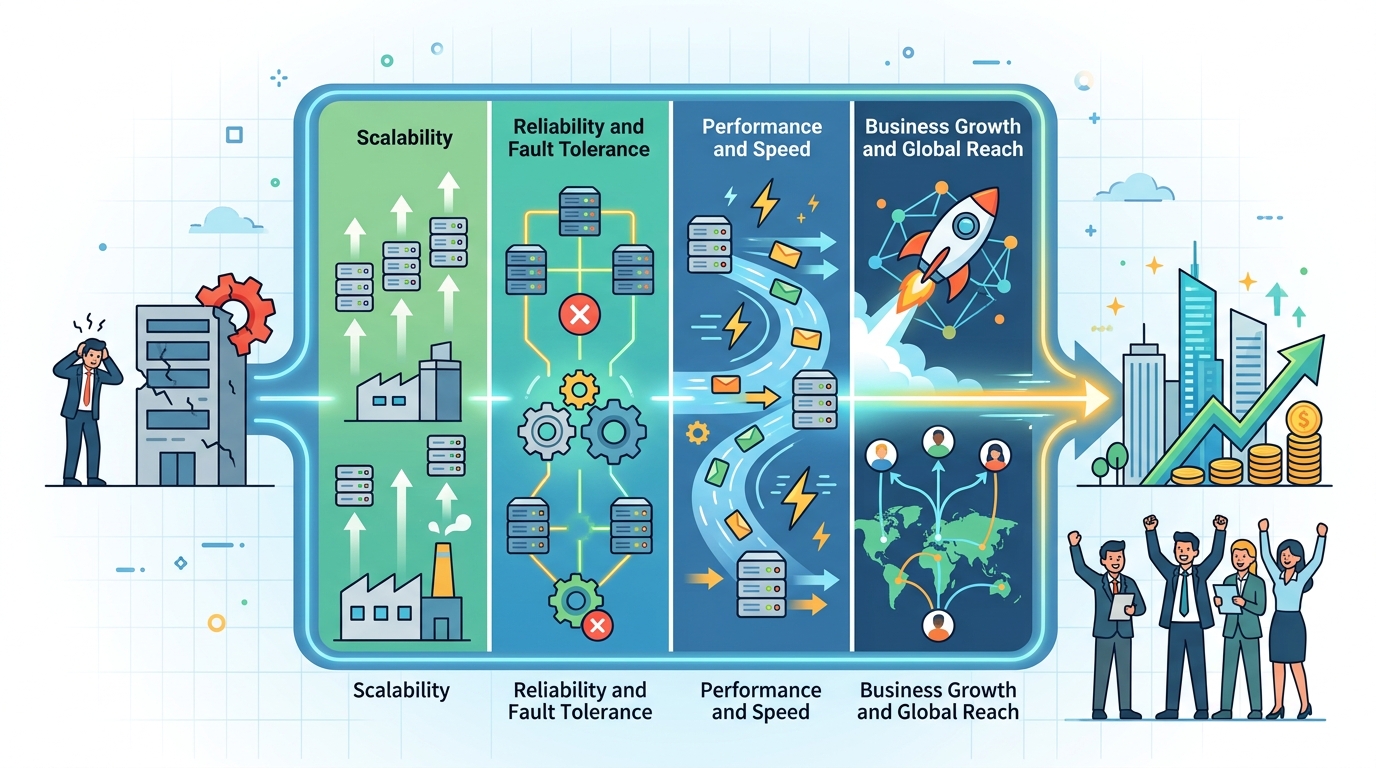

Distributed systems are business tools, not just infra. They cut outage risk, support global growth, and simplify complex workflows.

When a company runs across regions, handles millions of transactions, or needs to survive a cloud outage, architecture stops being an internal detail. It becomes a business decision with real cost attached. In Sunil Thamatam’s ITOps Times article, the point is clear: distributed systems are often the hidden machinery behind growth, resilience, and faster product delivery.

That matters because the old “single app, single database, single region” model breaks down fast. Once a business needs local compliance, lower latency, or isolation from failures, distributed design stops being optional and starts acting like strategy.

Distributed systems change what a company can do

At the technical level, distributed systems split work across services, regions, and data stores. At the business level, that split changes the shape of the company itself. A firm that can route users to the right region, keep data where regulations demand, and continue serving traffic during a regional outage can expand with far less fear.

This is why companies like AWS, Google Cloud, and DataStax keep pushing multi-region and distributed data patterns. The technical story is about replication and failover. The business story is about continuity, geography, and trust.

Thamatam gives a concrete example: a service that distributed data across multiple regions and switched to a secondary region during an AWS outage. That kind of design is easy to dismiss as infra work until the alternative is a platform-wide outage during peak demand.

- Multi-region routing lets businesses keep serving users when one region degrades.

- Data residency controls help teams meet local compliance rules without building separate products.

- Regional isolation limits the blast radius of outages.

- Horizontal scaling gives leadership room to grow without a full rewrite.
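To make the routing and residency points concrete, here is a minimal Python sketch of residency-aware failover. It assumes each region is tagged with a jurisdiction and a health flag (in practice health would come from health checks or a load balancer). The region names, `Region`, and `pick_region` are hypothetical and do not correspond to any cloud provider's API.

```python
from dataclasses import dataclass


@dataclass
class Region:
    name: str            # hypothetical region name
    jurisdiction: str    # hypothetical residency label, e.g. "EU" or "US"
    healthy: bool = True  # assumption: fed by external health checks


def pick_region(regions, user_jurisdiction, preferred):
    """Return the preferred region if it is healthy, otherwise the first healthy
    region in the user's jurisdiction. Data never leaves the allowed jurisdiction."""
    allowed = [r for r in regions if r.jurisdiction == user_jurisdiction]
    # Stable sort: the preferred region first, the rest keep their listed order.
    ordered = sorted(allowed, key=lambda r: r.name != preferred)
    for region in ordered:
        if region.healthy:
            return region.name
    raise RuntimeError(f"no healthy region for jurisdiction {user_jurisdiction}")


regions = [Region("eu-west", "EU"), Region("eu-central", "EU"), Region("us-east", "US")]
print(pick_region(regions, "EU", "eu-west"))  # eu-west
regions[0].healthy = False                     # simulate a regional outage
print(pick_region(regions, "EU", "eu-west"))  # eu-central
```

The design choice worth noting is that failover is constrained by residency first: an EU user is never silently routed to a US region just because it is healthy.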

The interesting part is that these are not abstract benefits. They show up in real budget lines: fewer incident hours, lower revenue loss, and less pressure to overbuild a single central system just to stay safe.

Isolation is really risk management

One of the strongest arguments for distributed systems is fault isolation. In a monolith, one bad database lock, memory leak, or slow query can drag down the whole application. In a distributed setup, the damage can be contained if services are separated well and failures are expected instead of ignored.

That is why patterns like asynchronous queues, circuit breakers, and service boundaries matter. They are engineering techniques, but they also act like insurance. A payment platform can keep authorizing transactions even if reporting is delayed. A notification service can fail without stopping checkout. A search cluster can degrade without taking identity down with it.
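A circuit breaker is one way to buy that insurance in code. The sketch below is a minimal, single-threaded Python version under simple assumptions: it opens after a fixed number of consecutive failures, serves a fallback while open, and lets one probe call through after a cool-down. `CircuitBreaker` and the reporting example are illustrative only; production libraries add thread safety, metrics, and richer half-open policies.

```python
import time


class CircuitBreaker:
    """Minimal circuit breaker (illustrative, not thread-safe)."""

    def __init__(self, max_failures=3, reset_after=30.0, clock=time.monotonic):
        self.max_failures = max_failures
        self.reset_after = reset_after
        self.clock = clock
        self.failures = 0
        self.opened_at = None  # None means the circuit is closed

    def call(self, fn, fallback):
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after:
                return fallback()  # open: fail fast without touching the dependency
            # Cool-down elapsed (half-open): let this call through as a probe.
        try:
            result = fn()
        except Exception:
            self.failures += 1
            # A failed probe, or too many consecutive failures, (re)opens the circuit.
            if self.opened_at is not None or self.failures >= self.max_failures:
                self.opened_at = self.clock()
            return fallback()
        self.failures = 0
        self.opened_at = None  # success closes the circuit
        return result


def flaky_reporting():
    raise TimeoutError("reporting service timed out")


breaker = CircuitBreaker(max_failures=2, reset_after=10.0)
for _ in range(3):
    status = breaker.call(flaky_reporting, fallback=lambda: "report deferred")
print(status, breaker.opened_at is not None)  # report deferred True
```

The third call never reaches the reporting service: the caller (say, a payment flow) gets its fallback immediately instead of waiting on a timeout.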

For a good example of how architecture and business risk overlap, look at Cockroach Labs and MongoDB, both of which sell distributed data systems built around availability and fault tolerance. Their pitch is technical, but the buyer is usually thinking about uptime, recovery, and customer trust.

“Any sufficiently advanced technology is indistinguishable from magic.” — Arthur C. Clarke

That quote fits distributed systems well. When they work, users never think about replication lag, regional failover, or background queues. They just see a product that keeps moving even when one piece fails.

Thamatam also points out a practical business win: controlled experimentation. If a new feature can live in an isolated service, a company can test it without risking the core platform. That lowers the cost of trying new ideas, which matters more than most slide decks admit.
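One common way to run that kind of experiment is deterministic percentage bucketing with a fallback to the core path. This Python sketch assumes a hypothetical experiment named `new-recs` and caller-supplied `core_fn` and `experimental_fn`; none of these come from the article. Hashing the user ID keeps each user in the same bucket across requests, and any exception from the experimental service drops the user back to the core behavior.

```python
import hashlib


def in_experiment(user_id: str, experiment: str, percent: int) -> bool:
    """Deterministically map a user to a bucket 0-99; include them if bucket < percent."""
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode()).hexdigest()
    return int(digest[:8], 16) % 100 < percent


def render_recommendations(user_id, core_fn, experimental_fn, percent=5):
    """Route a small share of users to the isolated experimental service."""
    if in_experiment(user_id, "new-recs", percent):  # hypothetical experiment name
        try:
            return experimental_fn(user_id)
        except Exception:
            pass  # the experiment failed; fall through to the core path
    return core_fn(user_id)


# Even with 100% of users in the experiment, a crashing experimental service
# only costs the new feature, not the page.
print(render_recommendations("user-7", lambda u: "core recs", lambda u: 1 / 0, percent=100))  # core recs
```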

Distributed design simplifies complex organizations

Distributed systems can look more complex on paper because they add services, network calls, and failure modes. In practice, they often reduce organizational confusion. The reason is simple: each service owns a clear job, and each team gets a smaller surface area to manage.

That lines up with the real world of product teams. Identity, billing, analytics, search, and notifications rarely change at the same pace. If all of that sits in one codebase, every change becomes a coordination tax. If the boundaries are clean, teams can ship in parallel.

Thamatam references Conway’s Law, and the point is hard to miss: software often mirrors the structure of the company building it. If the org is split into separate groups, the architecture usually follows. Good distributed design makes that reality work in your favor instead of fighting it.

- Identity can evolve without waiting on billing.

- Search can scale differently from transactional workflows.

- Analytics can lag without blocking customer-facing actions.

- Notification systems can fail independently without stopping the core product.
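That independence usually comes from publishing events instead of calling downstream services inline. In this minimal Python sketch, the standard-library `queue.Queue` stands in for a durable message broker (an assumption: a real broker would persist events and deliver them to a separate consumer process), and `checkout` and `drain_notifications` are hypothetical names. Checkout confirms the order as soon as the event is queued; a failing notification sender only produces events to retry later.

```python
import queue

# In-process stand-in for a durable message broker (assumption for illustration).
events = queue.Queue()


def checkout(order_id: str) -> str:
    # The order is confirmed first; side effects are published as events.
    events.put({"type": "order_placed", "order_id": order_id})
    return f"order {order_id} confirmed"


def drain_notifications(send):
    """Consume queued events; failed sends are collected for retry instead of
    propagating back into checkout."""
    retry = []
    while True:
        try:
            event = events.get_nowait()
        except queue.Empty:
            break
        try:
            send(event)
        except Exception:
            retry.append(event)
    return retry


def broken_sender(event):
    raise ConnectionError("email provider down")


print(checkout("A1"))                      # order A1 confirmed
pending = drain_notifications(broken_sender)
print(len(pending))                        # 1
```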

That kind of separation is especially useful in regulated industries and large SaaS products. It creates clearer ownership, cleaner interfaces, and fewer “who owns this?” moments during incidents.

The tradeoffs are real, and the numbers matter

Distributed systems are powerful, but they are never free. They add latency, operational overhead, and more ways for things to fail. The question is whether those costs are lower than the cost of forcing everything through one central system.

Here is where business leaders need actual numbers, not slogans. If a company can cut a regional outage from 45 minutes to 5 minutes, that is more than a technical win. If a replicated service reduces the chance of a full-platform outage, that changes revenue risk. If a team can ship independently because APIs are stable, that changes release speed.

For comparison, cloud providers and distributed database vendors often publish performance targets and availability targets in the four- to five-nines range (99.99% to 99.999% uptime), but those numbers only matter when the architecture supports them. A single shared dependency can erase all that paper confidence very quickly.
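The arithmetic behind the nines, and behind the shared-dependency warning, fits in a few lines of Python. It assumes a 525,600-minute year and, for redundancy, that replicas fail independently, which real regions only approximate. Components in series multiply their availabilities, so one 99.9% dependency caps the whole path at 99.9% no matter how well everything else is replicated.

```python
MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600, ignoring leap years


def downtime_minutes_per_year(availability: float) -> float:
    """Expected minutes of downtime per year at a given availability."""
    return (1 - availability) * MINUTES_PER_YEAR


def serial(*availabilities: float) -> float:
    """Every component on the path must be up, so availabilities multiply."""
    result = 1.0
    for a in availabilities:
        result *= a
    return result


def redundant(a: float, replicas: int) -> float:
    """Up if any replica is up (assumes independent failures)."""
    return 1 - (1 - a) ** replicas


print(round(downtime_minutes_per_year(0.9999), 2))   # 52.56 (four nines)
print(round(downtime_minutes_per_year(0.99999), 2))  # 5.26 (five nines)
pair = redundant(0.9999, 2)   # two independent 99.99% regions
path = serial(pair, 0.999)    # ...both behind one shared 99.9% dependency
print(round(downtime_minutes_per_year(path), 1))     # 525.6
```

Under these assumptions, the replicated pair on its own would be down for well under a minute a year, yet the shared 99.9% dependency pulls expected downtime back to roughly 525 minutes (about 8.8 hours) a year.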

- Kubernetes is often used to coordinate distributed workloads, but it adds operational complexity of its own.

- Confluent pushes event-driven systems that trade simplicity for better decoupling and real-time flow.

- Uber and Netflix are well-known examples of companies that rely on distributed architectures to keep large-scale services moving.

- CAP tradeoffs are not theory when a network partition forces a product team to choose between strict consistency and availability.

That last point is where the architecture becomes strategic. A business that understands its consistency tolerance can make smarter product calls. Sometimes stale data for a few seconds is acceptable. Sometimes it is not. Distributed systems force that conversation early, before the product ships a promise it cannot keep.
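That per-feature tolerance can be written down directly. This Python sketch, with hypothetical `Replica` and `choose_read_source` names and made-up lag values, serves a read from the freshest replica whose replication lag fits within the caller's staleness budget and otherwise falls back to the primary. Some distributed databases offer bounded-staleness reads along these lines; this sketch does not model any specific product.

```python
from dataclasses import dataclass


@dataclass
class Replica:
    name: str           # hypothetical replica name
    lag_seconds: float  # how far this replica currently trails the primary


def choose_read_source(replicas, max_staleness_seconds: float) -> str:
    """Return the freshest replica within the staleness budget, else the primary."""
    eligible = [r for r in replicas if r.lag_seconds <= max_staleness_seconds]
    if not eligible:
        return "primary"
    return min(eligible, key=lambda r: r.lag_seconds).name


replicas = [Replica("replica-eu", 2.5), Replica("replica-us", 0.8)]
print(choose_read_source(replicas, max_staleness_seconds=5.0))  # replica-us (e.g. a feed)
print(choose_read_source(replicas, max_staleness_seconds=0.0))  # primary (e.g. a balance)
```

The point is that the staleness budget is a product decision passed in by the caller, not a constant buried in the data layer.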

What this means for the next architecture decision

The main takeaway from Thamatam’s argument is simple: distributed systems are not a badge of technical maturity. They are a way to solve business problems that would otherwise stay stuck at the scale of one server, one database, or one region.

If your company is expanding into new markets, processing sensitive data, or depending on always-on workflows, the next architecture review should ask a blunt question: what business risk does centralization create, and what does distribution actually buy us?

My bet is that more teams will stop treating multi-region design as an enterprise luxury and start treating it as a default for anything customer-facing and time-sensitive. The companies that do that well will not just survive outages better. They will make faster bets, enter new markets with less friction, and spend less time turning technical debt into board-level anxiety.

The real test is whether your current system can fail in one place without taking the business with it. If the answer is no, the architecture discussion is already overdue.
