Harness Engineering for Long-Running Multi-Agent Systems

A context-reset design keeps each Claude Code run clean, turning Planner output into JSON so the Generator stays focused on the task.

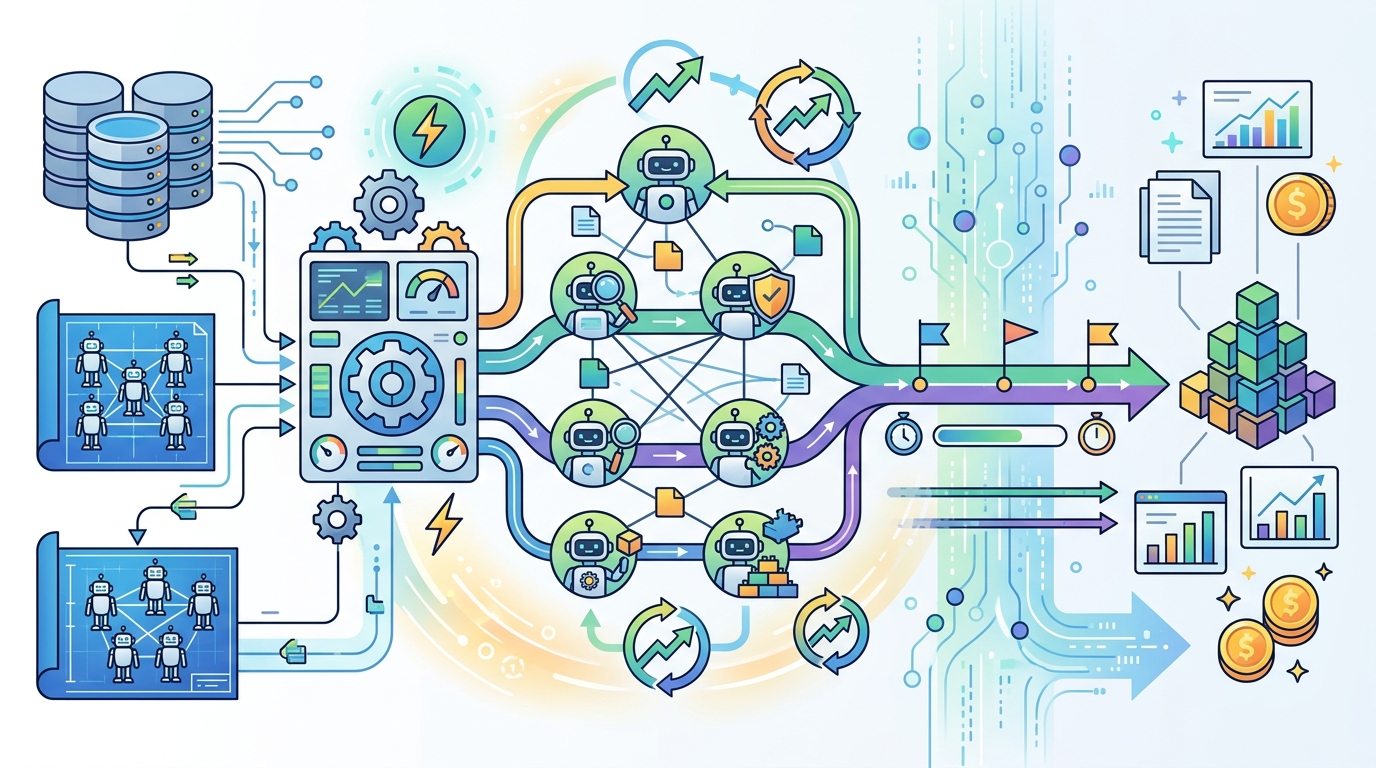

Long-running multi-agent systems fail in boring ways: memory gets polluted, prompts drift, and one agent starts inheriting the half-finished reasoning of another. In the Harness Engineering setup described here, each execution starts a fresh Claude Code process, and the Planner’s output is converted into JSON before the Generator sees it.

That design choice sounds simple, but it solves a real coordination problem. Instead of passing a messy conversation history downstream, the system passes a structured task description. The result is a cleaner boundary between planning and execution, which matters a lot once a workflow runs for minutes or hours instead of a few turns.

Why context reset matters

Many multi-agent failures come from treating conversation history like a shared workspace. That works for short tasks, but it gets risky when the Planner explores alternatives, changes its mind, or leaves behind tentative reasoning that should never reach execution.

Context reset fixes that by making every agent run independent. The Generator does not inherit the Planner’s internal debate. It receives a task payload, then acts on that payload alone. In practice, that reduces accidental coupling between agents and makes failures easier to inspect.

This is especially useful in systems that run many jobs back to back. A clean process boundary means fewer hidden dependencies, fewer prompt artifacts, and less chance that an old instruction survives longer than it should.

- Each agent execution starts from a fresh process

- Planner output becomes structured JSON, not chat history

- Generator sees only the final task definition

- Context pollution drops because reasoning is not forwarded downstream

The important detail is that this is not a memory trick. It is an interface design decision. The system treats planning as an upstream compilation step and execution as a downstream runtime step.
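As a rough illustration of the process boundary, here is a minimal Python sketch that runs each step in a new OS process and hands it nothing but a serialized task on stdin. The function name `run_in_fresh_process`, the `STAND_IN_AGENT` command, and the `objective` field are all hypothetical; the source does not show how Claude Code is actually invoked, so a tiny Python child stands in for the agent.

```python
import json
import subprocess
import sys


def run_in_fresh_process(command: list[str], task: dict, timeout_s: float = 60.0) -> str:
    """Run one agent step in a brand-new OS process.

    The child receives only the serialized task on stdin; no chat history
    or in-memory objects from earlier runs carry over.
    """
    result = subprocess.run(
        command,
        input=json.dumps(task),
        capture_output=True,
        text=True,
        timeout=timeout_s,
        check=True,  # raise CalledProcessError if the child exits non-zero
    )
    return result.stdout


# Stand-in for the real agent command (an assumption for this sketch):
# a small Python child that reads the task from stdin and echoes the objective.
STAND_IN_AGENT = [
    sys.executable,
    "-c",
    "import json, sys; task = json.load(sys.stdin); print('executing: ' + task['objective'])",
]

if __name__ == "__main__":
    # Two jobs back to back; each one gets its own process and its own input.
    for objective in ["add unit tests", "update README"]:
        print(run_in_fresh_process(STAND_IN_AGENT, {"objective": objective}), end="")
```

Because the child process ends after every call, the only way for state to move between runs is through the task payload, which is the property the harness relies on.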

From conversation to task spec

The article’s core move is to turn Planner output into JSON before handing it to the Generator. That means the Planner can reason freely, but only the parts that matter get serialized into the next step. The Generator receives a compact task spec instead of a transcript.
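One way to picture this step is a small filter that keeps the task object and throws away the surrounding reasoning. The sketch below assumes a convention the source does not specify: that the Planner wraps its final answer in `<task_spec>` tags. The tag name, the `extract_task_spec` helper, and the sample Planner output are illustrative only.

```python
import json
import re

# Hypothetical convention: the Planner wraps its final answer in these tags.
TASK_SPEC_PATTERN = re.compile(r"<task_spec>(.*?)</task_spec>", re.DOTALL)


def extract_task_spec(planner_output: str) -> dict:
    """Return only the task object, discarding everything outside the tags."""
    matches = TASK_SPEC_PATTERN.findall(planner_output)
    if len(matches) != 1:
        raise ValueError(f"expected exactly one task spec, found {len(matches)}")
    spec = json.loads(matches[0])
    if not isinstance(spec, dict):
        raise ValueError("task spec must be a JSON object")
    return spec


# Made-up Planner output: free-form deliberation followed by the final spec.
planner_output = """
Option A is to rewrite the parser, but that seems risky.
Actually, patching the tokenizer is smaller. Going with that.
<task_spec>
{"objective": "Patch the tokenizer to accept trailing commas",
 "constraints": ["Do not change the public API"]}
</task_spec>
"""

spec = extract_task_spec(planner_output)
generator_input = json.dumps(spec, indent=2)  # the only text passed downstream
print(generator_input)
```

The abandoned "Option A" line never appears in `generator_input`, so the Generator cannot act on a plan the Planner already rejected.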

That approach is cleaner than passing raw text because JSON forces structure. Fields like objectives, constraints, inputs, and expected outputs can be named explicitly. Once that happens, the Generator spends less time interpreting intent and more time doing the work.

For teams building agentic systems, this also makes logging and debugging easier. You can diff task objects, validate required fields, and spot malformed instructions before they hit execution.

- Planner output is filtered before execution

- JSON makes task boundaries explicit

- Structured fields are easier to validate than free-form chat

- Execution logs become easier to audit
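The validation and diffing mentioned above can be sketched in a few lines of Python. The field names follow the ones listed earlier (objectives, constraints, inputs, expected outputs), but the exact schema, the `validate_task` and `diff_tasks` helpers, and the sample tasks are assumptions made for illustration, not the schema used by the system described here.

```python
# Assumed schema for this sketch: field name -> required Python type.
REQUIRED_FIELDS = {
    "objective": str,
    "constraints": list,
    "inputs": dict,
    "expected_outputs": list,
}


def validate_task(task: dict) -> list[str]:
    """Return a list of problems; an empty list means the task is well-formed."""
    problems = []
    for name, expected_type in REQUIRED_FIELDS.items():
        if name not in task:
            problems.append(f"missing field: {name}")
        elif not isinstance(task[name], expected_type):
            problems.append(f"{name} should be {expected_type.__name__}")
    for name in task:
        if name not in REQUIRED_FIELDS:
            problems.append(f"unexpected field: {name}")
    return problems


def diff_tasks(old: dict, new: dict) -> dict:
    """Map each changed field to its (old, new) pair of values."""
    keys = sorted(set(old) | set(new))
    return {k: (old.get(k), new.get(k)) for k in keys if old.get(k) != new.get(k)}


previous = {
    "objective": "Add retry logic to the upload client",
    "constraints": ["Keep the public API unchanged"],
    "inputs": {"module": "upload_client.py"},
    "expected_outputs": ["patched module", "passing tests"],
}
current = {**previous, "constraints": ["Keep the public API unchanged", "Max 3 retries"]}
malformed = {"objective": "Add retry logic", "constraints": "none"}

print(validate_task(current))         # well-formed task
print(validate_task(malformed))       # wrong type plus missing fields
print(diff_tasks(previous, current))  # only the constraints changed
```

Running a check like this before the Generator starts gives the harness a single place to reject a malformed task, and the diff makes it easy to see what changed between two runs of the same job.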

This is the kind of design that feels slightly unglamorous until you scale it. Then it becomes obvious why the interface between agents matters more than the prompt itself.

A useful quote from Anthropic

Anthropic has been very direct about the value of agentic workflows. In its Claude Code documentation, the company says, “Claude Code is an agentic coding tool that operates directly in your terminal.” That line matters here because it frames the product as an execution-oriented system, which fits the Harness Engineering pattern well.

“Claude Code is an agentic coding tool that operates directly in your terminal.” — Anthropic

That quote lines up with the article’s design choice. If the tool is built for execution, then the surrounding harness should protect it from noisy upstream state. Fresh processes and structured prompts do exactly that.

It also explains why this pattern is attractive for long-running jobs. Terminal-based agents are powerful, but they need a disciplined wrapper if you want predictable behavior across repeated runs.

How this compares with other agent setups

Many agent frameworks keep a shared conversation thread across steps. That can be convenient for prototypes, but it often creates invisible carryover. One agent’s partial reasoning becomes another agent’s context, and the system starts behaving like a group chat instead of a pipeline.

By contrast, the context-reset model treats each stage as a separate computation. The Planner produces a task description, the Generator executes it, and neither step depends on hidden conversational residue. That is a stronger fit for workflows where predictable, reproducible behavior matters.
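To make the "separate computation" idea concrete, the following sketch models the two stages as plain functions whose only connection is the task object. Everything here is hypothetical: `run_pipeline`, `build_generator_prompt`, and the two stub stages stand in for real model calls, which the source does not show.

```python
import json
from typing import Callable


def build_generator_prompt(task: dict) -> str:
    """Render the Generator's entire input from the task object alone."""
    # sort_keys keeps the rendering stable, so equal tasks give equal prompts.
    return "Carry out the following task.\n" + json.dumps(task, indent=2, sort_keys=True)


def run_pipeline(
    request: str,
    plan: Callable[[str], dict],
    generate: Callable[[str], str],
) -> str:
    task = plan(request)                   # stage 1: planning
    prompt = build_generator_prompt(task)  # boundary: only the task crosses it
    return generate(prompt)                # stage 2: execution


def stub_plan(request: str) -> dict:
    # Stub Planner: the scratch reasoning is a local value and is never returned.
    scratch = f"thinking about '{request}': maybe rewrite everything? no."
    assert scratch  # used only inside this stage
    return {"objective": request, "constraints": ["touch one file only"]}


def stub_generate(prompt: str) -> str:
    # Stub Generator: echoes its input so the boundary is easy to inspect.
    return prompt


if __name__ == "__main__":
    print(run_pipeline("fix the flaky login test", stub_plan, stub_generate))
```

Since the Generator's input is a pure function of the task object, two runs with the same task start from the same prompt, which is what makes a failed run easier to replay.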

Here is the practical tradeoff:

- Claude Code with fresh-process execution gives you cleaner isolation

- OpenAI Codex-style agent loops can be faster to prototype when shared context is acceptable

- LangGraph gives you explicit state graphs, which help when orchestration gets complex

- JSON task specs are easier to test than free-form prompt chains

Resetting context every time has a cost: you lose conversational continuity, so anything the Generator needs must be restated in the task spec. But for long-running systems, that cost is often worth paying. Predictable execution usually beats clever memory reuse.

What builders should take from this

The lesson here is simple: if an agent’s job is to execute, do not let it inherit reasoning it does not need. Use the Planner to think, then serialize the result into a task object that the Generator can consume cleanly.

This design is especially relevant for teams building internal automation, code-generation pipelines, or multi-step research tools. It reduces prompt contamination, makes failures easier to reproduce, and gives you a clear place to validate inputs before execution begins.

If you are building your own harness, the first question to ask is whether each step really needs conversational memory. If the answer is no, reset the context and pass structured data instead. That one decision can save a lot of debugging later.

My prediction is straightforward: the strongest multi-agent systems over the next year will look less like chat transcripts and more like typed pipelines. The teams that define clean interfaces between agents will spend less time untangling prompt drift and more time improving actual task quality.

If you want to compare this design with other orchestration patterns, check our related coverage of agent orchestration patterns and structured prompting for code agents.
