Kubernetes Is Becoming AI’s Control Plane

KubeCon Europe 2026 showed Kubernetes moving from app orchestration to AI ops, with inference, GPUs, and open standards leading the shift.

KubeCon Europe 2026 drew more than 13,500 attendees, up about 10% year over year, and the message from CNCF was plain: Kubernetes is no longer just where cloud-native apps run. It is becoming the control plane for AI infrastructure, especially inference.

That shift matters because the AI conversation has moved past demos and into operations. The hard problems now are serving models reliably, routing requests efficiently, and keeping GPU-heavy systems from turning into an expensive mess.

Why KubeCon’s AI message landed so hard

The event in Amsterdam was the largest KubeCon to date, with more than 13,500 attendees from over 100 countries, 3,000-plus organizations, and nearly 900 sessions. CNCF also said the cloud-native developer base is approaching 20 million people. Those numbers are a reminder that this ecosystem has real gravity.

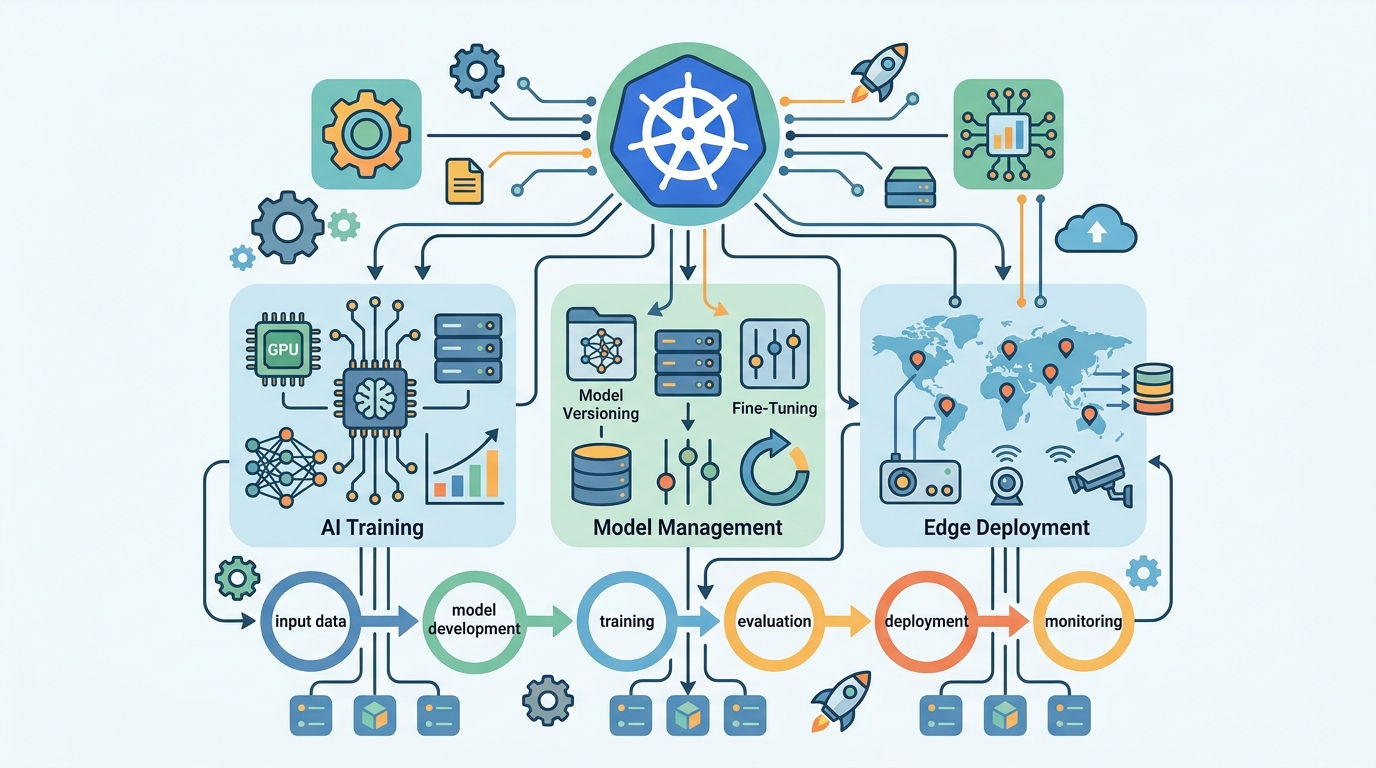

What changed this year is where that gravity is pointing. CNCF leadership framed AI infrastructure as the next major workload for cloud native, with inference taking center stage. Training still matters, but inference is what enterprises will run every day, at scale, and under pressure from latency, cost, and reliability targets.

That is why Kubernetes keeps coming up in AI conversations. It already solves scheduling, service discovery, scaling, and policy for distributed systems. AI workloads now need those same capabilities, plus better handling for GPUs, stateful routing, and model serving patterns that do not behave like ordinary web traffic.

- 13,500+ attendees at KubeCon Europe 2026

- 100+ countries represented

- 3,000+ organizations in attendance

- Nearly 900 sessions across the conference

- Cloud-native developer base approaching 20 million

The AI announcements were more than conference theater

The most important signal came from the ecosystem itself. NVIDIA joined CNCF as a platinum member, donated its GPU driver to Kubernetes SIG Node as a reference implementation for the vendor-neutral Dynamic Resource Allocation (DRA) API, and pledged $4 million over three years to support GPU access for CNCF projects.

That is the kind of move that tells you the hardware layer wants in on the standards conversation. If Kubernetes is going to remain the operating layer for AI infrastructure, GPU access cannot stay trapped inside vendor-specific tooling. The same goes for scheduling, routing, and serving.

CNCF also announced llm-d as a new sandbox project. llm-d is pitched as a distributed inference system built around Kubernetes, which is a strong sign that the community is now treating inference as a first-class infrastructure problem, not an application afterthought.

“Inference is where the money is,” said Jensen Huang, CEO of NVIDIA, onstage at GTC 2025. The line fits this moment almost too well.

CNCF also expanded its Kubernetes AI conformance work, adding requirements around Gateway API support, inference-aware routing, and disaggregated inference. Those are dry terms, but they point to a real operational headache: traditional load balancing assumes stateless traffic, while AI inference cares about cache reuse, prompt latency, and GPU memory efficiency.
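To make the contrast with stateless load balancing concrete, here is a minimal sketch of cache-aware routing in Python. It is an illustration under stated assumptions, not how Gateway API or any CNCF project implements it: the `Replica` class, `prefix_key`, `pick_replica`, the 32-character prefix, and the `max_in_flight` limit are all hypothetical names and values chosen for the example.

```python
import hashlib
from dataclasses import dataclass, field


@dataclass
class Replica:
    """Hypothetical model-serving replica that remembers which prompt prefixes it has seen."""
    name: str
    in_flight: int = 0
    cached_prefixes: set[str] = field(default_factory=set)


def prefix_key(prompt: str, prefix_chars: int = 32) -> str:
    """Hash the leading characters of a prompt as a stand-in for a cache key (assumption)."""
    return hashlib.sha256(prompt[:prefix_chars].encode("utf-8")).hexdigest()


def pick_replica(prompt: str, replicas: list[Replica], max_in_flight: int = 8) -> Replica:
    """Prefer a replica that already holds the prompt prefix; otherwise take the least loaded."""
    key = prefix_key(prompt)
    # Replicas that have served this prefix before and still have capacity.
    warm = [r for r in replicas if key in r.cached_prefixes and r.in_flight < max_in_flight]
    # Fall back to plain least-loaded selection when no warm replica is available.
    chosen = min(warm or replicas, key=lambda r: r.in_flight)
    chosen.cached_prefixes.add(key)
    chosen.in_flight += 1
    return chosen
```

With two idle replicas, two requests that share a long system prompt both land on the same replica, even though a plain least-loaded balancer would send the second one to the idle replica. A request with a different prefix still goes to the least loaded replica. Real routers would also track cache eviction and request completion, which this sketch leaves out.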

In other words, the stack is changing because the workload changed first.

The numbers behind the inference shift

The keynote leaned hard on one forecast: in 2023, about two-thirds of AI compute went to training and one-third to inference. By the end of 2026, that ratio is expected to flip. By decade’s end, inference demand is projected to reach 93.3 gigawatts of compute capacity.

That forecast should be treated as directional, not destiny. Still, the trend is easy to understand. Chatbots introduced people to AI. Agents will drive sustained usage, and that means far more repeated requests, more token generation, and much higher infrastructure load.

For operators, this changes the economics. Training is a burst. Inference is the bill that keeps arriving.

- 2023: roughly 2/3 of AI compute went to training

- 2023: roughly 1/3 of AI compute went to inference

- By end of 2026: inference is expected to overtake training

- By decade’s end: inference demand projected at 93.3 gigawatts

This is also why Kubernetes is being recast as a programmable control plane for AI infrastructure rather than a simple container scheduler. Once inference becomes the dominant steady-state workload, the winning platform is the one that can coordinate GPUs, route requests intelligently, and keep utilization high without turning every deployment into a custom project.

What operators can learn from Uber and the cloud-native stack

The Uber segment at KubeCon gave the keynote some much-needed reality. Uber said its Michelangelo platform supports 100% of mission-critical ML at the company, with 20,000 models trained per month, 5,300 in production, and more than 30 million peak predictions per second across roughly 1,000 serving nodes.

Those are not small numbers, and they show why AI infrastructure has to be treated like production infrastructure from day one. The scale is not hypothetical. It already exists inside large companies that have spent years building internal platforms around model training, deployment, and serving.
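A quick back-of-the-envelope calculation puts the Uber figures in per-node terms. The two constants below are the approximate numbers quoted above ("more than" 30 million, "roughly" 1,000); the `per_node_rate` helper and the `unavailable_fraction` parameter are hypothetical additions for illustration, not anything Uber described.

```python
# Approximate figures as reported in the keynote segment.
PEAK_PREDICTIONS_PER_SECOND = 30_000_000
SERVING_NODES = 1_000


def per_node_rate(total_per_second: int, nodes: int, unavailable_fraction: float = 0.0) -> float:
    """Average predictions per second each healthy node must absorb at peak."""
    healthy_nodes = nodes * (1.0 - unavailable_fraction)
    if healthy_nodes <= 0:
        raise ValueError("at least one healthy node is required")
    return total_per_second / healthy_nodes


# Average load per node with the whole fleet healthy.
print(per_node_rate(PEAK_PREDICTIONS_PER_SECOND, SERVING_NODES))  # 30000.0

# Hypothetical scenario: 10% of nodes are unavailable during the peak.
print(round(per_node_rate(PEAK_PREDICTIONS_PER_SECOND, SERVING_NODES, 0.10)))  # 33333
```

An average of about 30,000 predictions per second per node leaves little slack: in the hypothetical case where a tenth of the fleet is unavailable, each remaining node has to absorb roughly 11% more traffic.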

For teams trying to make sense of all this, it helps to map existing cloud-native projects to the places where the pressure sits:

- Kubernetes already handles scheduling and orchestration across mixed workloads

- Kyverno and Tekton help standardize policy and pipelines, which matters when models change often

- Dragonfly provides peer-to-peer distribution of images and files, which becomes more important as model artifacts and inference traffic grow

- Fluid addresses data access patterns that matter when AI jobs need fast, repeated reads

That mix is the real story. AI infrastructure does not need a brand-new operating model from scratch. It needs the cloud-native stack to stretch into GPU scheduling, inference routing, and policy enforcement without losing the portability that made Kubernetes useful in the first place.

Europe’s role also matters here. CNCF said Europe is currently the largest regional contributor across CNCF projects, which fits the broader sovereignty conversation. If AI infrastructure is going to be regulated, audited, and deployed across national boundaries, open standards will matter more, not less.

Kubernetes is moving from app hosting to AI operations

The takeaway from KubeCon Europe 2026 is simple: the AI discussion has left the hype phase and entered the operations phase. The important questions now are about inference, GPU access, routing, and control, not just model quality or benchmark scores.

That means platform teams should stop asking whether Kubernetes can support AI and start asking where the bottlenecks will appear first. Is it GPU allocation? Is it cache-aware routing? Is it policy drift across clusters? Those are the decisions that will define the next generation of AI infrastructure.

If CNCF gets this right, Kubernetes becomes the standard control layer for enterprise AI. If it does not, vendors will fill the gap with closed systems that make portability harder and costs less predictable. My bet is that the open stack still has the edge, but only if it keeps standardizing around the messy reality of inference.

For now, the actionable move is clear: treat inference as a production workload, not a pilot. The teams that design for routing, GPU economics, and policy today will have far fewer surprises when agents and specialized models start driving most of the traffic.
