LoRA vs QLoRA vs Full Fine-Tuning

A practical comparison of LoRA, QLoRA, and full fine-tuning for 2026 LLM projects.

LoRA, QLoRA, and full fine-tuning trade cost, speed, and quality in different ways for LLM teams.

Choosing between LoRA, QLoRA, and full fine-tuning usually comes down to budget, model size, and how much behavior you need to change.

At a glance

Get the latest AI news in your inbox

Weekly picks of model releases, tools, and deep dives — no spam, unsubscribe anytime.

No spam. Unsubscribe at any time.

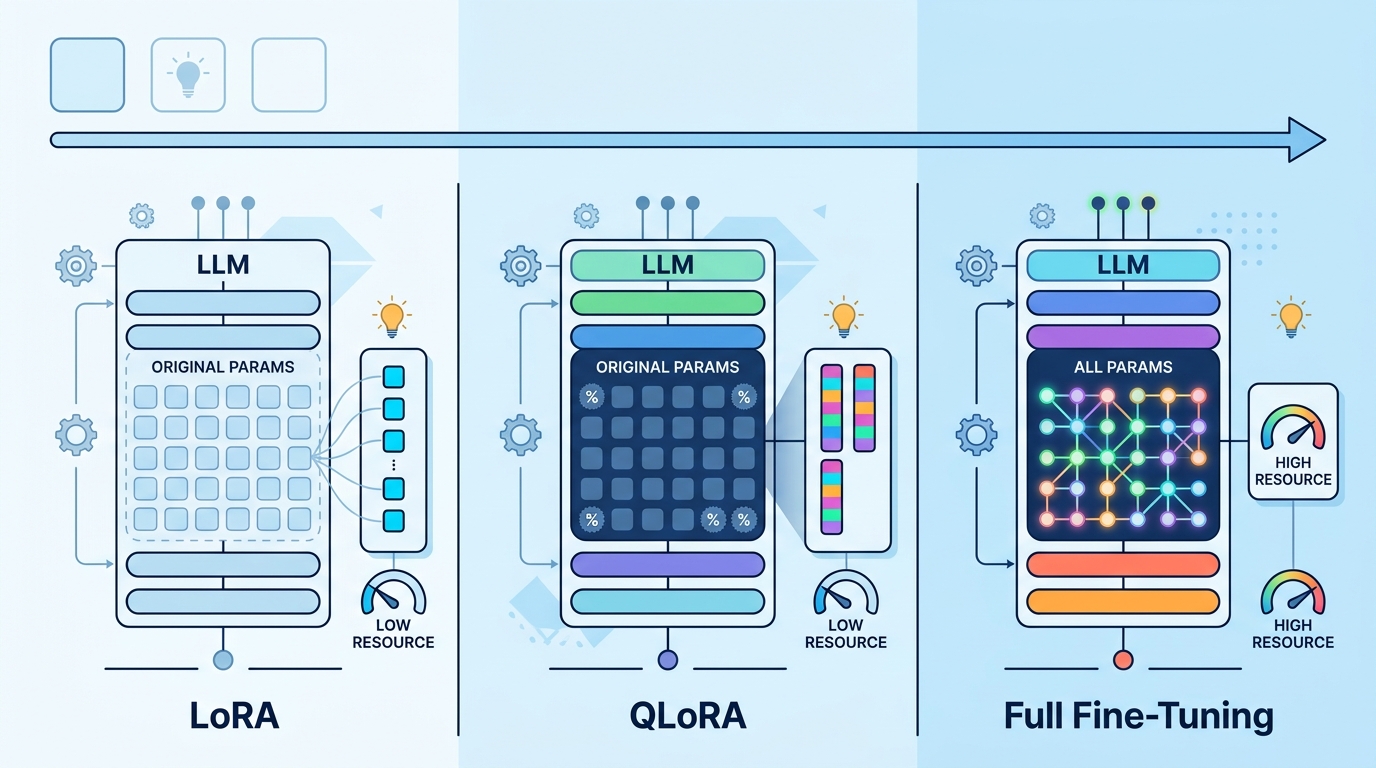

| Dimension | LoRA | QLoRA | Full fine-tuning |

|---|---|---|---|

| Typical GPU need | 1x A100 40GB or 80GB | 1x A100 80GB; some 24GB cards for 7B | 4x A100 80GB or 2x H100 80GB for 8B+ |

| Approx. cost for 8B SFT | USD 15-40 | USD 12-20 | USD 150-500+ |

| Adapter / checkpoint size | 20-100 MB | 20-100 MB | 10-30 GB |

| Training speed | Fast | Fastest on limited VRAM | Slowest |

| Quality ceiling | High for narrow tasks | Very high for most SFT jobs | Highest for deep behavior shifts |

| Risk of forgetting | Low to moderate | Low to moderate | Highest without careful data mixing |

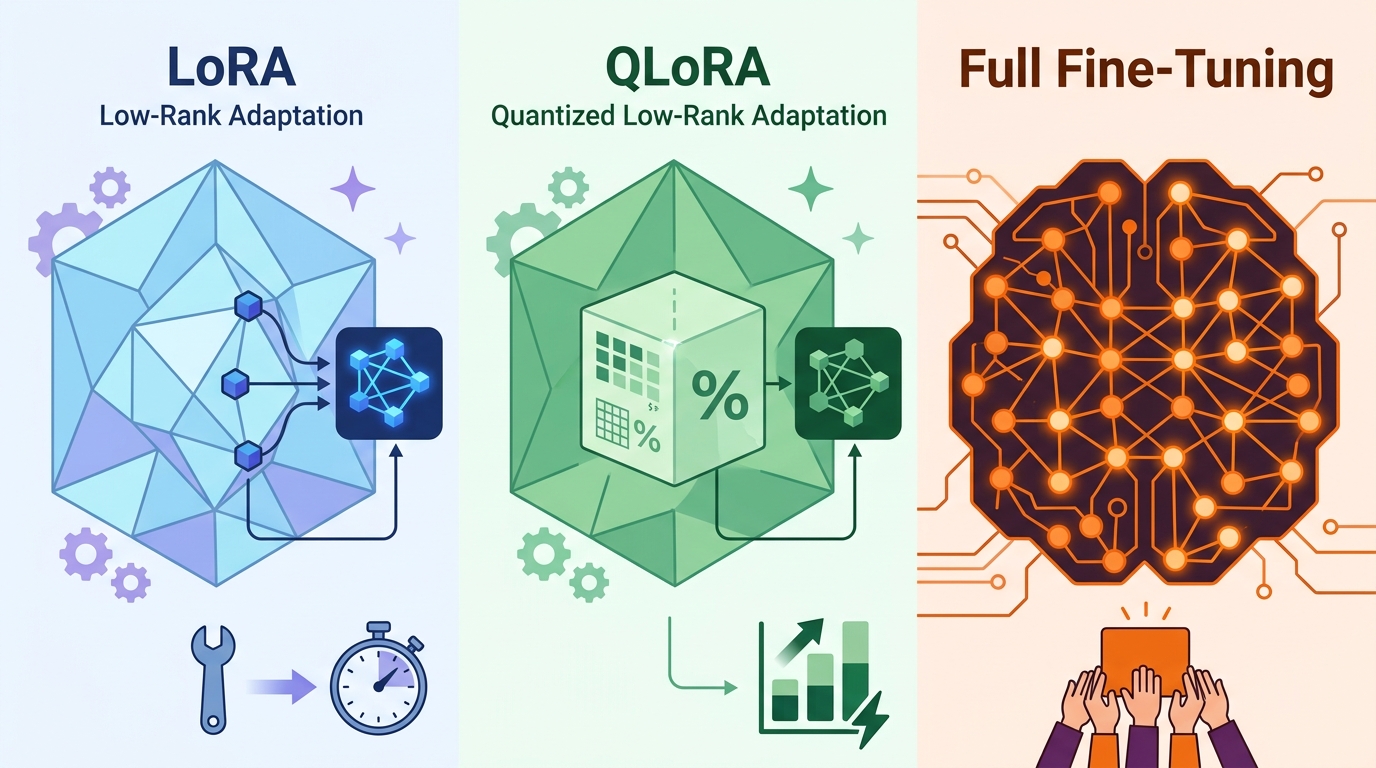

LoRA

LoRA is the safest middle path when you want a strong domain adaptation without rewriting the whole model. It keeps the base weights frozen and learns small low-rank adapters, which makes experiments cheap, reversible, and easy to deploy.

In practice, LoRA is best when you already have enough VRAM for the base model and want a cleaner training setup than full fine-tuning. It is also a good choice if you expect to maintain several variants, since adapter files are tiny and can be swapped without rebuilding the whole stack.

QLoRA

QLoRA is the default choice for many 2026 teams because it compresses the base model to 4-bit during training while still learning LoRA adapters. That cuts memory enough to fine-tune larger models on a single A100 80GB, and sometimes even smaller cards for 7B-class models.

The trade-off is that quantization adds some complexity and can slightly narrow the ceiling for the most demanding tasks. Even so, for instruction tuning, format learning, and many domain tasks, QLoRA gives the best mix of cost, speed, and quality.

Full fine-tuning

Full fine-tuning updates all model weights, so it offers the most freedom to reshape behavior. That extra freedom matters when the task is unusually sensitive, the dataset is large and clean, or you need the model to internalize patterns that adapters do not capture well.

The downside is obvious: it is expensive, slower, and easier to get wrong. You need more GPU memory, more careful optimization, and stronger eval discipline because catastrophic forgetting and overfitting show up faster when every weight can move.

When to pick what

If you are a startup, internal platform team, or solo engineer trying to ship a useful domain model quickly, pick QLoRA first. It gives you the lowest-friction path to a working result, and you can often keep the same workflow even as the dataset grows.

If you already have a healthy GPU budget and want a simpler training stack with fewer quantization concerns, pick LoRA. It is a better fit than QLoRA when VRAM is not tight and you want a strong adapter-based system with predictable behavior.

If you are working on a high-stakes product, have thousands to hundreds of thousands of high-quality examples, and need the model to change deeply rather than politely, choose full fine-tuning. It is the right answer when the extra cost is justified by the need for maximum control over the base model.

Default to QLoRA, unless you have enough budget and data to justify full fine-tuning for a genuinely hard behavior shift.

// Related Articles

- [IND]

How to Follow Gemini and Apple Watch 12 Rumors

- [IND]

Jensen Huang Joins Trump on China Trip

- [IND]

ChatGPT vs Gemini: 9 Tests, 1 Clear Winner

- [IND]

How to Reduce AI Model Serving Friction

- [IND]

Why Global AI Regulation in 2026 Rewards Modular Compliance

- [IND]

Lovable backs Atech’s vibe coding for hardware