Why Global AI Regulation in 2026 Rewards Modular Compliance

Global AI regulation in 2026 rewards modular compliance, not one universal policy.

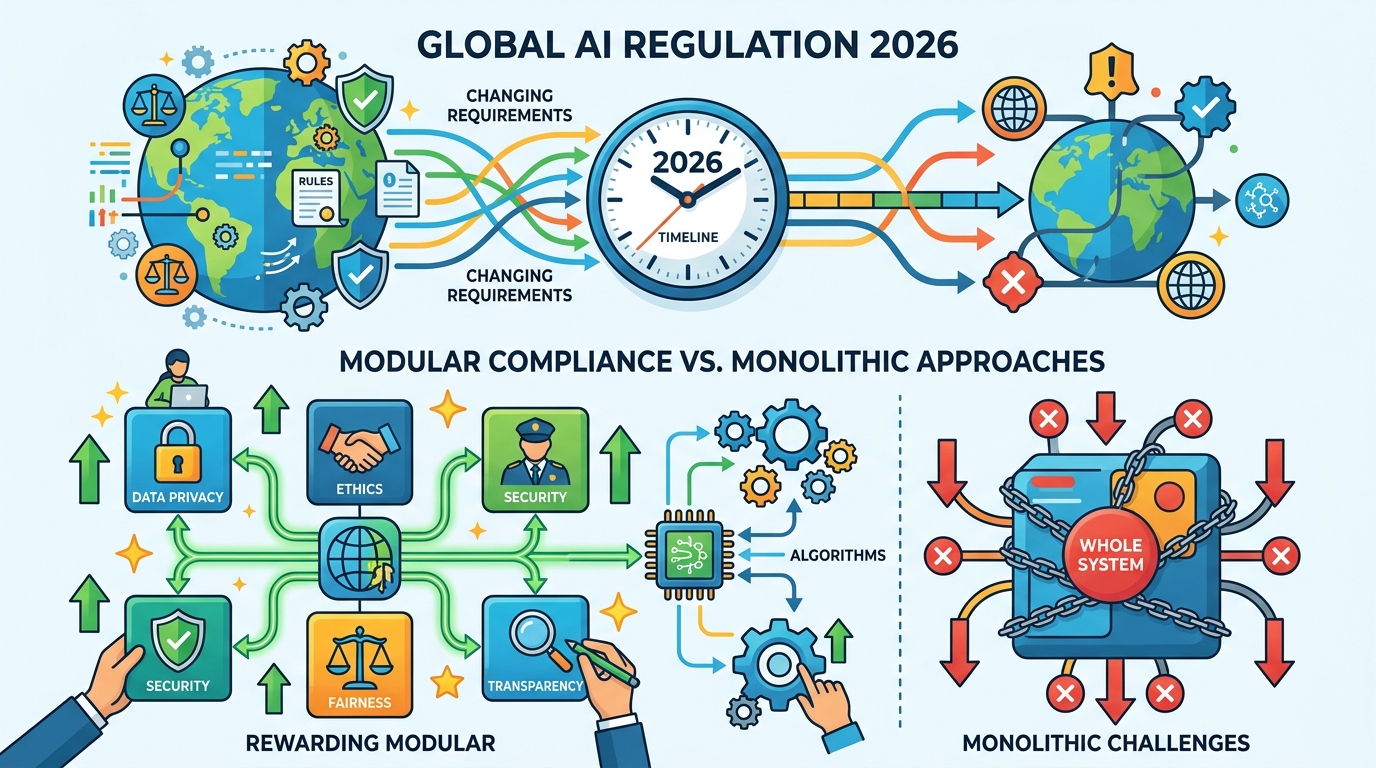

I think global AI regulation in 2026 makes one thing clear: companies should stop chasing a single worldwide compliance model and build region-specific systems instead. The evidence is already on the table. The EU AI Act is in force, with its obligations applying in phases; the U.S. still leans on a patchwork of sector rules and voluntary commitments; and China enforces strict data sourcing, labeling, and value-alignment rules. Those are not minor implementation differences. They are incompatible operating assumptions, and any company pretending otherwise is setting itself up for delay, rework, and market access problems.

The first argument: the rules are diverging too far to unify

The EU has chosen the most rigid path, and that matters because it sets a high bar for everyone selling into Europe. The AI Act treats general-purpose models with systemic risk as regulated systems, not just software products. That means transparency obligations, copyright checks, and pre-market scrutiny. In other words, the EU is not asking companies to be careful; it is demanding proof. If your product fails those checks, you do not get to argue your way into the market.

The U.S. is doing the opposite. Federal agencies issued 59 AI-related regulations in 2024, but there is still no single federal AI law that ties the system together. That patchwork favors speed, and speed is useful, but it also creates ambiguity for planning, procurement, and model governance. A company that optimizes for U.S. flexibility will still have to redesign its controls for Europe and China. That is not a compliance strategy. It is a temporary truce.

The second argument: compliance is now an engineering problem, not just a legal one

Global AI compliance now depends on infrastructure choices, not just policy memos. Data residency rules, provenance tracking, watermarking, audit logs, and model segmentation all have to live inside the product stack. This is why sovereign AI has become such a central issue. If model weights, training data, and inference pipelines need to stay inside national borders, then compliance is no longer something a lawyer reviews at the end. It is part of the architecture from day one.
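To make "part of the architecture" concrete, here is a minimal sketch of a residency check that runs before a request is routed. Everything in it is an assumption for illustration: the `Deployment` fields, the region codes, and the set of regions that require local weights and inference are hypothetical placeholders, not a statement of what any law requires.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Deployment:
    """Hypothetical description of where one model deployment lives."""
    region: str          # where inference runs
    weights_region: str  # where model weights are stored
    audit_log: bool      # whether requests are written to an audit log


def residency_violations(user_region: str, d: Deployment,
                         residency_required: set[str]) -> list[str]:
    """Return human-readable problems; an empty list means the route is acceptable.

    `residency_required` is an assumed policy input: the regions where
    weights and inference must stay local.
    """
    problems = []
    if user_region in residency_required:
        if d.region != user_region:
            problems.append(f"inference for {user_region} runs in {d.region}")
        if d.weights_region != user_region:
            problems.append(f"weights for {user_region} stored in {d.weights_region}")
    if not d.audit_log:
        problems.append("audit logging disabled")
    return problems


# Usage: a deployment hosted in "us" cannot serve a region that requires local hosting.
us_stack = Deployment(region="us", weights_region="us", audit_log=True)
print(residency_violations("cn", us_stack, residency_required={"cn"}))
```

The point of the sketch is placement, not the rules themselves: the check sits in the request path, so a routing mistake fails at deploy or request time instead of surfacing in a legal review.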

The implementation burden is already measurable. One industry estimate puts the average time to establish an effective AI governance framework at 6.2 months, and that is before the rules change again. Add the fact that 58% of organizations struggle to integrate new AI tools with legacy systems, and the case for a single global stack gets weak fast. A universal design sounds elegant until it collides with real systems, real data flows, and real enforcement deadlines.

The counter-argument

The best argument for a unified global approach is that fragmentation is expensive and slows innovation. A company that builds separate EU, U.S., and China versions of the same AI product pays more in engineering, compliance, and operations. It also risks inconsistent user experiences and duplicated governance work. From a founder’s perspective, the dream is obvious: one model, one policy, one rollout, one set of controls.

That argument is strong, and it is right about the cost. But it fails on the central fact of the market: the jurisdictions are not converging fast enough to justify a single compliance layer. The EU wants precaution, the U.S. wants flexibility, and China wants control. Those are not cosmetic differences. They shape what data you can use, what outputs you can generate, and where you can deploy. You can standardize some baseline practices, but you cannot standardize away sovereign law. The limit is real, and pretending otherwise only delays the inevitable rewrite.

What to do with this

If you are an engineer, PM, or founder, stop designing AI compliance as a legal overlay and treat it as a modular product capability. Build a common core for transparency, provenance, logging, and risk review, then add jurisdiction-specific controls for Europe, the U.S., and China. Keep your data pipelines separable, your model release process region-aware, and your documentation ready for audits. The winning move in 2026 is not to simplify the world. It is to build systems that survive complexity without collapsing under it.
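One way to picture the "common core plus jurisdiction-specific controls" split is a release gate built from small, composable checks. This is a sketch under stated assumptions: the field names (`copyright_check`, `sector_rule_mapping`, `output_labeling`) and region codes are hypothetical stand-ins loosely based on the differences described above, not an actual mapping of legal requirements.

```python
from typing import Callable

# A control inspects a release record and returns a list of problems.
Control = Callable[[dict], list[str]]


def require(field: str) -> Control:
    """Build a control that flags a release record missing a truthy `field`."""
    def check(release: dict) -> list[str]:
        return [] if release.get(field) else [f"missing {field}"]
    return check


# Common core applied in every market (mirrors the list in the text).
CORE: list[Control] = [
    require("transparency_notice"),
    require("provenance_record"),
    require("audit_log"),
    require("risk_review"),
]

# Jurisdiction-specific add-ons; field names are hypothetical placeholders.
REGIONAL: dict[str, list[Control]] = {
    "eu": [require("copyright_check")],
    "us": [require("sector_rule_mapping")],
    "cn": [require("output_labeling")],
}


def release_gaps(release: dict, region: str) -> list[str]:
    """Run the core controls plus the region's add-ons and collect all problems."""
    controls = CORE + REGIONAL.get(region, [])
    return [problem for control in controls for problem in control(release)]


# Usage: the same release record passes the core but still has an EU-specific gap.
release = {"transparency_notice": True, "provenance_record": True,
           "audit_log": True, "risk_review": True}
print(release_gaps(release, "eu"))
```

When a rule changes in one market, only that region's list changes; the core and the other regions stay untouched, which is the practical payoff of keeping the controls modular.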
