OpenAI Limits GPT-5.4-Cyber to Trusted Firms

OpenAI is limiting GPT-5.4-Cyber to vetted partners as it pushes AI deeper into security testing and dual-use risk management.

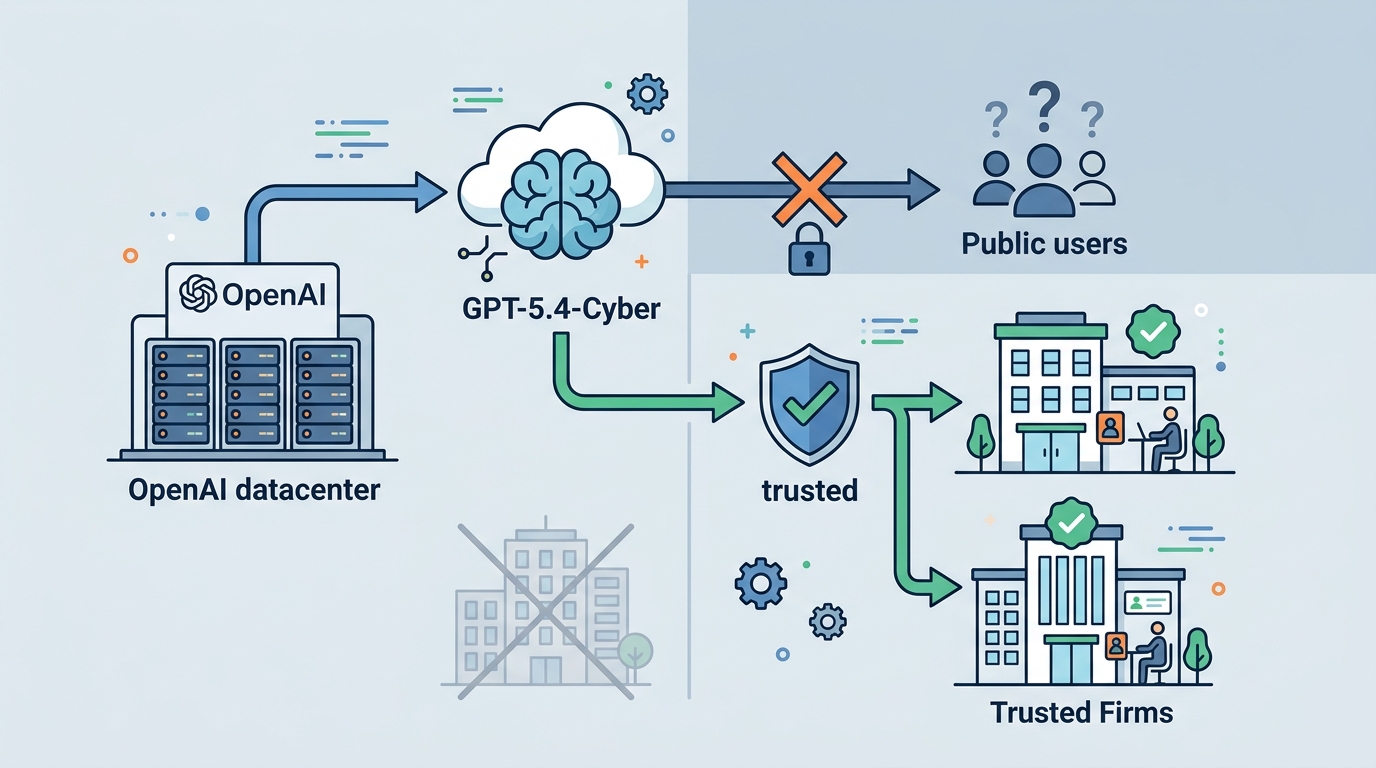

OpenAI has started a limited release of GPT-5.4-Cyber, a model aimed at finding security holes in software. The move matters because it puts a hard boundary around a powerful tool: access goes to trusted companies only, not the public at large.

That choice tells you where the company thinks the risk sits. A model that can spot vulnerabilities can also be used to probe systems in ways defenders never intended, so OpenAI is treating it more like a controlled security instrument than a general-purpose chatbot.

OpenAI’s decision also follows a pattern already visible across the AI sector. The company is making a bet that some high-end models should be distributed the way sensitive security tools are distributed: slowly, selectively, and with a paper trail.

What GPT-5.4-Cyber is meant to do

OpenAI says GPT-5.4-Cyber is built to help identify weaknesses in code and software systems. In practical terms, that puts it in the same broad class as other AI-assisted security tools that scan for misconfigurations, exposed endpoints, and logic flaws before attackers can find them.

The stakes in software security are concrete. The global average cost of a data breach reached $4.88 million in 2024, according to IBM’s Cost of a Data Breach Report. Even modest improvements in detection can save serious money, especially for companies running large codebases and cloud infrastructure.

OpenAI has not framed GPT-5.4-Cyber as a consumer product. That tells us the company is thinking about misuse, model behavior, and customer screening before scale. It is a narrower release, but the narrower path is the point.

- Target use: security testing and vulnerability discovery

- Access model: limited release to trusted companies

- Risk profile: dual-use, since the same skills can help defenders and attackers

- Business impact: stronger demand for AI-assisted red teaming and code review

Why OpenAI is restricting access

The big issue here is dual use. A model that can help a security team find a bug can also help someone map out how to exploit it. That tension has been visible for years in the security industry, but large language models sharpen it by making both the defensive and the offensive work cheaper to do at scale.

OpenAI is taking a more controlled route rather than opening the model to everyone on day one. The company is also signaling that trust is now part of product design, not just a compliance afterthought.

“With great power comes great responsibility,” as the well-worn adage popularized by the Spider-Man comics puts it.

That quote gets used a lot, but it fits here because this is exactly the kind of capability that forces a company to think hard about distribution. If a model can accelerate defense work, it can also lower the cost of offensive research.

This is where OpenAI’s move lines up with a broader industry trend: AI companies are getting more selective about who gets access to their most capable systems, especially when those systems can be pointed at infrastructure, code, or sensitive workflows.

How this compares with other AI security efforts

OpenAI is not the first company to restrict advanced model access for safety reasons. Anthropic has also used staged access and policy controls for more sensitive capabilities, especially where misuse could scale quickly. The difference is that OpenAI is now applying that approach more visibly to cybersecurity work.

That puts GPT-5.4-Cyber in a category that is useful, valuable, and hard to distribute casually. Security teams want tools that can reason over messy codebases and spot subtle flaws. Vendors want to reduce the chance that the same tools end up in the wrong hands.

- Microsoft Security Copilot focuses on analyst workflows and incident response rather than open-ended vulnerability discovery

- Wiz emphasizes cloud security posture and exposure management across environments

- OpenAI GPT-4o is a general model, while GPT-5.4-Cyber is tuned for a narrower security task

- Anthropic’s public announcements show a similar pattern of controlled rollout for higher-risk capabilities

The comparison also highlights a business reality: security-focused AI is moving from demos to procurement. Companies do not just want a model that can chat about code. They want one that can inspect repositories, flag risky patterns, and fit into existing review processes without creating a new liability.

That is why the release criteria matter as much as the model itself. A trusted-company rollout means OpenAI can watch usage, measure abuse patterns, and tighten controls before wider distribution.

What this means for developers and security teams

For developers, the immediate takeaway is simple: AI security tools are getting more capable, but access will remain uneven. Teams with strong vendor relationships and mature security programs will see these tools first. Smaller teams may have to wait for a broader release or settle for less specialized products.

For security teams, the opportunity is practical. A model like GPT-5.4-Cyber could speed up code review, support internal red teaming, and help prioritize which findings deserve human attention first. It will not replace a skilled pentester, but it may reduce the time spent on repetitive scans and triage.

The harder question is governance. If a model can identify vulnerabilities in software, who gets to use it, how are logs stored, and what happens when a customer tries to push it beyond defensive work? OpenAI’s limited release suggests the company wants answers before scale, not after a public incident.
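To make those governance questions concrete, here is a minimal sketch of an allowlist gate that records every access decision. It is purely illustrative: the `AccessGate` class, the `DEFENSIVE_PURPOSES` set, and the organization names are hypothetical and do not describe how OpenAI actually screens customers or stores logs.

```python
import json
import time
from dataclasses import dataclass, field

# Hypothetical set of purposes treated as defensive work (an assumption).
DEFENSIVE_PURPOSES = frozenset({"code_review", "internal_red_team", "triage"})


@dataclass
class AccessGate:
    """Hypothetical allowlist gate with an append-only audit trail."""

    trusted_orgs: set
    audit_log: list = field(default_factory=list)

    def authorize(self, org: str, purpose: str) -> bool:
        # Allow only allowlisted organizations declaring a defensive purpose.
        allowed = org in self.trusted_orgs and purpose in DEFENSIVE_PURPOSES
        # Record every decision, including denials, as one JSON line.
        self.audit_log.append(json.dumps({
            "ts": time.time(),
            "org": org,
            "purpose": purpose,
            "allowed": allowed,
        }))
        return allowed


gate = AccessGate(trusted_orgs={"acme-security"})
print(gate.authorize("acme-security", "code_review"))
print(gate.authorize("acme-security", "exploit_development"))
```

Logging denials alongside approvals is the design choice worth noting: a request that pushes beyond defensive work leaves a record even when it is refused.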

Here is the likely next step: more AI firms will copy this distribution model for sensitive tools, especially in cybersecurity and bio-related work. The companies that can prove strong access controls and auditing will get the first look at the newest systems. The rest of the market will have to wait, and that wait may become a permanent feature of advanced AI products.

If you are a security leader, the question is no longer whether AI will touch vulnerability research. It already does. The real question is whether your team will be in the trusted group that gets early access, or the broader market that sees the product after the controls are already set.
