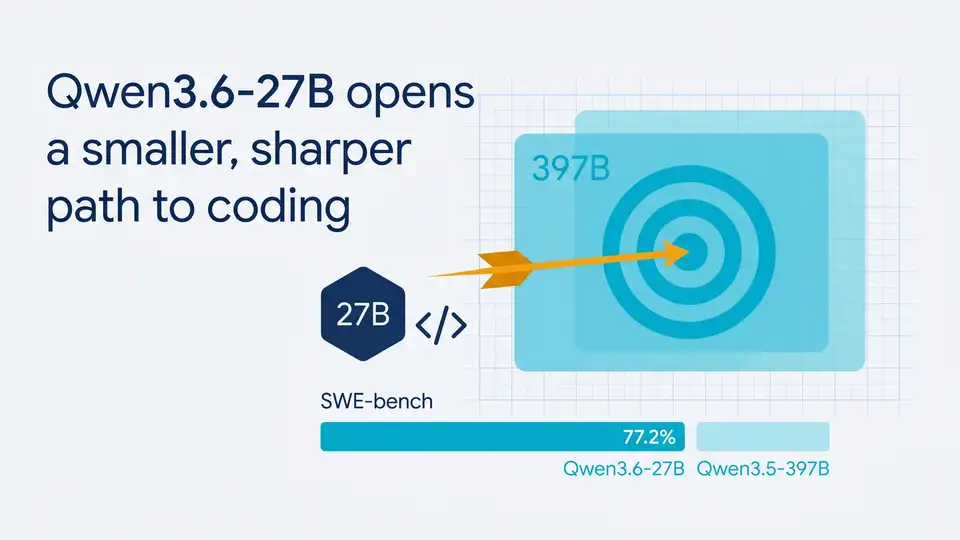

Qwen3.6-27B opens a smaller, sharper path to coding

Qwen3.6-27B is a 27B dense multimodal model that beats Qwen3.5-397B-A17B on key coding benchmarks while staying easier to deploy.

Alibaba’s Qwen team just shipped Qwen3.6-27B, a 27-billion-parameter dense multimodal model that posts numbers big enough to make people blink twice. On SWE-bench Verified, it scores 77.2, edging past the much larger Qwen3.5-397B-A17B at 76.2, while using a far simpler architecture to run.

That matters because open models usually force a trade-off: smaller models are easier to deploy, while larger ones often win on quality. Qwen3.6-27B is trying to break that rule for agentic coding, and the early benchmark sheet says it has a real shot.

Why this release is getting attention

Get the latest AI news in your inbox

Weekly picks of model releases, tools, and deep dives — no spam, unsubscribe anytime.

No spam. Unsubscribe at any time.

The headline here is not just that Qwen released another model. It is that a 27B dense model is beating a 397B MoE model on the tasks developers care about most: code fixing, terminal work, and agent-style problem solving.

Qwen says the model supports both thinking and non-thinking modes, plus multimodal input for images, video, and text. That makes it useful for coding assistants that need to read screenshots, inspect logs, or reason over documents without switching models mid-task.

For teams that care about shipping software, the dense architecture is the practical part. Dense models are easier to serve than MoE systems because they do not need routing logic to activate subsets of experts. Fewer moving parts usually means simpler deployment, easier scaling, and fewer surprises in production.

- Model size: 27B parameters, dense architecture

- Reference model beaten: Qwen3.5-397B-A17B, a 397B MoE model with 17B active parameters

- Public access: Qwen Studio, Hugging Face, and ModelScope

- API support: Alibaba Cloud Bailian is expected to add support soon

The benchmark numbers are the real story

Benchmarks are easy to overhype, but the spread here is wide enough to matter. Qwen3.6-27B scores 77.2 on SWE-bench Verified, 53.5 on SWE-bench Pro, 59.3 on Terminal-Bench 2.0, and 48.2 on SkillsBench. Against Qwen3.5-397B-A17B, those numbers are 76.2, 50.9, 52.5, and 30.0 respectively.

That is a cleaner win than a lot of model launches get. The most interesting gap is SkillsBench, where Qwen3.6-27B jumps almost 18 points ahead of the older flagship. That suggests better agent behavior, not just better pattern matching in code snippets.

“The future of AI is not about bigger models. It’s about better models.” — Sam Altman, OpenAI DevDay 2023

Altman’s line fits this release because the raw parameter count is no longer the main headline. If a 27B dense model can outscore a 397B MoE model on practical coding tasks, the question changes from “How large is it?” to “How much work can it actually do for a developer?”

One more point worth noting: Qwen also reports a GPQA Diamond score of 87.8, which is strong for a model in this size class. GPQA is not a coding benchmark, but it does hint that the model’s reasoning stack is not a one-trick feature.

- SWE-bench Verified: 77.2 vs. 76.2

- SWE-bench Pro: 53.5 vs. 50.9

- Terminal-Bench 2.0: 59.3 vs. 52.5

- SkillsBench: 48.2 vs. 30.0

- GPQA Diamond: 87.8

What developers can do with it today

Qwen3.6-27B is already available through Qwen Studio, and the weights are on Hugging Face and ModelScope for local use. That means a team can test it in a browser, pull it into a private environment, or wire it into an internal coding workflow without waiting for a closed beta.

The model also plugs into OpenClaw, Claude Code, and Qwen Code. That is a practical signal. Qwen is not asking developers to rebuild their stack around a new tool; it is trying to fit into tools people already use.

For multimodal work, the model can read images and video as well as text. That opens up use cases like UI debugging from screenshots, document analysis, and code review with visual context. In other words, it is aimed at the messier parts of software work, where the input is rarely just a clean prompt.

Qwen also mentions a preserve_thinking feature in the upcoming API support. For agent workflows, that matters because keeping prior reasoning context can reduce the need to restate instructions across turns. If it works well in practice, it could make long coding sessions less brittle.

How it compares with the open-model field

The easiest way to read this release is to compare it with other open models developers already know. Qwen3.6-27B is dense, multimodal, and tuned for agentic coding. That puts it in a different lane from giant MoE models that may look stronger on paper but are harder to serve.

Here is the practical comparison:

- Meta Llama models often win on ecosystem reach, but Qwen is pushing harder on coding-specific agent behavior.

- DeepSeek has earned attention for coding and reasoning, yet Qwen’s 27B dense format is easier to reason about operationally.

- Qwen3.5-397B-A17B is much larger on paper, but Qwen3.6-27B beats it on the benchmarks Qwen chose to highlight.

- Qwen’s open-source stack keeps getting more usable for local and agentic deployment.

The deployment angle may matter more than the benchmark bragging rights. A 397B MoE model can be impressive in a slide deck, but a 27B dense model is easier to fit into real infrastructure budgets and simpler to optimize for latency.

That is where Qwen3.6-27B could earn adoption: not by being the biggest model in the room, but by being the one teams can actually run, inspect, and integrate without a lot of engineering drama.

What this release says about open AI coding models

Qwen3.6-27B is a useful reminder that model quality is becoming more specialized. The best open coding models are no longer just general chatbots with code training attached. They are being shaped around terminal use, repair loops, document understanding, and multimodal context.

If Qwen’s numbers hold up under wider community testing, this model could become a default choice for open agentic coding experiments, especially for teams that want strong performance without a huge serving bill. The bigger question is whether developers will prefer a dense 27B model that is easier to deploy over a larger MoE model that looks better in raw parameter count.

My bet: the next wave of adoption will reward models like this one, especially inside products that need fast iteration and predictable infrastructure costs. If you are building an AI coding tool this quarter, Qwen3.6-27B is worth testing before you lock in your model choice.

// Related Articles

- [MODEL]

MiniMax-M1 brings 1M-token open reasoning model

- [MODEL]

Gemini Omni Video Review: Text Rendering Beats Rivals

- [MODEL]

Why Xiaomi’s MiMo-V2.5-Pro Changes Coding Agents More Than Chatbots

- [MODEL]

OpenAI’s Realtime Audio Models Target Live Voice

- [MODEL]

Anthropic发布10款金融AI Agent

- [MODEL]

Why Claude’s “Infinite” Context Window Still Won’t Make AI Autonomous