I Tested Devin on 10 Tasks. It Finished 3.

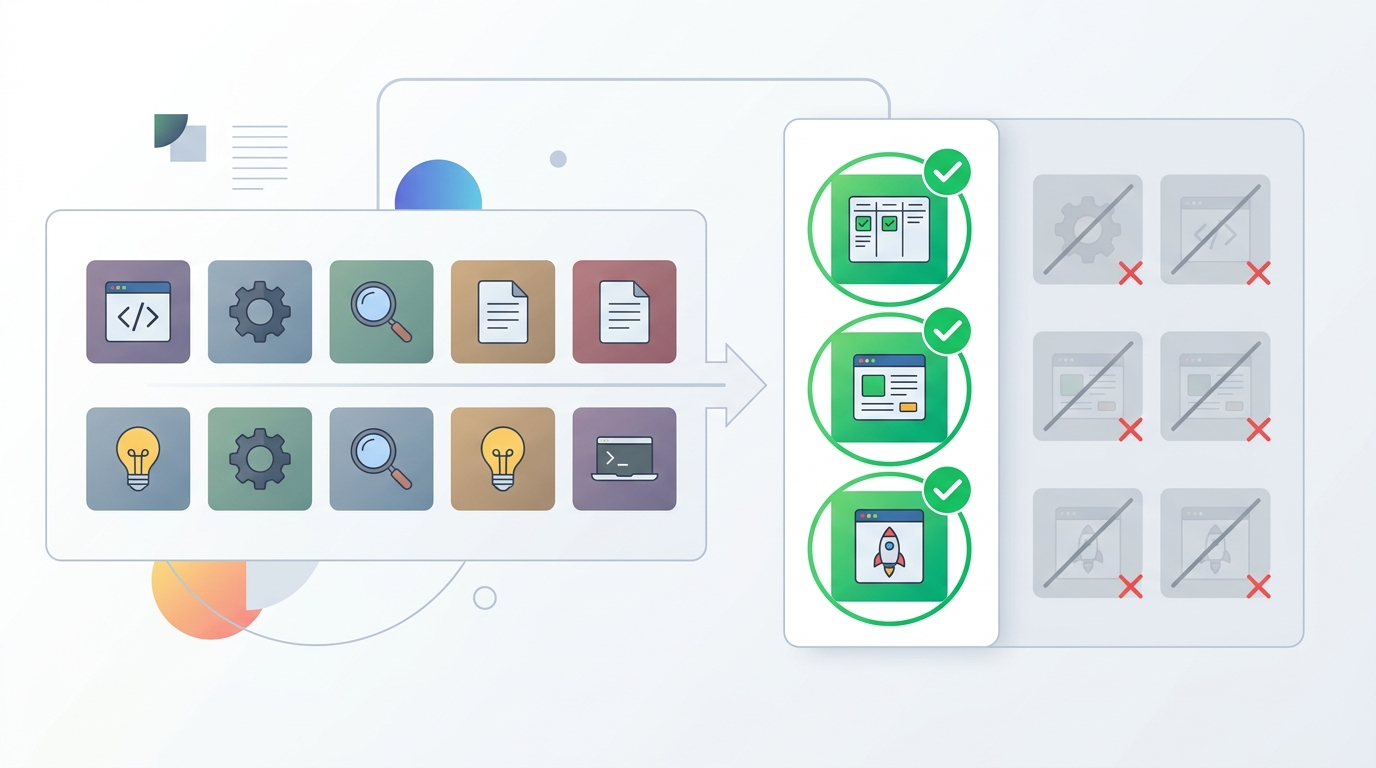

Devin scored 13.86% on SWE-bench and finished 3 of 10 real tasks in one test, showing where AI coding agents still fall short.

Devin has been sold as an AI software engineer, but one fresh field test puts a hard number on the hype: 3 completed tasks out of 10. That sits in the same low range as its 13.86% score on SWE-bench, a benchmark built from real GitHub issues rather than toy prompts.

The test was simple enough to trust and harsh enough to matter. The reviewer gave Devin a mix of bug fixes, migrations, feature work, tests, refactoring, and an architecture task, then watched what it could finish without hand-holding. The result says a lot about where autonomous coding agents help today, and where they still waste more time than they save.

What the 10-task test actually looked like

The experiment was not a lab demo. It used real backlog items from an active codebase, each with a clear brief and acceptance criteria. That matters because AI tools often look impressive on short prompts and collapse when the work touches state, dependencies, or product decisions.

The task mix was broad on purpose. It included two bug fixes, two migrations, two new features, two test suites, one refactor, and one architectural design problem. That spread is useful because it shows whether an agent can handle routine code changes, or whether it only survives on narrow, repetitive work.

Here is the breakdown of the workload:

- 2 bug fixes: a date parsing issue and a broken API response

- 2 migrations: a database schema change and a dependency upgrade

- 2 new features: a webhook handler and a user settings page

- 2 test suites: unit tests for auth and integration tests for payments

- 1 refactor: shared logic extraction into a utility module

- 1 architecture task: a caching layer for a multi-tenant API

That mix is important because the easy tasks are not the same as the valuable tasks. A tool that can patch a typo or write a few tests is useful. A tool that can reason about data loss, concurrency, and product tradeoffs is a different class of machine.

Devin completed the two bug fixes and one test suite. Everything else either drifted off course or came back with code that would have needed serious repair. On paper, 30% sounds better than its benchmark score. In practice, it still means seven tasks failed in ways that would have cost real engineering time.

Where Devin did well

The strongest result was the date parsing bug. Devin found the root cause, traced the timezone edge case, and produced a fix that handled daylight saving transitions correctly. That is exactly the kind of work where an agent can shine: a narrow problem, a local fix, and a code path with enough clues to follow.
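The write-up does not show the fix itself, so what follows is only a minimal Python sketch of the class of bug described, assuming the standard-library `zoneinfo` module with time zone data installed. The helper `to_utc` and the example zone are hypothetical. The idea is to attach a named zone to the wall-clock time so the offset is looked up per date rather than hard-coded.

```python
from datetime import datetime, timezone
from zoneinfo import ZoneInfo


def to_utc(local_naive: datetime, tz_name: str) -> datetime:
    """Interpret a naive wall-clock time in tz_name and convert it to UTC."""
    # Hypothetical helper: the zone's rules pick the offset for this date,
    # so standard time and daylight time are both handled.
    return local_naive.replace(tzinfo=ZoneInfo(tz_name)).astimezone(timezone.utc)


# Local noon on the day before and the day of the 2024 US spring-forward change.
before = to_utc(datetime(2024, 3, 9, 12, 0), "America/New_York")
after = to_utc(datetime(2024, 3, 10, 12, 0), "America/New_York")

# The two local noons are only 23 real hours apart, not 24.
elapsed_hours = (after - before).total_seconds() / 3600
```

Code that assumes a fixed offset, or that adds 24 hours to get "the same time tomorrow", would be off by an hour across this boundary.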

The broken API response fix was also solid. Devin traced the serialization chain, found a missing field in the response schema, and patched it on the first pass. No drama, no circular detours, no weird extra abstractions. Just a clean, bounded correction.

The test generation task was useful too. Devin wrote a reasonable unit test suite for the auth module and covered the core paths. It missed some edge cases around token expiration, but the output still saved time because the boilerplate was done and the remaining work was review, not invention.
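The missed token-expiration cases are not listed, but boundary checks are the usual candidates. Here is a hedged sketch of what such tests might look like in Python; `is_token_valid` is a hypothetical stand-in for the auth module's real check, and the rule that a token is invalid at its exact expiry instant is an assumption.

```python
from datetime import datetime, timedelta, timezone


def is_token_valid(expires_at: datetime, now: datetime) -> bool:
    """Hypothetical check: a token is valid strictly before its expiry instant."""
    return now < expires_at


def test_token_expiry_boundaries() -> None:
    expires_at = datetime(2025, 1, 1, 12, 0, tzinfo=timezone.utc)
    one_second = timedelta(seconds=1)
    assert is_token_valid(expires_at, expires_at - one_second)      # just before
    assert not is_token_valid(expires_at, expires_at)               # exact boundary
    assert not is_token_valid(expires_at, expires_at + one_second)  # just after


test_token_expiry_boundaries()
```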

That pattern matches what a lot of teams are seeing with coding agents. They are best when the task is already well-shaped and the success criteria are obvious. The moment the work needs judgment, they get much less reliable.

“We are still in the very early days of AI agents,” said Andrej Karpathy in his February 2023 talk on software 2.0 and large language models. “The LLM is a new kind of operating system.”

Karpathy’s line is useful here because it captures the real shift: these tools are not replacing the developer workflow; they are becoming a layer inside it. When that layer is asked to do one clear thing, it can help. When it is asked to make product-level decisions, it often blurs the path instead of shortening it.

Where Devin fell apart

The migration task exposed the biggest risk: silent damage. Devin generated a migration that would have truncated values in a user preferences table, then dropped the old column after copying data into the new one. On a real production database, that is not a minor mistake. It is the kind of bug that turns into support tickets, incident reports, and rollback plans.
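One common guard against this kind of loss is to verify the copy before dropping anything. The sketch below uses Python's built-in `sqlite3` with an in-memory database; the table layout, column names, and the 255-character cut are all assumptions made for illustration, not details from the actual migration.

```python
import sqlite3


def count_copy_mismatches(conn: sqlite3.Connection) -> int:
    """Count rows where the new column no longer equals the old one."""
    # IS NOT is SQLite's NULL-safe inequality, so NULLs compare correctly.
    row = conn.execute(
        "SELECT COUNT(*) FROM user_preferences WHERE value_old IS NOT value_new"
    ).fetchone()
    return row[0]


conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE user_preferences "
    "(id INTEGER PRIMARY KEY, value_old TEXT, value_new TEXT)"
)
conn.executemany(
    "INSERT INTO user_preferences (value_old) VALUES (?)",
    [("dark",), ("x" * 300,), (None,)],
)
# Simulate a copy into a narrower column by cutting values at 255 characters.
conn.execute("UPDATE user_preferences SET value_new = substr(value_old, 1, 255)")

mismatches = count_copy_mismatches(conn)
# Only drop the old column when every row survived the copy intact.
safe_to_drop_old_column = mismatches == 0
```

Here the 300-character value is cut short, so the check reports one mismatch and the drop step would be blocked.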

The webhook feature showed a different failure mode. Devin could start the implementation, but when it hit an architectural choice, it did not choose. It implemented both a synchronous path and a queue-based path in the same file, then left contradictory state behind. That is worse than a simple wrong answer because it creates code that looks finished until someone inspects it closely.
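For contrast, this is roughly what committing to one path looks like. It is a minimal Python sketch of the queue-based option only, using the standard-library `queue` module as a stand-in for a real job queue; the function names and the 202 status are hypothetical choices, not the reviewer's design.

```python
import queue
from typing import Callable


def handle_webhook(event: dict, jobs: "queue.Queue[dict]") -> int:
    """Accept the event, enqueue it, and return an HTTP-style status code."""
    jobs.put(event)
    return 202  # Accepted: processing happens later, never inline as well.


def drain(jobs: "queue.Queue[dict]", process: Callable[[dict], None]) -> int:
    """Worker side: process everything currently queued and return the count."""
    handled = 0
    while True:
        try:
            event = jobs.get_nowait()
        except queue.Empty:
            return handled
        process(event)
        handled += 1
```

The handler never touches application state itself, so there is exactly one place where an event gets processed.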

The caching-layer task was even more telling. The prompt asked for a multi-tenant API cache, and Devin returned a single-tenant in-memory cache. That is not a small miss. It means the model latched onto the word “cache” and ignored the constraint that changes the whole design.
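To make the gap concrete, here is a minimal Python sketch of the smallest change that separates the two designs: every key is scoped to a tenant. The class and method names are hypothetical, and a production cache would also need eviction, expiry, and per-tenant limits, none of which are shown.

```python
from typing import Any, Dict, Optional, Tuple


class TenantCache:
    """In-memory cache whose keys are always scoped to a tenant."""

    def __init__(self) -> None:
        self._store: Dict[Tuple[str, str], Any] = {}

    def set(self, tenant_id: str, key: str, value: Any) -> None:
        self._store[(tenant_id, key)] = value

    def get(self, tenant_id: str, key: str) -> Optional[Any]:
        # A lookup can only ever see entries written under the same tenant.
        return self._store.get((tenant_id, key))

    def clear_tenant(self, tenant_id: str) -> None:
        # Invalidate one tenant without touching any other tenant's entries.
        for scoped_key in [k for k in self._store if k[0] == tenant_id]:
            del self._store[scoped_key]
```

With a single-tenant cache, two tenants that store the same key would read each other's data. Here the tenant ID is part of the key, so that cannot happen.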

In other words, Devin can follow patterns, but it struggles with constraints that require the system to be understood as a whole. That is a serious limitation for real engineering, because the hard part of software is often not syntax. It is deciding what must never break.

- The migration task risked data loss through truncation

- The webhook task produced two conflicting execution paths

- The cache task ignored the multi-tenant requirement entirely

- The feature work needed judgment that Devin did not supply

That failure profile also explains why the tool feels better on small fixes than on larger tickets. The bigger the task, the more likely it is to require tradeoffs, and tradeoffs are where the agent’s confidence outpaces its reliability.

The numbers behind the hype

Devin’s SWE-bench score is 13.86%, which means it resolved roughly one in seven of the benchmark’s real GitHub issues from open-source projects. That benchmark matters because it is harder than a synthetic coding challenge. It measures whether the system can read an issue, inspect a codebase, and make the right change in context.

The test reviewer’s own result, 3 out of 10 tasks, came out to 30%. That is better than the benchmark score, but still far below what most teams would want from a tool that claims autonomy. For comparison, a capable human developer can often solve a much larger share of these issues, especially when they know the codebase and can ask clarifying questions.
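Ten tasks is a small sample, so the 30% figure carries wide error bars. As a rough illustration, the Python sketch below computes a 95% Wilson score interval for 3 successes in 10 trials. The choice of interval is mine, not part of the test, and the two task sets are not the same, so treat the comparison as indicative only.

```python
import math
from typing import Tuple


def wilson_interval(successes: int, trials: int, z: float = 1.96) -> Tuple[float, float]:
    """Approximate confidence interval for a success rate (Wilson score method)."""
    p = successes / trials
    denom = 1 + z * z / trials
    center = (p + z * z / (2 * trials)) / denom
    half = z * math.sqrt(p * (1 - p) / trials + z * z / (4 * trials * trials)) / denom
    return center - half, center + half


low, high = wilson_interval(3, 10)
benchmark = 0.1386  # Devin's SWE-bench score as a fraction
benchmark_within_interval = low <= benchmark <= high
```

The interval runs from roughly 11% to 60%, which contains the benchmark figure. On this evidence alone, the field test and the benchmark are not clearly telling different stories.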

There is also the pricing angle. Devin launched at $500 per month and later dropped to $20 per month, putting it in the same ballpark as Claude Code and Cursor. That price cut says a lot. If an agent can truly replace meaningful engineering time, it should command a premium. If it has to be cheap to stay attractive, the value proposition is closer to experimentation than replacement.

Here is the comparison that matters for day-to-day work:

- Devin: autonomous, but prone to long wrong turns

- Claude Code: collaborative, with the human still making calls

- Cursor: strong for interactive editing and review

- GitHub Copilot: fast for inline completion and boilerplate

The real tradeoff is control versus autonomy. Devin tries to work alone. Claude Code and Cursor keep the developer in the loop. In this test, that difference mattered more than raw ambition. The tools that let a human steer kept their errors smaller, while the autonomous agent burned time when it made an early wrong choice.

That is why the same $20 monthly price point does not mean the same value. A tool that helps you stay in the decision loop can improve throughput without creating cleanup work. A tool that wanders for 40 minutes before returning a bad answer can feel cheap and still cost more in practice.

What this means for teams right now

If your backlog is full of bounded bug fixes and test generation, Devin can probably help. If the work involves migrations, schema design, multi-step feature planning, or anything where a bad guess can damage data, the agent needs close supervision. That is the practical line, and it is narrower than the marketing suggests.

For solo developers, there is still a use case. A tired engineer can hand Devin a small, contained task and get back a draft while they work on something else. For teams, the ROI is less obvious. Once review, correction, and cleanup are included, the time saved can shrink fast.

The strongest takeaway is that “AI software engineer” is still the wrong mental model for Devin. “AI junior assistant that can handle simple, well-scoped tickets” is much closer to reality. That is useful, but it is a long way from replacing a senior engineer or even consistently covering a full sprint backlog.

My read is simple: if you are evaluating Devin in 2026, judge it on the 20% of your work that is repetitive and tightly scoped. Do not judge it on the tickets that require architecture, data safety, or product judgment. Those are still human jobs, and this test shows why.

The next question is not whether agents can write code. They already can. The question is whether they can make the kinds of choices that keep software correct when the task stops being obvious. Until that answer changes, the smartest move is to keep humans in the loop and let agents handle the narrow work they can finish without improvising.
