Why Claude’s real advantage is not raw intelligence

Claude’s edge is its safety-first product design, not just model quality.

Claude is winning because Anthropic turned alignment, product packaging, and agentic tools into a business advantage instead of treating them as compliance overhead.

That matters because Claude is not just another chatbot with a new coat of paint. Anthropic has built a stack that includes constitutional AI, tiered model families like Haiku, Sonnet, and Opus, and tools such as Claude Code, browser control, and enterprise connectors. The result is a product that sells into serious work: coding, research, document generation, and workflow automation. The public record also shows the company drawing a hard line on military and surveillance use, even when that stance created friction with the U.S. government. That is not a side note. It is the center of Claude’s market identity.

First argument: Claude’s safety posture is a product feature, not a PR layer

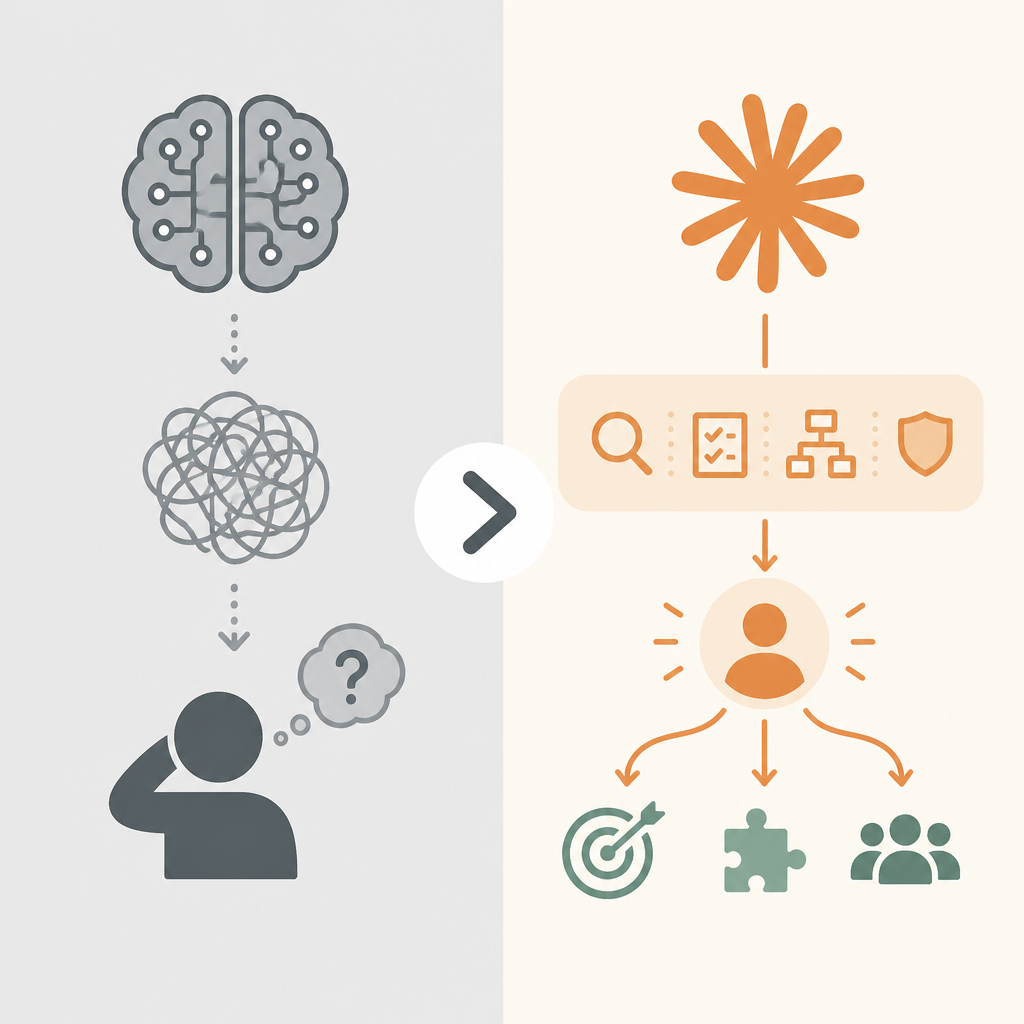

Anthropic’s constitutional AI approach gives Claude a clear operating philosophy. Instead of relying only on human feedback, the model is trained with a written constitution: a set of principles it uses to critique and revise its own responses, with the goal of being helpful and harmless. That is a real design choice, not a slogan. In practice, it gives buyers a reason to trust the system in environments where mistakes are expensive: enterprise support, internal knowledge work, and regulated industries.

The strongest evidence is how Anthropic has handled use restrictions. The company refused to remove contractual prohibitions on mass domestic surveillance and fully autonomous weapons, and that refusal triggered a U.S. federal response that treated Anthropic as a supply-chain risk. Most AI vendors chase every possible deployment. Anthropic chose to limit some of them. That decision likely narrows the addressable market in the short run, but it also makes Claude easier to adopt for companies that need a model with a visible policy boundary.

Second argument: Claude’s product ladder is built for real workflows, not demo reels

The Haiku, Sonnet, and Opus lineup is smart because it maps model power to business need. Not every task needs the highest-end model, and Anthropic knows it. A developer debugging code, a PM drafting a spec, and a legal team reviewing a document do not need the same cost profile. By offering smaller and larger variants, Anthropic makes Claude usable across a wider range of budgets and latency requirements. That is how a model becomes infrastructure instead of a novelty.
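To make the cost-versus-capability trade-off concrete, here is a minimal sketch of how an application might route work across a tiered lineup. The tier names mirror the Haiku, Sonnet, and Opus ladder, but the `Tier` class, the `pick_tier` function, and every cost and capability number are hypothetical placeholders, not Anthropic's pricing or API.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Tier:
    name: str
    relative_cost: int  # made-up unit cost per request, for illustration only
    capability: int     # made-up capability score, higher means stronger


# Hypothetical ladder: the numbers are assumptions, not published figures.
TIERS: List[Tier] = [
    Tier("haiku", relative_cost=1, capability=1),
    Tier("sonnet", relative_cost=4, capability=2),
    Tier("opus", relative_cost=20, capability=3),
]


def pick_tier(required_capability: int, budget_per_request: int) -> Tier:
    """Return the cheapest tier that meets the capability need within budget."""
    candidates = [
        t for t in TIERS
        if t.capability >= required_capability
        and t.relative_cost <= budget_per_request
    ]
    if not candidates:
        raise ValueError("no tier satisfies both capability and budget")
    return min(candidates, key=lambda t: t.relative_cost)
```

Under these assumed numbers, a routine task with a modest budget lands on the smallest tier, while a task that demands the top capability score fails loudly if the budget cannot cover it. The point of the sketch is the shape of the decision, not the specific thresholds.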

The agent tools reinforce that strategy. Claude Code lets developers delegate tasks from the terminal. Claude for Chrome pushes the model into browser control. Claude Cowork brings those same capabilities to non-technical users through a GUI and local file access. Claude Design extends the system into slides, prototypes, and marketing assets. These are not isolated features. They are a deliberate attempt to turn Claude into a work surface. The reported growth in Claude Code revenue and the fact that major tech companies use the tool internally show that the market is responding to utility, not hype.

The counter-argument

The best case against this view is simple: Claude’s safety story is only valuable if the model is good enough, and model quality still drives adoption. If a competitor is faster, cheaper, or better at coding, then alignment principles do not matter much. Buyers care about output first. They will switch if another system produces better answers, better code, or lower costs. From that angle, Claude’s policy-heavy branding looks secondary to the underlying model performance.

There is also a legitimate concern that guardrails slow the product down. A model that refuses more requests, limits certain actions, or requires more careful prompting can feel less capable than a more permissive rival. In agentic systems especially, friction is a real tax. If the model cannot act quickly across browsers, files, and apps, users will not keep it in their workflow. That is why many AI products win by being aggressive rather than cautious.

That counter-argument is incomplete. Claude does need strong model performance, and Anthropic has clearly invested in that. But the company’s differentiation is not raw capability alone. It is the combination of capability with trust, clear boundaries, and workflow integration. In enterprise AI, trust is not a soft benefit. It shortens procurement, lowers legal risk, and makes internal rollout easier. Claude’s market position shows that buyers are willing to trade a little permissiveness for a system they can actually govern.

What to do with this

If you are an engineer, PM, or founder, stop treating safety, permissions, and model tiers as afterthoughts. Design your AI product so the user can understand what it will do, what it will not do, and which model level is appropriate for the task. Build agentic features only when you can bound their access, audit their actions, and explain their failure modes. Claude’s rise makes the point plainly: the winners in AI will not just be the smartest models, but the ones that can be trusted inside real organizations.
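As one illustration of bounding access and auditing actions, the sketch below wraps an agent's tools in an explicit allowlist and records every attempt. `BoundedAgent`, its fields, and the action names are hypothetical and do not correspond to any Claude or Anthropic API; a real system would also need authentication, persistent log storage, and review tooling.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class BoundedAgent:
    # Only actions registered here can ever run (the access boundary).
    allowed_actions: Dict[str, Callable[[str], str]]
    # Every attempt is recorded, including denials and failures.
    audit_log: List[dict] = field(default_factory=list)

    def run(self, action: str, argument: str) -> str:
        entry = {"action": action, "argument": argument}
        if action not in self.allowed_actions:
            self.audit_log.append({**entry, "status": "denied"})
            raise PermissionError(f"action '{action}' is not permitted")
        try:
            result = self.allowed_actions[action](argument)
        except Exception as exc:
            # Log the failure so it can be explained later, then re-raise.
            self.audit_log.append({**entry, "status": "failed", "error": repr(exc)})
            raise
        self.audit_log.append({**entry, "status": "ok"})
        return result


# Hypothetical usage: the agent may read notes but has no delete action.
agent = BoundedAgent(allowed_actions={"read_note": lambda name: f"contents of {name}"})
agent.run("read_note", "spec.md")
```

Because denied and failed attempts are logged alongside successful ones, the same record answers both "what did the agent do" and "what did it try to do", which is the information a reviewer needs when explaining a failure.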
