Why Kimi K2.6 Changes the Coding Model Race

Kimi K2.6 is the open-weight coding model that matches GPT-5.5 on SWE-Bench Pro at far lower cost.

Kimi K2.6 is the first open-weight model that makes the closed frontier look overpriced for serious coding work. Moonshot AI released it on April 20, 2026, and the headline is not hype: it tied GPT-5.5 on SWE-Bench Pro at 58.6 while costing about $0.95 per million input tokens and $4.00 per million output tokens, versus $5 and $30 for GPT-5.5. That is not a marginal discount. It is the kind of gap that changes procurement, product design, and the default model choice inside real engineering teams.

First, K2.6 wins where developers actually feel pain

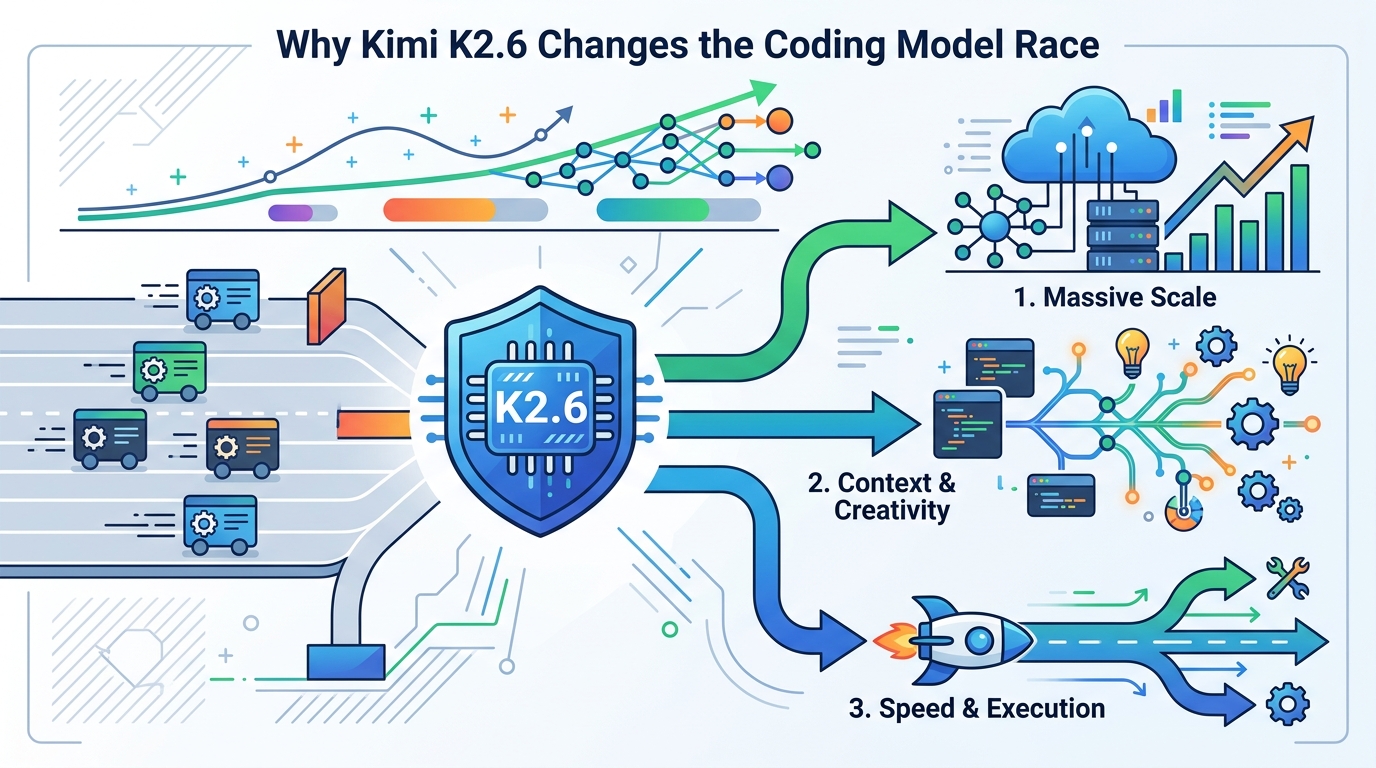

The benchmark that matters here is not a synthetic trivia test. SWE-Bench Pro measures whether a model can fix real software issues in real repositories, and K2.6 tied GPT-5.5 on it. That matters because coding assistants are judged by one thing: can they land the patch without turning the repo into a mess. K2.6 also improved long-horizon coding completion by 185% over K2.5 and raised Terminal-Bench 2.0 from 50.8 to 66.7, which tells you Moonshot focused on sustained work, not just short answers.

That focus shows up in the product shape. K2.6 is built for agentic coding, not just autocomplete. It accepts vision input, handles long context up to 256K tokens, and can keep a task coherent across hours of work. In practical terms, this is the first open model that makes “leave it alone and come back to a working refactor” a normal expectation instead of a demo trick. For teams that spend their days on bug fixes, migrations, and integration work, that is the difference that counts.

Second, the price gap is too large to ignore

Cost is where the argument stops being academic. On Moonshot’s own API pricing, K2.6 is roughly one-fifth the input cost and one-seventh the output cost of GPT-5.5. Claude Opus 4.7 scores higher on SWE-Bench Pro at 64.3, but at $5 per million input tokens and $25 per million output tokens it costs far more than K2.6. If your team ships code every day, the model choice is no longer about prestige. It is about burn rate.
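To make the gap concrete, here is a minimal sketch of the arithmetic in Python. The per-million-token prices are the ones quoted in this article; the workload size (2 million input tokens, 500,000 output tokens) and the helper name `job_cost` are assumptions chosen purely for illustration, not figures from Moonshot or any other vendor.

```python
# Prices in USD per million tokens (input, output), as quoted in this article.
PRICES = {
    "Kimi K2.6": (0.95, 4.00),
    "GPT-5.5": (5.00, 30.00),
    "Claude Opus 4.7": (5.00, 25.00),
}


def job_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the API cost in USD for one workload on the given model."""
    input_price, output_price = PRICES[model]
    return (input_tokens * input_price + output_tokens * output_price) / 1_000_000


# Hypothetical workload, assumed only for this example.
INPUT_TOKENS = 2_000_000
OUTPUT_TOKENS = 500_000

if __name__ == "__main__":
    for model in PRICES:
        cost = job_cost(model, INPUT_TOKENS, OUTPUT_TOKENS)
        print(f"{model}: ${cost:.2f}")

    # Price ratios behind the "one-fifth" and "one-seventh" figures.
    k_in, k_out = PRICES["Kimi K2.6"]
    g_in, g_out = PRICES["GPT-5.5"]
    print(f"input ratio: {k_in / g_in:.2f}, output ratio: {k_out / g_out:.2f}")
```

On that assumed workload the totals come to about $3.90 for K2.6, $25.00 for GPT-5.5, and $22.50 for Claude Opus 4.7. The real ratio for your team depends on your own mix of input and output tokens, since output-heavy work widens the gap.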

Open weights make the economics even stronger. K2.6 is available on Hugging Face under a modified MIT license, which means teams can self-host, fine-tune, and avoid vendor lock-in for most uses. Moonshot adds a UI branding requirement only for products above 100 million monthly active users or $20 million in monthly revenue. For everyone else, the model behaves like a truly open asset. That matters because the cheapest token is the one you do not have to send to a closed API at all.

The agent swarm is the real product shift

The most important feature in K2.6 is not the benchmark score. It is the 300-agent swarm system. Moonshot says the model can split a task across hundreds of sub-agents, coordinate up to 4,000 steps, and run for 13 hours in one autonomous session. That is a direct challenge to the current assumption that serious agent work belongs to closed systems with expensive orchestration. K2.6 turns parallelism into a model feature, not a framework tax.

This matters because orchestration is where many AI coding projects fail. Most teams do not need another chatbot. They need a system that can decompose work, assign subtasks, recover from stalls, and keep state across a long build. By Moonshot's account, K2.6 does that inside the model. For product teams, that means fewer hand-built agent pipelines. For engineers, it means fewer brittle glue layers. For founders, it means a lower-cost way to ship AI-assisted features that actually complete multi-step work.

The counter-argument

The strongest case against K2.6 is simple: it is not the best model overall. Claude Opus 4.7 leads on SWE-Bench Pro, GPT-5.5 leads on broader intelligence scores, and both closed models offer larger context windows. If you are building a high-stakes system where absolute quality outweighs cost, paying for the best closed model is a rational choice. There is also a trust argument. Open weights do not erase the need for evaluation, guardrails, or human review, and agent swarms can amplify mistakes as efficiently as they amplify productivity.

That critique is fair, but it does not defeat the case for K2.6. The question is not whether it beats every frontier model in every category. It does not. The question is whether an open model can now do first-tier coding work at a fraction of the cost. On that question, K2.6 wins decisively. It ties GPT-5.5 on the benchmark that tracks real developer pain, and it does so with open weights and aggressive pricing. That is enough to make it the default choice for a huge class of coding workloads.

What to do with this

If you are an engineer, test K2.6 on one real repo task this week: a bug fix, a migration, or a feature that needs tests and follow-up edits. If you are a PM, compare its cost and completion rate against your current assistant on a task that takes more than one turn. If you are a founder, treat K2.6 as a product lever, not a curiosity. Use it where long-horizon coding, autonomous research, or multi-step agent work can cut build time and token spend at the same time. The market just got a cheaper serious option, and ignoring it now is a budgeting mistake.
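One simple way to run that cost-versus-completion comparison is to look at cost per completed task rather than cost per attempt. The sketch below assumes failed attempts are simply retried and that each attempt succeeds independently at the measured completion rate, so the expected number of attempts is one divided by that rate. The function name and every number in it are placeholders to be replaced with your own measurements.

```python
def cost_per_completed_task(cost_per_attempt: float, completion_rate: float) -> float:
    """Expected spend per successful task, assuming independent retries.

    cost_per_attempt: average USD spent on one attempt at the task.
    completion_rate:  fraction of attempts that finish the task (0 < rate <= 1).
    """
    if not 0 < completion_rate <= 1:
        raise ValueError("completion_rate must be in the interval (0, 1]")
    return cost_per_attempt / completion_rate


if __name__ == "__main__":
    # Placeholder measurements, invented for illustration only.
    candidates = {
        "cheaper model": (0.40, 0.60),
        "pricier model": (2.50, 0.70),
    }
    for name, (attempt_cost, rate) in candidates.items():
        expected = cost_per_completed_task(attempt_cost, rate)
        print(f"{name}: ${expected:.2f} per completed task")
```

With these placeholder inputs the cheaper model still wins (about $0.67 versus $3.57 per completed task), but a large enough gap in completion rate can reverse the result, which is why the measurement has to come from your own repo.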
