Amazon Bedrock Agents Gets Multi-Agent Workflows

AWS adds memory, code execution, and multi-agent collaboration to Bedrock Agents, aiming at harder enterprise workflows.

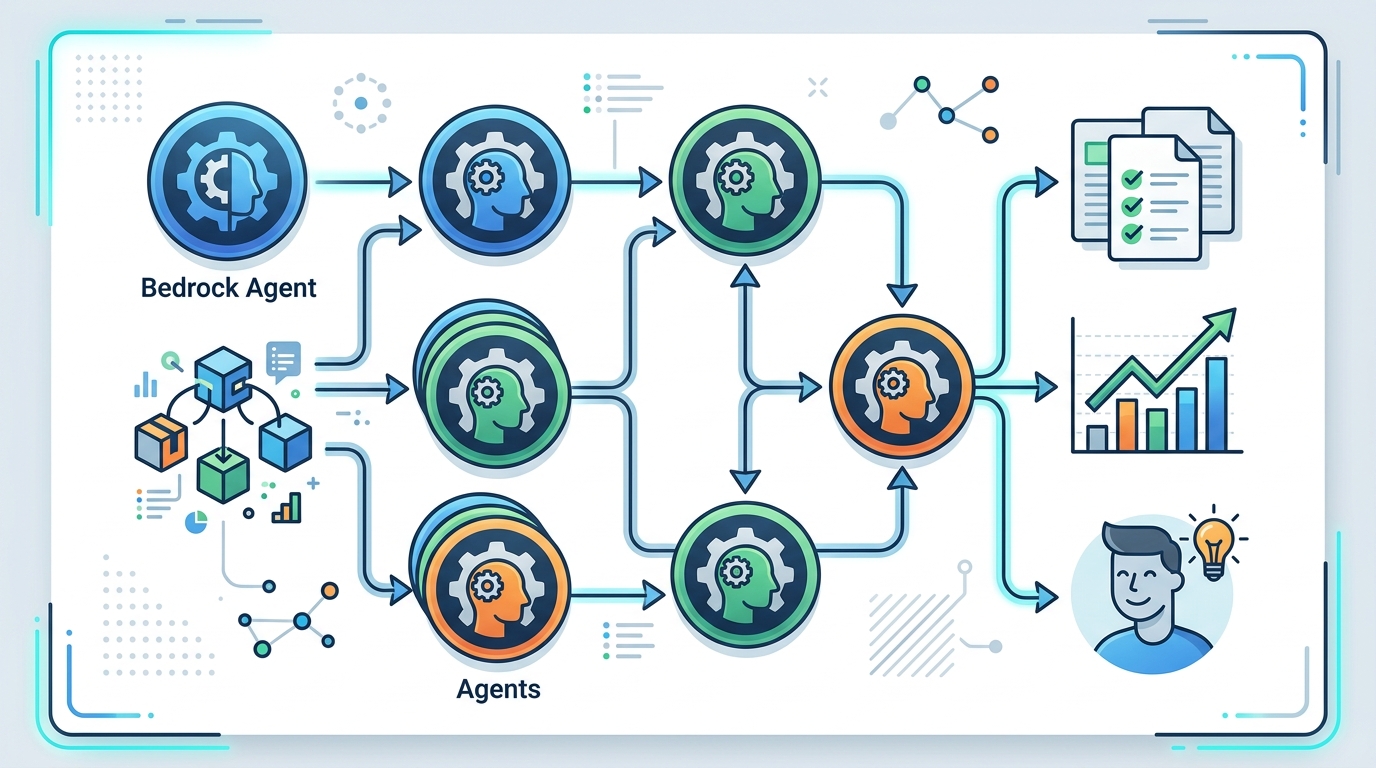

Amazon Bedrock Agents now pushes beyond simple tool use. AWS says agents can break down user requests, call APIs, pull from data sources, and coordinate multiple specialized agents under a supervisor agent.

The pitch is practical: automate multistep work without forcing teams to stitch together every workflow by hand. AWS also added memory retention, Amazon Bedrock Guardrails, and code interpretation, which makes the product feel less like a demo and more like infrastructure.

What AWS is actually shipping

Bedrock Agents is built for apps that need more than a single prompt and a single answer. AWS says an agent draws on the reasoning of foundation models, together with APIs and data, to decide what to do next. In practice, that means an agent can inspect a request, query a system, and complete a task without the developer hard-coding every branch.

The setup story is also a big part of the appeal. AWS says you can create an agent in a few steps: pick a model, write instructions in natural language, and connect the systems it needs. That lowers the barrier for teams that want agent behavior without building a full orchestration layer from scratch.
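As a rough illustration of those setup steps, the sketch below assembles the arguments for a `create_agent` call on the boto3 `bedrock-agent` client. The agent name, model ID, instruction text, and role ARN are placeholders invented for this example, and the helper `build_create_agent_request` is hypothetical, not part of any AWS SDK.

```python
from typing import Any


def build_create_agent_request(
    name: str, model_id: str, instruction: str, role_arn: str
) -> dict[str, Any]:
    """Collect the setup choices, plus an IAM role, into one request dict."""
    if not instruction.strip():
        raise ValueError("instruction must not be empty")
    return {
        "agentName": name,
        "foundationModel": model_id,       # step 1: pick a model
        "instruction": instruction,        # step 2: natural-language instructions
        "agentResourceRoleArn": role_arn,  # role the agent assumes to reach other systems
    }


# All values below are placeholders for illustration only.
create_request = build_create_agent_request(
    name="support-triage-demo",
    model_id="example-model-id",
    instruction="You triage support tickets and route them to the right queue.",
    role_arn="arn:aws:iam::123456789012:role/ExampleAgentRole",
)

# With AWS credentials configured, the request could be sent like this:
#   import boto3
#   boto3.client("bedrock-agent").create_agent(**create_request)
```

The third step, connecting systems, is not shown: action groups and knowledge bases are attached through separate calls after the agent exists.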

Here are the main capabilities AWS highlights:

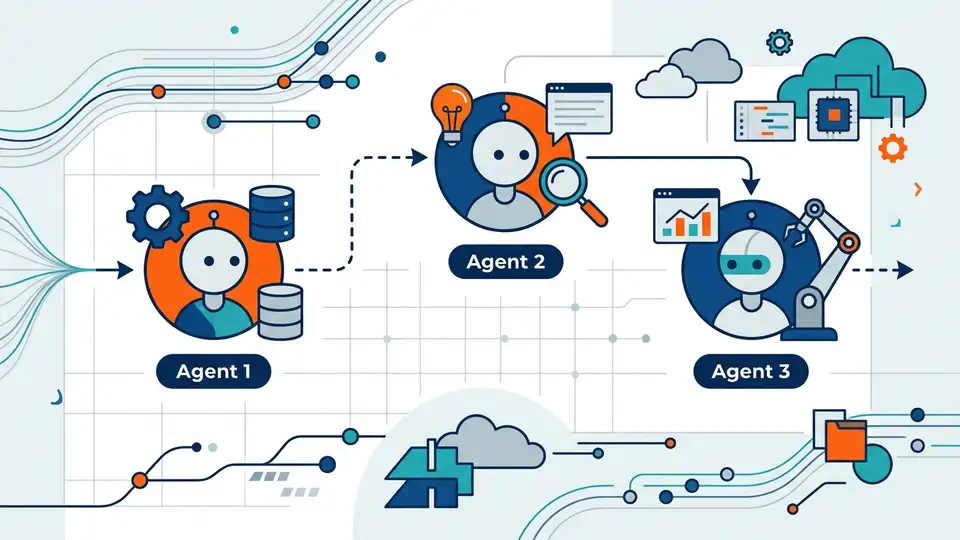

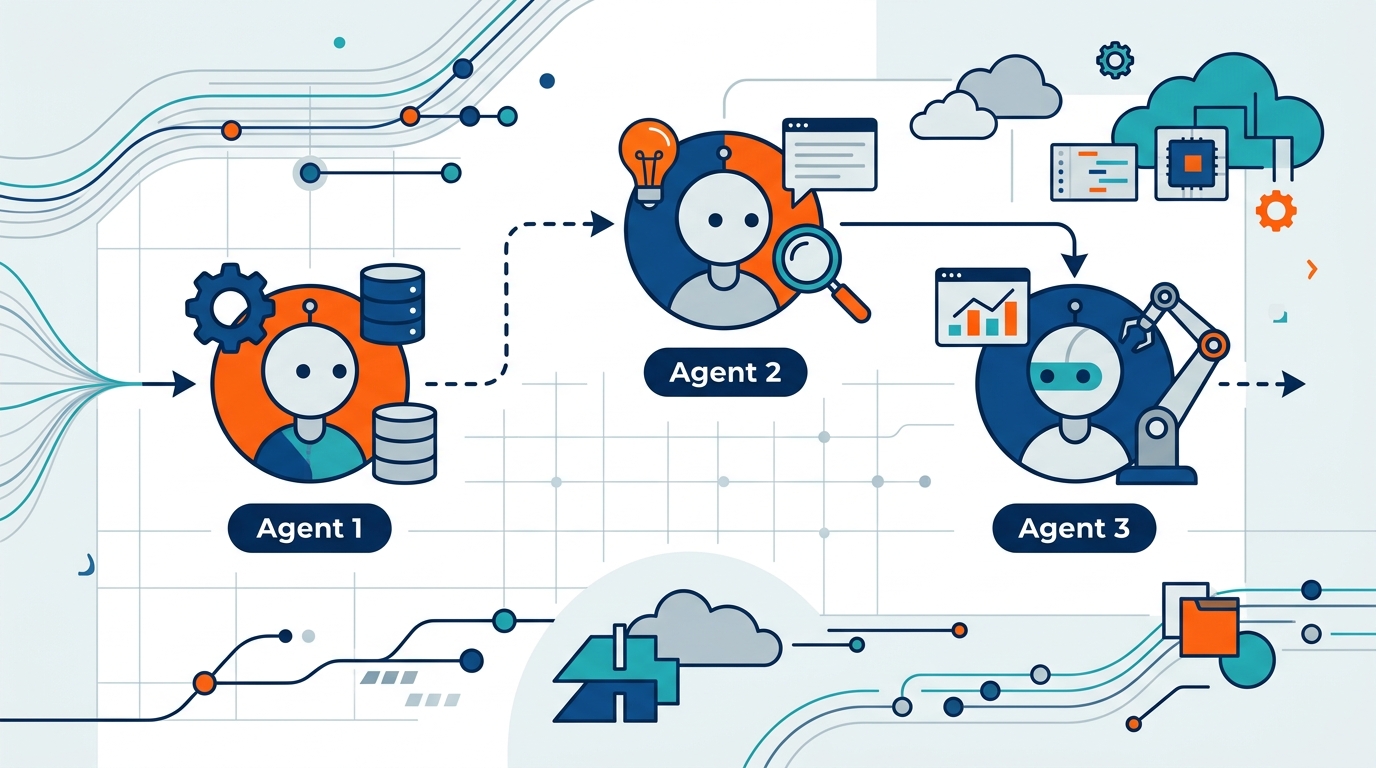

- Multi-agent collaboration: multiple specialized agents work under a supervisor agent

- Retrieval augmented generation: agents pull from company data sources for grounded answers

- Orchestrate and execute: agents break tasks into steps and call APIs as needed

- Memory retention: agents remember prior interactions across sessions

- Code interpretation: agents generate and run code in a secure environment

That mix matters because many enterprise AI projects stall on the boring parts: state, permissions, and integration. Bedrock Agents tries to make those parts first-class features instead of afterthoughts.

Why multi-agent collaboration matters

Multi-agent systems sound abstract until you map them to real business work. AWS describes a supervisor agent that breaks a complex process into smaller steps, then assigns those steps to specialized agents. That is a cleaner fit for workflows like claims handling, procurement checks, and support triage than a single general-purpose agent trying to do everything at once.

The interesting part is coordination. A single agent can answer a question, but a multi-agent setup can split responsibilities: one agent gathers data, another checks policy, another drafts a response. That structure can reduce the chance of one model doing too much with too little context.
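The split of responsibilities can be pictured with a toy supervisor in plain Python. This is a conceptual sketch only: the specialists are ordinary functions with made-up logic and run in a fixed order, whereas a Bedrock supervisor agent would use a model to decide the routing. Every name and threshold here is hypothetical.

```python
from dataclasses import dataclass
from typing import Any, Callable

State = dict[str, Any]


@dataclass
class Specialist:
    name: str
    handle: Callable[[State], State]


def gather_data(state: State) -> State:
    # Stand-in for a lookup against a claims system.
    return {**state, "record": {"claim_id": state["claim_id"], "amount": 1200}}


def check_policy(state: State) -> State:
    # Made-up rule: claims up to 5000 are auto-approved.
    return {**state, "approved": state["record"]["amount"] <= 5000}


def draft_response(state: State) -> State:
    verdict = "approved" if state["approved"] else "escalated for review"
    return {**state, "reply": f"Claim {state['claim_id']} was {verdict}."}


def supervise(request: State, specialists: list[Specialist]) -> tuple[State, list[str]]:
    """Pass the shared state through each specialist and record the handoffs."""
    state = dict(request)
    trail: list[str] = []
    for specialist in specialists:
        state = specialist.handle(state)
        trail.append(specialist.name)
    return state, trail


PIPELINE = [
    Specialist("data", gather_data),
    Specialist("policy", check_policy),
    Specialist("writer", draft_response),
]

result, trail = supervise({"claim_id": "C-17"}, PIPELINE)
print(result["reply"])
print(trail)
```

Each specialist sees only the shared state and adds its own piece, so the handoff trail doubles as a simple audit log.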

“A system is only as good as the interactions between its parts.” — Andrew S. Tanenbaum

Tanenbaum said that long before today’s agent boom, but the line fits here. If an agent platform cannot control handoffs, memory, and tool use, the whole workflow gets shaky fast. AWS is clearly betting that enterprises want a managed way to handle those interactions instead of building them from scratch.

There is also a business angle. Multi-agent collaboration lets teams separate concerns across domains, which is useful when one workflow touches finance, operations, and customer service. The more regulated the process, the more valuable that separation becomes.

How Bedrock compares with other agent stacks

Amazon is not alone in this space. OpenAI, Anthropic, and open-source frameworks such as LangChain and AutoGen all target agentic workflows. Bedrock’s edge is that it sits inside AWS’s cloud stack, where enterprise customers already keep data, identity, and compute.

That matters because agent systems are expensive to wire together if every piece lives in a different place. Bedrock Agents can sit closer to AWS services, which may reduce the amount of glue code needed for teams already building on AWS.

Here is the practical comparison:

- Bedrock Agents: managed agent orchestration inside AWS, with guardrails and memory built in

- LangChain: flexible open-source framework, but teams own more of the integration work

- AutoGen: strong for multi-agent experimentation, less opinionated about enterprise deployment

- OpenAI agent tooling: fast to prototype, but often paired with external systems for enterprise workflows

For developers, the tradeoff is clear. Bedrock gives you more managed pieces, while open-source stacks give you more control. If your team already runs workloads on AWS, the managed route may be easier to justify. If you want to experiment quickly across providers, the open-source route may still win.

Memory, guardrails, and code execution change the pitch

Memory retention is one of the more useful additions here. An agent that remembers previous interactions can reduce repetitive input, improve follow-up responses, and keep multistep workflows coherent. That matters in support, sales ops, and internal tooling, where users hate repeating themselves.
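One way to picture cross-session memory is the pairing of a per-conversation `sessionId` with a stable `memoryId` on the boto3 `bedrock-agent-runtime` `invoke_agent` call. The sketch below only builds the request dicts; the IDs are placeholders, the `user-<id>` naming scheme is an assumption made for this example, and the agent is assumed to have memory enabled in its configuration.

```python
import uuid
from typing import Any, Optional


def build_invoke_request(
    agent_id: str,
    alias_id: str,
    user_id: str,
    text: str,
    session_id: Optional[str] = None,
) -> dict[str, Any]:
    """Build invoke_agent arguments: a fresh session, but a stable memory key."""
    return {
        "agentId": agent_id,
        "agentAliasId": alias_id,
        "sessionId": session_id or uuid.uuid4().hex,  # new per conversation
        "memoryId": f"user-{user_id}",                # same across conversations
        "inputText": text,
    }


# Two separate conversations from the same (hypothetical) user.
monday = build_invoke_request("AGENT_ID", "ALIAS_ID", "42", "My order arrived damaged.")
friday = build_invoke_request("AGENT_ID", "ALIAS_ID", "42", "Any update on my replacement?")

# With credentials configured:
#   import boto3
#   boto3.client("bedrock-agent-runtime").invoke_agent(**friday)
```

Because both requests share a `memoryId`, the second conversation can build on the first even though the sessions differ.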

Guardrails are equally important, especially for enterprises that care about policy and safety. AWS says Bedrock Agents includes built-in security and reliability features through Amazon Bedrock Guardrails. That gives teams a way to constrain outputs and keep the agent aligned with company rules.
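As a hedged sketch of how that constraint might be wired in, the snippet below adds a `guardrailConfiguration` block to an agent request before it would be passed to `create_agent` or `update_agent` on the boto3 `bedrock-agent` client. The guardrail ID, version, and agent values are placeholders, and `with_guardrail` is a hypothetical helper written for this example.

```python
from typing import Any


def with_guardrail(
    agent_request: dict[str, Any], guardrail_id: str, version: str
) -> dict[str, Any]:
    """Return a copy of an agent request with a guardrail attached."""
    return {
        **agent_request,
        "guardrailConfiguration": {
            "guardrailIdentifier": guardrail_id,
            "guardrailVersion": version,
        },
    }


# Placeholder values for illustration only.
base_request = {"agentName": "policy-aware-demo", "foundationModel": "example-model-id"}
guarded_request = with_guardrail(base_request, "example-guardrail-id", "1")
```

The guardrail itself, including its content filters and denied topics, is defined separately in Amazon Bedrock Guardrails. The agent only references it by identifier and version.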

Code interpretation is the sleeper feature. AWS says agents can dynamically generate and execute code in a secure environment, which opens the door to data analysis, charts, and mathematical problem solving. That is a real step up from plain text generation because many enterprise questions need computation, not just language.
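Code interpretation is switched on per agent through a built-in action group. The sketch below builds the arguments for a `create_agent_action_group` call on the boto3 `bedrock-agent` client; the agent ID is a placeholder, the helper name is hypothetical, and the exact values should be checked against the current AWS documentation before use.

```python
from typing import Any


def build_code_interpreter_action_group(
    agent_id: str, agent_version: str = "DRAFT"
) -> dict[str, Any]:
    """Arguments that enable the built-in code interpreter on an agent."""
    return {
        "agentId": agent_id,
        "agentVersion": agent_version,
        "actionGroupName": "CodeInterpreterAction",
        # Built-in signature instead of a custom Lambda or API schema.
        "parentActionGroupSignature": "AMAZON.CodeInterpreter",
        "actionGroupState": "ENABLED",
    }


action_group_request = build_code_interpreter_action_group("AGENT_ID")

# With credentials configured:
#   import boto3
#   boto3.client("bedrock-agent").create_agent_action_group(**action_group_request)
```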

For teams evaluating the platform, the question is whether these features reduce production risk enough to justify the AWS lock-in that comes with a managed service. Memory and code execution are powerful, but they also raise the bar for testing, monitoring, and access control.

A few details from AWS’s own product page make the direction obvious:

- Agents can be created in just a few steps

- Multi-agent collaboration targets complex business workflows

- RAG connects agents to company data sources

- Code execution happens in a secure environment

- AgentCore is aimed at deploying and operating open-source agents at scale

That last point matters. Amazon Bedrock AgentCore suggests AWS wants to own more of the agent lifecycle, from building to deployment to operations. It is a strong signal that the company sees agents as a long-term cloud workload, not a novelty feature.

What developers should watch next

Bedrock Agents is most compelling for companies that already live inside AWS and want to automate workflows that touch internal systems. The combination of multi-agent collaboration, memory, and code execution makes it easier to imagine real production use cases instead of toy demos.

The risk is complexity. The more an agent can do, the more you need observability, evaluation, and tight access controls. Teams that adopt Bedrock Agents should be asking one question first: which workflow is repetitive enough, sensitive enough, and structured enough to benefit from an agent today?
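On the observability point, one starting place is the event stream that `invoke_agent` returns, which carries answer chunks and, when tracing is enabled, trace events. The sketch below separates the two. It runs against hand-written sample events, so the trace payload shown is hypothetical and much simpler than a real one.

```python
from typing import Any, Iterable


def split_agent_events(
    events: Iterable[dict[str, Any]],
) -> tuple[str, list[dict[str, Any]]]:
    """Separate answer text from trace events in an agent response stream."""
    text_parts: list[str] = []
    traces: list[dict[str, Any]] = []
    for event in events:
        if "chunk" in event:
            text_parts.append(event["chunk"].get("bytes", b"").decode("utf-8"))
        elif "trace" in event:
            traces.append(event["trace"])
    return "".join(text_parts), traces


# Hand-written stand-ins for a real response stream.
sample_events = [
    {"trace": {"note": "hypothetical: model chose a tool"}},
    {"chunk": {"bytes": b"Your claim "}},
    {"chunk": {"bytes": b"is approved."}},
]

answer, traces = split_agent_events(sample_events)
print(answer)
print(len(traces))
```

Logging the trace list next to each answer gives reviewers a record of what the agent did, not only what it said.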

My guess is that the first wins will come in support operations, claims processing, inventory checks, and internal analytics. If AWS keeps tightening the integration story around Amazon Bedrock and AgentCore, the platform could become the default choice for AWS-native agent systems. The next real test is simple: can these agents handle messy enterprise work without turning every workflow into a debugging exercise?
