GitHub Agentic Workflows puts AI agents in Actions

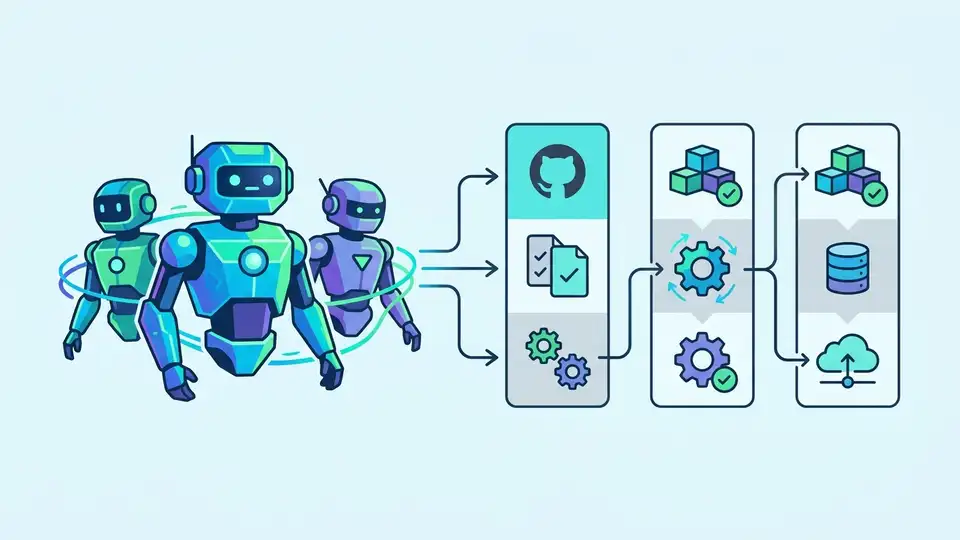

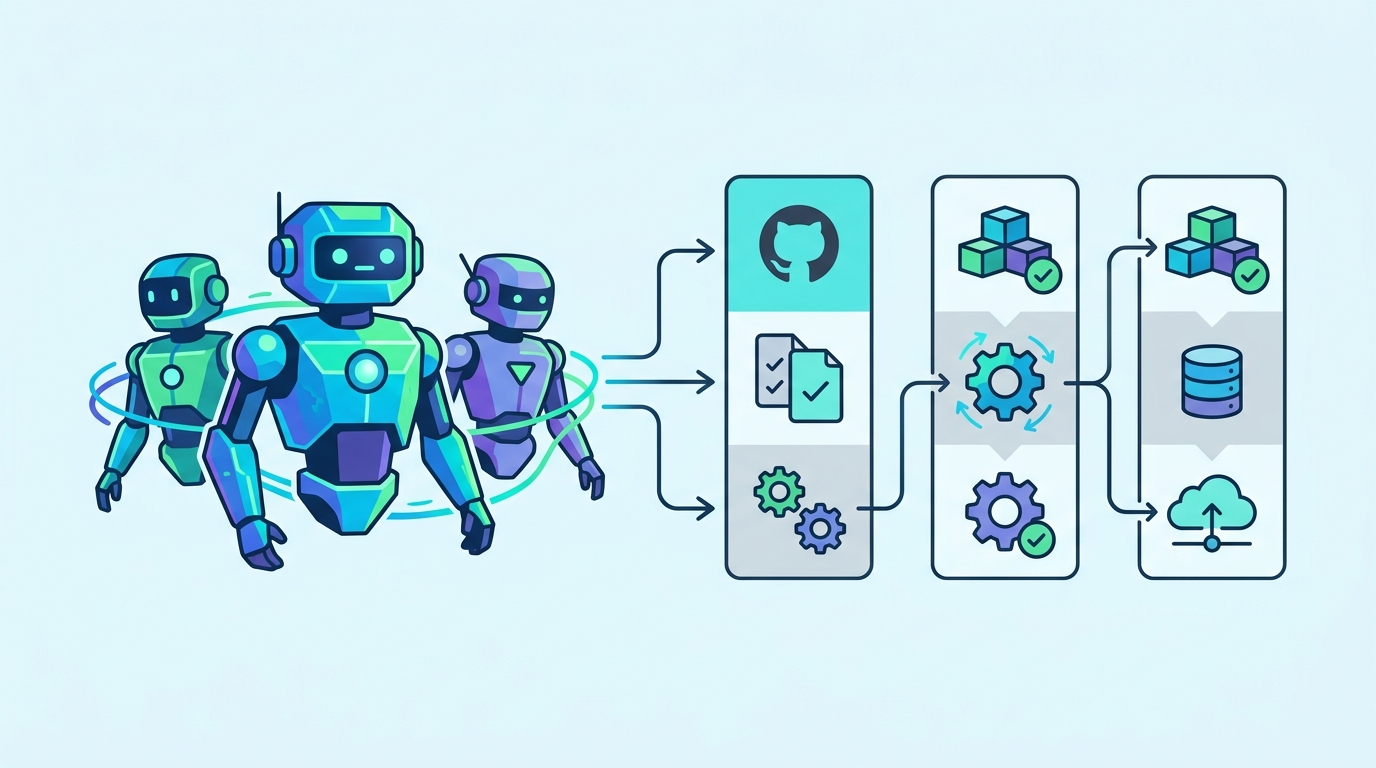

GitHub Agentic Workflows lets teams write markdown automation for AI agents and run it in Actions with guardrails.

GitHub says the system supports 4 AI engines, 10+ event triggers, and 5 security layers. That is enough to make it more than a demo and less than a free-for-all.

| Metric | Value |
|---|---|
| Supported AI engines | 4: GitHub Copilot, Claude, OpenAI Codex, custom |
| Security layers | 5: read-only token, zero secrets, firewall, safe outputs, threat detection |
| Design patterns | 18+ |
| GitHub event triggers | 10+ |
| Safe output types | 8+ |
| Install command | 1: `gh extension install github/gh-aw` |

What GitHub is actually shipping

GitHub Agentic Workflows is GitHub’s attempt to make AI agents part of normal repository automation, not a side experiment. The pitch is simple: write workflow intent in markdown, run it through GitHub Actions, and let an AI agent handle repetitive repo work with tight controls.

The project comes from GitHub Next and Microsoft Research, and it is still in early development. GitHub is explicit about that. The docs warn that agentic workflows can go wrong, even with human supervision, which is a healthy warning for a system that can inspect issues, analyze CI failures, and draft pull requests from scheduled jobs.

What makes this interesting is the format. Instead of asking teams to write more YAML, GitHub wants them to describe intent in markdown. That lowers the barrier for maintainers who already know how to write docs, and it makes workflows easier to review because the logic reads like instructions rather than plumbing.

- Runs AI agents inside GitHub Actions

- Accepts markdown-based workflow definitions

- Supports event-driven and scheduled jobs

- Works with Copilot, Claude, Codex, or a custom engine

The guardrails are the real product

GitHub spends more time on safety than on magic, and that is the right call. The system assumes an agent can be tricked by malicious repository content, compromised tools, or prompt injection, then builds layers around that risk instead of pretending it does not exist.

The five protections are practical, not theoretical: read-only tokens, no secrets inside the agent, a container with a network firewall, safe outputs, and threat detection before anything gets written back to the repo. In other words, the agent can suggest actions, but it cannot freely execute them.

“AI agents can be manipulated into taking unintended actions,” GitHub says in the project’s guardrails section.

That sentence matters because it explains the philosophy behind the whole project. GitHub is not trying to make agents autonomous in the sci-fi sense. It is trying to make them useful inside a permissioned system where the worst outcomes are contained before they hit production code.

The firewall detail is especially worth noting. GitHub describes an Agent Workflow Firewall that routes outbound traffic through a Squid proxy with an explicit domain allowlist, while anything else gets dropped at the kernel level. That is stricter than the usual “be careful with secrets” guidance that shows up in most AI workflow demos.
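
The allowlist idea is easy to picture in code. The sketch below is not GitHub's firewall, which the article says runs through a Squid proxy with kernel-level drops; it only illustrates the decision such a proxy makes for each outbound request. The domain names and the choice to accept subdomains of an allowlisted domain are assumptions made for the example.

```python
from urllib.parse import urlparse

# Hypothetical allowlist for illustration only; the real firewall's
# configuration format is not described in the article.
ALLOWED_DOMAINS = frozenset({"api.github.com", "models.example.test"})


def is_allowed(url, allowed=ALLOWED_DOMAINS):
    """Return True only if the URL's host is an allowlisted domain
    or a subdomain of one (the subdomain rule is an assumption)."""
    host = (urlparse(url).hostname or "").lower()
    return any(host == d or host.endswith("." + d) for d in allowed)


if __name__ == "__main__":
    for candidate in (
        "https://api.github.com/repos/octo/demo",
        "https://api.github.com.evil.test/steal",
    ):
        print(candidate, "->", "allow" if is_allowed(candidate) else "drop")
```

The point of a default-deny check like this is that a lookalike host such as `api.github.com.evil.test` fails the match, so a prompt-injected agent has nowhere to send data.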

- Read-only GitHub token for the agent

- No API keys or write credentials in the agent process

- Allowlisted network access only

- AI scan before writeback

- Scoped write job applies approved actions

How the workflow model compares to old CI

Traditional CI is deterministic. It runs the same steps, in the same order, every time. GitHub Agentic Workflows adds a different layer: continuous AI that can inspect context, decide what matters, and then produce a structured output for a gated job to apply.
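
A rough way to picture that split between the agent and the gated job, under stated assumptions: the agent emits a list of proposed actions, and a separate step keeps only the action types the workflow allows. The type names come from the safe outputs GitHub lists; the `ProposedAction` record and the `gate` function are hypothetical and are not the project's actual data model.

```python
from dataclasses import dataclass

# Safe output type names as listed in the article; everything else
# in this sketch is a hypothetical illustration.
ALLOWED_TYPES = frozenset(
    {"create-issue", "create-pull-request", "add-comment", "add-label"}
)


@dataclass
class ProposedAction:
    type: str      # what the agent wants done, e.g. "add-comment"
    payload: dict  # structured arguments for that action


def gate(actions, allowed_types=ALLOWED_TYPES):
    """Split proposed actions into (approved, rejected) by type.

    Only approved actions would be handed to a job with write access.
    """
    approved, rejected = [], []
    for action in actions:
        target = approved if action.type in allowed_types else rejected
        target.append(action)
    return approved, rejected
```

The agent never holds write credentials in this model. It can only ask, and anything outside the allowed set is dropped before a write job sees it.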

That sounds subtle, but it changes what automation can do. A normal scheduled job might open a report or fail a build. An agentic job can triage issues, summarize repo activity, propose documentation updates, or suggest code cleanup based on what it sees in the repository that day.

The examples GitHub lists make the target audience obvious: maintainers who already live in Issues, Pull Requests, Discussions, and release pages. The gallery includes issue and PR management, continuous documentation, daily code improvement, metrics and analytics, quality and testing, and multi-repository sync.

- 10+ GitHub event triggers, including issues, pull_request, push, schedule, discussion, and label

- 18+ workflow patterns, including IssueOps, ChatOps, DailyOps, and BatchOps

- 8+ safe output types, including create-issue, create-pull-request, add-comment, and add-label

- 1 command to install the CLI extension

Why this matters for maintainers

The practical appeal is not that AI will replace repo automation. It is that the boring parts of maintenance may become easier to describe and easier to review. If a team can write “create a daily status issue, summarize recent activity, and tag it with the right labels,” that is less work than maintaining a custom script and easier to audit than a pile of ad hoc bots.

There is also a nice fit with existing GitHub habits. Teams already use markdown for issues and docs, and they already trust Actions for scheduled work. Putting agent intent into markdown keeps the mental model close to the tools developers already use, which matters more than flashy model names.

The sample workflow in the docs shows the pattern clearly: schedule a daily job, read repository context, generate a report, and create an issue with a title prefix and labels. The workflow stays declarative, while the AI agent handles the messy part of deciding what to summarize.
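
To make the "declarative constraints plus agent judgment" split concrete, here is a minimal sketch. It assumes the workflow fixes a title prefix and a label set up front while the agent supplies only the title and body. `IssueConfig`, `build_issue`, and the sample prefix and labels are invented for illustration and do not reflect the project's real schema.

```python
from dataclasses import dataclass, field


@dataclass
class IssueConfig:
    """Hypothetical declarative settings fixed by the workflow author."""
    title_prefix: str
    labels: list = field(default_factory=list)


def build_issue(config, title, body):
    """Combine the agent's draft with the workflow's fixed constraints.

    The prefix is added only if missing, and labels always come from
    the config rather than from the agent.
    """
    if not title.startswith(config.title_prefix):
        title = config.title_prefix + title
    return {"title": title, "body": body, "labels": list(config.labels)}


if __name__ == "__main__":
    cfg = IssueConfig(title_prefix="[daily-status] ", labels=["report"])
    print(build_issue(cfg, "Repo activity summary", "Drafted by the agent."))
```

Keeping labels and the prefix out of the agent's hands means the resulting issues stay easy to filter, whatever the model decides to write.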

GitHub also points to GitHub Blog coverage and an extension-based setup via the GitHub CLI (`gh extension install github/gh-aw`). That matters because adoption usually hinges on setup friction. If installing and testing the workflow takes minutes rather than a weekend, more teams will try it.

Where this goes next

GitHub Agentic Workflows is best read as a controlled experiment in making AI part of day-to-day repo operations. It is not ready to replace careful CI or human review, and GitHub says so plainly. What it does offer is a cleaner way to express automation that needs context, judgment, and a hard stop before write access.

If GitHub keeps the markdown format simple and the guardrails strong, this could become the default way teams run low-risk AI tasks on repositories. The real test is whether maintainers trust it enough to let an agent file the first issue, draft the first PR, and keep doing it without creating cleanup work for the humans.

For now, the smart move is to treat it like an assistant with a locked toolbox: useful when supervised, dangerous when overtrusted, and worth testing on non-critical workflows before it touches anything important.
