Anthropic adds dreaming to Claude Managed Agents

Anthropic added dreaming, outcomes, and multiagent orchestration to Claude Managed Agents, aiming to improve memory, grading, and teamwork.


Anthropic shipped Claude Managed Agents last month, and the company is already expanding the system with three features that make agents more useful in production. The update matters because it moves Claude from “build an agent” territory into “run an agent team and measure it” territory.

The new additions are dreaming, outcomes, and multiagent orchestration. Dreaming is a memory tool, outcomes is a grading system, and multiagent orchestration splits work across specialist agents.

| Feature | What it does | Status |
|---|---|---|
| Dreaming | Reviews past sessions, finds patterns, and updates memory | Research preview |
| Outcomes | Lets developers define success criteria and grade results | New launch feature |
| Multiagent orchestration | Lets a lead agent delegate work to specialist subagents | New launch feature |
| Launch timing | Claude Managed Agents launched last month; update arrived this week | May 7, 2026 |

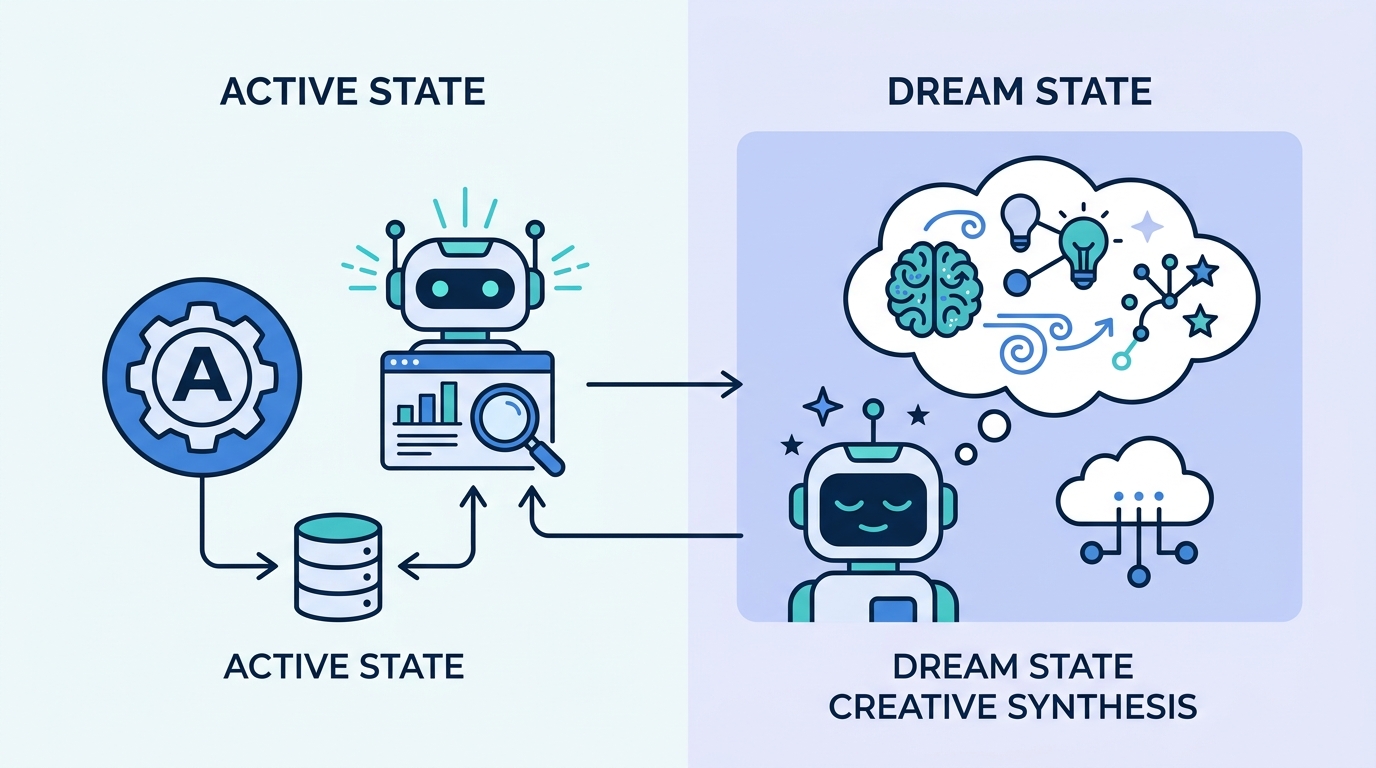

Dreaming gives Claude a memory cleanup pass


Anthropic says dreaming extends Claude’s memory by reviewing past sessions to find patterns and help agents improve over time. In plain English, it is a scheduled review job that looks at what an agent did before, pulls out useful lessons, and updates memory stores so the next run starts smarter.

The interesting part is control. Anthropic says dreaming can update memory automatically, or developers can review the changes before they land. That matters for teams that want agents to learn without letting them rewrite their own behavior unchecked.

Anthropic also ties dreaming directly to the memory system already built into Managed Agents. Memory captures what an agent learns while it works, while dreaming refines that memory between sessions and shares useful patterns across agents.

- Dreaming runs on a schedule, not after every action.

- It reads past sessions and memory stores.

- It can auto-apply changes or wait for human review.

- Anthropic labels it a research preview, so the feature is still experimental.
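To make the review loop concrete, here is a minimal Python sketch of a dreaming-style pass. It is not Anthropic's implementation or API: `Session`, `MemoryStore`, `dream`, `approve`, and the rule "promote a lesson once it recurs in at least two sessions" are all hypothetical, chosen only to show the two modes described above, auto-apply versus holding changes for a human reviewer.

```python
from collections import Counter
from dataclasses import dataclass, field

# Hypothetical data model for illustration; not the Managed Agents API.
@dataclass
class Session:
    session_id: str
    lessons: list[str]  # notes the agent wrote down while working

@dataclass
class MemoryStore:
    entries: set[str] = field(default_factory=set)

def dream(sessions: list[Session], memory: MemoryStore,
          min_count: int = 2, auto_apply: bool = False) -> list[str]:
    """One scheduled review pass over past sessions.

    A lesson that appears in at least `min_count` sessions and is not yet
    in memory becomes a proposed update. With auto_apply=True the updates
    land immediately; otherwise they are returned for human review.
    """
    counts = Counter(lesson for s in sessions for lesson in set(s.lessons))
    proposed = sorted(
        lesson for lesson, n in counts.items()
        if n >= min_count and lesson not in memory.entries
    )
    if auto_apply:
        memory.entries.update(proposed)
        return []  # nothing left for a reviewer
    return proposed  # held until someone approves

def approve(memory: MemoryStore, approved: list[str]) -> None:
    """Human-review path: apply only the changes a reviewer accepted."""
    memory.entries.update(approved)

sessions = [
    Session("s1", ["retry flaky test once", "check deploy log first"]),
    Session("s2", ["check deploy log first"]),
    Session("s3", ["check deploy log first", "retry flaky test once"]),
    Session("s4", ["ping on-call"]),  # seen once, so never promoted
]
memory = MemoryStore()
pending = dream(sessions, memory)             # review mode: nothing applied yet
approve(memory, ["check deploy log first"])  # reviewer accepts one change
```

Calling `dream(sessions, memory, auto_apply=True)` instead would write the remaining lesson straight into memory with no review step, which is exactly the trade-off Anthropic leaves to developers.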

Outcomes turns vague goals into testable targets

Outcomes is the kind of feature developers usually end up building themselves after a few painful iterations. Anthropic lets you define what success looks like with a rubric, then a separate grader evaluates the output against that rubric in its own context window.

That separation matters because the grader does not see the agent’s reasoning chain. In practice, that reduces the chance that the evaluator gets swayed by the agent’s explanation instead of the actual result. If the output misses the mark, the grader points to what needs to change and the agent tries again.
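The grade-and-retry loop is easy to picture in code. The Python sketch below assumes a rubric of named pass/fail checks and a toy agent; none of these names (`Attempt`, `grade`, `run_with_outcome`, `toy_agent`) come from Anthropic's API. What it does show is the separation: `grade` receives only the output and the rubric, never the agent's `reasoning`, and the failed criteria go back to the agent as feedback for the next try.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical names throughout; this is not the Managed Agents API.
@dataclass
class Attempt:
    output: str
    reasoning: str  # the agent's own reasoning; never passed to the grader

Rubric = list[tuple[str, Callable[[str], bool]]]  # (criterion, check)

def grade(output: str, rubric: Rubric) -> list[str]:
    """Grader context: only the output and the rubric. Returns failed criteria."""
    return [name for name, check in rubric if not check(output)]

def run_with_outcome(agent: Callable[[list[str]], Attempt], rubric: Rubric,
                     max_attempts: int = 3) -> tuple[str, int]:
    feedback: list[str] = []
    for attempt_no in range(1, max_attempts + 1):
        attempt = agent(feedback)
        feedback = grade(attempt.output, rubric)  # attempt.reasoning is not passed
        if not feedback:
            return attempt.output, attempt_no
    raise RuntimeError(f"still failing after {max_attempts} attempts: {feedback}")

def toy_agent(feedback: list[str]) -> Attempt:
    # Adds a summary line only after the grader asks for one.
    text = "Root cause: bad config push."
    if "has a summary" in feedback:
        text = "Summary: rollback fixed it.\n" + text
    return Attempt(output=text, reasoning="I am confident this is complete.")

rubric: Rubric = [
    ("names a root cause", lambda out: "Root cause:" in out),
    ("has a summary", lambda out: out.startswith("Summary:")),
]
result, attempts = run_with_outcome(toy_agent, rubric)  # passes on attempt 2
```

In Managed Agents the grader evaluates the output in its own context window rather than running string checks, but the control flow (grade, return the gaps, retry) has the same shape.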

“A separate grader evaluates the output against your criteria in its own context window, so it isn’t influenced by the agent’s reasoning.”

Anthropic also added webhook support for outcomes. That means you can define the target, let the agent run, and get notified when it is done, which is a cleaner fit for asynchronous workflows than babysitting every run in a console.

For teams building internal tools, this is a more practical update than dreaming. Memory is useful, but grading is what turns an agent from a demo into something a product team can trust.

Multiagent orchestration splits work into specialists

The biggest architectural change is multiagent orchestration. Anthropic says a lead agent can break a job into pieces and delegate each part to a specialist with its own model, prompt, and tools. That structure fits messy, multi-part jobs like incident response, support analysis, or code investigation.

Anthropic gives a concrete example: a lead agent can investigate while subagents fan out across deploy history, error logs, metrics, and support tickets. Those specialists work in parallel on a shared filesystem, then feed their findings back into the lead agent’s context.

The company says events are persistent, so the lead agent can check back in mid-workflow and every agent remembers what it has done. That persistence is what makes the system feel closer to a real workflow engine than a one-shot chatbot.

- Lead agent: coordinates the task and keeps the overall context.

- Specialist agents: focus on logs, metrics, deploy history, or tickets.

- Shared filesystem: lets agents contribute to the same working set.

- Persistent events: let the lead agent resume and check progress later.
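The pattern in that list can be sketched in a few dozen lines of Python. Everything here is a hypothetical stand-in, not Anthropic's orchestration API: the `specialist` function, the per-topic text files, and the `events.jsonl` log are assumptions. Each specialist writes its finding to a shared directory and appends a "done" event to a persistent log, and the lead agent reads that log first, so a second call skips work that already finished.

```python
import json
import tempfile
import threading
from concurrent.futures import ThreadPoolExecutor
from pathlib import Path

# Hypothetical sketch: names and file layout are illustrative only.
TOPICS = ["deploy_history", "error_logs", "metrics", "support_tickets"]
_LOG_LOCK = threading.Lock()  # serialize appends from parallel specialists

def log_event(log: Path, agent: str, event: str) -> None:
    """Append one event to the persistent JSON-lines log."""
    with _LOG_LOCK, log.open("a") as f:
        f.write(json.dumps({"agent": agent, "event": event}) + "\n")

def completed_agents(log: Path) -> set[str]:
    """Read back which specialists have already finished."""
    if not log.exists():
        return set()
    events = [json.loads(line) for line in log.read_text().splitlines() if line]
    return {e["agent"] for e in events if e["event"] == "done"}

def specialist(topic: str, workdir: Path, log: Path) -> None:
    # Placeholder analysis; a real specialist would have its own model,
    # prompt, and tools.
    finding = f"{topic}: no anomalies found"
    (workdir / f"{topic}.txt").write_text(finding)  # shared filesystem
    log_event(log, topic, "done")

def lead_agent(workdir: Path) -> dict[str, str]:
    log = workdir / "events.jsonl"
    done = completed_agents(log)  # resume: skip work that already finished
    pending = [t for t in TOPICS if t not in done]
    with ThreadPoolExecutor() as pool:
        futures = [pool.submit(specialist, t, workdir, log) for t in pending]
        for fut in futures:
            fut.result()  # re-raise any specialist error in the lead
    # Pull every finding from the shared working set into the lead's context.
    return {t: (workdir / f"{t}.txt").read_text() for t in TOPICS}

with tempfile.TemporaryDirectory() as tmp:
    workdir = Path(tmp)
    first = lead_agent(workdir)   # fans out to all four specialists
    second = lead_agent(workdir)  # every specialist already logged "done"
    n_events = len((workdir / "events.jsonl").read_text().splitlines())
```

Threads stand in for independent agents here. The point is the shape of the workflow (parallel specialists, a shared working set, a durable event log), not how Anthropic actually schedules subagents.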

Anthropic says companies like Netflix are already using Claude Managed Agents, including multiagent orchestration for platform work. That does not prove every team needs this setup, but it does show the feature is aimed at real operational workloads, not toy demos.

Why this update matters for agent builders

Claude Managed Agents launched last month, and this week’s update tells you how Anthropic wants developers to think about agents in production. The company is pushing in three directions at once: longer memory across sessions, clearer success criteria, and division of labor across multiple agents.

That combination is more interesting than any single feature. Dreaming helps agents remember better, outcomes helps teams measure better, and orchestration helps them tackle larger tasks without stuffing everything into one prompt.

There is also a clear product signal here. Anthropic is not treating agents as a side feature inside Claude. It is building the plumbing for teams that want persistent, testable, multi-step automation with less manual glue code.

If you are building on Claude, the next question is simple: do you need a smarter solo agent, or do you need a system of agents with memory, grading, and delegation? Anthropic is betting that a lot of real work needs the second option, and this update makes that bet harder to ignore.
