How Cloudflare runs AI code review at scale

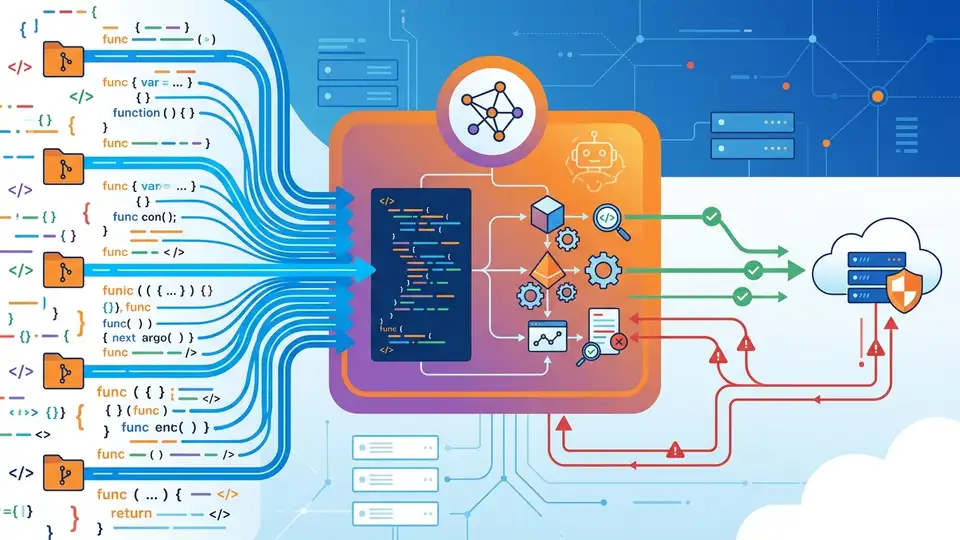

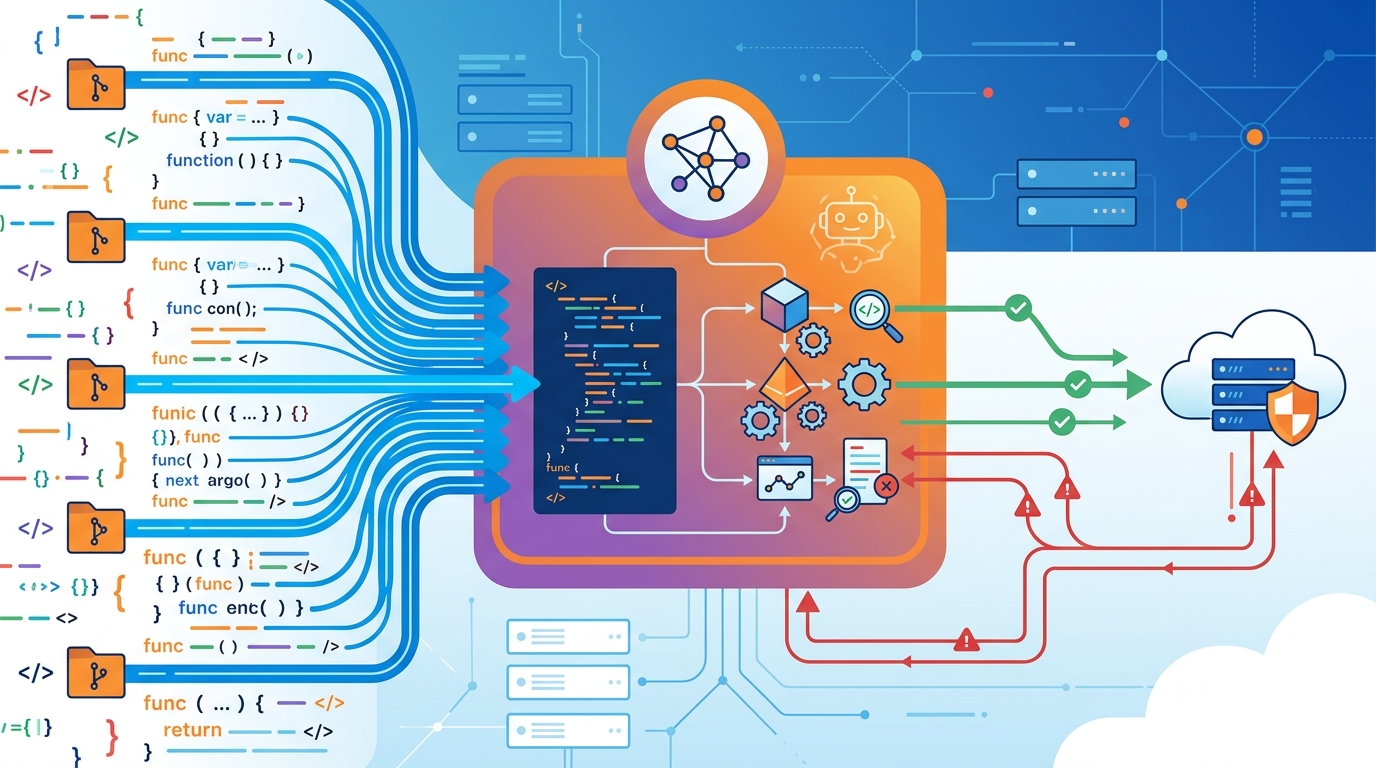

Cloudflare built a CI-native AI review system that scans merge requests with up to seven specialist agents.

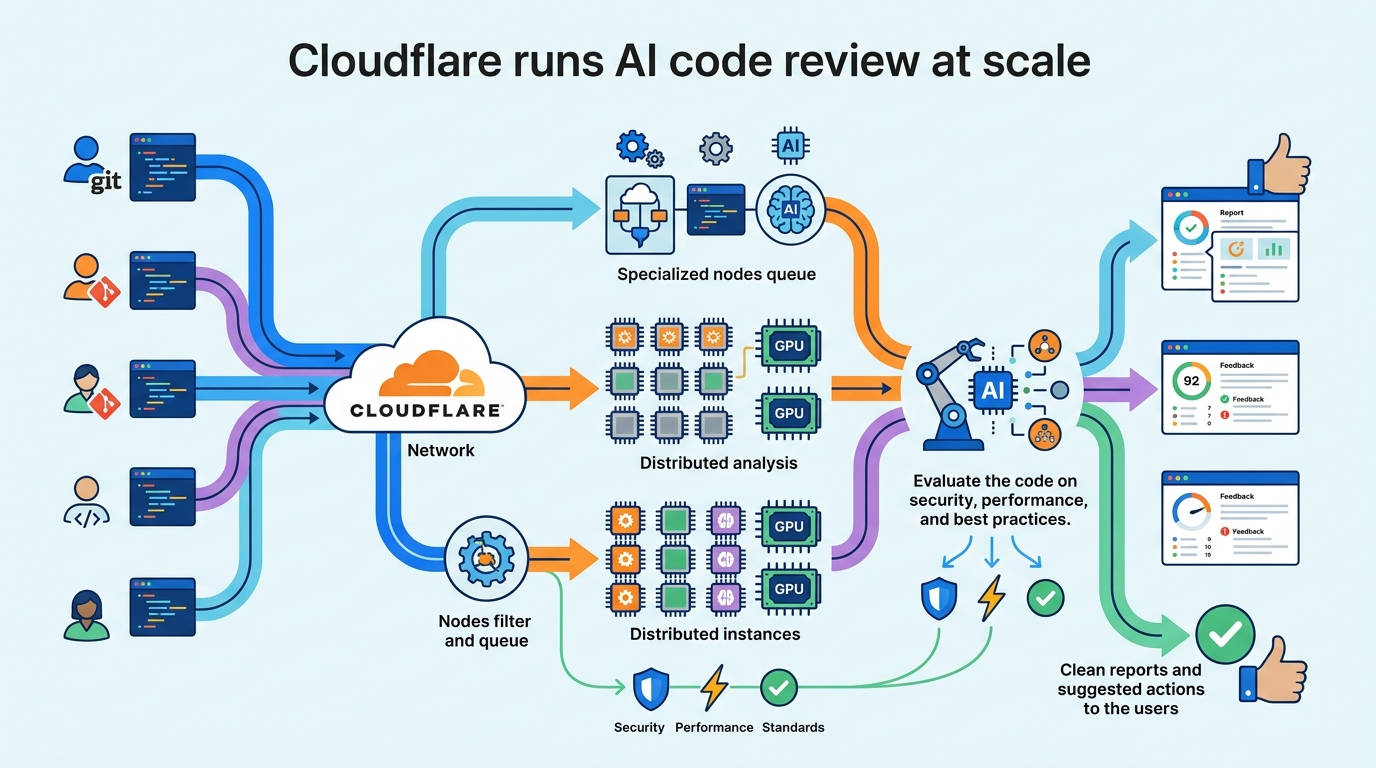

Cloudflare says its internal review system now processes tens of thousands of merge requests and replaces the usual single-model prompt with a coordinator plus specialist agents. The company built it because first-review waits were often measured in hours, and generic AI comments were too noisy to trust.

| Metric | Value | Why it matters |
|---|---|---|
| Specialist reviewers | Up to 7 | Splits security, quality, docs, release, and policy checks |
| Internal pull requests upstream | 45+ | Shows Cloudflare is actively shaping OpenCode |
| Buffering interval | 100 lines or 50 ms | Limits disk churn in the streaming pipeline |
| Heartbeat interval | 30 seconds | Prevents users from thinking the job froze |
| Heap cap | 2.5 GB | Protects the coordinator process from runaway memory use |

Why Cloudflare rejected the one-big-prompt approach

The first version of AI review looked like what most teams try: feed the diff to a model and ask for bugs. Cloudflare found the output noisy, repetitive, and full of false alarms, including hallucinated syntax errors and generic advice to add error handling that the code already had.

That failure matters because code review is already a bottleneck. A merge request waits, a human reviewer switches context, and the author ends up in a back-and-forth over small issues before anyone gets to the real risk. If an AI reviewer adds more noise than signal, it slows the queue instead of clearing it.

Cloudflare’s answer was to stop treating review as a single-prompt problem and to treat it as an orchestration problem. The system now runs inside CI, uses Cloudflare AI Gateway for model routing, and relies on OpenCode as the core coding agent rather than building a custom monolith.

- The system supports GitLab today, with room for other VCS providers later.

- It isolates provider-specific logic so the GitLab plugin does not care about AI routing.

- It keeps internal policy checks separate from model selection.

- It posts one structured review comment after deduplication and severity scoring.
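
The last point, deduplication followed by severity scoring, can be sketched in a few lines. This is a minimal illustration, not Cloudflare's implementation: the `Finding` shape, the three severity levels, and the choice to treat findings on the same file and line as duplicates are all assumptions made for the example.

```typescript
// Hypothetical finding shape; the field names are assumptions, not Cloudflare's schema.
type Severity = "critical" | "major" | "minor";

interface Finding {
  reviewer: string; // which specialist produced it, e.g. "security" or "docs"
  file: string;
  line: number;
  severity: Severity;
  message: string;
}

// Lower rank means more severe.
const SEVERITY_RANK: Record<Severity, number> = { critical: 0, major: 1, minor: 2 };

// Collapse findings that point at the same file and line (an assumed duplicate rule),
// keeping the most severe one, then order the survivors from most to least severe.
function dedupe(findings: Finding[]): Finding[] {
  const byLocation = new Map<string, Finding>();
  for (const f of findings) {
    const key = `${f.file}:${f.line}`;
    const existing = byLocation.get(key);
    if (!existing || SEVERITY_RANK[f.severity] < SEVERITY_RANK[existing.severity]) {
      byLocation.set(key, f);
    }
  }
  return [...byLocation.values()].sort(
    (a, b) => SEVERITY_RANK[a.severity] - SEVERITY_RANK[b.severity],
  );
}

// Render a single comment body from everything the specialists reported.
function renderComment(findings: Finding[]): string {
  const unique = dedupe(findings);
  if (unique.length === 0) return "No issues found.";
  return unique
    .map((f) => `- [${f.severity}] ${f.file}:${f.line} (${f.reviewer}): ${f.message}`)
    .join("\n");
}
```

A real system would likely need a smarter duplicate rule than file and line, since two reviewers can describe the same problem at slightly different locations.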

The plugin model is the real design choice

The smartest part of the setup is the plugin boundary. Each plugin owns one job: GitLab integration, AI provider configuration, internal compliance checks, observability, AGENTS.md validation, remote model overrides, and telemetry. That separation keeps the system from turning into a pile of cross-dependencies that only one engineer understands.

Cloudflare also made the lifecycle explicit. Bootstrap hooks run concurrently and can fail without killing the review. Configure hooks run sequentially and are fatal when a required dependency breaks. Post-configure hooks handle async work such as pulling remote overrides. That is a very practical way to keep a CI system predictable when dozens of repositories depend on it.
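
The three phases described above could be wired up roughly as follows. This is a sketch under stated assumptions: the `Plugin` interface and `runLifecycle` function are hypothetical names, and running post-configure hooks concurrently is a guess, since the description only says they handle async work.

```typescript
// Hypothetical plugin shape; hook names mirror the three phases described above.
interface Plugin {
  name: string;
  bootstrap?: () => Promise<void>; // runs concurrently, failure is tolerated
  configure?: () => Promise<void>; // runs sequentially, failure is fatal
  postConfigure?: () => Promise<void>; // async follow-up work such as remote overrides
}

// Runs all three phases and returns warnings collected from failed bootstrap hooks.
async function runLifecycle(plugins: Plugin[]): Promise<string[]> {
  const warnings: string[] = [];

  // Phase 1: start every bootstrap hook at once and record failures instead of throwing.
  const results = await Promise.allSettled(
    plugins.map(async (p) => {
      await p.bootstrap?.();
    }),
  );
  results.forEach((r, i) => {
    if (r.status === "rejected") {
      warnings.push(`${plugins[i].name}: bootstrap failed (${String(r.reason)})`);
    }
  });

  // Phase 2: configure hooks run one at a time; a rejection propagates and aborts the run.
  for (const p of plugins) {
    await p.configure?.();
  }

  // Phase 3: post-configure hooks (run concurrently here, which is an assumption).
  await Promise.all(plugins.map((p) => p.postConfigure?.()));

  return warnings;
}
```

`Promise.allSettled` is what makes the bootstrap phase tolerant: it waits for every hook and reports each outcome, whereas the plain `await` in the configure loop lets the first failure stop everything.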

"The architecture: plugins all the way to the moon" — Ryan Skidmore, Cloudflare

The Cloudflare plugin model also keeps the final config assembly controlled. Plugins contribute through a context API, and the core system merges those contributions into the opencode.json file that OpenCode reads. No plugin gets direct access to the final object, which lowers the odds of one extension breaking another in subtle ways.
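
One way to picture that controlled assembly is a context object that accepts contributions while the merged result stays private to the core. The names here (`PluginContext`, `contribute`, `assembleConfig`) and the section keys are hypothetical, and the real opencode.json schema is not reproduced.

```typescript
// Hypothetical config and context shapes, used only for illustration.
type Config = Record<string, unknown>;

interface PluginContext {
  contribute(section: string, values: Record<string, unknown>): void;
}

// Plugins receive only the context; the merged sections live in this closure.
function assembleConfig(plugins: Array<(ctx: PluginContext) => void>): Config {
  const sections = new Map<string, Record<string, unknown>>();

  const ctx: PluginContext = {
    contribute(section, values) {
      // Shallow-merge into the section; later contributions win on key conflicts
      // (an assumed rule, the article does not describe conflict handling).
      sections.set(section, { ...(sections.get(section) ?? {}), ...values });
    },
  };

  for (const plugin of plugins) plugin(ctx);
  return Object.fromEntries(sections);
}
```

For example, a provider plugin might call `ctx.contribute("provider", { gateway: "g" })` and a second plugin might add `{ model: "m" }` to the same section. Neither ever holds a reference to the final object.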

What the coordinator actually does

Cloudflare runs Bun as the process wrapper and starts the coordinator as a child process. It sends the prompt through stdin rather than as a command-line argument, which avoids the Linux ARG_MAX limit when merge requests get huge. Output comes back as JSONL, so the system can read and act on each event as it arrives.

That choice sounds boring until you think about failure modes. A normal JSON blob needs to close cleanly, which is a pain if a process crashes or gets killed mid-review. JSONL lets the pipeline keep moving line by line, which is much easier to debug and much safer for long-running jobs in CI.
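
The line-by-line property is easy to see in code. The decoder below is a minimal sketch, assuming a hypothetical `ReviewEvent` shape with a `type` field. It accepts raw output chunks, returns every complete event, and holds back a partial trailing line until the rest arrives.

```typescript
// Hypothetical event shape; the real event fields are not specified in the article.
type ReviewEvent = { type: string; [key: string]: unknown };

class JsonlDecoder {
  private pending = ""; // text after the last newline seen so far

  // Feed a raw chunk of output; returns every complete event it contained.
  push(chunk: string): ReviewEvent[] {
    this.pending += chunk;
    const lines = this.pending.split("\n");
    // The last piece has no terminating newline yet, so keep it for the next chunk.
    this.pending = lines.pop() ?? "";

    const events: ReviewEvent[] = [];
    for (const line of lines) {
      if (line.trim() === "") continue;
      try {
        events.push(JSON.parse(line) as ReviewEvent);
      } catch {
        // A malformed line is skipped; events parsed earlier are unaffected.
      }
    }
    return events;
  }
}
```

If the child process dies mid-line, only the unfinished line in `pending` is lost. With a single JSON document, the same crash would leave nothing parseable.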

The coordinator watches for step events, token usage, errors, and truncation. If a model hits its token limit and returns with reason: "length", the system retries. While the model produces no output, the coordinator prints a heartbeat line every 30 seconds so engineers do not assume the job is stuck.

- Review output is buffered and flushed every 100 lines or 50 ms.

- Token usage is tracked from step finish events.

- Retries kick in when output is truncated.

- Heartbeat logs reduce false cancellation by users.
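
The thresholds in this list translate into a few small decisions. The sketch below uses the article's numbers (100 lines, 50 ms, 30 seconds, and the "length" reason), but the class and function names are hypothetical. To keep it deterministic, the clock is passed in as a parameter and the time check runs only when a line is added. A real pipeline would presumably also flush from a timer.

```typescript
const MAX_LINES = 100; // flush once this many lines are buffered
const MAX_DELAY_MS = 50; // or once the oldest buffered line is this old
const HEARTBEAT_MS = 30_000; // silence threshold before a heartbeat line

class LineBuffer {
  private lines: string[] = [];
  private firstLineAt: number | null = null;
  private readonly write: (batch: string[]) => void;

  constructor(write: (batch: string[]) => void) {
    this.write = write;
  }

  // Add a line with the current time in milliseconds; flushes when either limit is hit.
  add(line: string, now: number): void {
    if (this.firstLineAt === null) this.firstLineAt = now;
    this.lines.push(line);
    if (this.lines.length >= MAX_LINES || now - this.firstLineAt >= MAX_DELAY_MS) {
      this.flush();
    }
  }

  // Hand the buffered lines to the writer in one batch and reset.
  flush(): void {
    if (this.lines.length === 0) return;
    this.write(this.lines);
    this.lines = [];
    this.firstLineAt = null;
  }
}

// True when the job has been silent long enough to warrant a heartbeat line.
function heartbeatDue(lastOutputAt: number, now: number): boolean {
  return now - lastOutputAt >= HEARTBEAT_MS;
}

// True when the model stopped because it ran out of tokens, per the "length" reason.
function shouldRetry(finishReason: string): boolean {
  return finishReason === "length";
}
```

Batching this way trades at most 50 ms of latency for far fewer writes, which matches the stated goal of limiting disk churn.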

Why specialist reviewers beat one generalist

Cloudflare’s agents are narrow on purpose. The security reviewer only flags issues that are exploitable or concretely dangerous. The performance reviewer looks for real regressions. The documentation reviewer checks whether the change leaves future maintainers confused. The compliance reviewer checks against internal Engineering Codex rules. That kind of scoping is what keeps the system from producing the usual AI-review mush.

This is also where the scale story becomes interesting. The company says the system now runs across tens of thousands of merge requests, approves clean code, catches serious bugs, and blocks merges when it sees security issues that matter. That is a much higher bar than “summarize the diff” or “leave some helpful comments.” It is closer to a gatekeeper than a note-taker.

Cloudflare’s own numbers suggest the team is serious about keeping the stack maintainable too. Engineers have already landed more than 45 upstream pull requests in OpenCode, which means the review system is not a one-off internal hack. It is tied to an open-source project that Cloudflare can inspect, extend, and repair when the workflow changes.

What this means for teams building their own review bots

The lesson here is simple: AI code review works better when the product is an orchestration layer, not a giant prompt. Teams that want this to work at scale need clear plugin boundaries, structured output, good observability, and a way to separate policy from model behavior. Without those pieces, the reviewer becomes another source of friction.

Cloudflare’s setup also shows that the hard part is operational, not just linguistic. You need retry logic, memory caps, streaming logs, provider abstraction, and a way to keep engineers informed while a model thinks. That is a very different job from asking an LLM to summarize a diff and hoping for the best.

The most useful takeaway is that AI review can earn a place in CI only if it behaves like infrastructure. If your team is considering a similar system, the question is not whether a model can read code. The question is whether your pipeline can turn model output into dependable decisions without flooding engineers with noise.

Conclusion: the winning pattern is orchestration, not magic

Cloudflare’s system is a strong signal for where AI-assisted review is heading in large engineering orgs: more specialization, more structured control, and less trust in a single general-purpose prompt. The next step for teams is to ask a practical question, not a hype question: which parts of review should be automated, and which ones still need a human in the loop?

