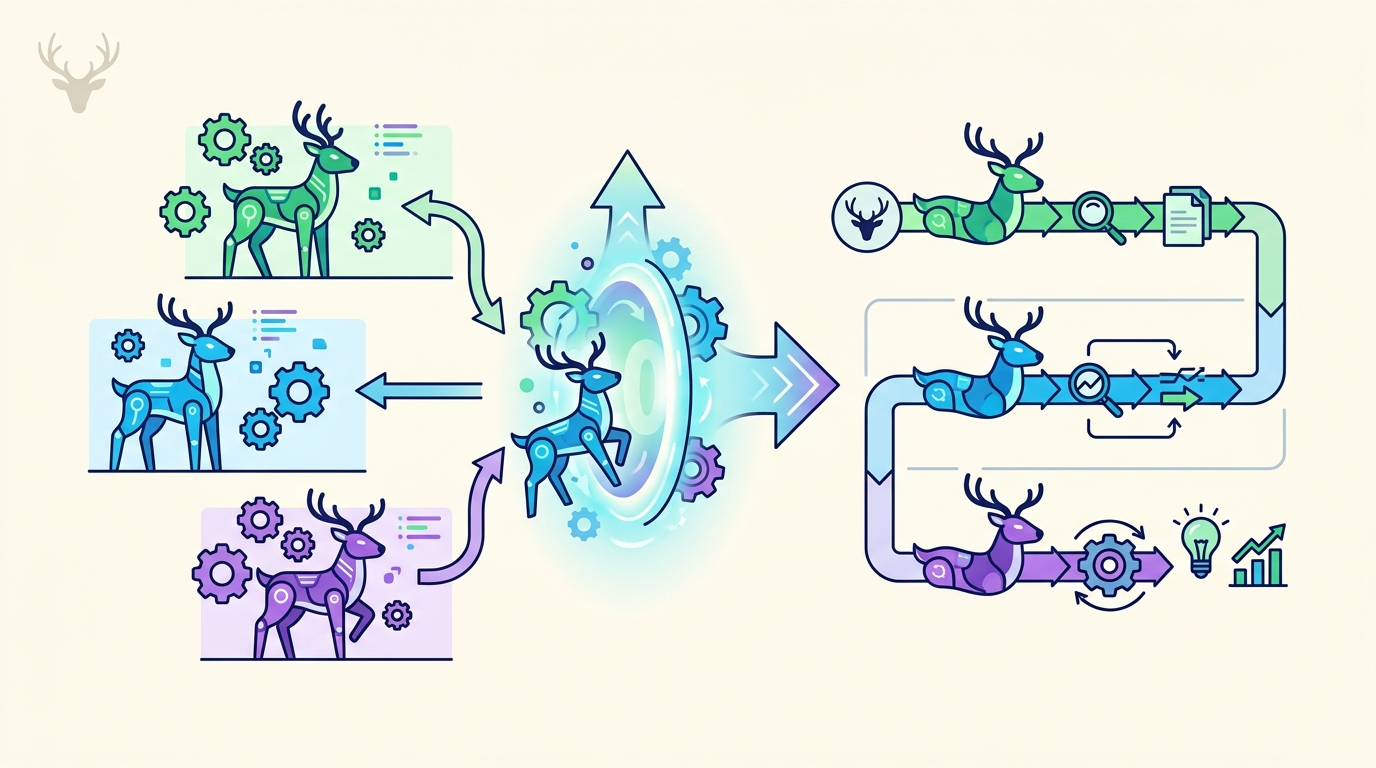

DeerFlow 2.0 turns agents into workflows

DeerFlow 2.0 is an open-source AI agent orchestration framework that splits complex work across sub-agents, sandboxes, and memory.


DeerFlow hit GitHub Trending at number one, and the timing makes sense. The project’s 2.0 rewrite is built for long-running tasks, not quick chat replies, with sandboxed execution, persistent memory, and a workflow model that behaves more like a project manager than a prompt wrapper.

That matters because a lot of agent demos still collapse the moment a task gets messy. DeerFlow tries to keep the process intact when the work stretches across research, code, files, and tool use, which is exactly where most “agent” tools start to wobble.

| Feature | What DeerFlow 2.0 does | Why it matters |
|---|---|---|
| Version | 2.0 rewrite | Reworks the agent flow from the ground up |
| License | MIT | Free to use, modify, and ship |
| Execution | AioSandboxProvider in /mnt/user-data | Isolates file and shell actions |
| Model support | OpenAI-compatible LLMs | Works with multiple providers |
| Memory | Persistent local profile | Keeps preferences across sessions |

What DeerFlow actually is

DeerFlow is an open-source AI agent orchestration framework built to manage long-horizon work. Instead of sending one model a giant prompt and hoping it keeps track of everything, DeerFlow splits the task into smaller jobs, assigns those jobs to sub-agents, and then combines the results into one output.

That design is a better fit for research-heavy and execution-heavy work. If you want a system that can read sources, compare findings, write code, and keep track of intermediate state, DeerFlow is aiming at that exact problem.
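The split-and-combine pattern described above can be sketched in a few lines of Python. This is an illustration only, not DeerFlow's code: `plan`, `run_sub_agent`, and `lead_agent` are hypothetical names, and the sub-agents return canned strings where a real system would call a model and tools.

```python
import asyncio


def plan(goal: str) -> list[tuple[str, str]]:
    """Hypothetical planner: a real lead agent would ask a model to decompose the goal."""
    return [
        ("researcher", f"collect sources for {goal}"),
        ("analyst", f"compare findings for {goal}"),
        ("writer", f"draft a summary of {goal}"),
    ]


async def run_sub_agent(name: str, task: str) -> dict:
    """Hypothetical sub-agent: stands in for an LLM call plus tool use."""
    await asyncio.sleep(0)  # yield control, standing in for I/O-bound work
    return {"agent": name, "task": task, "result": f"{name} finished: {task}"}


async def lead_agent(goal: str) -> str:
    jobs = plan(goal)
    # Fan out: run all sub-agents concurrently.
    results = await asyncio.gather(*(run_sub_agent(n, t) for n, t in jobs))
    # Fan in: gather() preserves input order, so results line up with the plan.
    return "\n".join(r["result"] for r in results)


def run(goal: str) -> str:
    return asyncio.run(lead_agent(goal))


if __name__ == "__main__":
    print(run("vector databases"))
```

The useful property here is that each sub-agent sees only its own task, so a failure or a noisy tool call in one branch does not pollute the context of the others.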

The project also leans hard into practical controls. It uses a secure sandbox, supports custom skills, and can keep local memory without forcing everything into a cloud-only workflow.

- Modular .skill files define workflows in Markdown.

- A lead agent breaks work into parallel sub-agents.

- Each task runs in an isolated container with its own filesystem.

- Intermediate steps get summarized and compressed to save context.

- Local memory keeps user preferences across sessions.
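To make the local-memory idea concrete, here is a minimal sketch of a preference store that survives across sessions by writing to a JSON file. The `LocalMemory` class and its file layout are assumptions made for illustration; the article does not describe how DeerFlow stores its profile.

```python
import json
from pathlib import Path
from typing import Optional


class LocalMemory:
    """Hypothetical key-value preference store backed by a single JSON file."""

    def __init__(self, path: Path) -> None:
        self.path = path
        # Load whatever an earlier session saved, or start empty.
        self.data = json.loads(path.read_text()) if path.exists() else {}

    def remember(self, key: str, value: str) -> None:
        self.data[key] = value
        self.path.parent.mkdir(parents=True, exist_ok=True)
        # Write through on every update so a crash loses nothing.
        self.path.write_text(json.dumps(self.data, indent=2))

    def recall(self, key: str, default: Optional[str] = None) -> Optional[str]:
        return self.data.get(key, default)
```

A second `LocalMemory` instance pointed at the same path plays the role of a new session: it reads the file on startup and sees whatever the previous session stored, all without leaving the local machine.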

Why the 2.0 rewrite matters

The most interesting part of DeerFlow is not the feature list. It is the fact that version 2.0 is described as a ground-up rewrite, which usually means the maintainers ran into the same wall that hits most agent systems: context gets messy, tools get noisy, and long tasks become hard to trust.

DeerFlow attacks that problem with aggressive context management. It summarizes finished steps, moves intermediate data to disk, and trims irrelevant history so the agent does not drown in its own conversation log. That is the sort of engineering detail that sounds boring until you try to run an agent for more than a few minutes.
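The three moves in that paragraph (summarize finished steps, spill intermediate data to disk, trim irrelevant history) can be sketched as a single compaction pass. Everything below is a hypothetical illustration: `Step`, `summarize`, and `compact` are invented names, and the summarizer simply truncates text where a real system would likely call a model.

```python
import json
from dataclasses import dataclass
from pathlib import Path


@dataclass
class Step:
    name: str
    output: str            # full intermediate output, possibly large
    done: bool = False
    relevant: bool = True  # set False for history that can be dropped


def summarize(text: str, limit: int = 80) -> str:
    """Stand-in summarizer: plain truncation instead of an LLM call."""
    return text if len(text) <= limit else text[:limit].rstrip() + "..."


def compact(steps: list[Step], spill_dir: Path, keep_recent: int = 2) -> list[str]:
    """Build the context the model sees on its next turn."""
    spill_dir.mkdir(parents=True, exist_ok=True)
    context = []
    cutoff = len(steps) - keep_recent  # steps at or after this index stay verbatim
    for i, step in enumerate(steps):
        if not step.relevant:
            continue  # trim irrelevant history entirely
        if step.done and i < cutoff:
            # Finished and old: move the full output to disk, keep a summary.
            path = spill_dir / f"{i:03d}-{step.name}.json"
            path.write_text(json.dumps({"name": step.name, "output": step.output}))
            context.append(
                f"[{step.name}] {summarize(step.output)} (full output: {path.name})"
            )
        else:
            context.append(f"[{step.name}] {step.output}")
    return context
```

The summary keeps a pointer to the spilled file, so an agent that later needs the full output can read it back from disk instead of carrying it in every prompt.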

“The future of AI is not just about scaling models, but also about building systems around them that can handle complex tasks reliably.” — Demis Hassabis, Google DeepMind

The quote is attributed to Hassabis's 2024 Nobel Prize lecture, and it fits DeerFlow better than most marketing copy. The framework is trying to make the system around the model do more of the heavy lifting, which is where a lot of practical value lives right now.

Features that matter in real use

DeerFlow’s feature set reads like a checklist for people who have already been burned by brittle agent demos. The framework supports any OpenAI-compatible LLM, accepts custom tools through MCP servers or Python functions, and includes built-in web search, file operations, and bash execution.
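As a rough illustration of what "OpenAI-compatible" plus "Python functions as tools" implies, the sketch below turns an ordinary function into the `tools` entry used by OpenAI-style chat completion requests and builds a request body around it. The helpers `tool_spec` and `chat_request`, the example tool `word_count`, and the model name are all hypothetical; this is not DeerFlow's registration API, which the article does not show.

```python
import inspect
import json

# Maps Python annotations to JSON Schema type names.
_JSON_TYPES = {str: "string", int: "integer", float: "number", bool: "boolean"}


def tool_spec(func) -> dict:
    """Describe a Python function in the OpenAI-style "tools" format."""
    properties, required = {}, []
    for name, param in inspect.signature(func).parameters.items():
        # Unannotated or unknown types fall back to "string".
        properties[name] = {"type": _JSON_TYPES.get(param.annotation, "string")}
        if param.default is inspect.Parameter.empty:
            required.append(name)
    return {
        "type": "function",
        "function": {
            "name": func.__name__,
            "description": inspect.getdoc(func) or "",
            "parameters": {
                "type": "object",
                "properties": properties,
                "required": required,
            },
        },
    }


def word_count(text: str, min_length: int = 1) -> int:
    """Count words in text that have at least min_length characters."""
    return sum(1 for w in text.split() if len(w) >= min_length)


def chat_request(model: str, prompt: str, tools: list) -> dict:
    """Body for POST {base_url}/chat/completions on an OpenAI-compatible server."""
    return {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "tools": [tool_spec(t) for t in tools],
    }


if __name__ == "__main__":
    print(json.dumps(chat_request("my-model", "How long is this?", [word_count]), indent=2))
```

Because the request body is plain JSON in a widely copied shape, switching providers mostly comes down to changing the base URL and model name, which is the practical meaning of "OpenAI-compatible" here.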

DeerFlow also has a few integration points that make it more interesting than a pure research project. You can drive it from the terminal through Claude Code with the claude-to-deerflow skill, or call the embedded DeerFlowClient directly from Python without HTTP overhead.

- Claude Code integration: control tasks from the CLI with streaming responses.

- Embedded Python client: call agent features directly inside an app.

- Universal model support: works with OpenAI-compatible endpoints.

- Tool extensibility: add custom APIs, local files, or MCP-based tools.

That combination makes DeerFlow feel closer to an orchestration layer than a chatbot. It is trying to coordinate work across tools, memory, and sub-agents while staying flexible enough for local and self-hosted setups.

How it compares with simpler agent tools

Most agent tools still optimize for a single interaction loop: prompt, response, maybe one tool call, then stop. DeerFlow is built for tasks that take minutes or hours, where the system has to remember what happened earlier and keep the work separated enough to stay understandable.

Here is the practical difference:

- Single-agent chat tools are good for fast answers and lightweight tool use.

- DeerFlow is better suited for research, codebases, and multi-step workflows.

- Closed agent platforms often hide how work gets done.

- DeerFlow keeps the workflow visible, modular, and editable through skills.

That does come with tradeoffs. A system like this asks you to think more like an operator than a casual chatbot user. You need to care about skills, tools, sandbox boundaries, and memory behavior. In return, you get a framework that can be shaped around real work instead of a demo script.

There is also a broader signal here. Open-source agent frameworks are moving from novelty toward infrastructure, and DeerFlow is one of the clearer examples of that shift. The project is not trying to be everything for everyone. It is trying to make long-running AI work less fragile.

What developers should watch next

If DeerFlow keeps its momentum, the real question is whether the community builds useful skills around it fast enough to make the framework stick. The core ideas are already strong: isolated execution, persistent memory, modular skills, and multi-agent orchestration.

For developers, the immediate takeaway is simple. If your current agent setup falls apart once the task grows beyond a few tool calls, DeerFlow is worth a look. The next test is whether teams can use it to ship reliable workflows, not just impressive demos.

That is the metric that matters now: not how clever the agent looks in a notebook, but whether it can keep its head when the work gets long, messy, and real.
