Marginlab Tracks Claude Code Opus 4.6 Drift

Marginlab’s daily tracker watches Claude Code Opus 4.6 on 50 SWE-Bench-Pro tasks and flags statistically significant drops.

Marginlab has built a daily tracker for Claude Code running Opus 4.6, and the setup is refreshingly concrete: 50 benchmark cases every day, with weekly and monthly rollups for a steadier read. The point is simple and useful for anyone watching agent quality closely: catch statistically significant drops before they become user complaints.

This matters because coding agents can look fine on a single demo and still drift in real use. Marginlab’s tracker focuses on a curated subset of SWE-Bench-Pro, runs directly in the Claude Code CLI, and avoids custom harnesses so the numbers reflect what users actually experience.

What the tracker measures every day

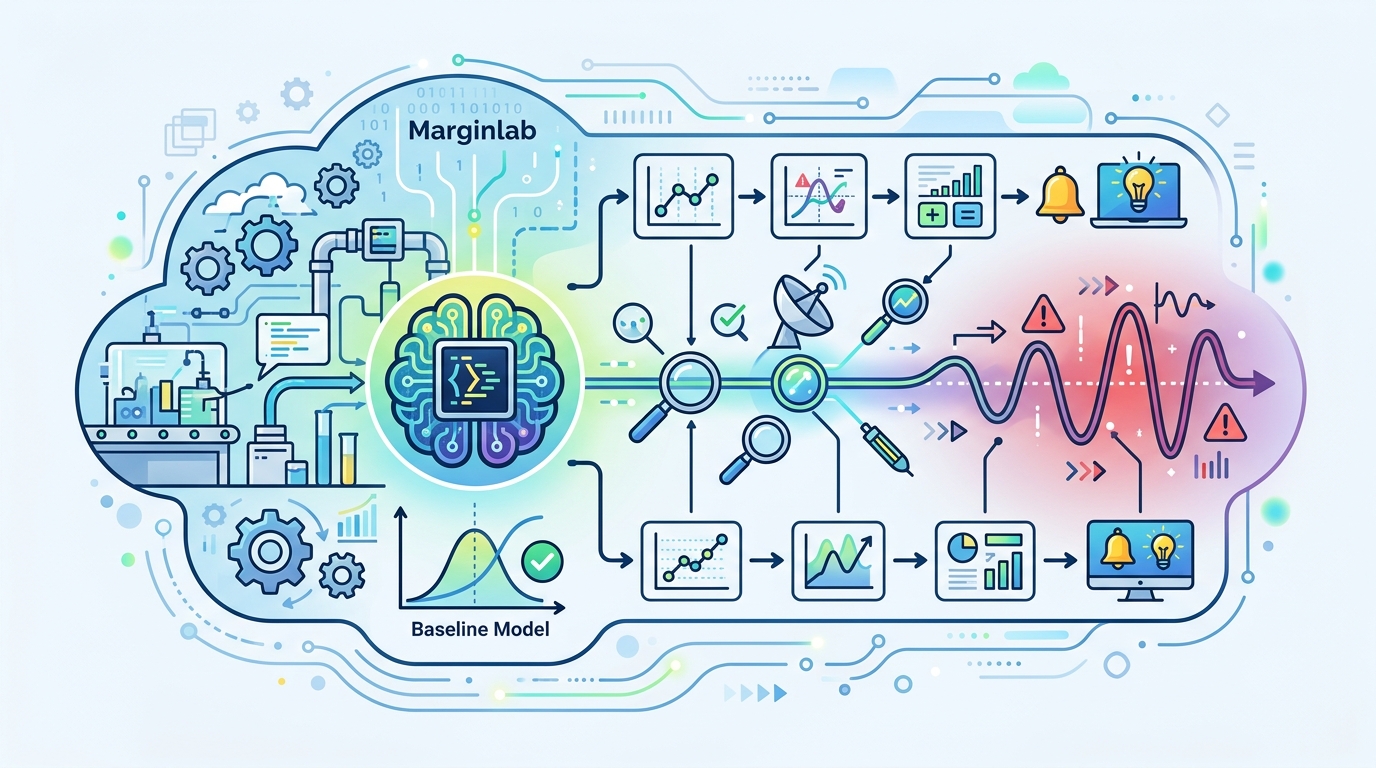

The dashboard is built around pass rate, but it also exposes the operational signals that usually explain performance changes. That includes input tokens, output tokens, API cost, average runtime per instance, and total tool calls during the daily run.

That mix is smart. If pass rate slips while tool calls spike, you may be looking at a model that is thrashing through more actions to solve the same tasks. If runtime rises without a matching token jump, the issue could be in execution behavior, backend latency, or the agent loop itself.
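
The two diagnostic patterns described here can be written down as simple rules. This is a hypothetical sketch: the `RunMetrics` fields, the `diagnose` function, and the 20% cutoff are illustrative assumptions, not part of Marginlab’s dashboard, and the cutoff is a heuristic rather than a statistical test.

```python
from dataclasses import dataclass


@dataclass
class RunMetrics:
    """Totals from one benchmark run (hypothetical field names)."""
    pass_rate: float      # fraction of tasks solved, 0.0 to 1.0
    tool_calls: int       # total tool calls across the run
    output_tokens: int    # total output tokens across the run
    avg_runtime_s: float  # mean wall-clock seconds per task


def rel_change(new: float, old: float) -> float:
    """Relative change from old to new; 0.0 if the baseline is zero."""
    return (new - old) / old if old else 0.0


def diagnose(baseline: RunMetrics, today: RunMetrics, tol: float = 0.2) -> list[str]:
    """Compare today's run with a baseline and return plain-language hints.

    `tol` is an arbitrary 20% relative-change cutoff (an assumption).
    """
    hints = []
    more_tools = rel_change(today.tool_calls, baseline.tool_calls) > tol
    more_tokens = rel_change(today.output_tokens, baseline.output_tokens) > tol
    slower = rel_change(today.avg_runtime_s, baseline.avg_runtime_s) > tol

    # Pattern 1: accuracy down while the agent takes more actions.
    if today.pass_rate < baseline.pass_rate and more_tools:
        hints.append("possible thrashing: lower pass rate with more tool calls")
    # Pattern 2: slower runs that are not explained by generating more tokens.
    if slower and not more_tokens:
        hints.append("slower without more tokens: check execution, backend latency, or the agent loop")
    return hints


# Made-up numbers for illustration, not Marginlab data.
baseline = RunMetrics(pass_rate=0.56, tool_calls=1200, output_tokens=400_000, avg_runtime_s=300.0)
today = RunMetrics(pass_rate=0.48, tool_calls=1600, output_tokens=410_000, avg_runtime_s=390.0)
for hint in diagnose(baseline, today):
    print(hint)
```

With these illustrative inputs both hints fire: tool calls and runtime each rose by more than 20% while output tokens barely moved.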

Marginlab also shows a degradation status panel with thresholds tied to sample size. With only 50 eval cases, the tracker says a change of about ±13.8% is needed to clear the p < 0.05 bar. At 350 cases, that shrinks to ±4.8%. At 1,400 cases, the threshold drops to ±2.3%.
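
The shrinking thresholds follow from the binomial standard error, which scales as 1/√n. The sketch below is an approximation under stated assumptions, not Marginlab’s published method: it uses a normal approximation with worst-case variance at a 50% baseline pass rate, and `min_detectable_change` is a hypothetical helper.

```python
import math
from statistics import NormalDist


def min_detectable_change(n: int, p: float = 0.5, alpha: float = 0.05) -> float:
    """Half-width of a two-sided (1 - alpha) normal-approximation interval
    for a pass rate p measured on n Bernoulli trials.

    Shifts smaller than this are hard to tell apart from sampling noise.
    """
    z = NormalDist().inv_cdf(1 - alpha / 2)  # about 1.96 for alpha = 0.05
    return z * math.sqrt(p * (1 - p) / n)


for n in (50, 350, 1400):  # 1, 7, and 28 days of 50 tasks
    print(f"n={n:5d}: about ±{100 * min_detectable_change(n):.1f} percentage points")
```

At p = 0.5 this prints about ±13.9, ±5.2, and ±2.6 points. The first is close to the dashboard’s ±13.8%; the other two are looser than its ±4.8% and ±2.3%, so the dashboard presumably uses a different baseline pass rate or test. Under this approximation, 350 and 1,400 cases (7 and 28 times 50) give thresholds exactly √7 and √28 narrower than the daily one.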

- Daily sample size: 50 SWE-Bench-Pro cases

- Weekly and monthly aggregates for tighter confidence bands

- Pass-rate testing modeled with Bernoulli trials

- 95% confidence intervals shown for daily, weekly, and monthly results

- Direct Claude Code CLI runs, no custom harness layer

That last point is easy to miss, but it is the most important design choice on the page. If a benchmark uses a heavy wrapper, it can hide regressions or create fake ones. Marginlab’s method tries to measure the same path a real developer would use.
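
The Bernoulli and confidence-interval bullets in the list above can be sketched together: pool the most recent 1, 7, and 28 daily runs and put a 95% interval on each pooled pass rate. The page does not say which interval Marginlab computes; this sketch swaps in the Wilson score interval, and `window_intervals` with its 50-tasks-per-day default is hypothetical. Pooling also treats reruns of the same 50 tasks as independent trials, which can overstate confidence if some tasks fail every day.

```python
import math
from statistics import NormalDist


def wilson_interval(passes: int, n: int, confidence: float = 0.95) -> tuple[float, float]:
    """Wilson score interval for a pass rate observed as `passes` out of `n` trials."""
    if n <= 0:
        raise ValueError("need at least one trial")
    z = NormalDist().inv_cdf(0.5 + confidence / 2)  # about 1.96 for 95%
    p_hat = passes / n
    denom = 1 + z * z / n
    center = (p_hat + z * z / (2 * n)) / denom
    half = z * math.sqrt(p_hat * (1 - p_hat) / n + z * z / (4 * n * n)) / denom
    return max(0.0, center - half), min(1.0, center + half)


def window_intervals(daily_passes: list[int], tasks_per_day: int = 50) -> dict[str, tuple[float, float]]:
    """Pool the most recent 1, 7, and 28 daily runs and return an interval for each."""
    windows = {"daily": 1, "weekly": 7, "monthly": 28}
    result = {}
    for name, days in windows.items():
        recent = daily_passes[-days:]  # uses fewer days if history is short
        result[name] = wilson_interval(sum(recent), len(recent) * tasks_per_day)
    return result


# Made-up history: 28 days, each with 28 of 50 tasks passing (56%).
for name, (lo, hi) in window_intervals([28] * 28).items():
    print(f"{name:8s} {lo:.3f} to {hi:.3f}")
```

The same observed 56% yields a much narrower band over the monthly window than over a single day, which is why one-day dips deserve less weight.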

Why this tracker exists now

Marginlab says the tracker was built in response to Anthropic’s September 2025 postmortem on Claude degradations. That postmortem made one thing clear: the quality users actually get from a model can slip after release, and teams need a way to notice quickly.

The company also says it is an independent third party with no affiliation to frontier model providers. That matters because benchmark dashboards often blur the line between marketing and measurement. Here, the stated goal is narrower and more credible: detect statistically significant degradation in Claude Code on SWE tasks, not celebrate every new release.

The methodology is also meant to be contamination-resistant. In practice, that means the benchmark subset is curated to reduce the chance that training data leakage makes the results look better than they should. For coding agents, that kind of care is the difference between useful signal and dashboard theater.

“We want to offer a resource to detect such degradations in the future.” — Marginlab, tracker methodology

That quote gets to the heart of the project. This is less about leaderboard bragging rights and more about early warning. If a model update changes the way Claude Code reasons, calls tools, or handles long-running tasks, a daily tracker can surface the drift before it becomes invisible background noise.

How the numbers compare in practice

The most interesting part of the page is how it frames sample size against statistical confidence. Small daily runs are noisy by design, so the dashboard does not pretend a one-day dip is always meaningful. Instead, it compares daily, weekly, and monthly windows so users can separate random variation from a real performance slide.

That approach is especially useful in agent benchmarks, where tool use and runtime can swing from day to day. A model may solve the same issue with more steps, or spend more time on retries, without changing the final pass rate much. Watching those side metrics gives you a better read on whether the agent is becoming less efficient even before accuracy drops.

- 50 cases: about ±13.8% change needed for statistical significance

- 350 cases: about ±4.8% change needed for statistical significance

- 1,400 cases: about ±2.3% change needed for statistical significance

- Daily runs are paired with weekly and monthly aggregation

- Reported metrics include pass rate, runtime, tool calls, token usage, and API cost

That is a practical tradeoff. A daily tracker gives fast feedback, while longer windows reduce the chance of overreacting to noise. For teams shipping agentic products, that combination is more valuable than a single headline score.

It also makes the tracker useful beyond Claude Code itself. Any team working on coding agents can compare their own internal monitoring against Marginlab’s setup and ask a blunt question: are we measuring the model, the harness, or both?
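
The statistical alarm at the core of this setup can be sketched as a one-sided two-proportion z-test comparing a recent window against a baseline window. This is an assumption, not Marginlab’s documented test: the dashboard’s ± thresholds suggest a two-sided check and the page does not define the baseline, so here the test looks only for drops, and `degradation_p_value` and `is_degraded` are hypothetical names.

```python
import math
from statistics import NormalDist


def degradation_p_value(base_passes: int, base_n: int, new_passes: int, new_n: int) -> float:
    """One-sided two-proportion z-test with a pooled standard error.

    Returns the p-value for "the new pass rate is lower than the baseline".
    """
    p_base = base_passes / base_n
    p_new = new_passes / new_n
    pooled = (base_passes + new_passes) / (base_n + new_n)
    se = math.sqrt(pooled * (1 - pooled) * (1 / base_n + 1 / new_n))
    if se == 0.0:
        return 1.0  # both samples all-pass or all-fail: no evidence of a drop
    z = (p_new - p_base) / se
    return NormalDist().cdf(z)  # small when p_new is well below p_base


def is_degraded(base_passes: int, base_n: int, new_passes: int, new_n: int,
                alpha: float = 0.05) -> bool:
    """Raise the alarm only when the drop clears the chosen significance level."""
    return degradation_p_value(base_passes, base_n, new_passes, new_n) < alpha


# Made-up numbers: a 60% baseline over 1,400 runs vs. two recent windows.
print(is_degraded(840, 1400, 27, 50))    # one day at 54%: within noise
print(is_degraded(840, 1400, 140, 350))  # a week at 40%: flagged
```

A six-point dip on one 50-task day stays well above p = 0.05, while a sustained drop over a 350-task week is flagged, mirroring the daily-versus-weekly tradeoff.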

What developers should watch next

If you rely on Claude Code for real work, the tracker is worth bookmarking. The most actionable signal is not a one-off pass-rate dip; it is a pattern that shows up across daily runs and is echoed in runtime, tool calls, or token consumption. That is where quality issues usually become visible first.
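
That rule of thumb, a sustained decline backed by a corroborating side metric, can be encoded directly. The sketch is hypothetical: the three-day window, the 15% tolerance, and the single side-metric series (tool calls, runtime, or tokens) are illustrative choices, not Marginlab’s alerting logic.

```python
def sustained_drift(
    daily_pass_rates: list[float],
    daily_side_metric: list[float],
    baseline_rate: float,
    baseline_side_metric: float,
    days: int = 3,
    side_tol: float = 0.15,
) -> bool:
    """Return True only when the last `days` pass rates are all below baseline
    AND the side metric (e.g. tool calls, runtime, or tokens) averaged over
    those days exceeds its baseline by more than `side_tol`."""
    if len(daily_pass_rates) < days or len(daily_side_metric) < days:
        return False  # not enough history to call it a pattern
    below = all(rate < baseline_rate for rate in daily_pass_rates[-days:])
    recent_avg = sum(daily_side_metric[-days:]) / days
    corroborated = recent_avg > baseline_side_metric * (1 + side_tol)
    return below and corroborated


# Made-up numbers, using daily tool calls as the side metric.
print(sustained_drift([0.56, 0.58, 0.44], [1100, 1120, 1500], 0.56, 1100))  # False: one-day dip
print(sustained_drift([0.50, 0.50, 0.48], [1300, 1350, 1400], 0.56, 1100))  # True: sustained
```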

There is also a broader lesson here for the agent world. As models get better, the hard part shifts from proving they can solve a benchmark to proving they keep doing it week after week. A daily degradation monitor is a small idea, but it addresses a very real operational problem.

My read is that more teams will copy this pattern in 2026: independent trackers, direct CLI execution, fixed task subsets, and statistical alarms instead of vibe-based judgment. If Marginlab keeps publishing these numbers consistently, it may become the reference point people check after every major Claude Code update.

The practical takeaway is simple: if your product depends on coding agents, treat benchmark drift like uptime. The question is no longer whether a model can score well once. The question is whether it can hold that score after the next release, the next backend change, and the next quiet update to the agent loop.