OpenAI vs DeepMind in 2026: models and apps

OpenAI trails DeepMind on raw model quality, but ChatGPT’s app stack still keeps it competitive in 2026.

By April 1, 2026, the gap between model quality and product quality is easier to see than ever. Google DeepMind has pushed Gemini into more places, while OpenAI still wins a lot of day-to-day usage because people live inside ChatGPT, not benchmark tables.

The interesting part is that this fight is no longer about one model beating another on a single leaderboard. It is about how well a company connects inference quality, search, tools, memory, and interface design into one experience. That is where the current split becomes obvious.

Why model quality and app quality are diverging

Raw capability matters, but users feel product design first. A model can be smarter on paper and still lose if the app makes it hard to ask follow-up questions, compare sources, or keep context across sessions.

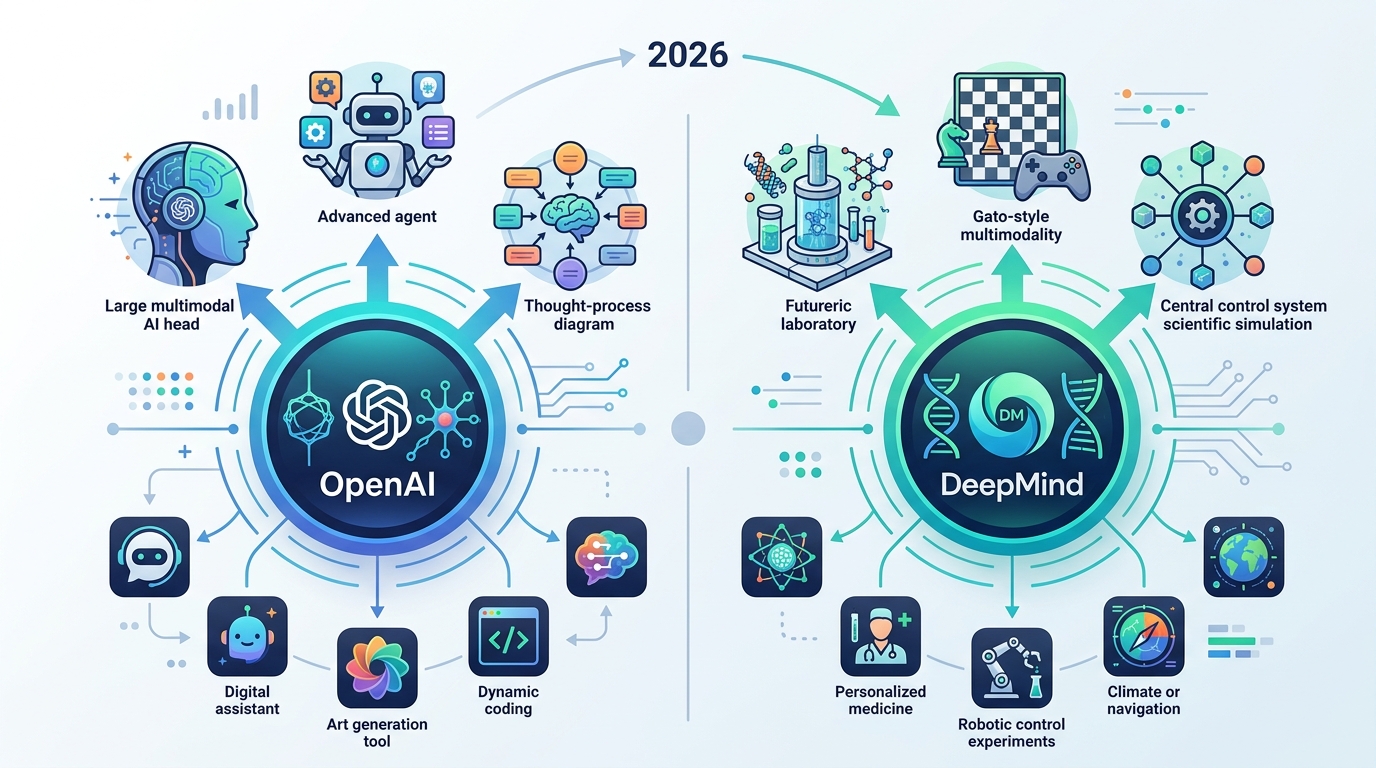

That is why the current debate around OpenAI and DeepMind is more useful when broken into two layers: the base models and the applications built on top of them. OpenAI has strong consumer products and a large developer ecosystem. DeepMind has been shipping models that often feel better at retrieval, reasoning, and multimodal response quality.

In practical terms, this means a user may prefer ChatGPT for drafting, coding help, and everyday chat, while preferring Gemini for search-heavy tasks, source-backed answers, and visually structured responses. The winner changes with the job.

- ChatGPT remains the most recognizable consumer AI app.

- Gemini is tightly tied to Google’s search and workspace ecosystem.

- OpenAI’s model releases still shape the broader developer conversation.

- Gemini models increasingly define Google’s AI-first product strategy.

What the article gets right about Gemini 3 Flash

The source article highlights Gemini 3 Flash, which it describes as the default model for the Gemini app and Google’s AI Mode. The key claim is not just speed: it is that the model handles nuance, local context, and web-linked answers better than before.

That matters because most AI usage is not a clean benchmark prompt. It is messy: a user asks a half-formed question, expects the assistant to infer intent, and wants the answer to include current facts without turning into a wall of text. DeepMind has been pushing hard on that exact experience.

“The future is already here — it’s just not very evenly distributed.” — William Gibson

That quote fits this market well. The best AI experience is not evenly spread across products. Some apps give you raw model power, while others give you a better workflow around the model. The difference is visible in everyday use.

Google’s advantage is distribution. When Gemini is the default inside search-adjacent surfaces, more users get exposed to the latest model without changing habits. OpenAI’s advantage is brand and habit. ChatGPT is still the app people open first when they want a general-purpose assistant.

Comparing the current stacks by product surface

It helps to compare the two companies by product surface, because that is where the tradeoffs show up. OpenAI runs a tightly controlled consumer app, an API business, and an enterprise push. Google has a wider web of products, from Search to Workspace to Android, and Gemini can appear inside all of them.

In the source article, OpenAI is described as weaker than DeepMind on model strength alone, but still competitive once its application ecosystem is included. That is a fair reading. The app layer can offset a model gap if the product is faster to use and easier to trust.

- OpenAI’s strength: one primary consumer interface, ChatGPT, with clear brand recognition.

- Google’s strength: Gemini can plug into Search, Android, Docs, and Gmail.

- DeepMind’s strength: model quality and multi-step response quality often feel more refined in search-like tasks.

- OpenAI’s weakness: the app experience can feel less connected to live web context than Google’s stack.

- Google’s weakness: product sprawl can make Gemini feel scattered across too many entry points.

The numbers that matter most here are not model parameter counts, which are rarely disclosed in a useful way. The numbers that matter are adoption, retention, and how often users return without being prompted. On those metrics, ChatGPT still has a massive installed base, while Google has the distribution advantage to close the gap quickly when it gets the product right.
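To make those three metrics concrete, here is a minimal Python sketch of how a team might compute retention and unprompted return share from its own session logs. The `Session` record, its `prompted` flag, and both function names are assumptions made up for illustration; neither company publishes data in this shape, and the sample values in the usage note are invented.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Session:
    """Hypothetical log record; the field names are assumptions."""
    user_id: str
    day: date
    prompted: bool  # True if the session began from a notification or email


def retention(sessions, cohort_day, return_day):
    """Share of users active on cohort_day who were also active on return_day."""
    cohort = {s.user_id for s in sessions if s.day == cohort_day}
    if not cohort:
        return 0.0
    returned = {
        s.user_id
        for s in sessions
        if s.day == return_day and s.user_id in cohort
    }
    return len(returned) / len(cohort)


def unprompted_share(sessions):
    """Share of sessions the user started without being nudged."""
    if not sessions:
        return 0.0
    unprompted = sum(1 for s in sessions if not s.prompted)
    return unprompted / len(sessions)
```

As a usage example with invented data: if users `a` and `b` are both active on day one and only `a` comes back a week later, `retention` for that pair of days is one half. Adoption is then just the size of the day-one cohort.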

For developers, this split has a second-order effect. If the app layer keeps improving, more API calls will be shaped by product behavior rather than pure model ranking. That changes how teams choose where to build, which SDKs to trust, and which platform gets the first integration.

What this means for builders and power users

If you are building AI features today, the lesson is simple: do not evaluate a model in isolation. Test the whole product path, from prompt entry to source handling to memory and export. A slightly weaker model inside a better interface can outperform a stronger model that feels clumsy in practice.
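One way to act on this is to score the whole path instead of the model alone. The sketch below is a hypothetical scoring scheme, not an established benchmark: the stage names come from the paragraph above, while the 0 to 1 scale, the equal stage weighting, and the `model_weight` default are assumptions you would tune for your own product.

```python
from dataclasses import dataclass

# Stages of the product path named in the text above.
STAGES = ("prompt_entry", "source_handling", "memory", "export")


@dataclass(frozen=True)
class PathScore:
    """Hypothetical evaluation record; all scores are on a 0..1 scale."""
    model_quality: float
    stages: dict  # maps each stage name in STAGES to a 0..1 score


def end_to_end(score, model_weight=0.5):
    """Blend raw model quality with the average score of the product path."""
    missing = [name for name in STAGES if name not in score.stages]
    if missing:
        raise ValueError(f"missing stage scores: {missing}")
    path = sum(score.stages[name] for name in STAGES) / len(STAGES)
    return model_weight * score.model_quality + (1 - model_weight) * path


# Invented numbers: a stronger model in a clumsy app vs. a weaker one in a smooth app.
strong_model = PathScore(0.9, {name: 0.5 for name in STAGES})
smooth_app = PathScore(0.8, {name: 0.8 for name in STAGES})
```

With these invented numbers, `end_to_end(smooth_app)` comes out higher than `end_to_end(strong_model)`, which is the situation the paragraph describes. Raising `model_weight` toward 1.0 reverses the ranking, so the weight itself is a product decision worth making explicit.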

For power users, the choice is becoming task-based. Use ChatGPT when you want a flexible general assistant with a strong consumer workflow. Use Gemini when you want deeper integration with Google services and better handling of search-heavy questions. If your work depends on current information and clean citations, that difference matters.

For teams tracking the market, the key question is whether OpenAI can keep its app lead while DeepMind keeps improving model quality. That is the real contest in 2026, and it is happening one product update at a time.

My read: if Google keeps shipping models like Gemini 3 Flash into default surfaces, OpenAI will need to win on product speed, memory, and developer tooling rather than model bragging rights alone. If that does not happen, more users will quietly drift toward the assistant that answers with better context and less friction.
