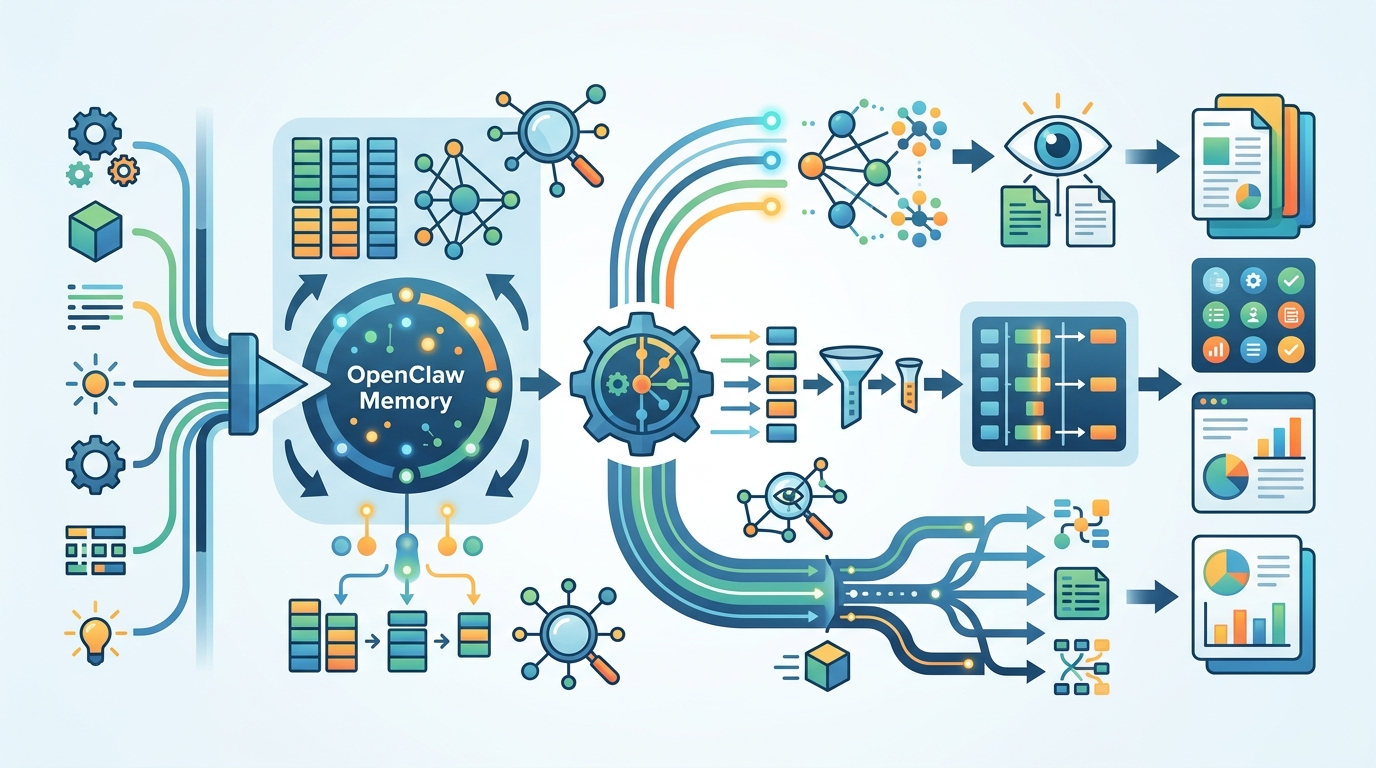

OpenClaw Memory: how its retrieval system works

OpenClaw’s memory system uses file watching, async debounced index updates, and optional API-backed search across local and cloud models.

OpenClaw keeps its memory layer simple on the surface and practical underneath. If there is no API key, memory search is disabled. When memory files change, a file watcher triggers an index update, and that update runs async with debouncing so the agent does not stall.

That design choice matters because memory in agent tools often becomes either too slow or too magical. OpenClaw goes the other way: it treats memory as an indexable store with clear rules, a fallback path, and a retrieval flow that can be inspected instead of guessed.

In other words, this is less about “smart” memory in the abstract and more about predictable retrieval behavior for local and hosted models such as OpenAI, Gemini, Voyage AI, and Mistral.

What OpenClaw’s memory layer is doing

OpenClaw’s memory system is built around a few concrete behaviors rather than a large, opaque abstraction. The source summary points to local memory files, an index that updates when those files change, and retrieval that can be turned off when no API key is available.

That last part is easy to miss, but it is a big product decision. If search depends on an external API and the key is missing, OpenClaw does not pretend retrieval is still working. It disables memory search. That makes failures obvious, which is a lot better than returning partial results and calling them good enough.
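A minimal sketch of that gate, assuming a hypothetical `make_memory_search` factory and a stand-in `fake_backend`; neither name comes from OpenClaw, and the real configuration path may look different. The point is only that a missing key yields no search function at all, rather than one that quietly returns nothing.

```python
from typing import Callable, List, Optional

# Hypothetical backend signature: (api_key, query) -> matching snippets.
SearchBackend = Callable[[str, str], List[str]]


def make_memory_search(
    api_key: Optional[str], backend: SearchBackend
) -> Optional[Callable[[str], List[str]]]:
    """Return a search function, or None when no API key is configured."""
    if not api_key:
        # Fail visibly: callers see that search is off instead of getting
        # empty or partial results that look like a real answer.
        return None

    def search(query: str) -> List[str]:
        return backend(api_key, query)

    return search


def fake_backend(api_key: str, query: str) -> List[str]:
    """Stand-in for a hosted search call, used only for illustration."""
    return [f"match for {query!r}"]
```

A caller would check the result once at startup (`if search is None: ...`) and skip retrieval entirely when it is disabled.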

The file watcher plus async debounced update path also tells you how the system is meant to behave under real use. Memory files can change repeatedly while an agent is active, and OpenClaw avoids rebuilding the index on every tiny edit. It waits, coalesces the updates, then refreshes the index without blocking the agent loop.

- API key missing: memory search is disabled

- Memory file changes: file watcher triggers index update

- Index updates: async and debounced

- Agent runtime: not blocked by memory refresh

That is a sensible default for developer tools. It keeps the agent responsive and puts the cost of indexing in the background, where it belongs.
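The watcher-to-index path can be sketched with `asyncio`. This is an illustration under assumptions, not OpenClaw's code: `DebouncedIndexer`, `notify`, and the `reindex` callback are hypothetical names, and the delay value is arbitrary. Each file event restarts a timer, and only when the timer expires does one background reindex run over every path that changed in the meantime.

```python
import asyncio
from typing import Awaitable, Callable, List, Optional, Set


class DebouncedIndexer:
    """Coalesce bursts of file events into a single background reindex."""

    def __init__(
        self,
        reindex: Callable[[Set[str]], Awaitable[None]],
        delay: float = 0.5,
    ) -> None:
        self._reindex = reindex
        self._delay = delay
        self._pending: Set[str] = set()
        self._timer: Optional[asyncio.Task] = None

    def notify(self, path: str) -> None:
        """Record a changed path and restart the quiet-period timer.

        Must be called from the event loop thread.
        """
        self._pending.add(path)
        if self._timer is not None:
            self._timer.cancel()  # a newer event supersedes the old timer
        self._timer = asyncio.get_running_loop().create_task(self._flush_later())

    async def _flush_later(self) -> None:
        await asyncio.sleep(self._delay)
        # The quiet period elapsed: take the batch and clear the timer first,
        # so events arriving during the reindex start a fresh cycle.
        batch, self._pending = self._pending, set()
        self._timer = None
        await self._reindex(batch)


async def demo() -> List[List[str]]:
    batches: List[List[str]] = []

    async def fake_reindex(paths: Set[str]) -> None:
        batches.append(sorted(paths))

    indexer = DebouncedIndexer(fake_reindex, delay=0.05)
    for path in ["notes.md", "notes.md", "tasks.md"]:
        indexer.notify(path)
        await asyncio.sleep(0.01)  # the agent loop keeps running meanwhile
    await asyncio.sleep(0.2)  # let the quiet period expire
    return batches
```

Running `asyncio.run(demo())` yields a single batch containing both files, even though three events fired. One simplification to note: this sketch does not prevent a second reindex from starting while a slow one is still running, which a production version would likely guard against.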

Why this design matters for agent workflows

Memory in agent systems gets messy fast. If retrieval is too aggressive, the agent drags in stale context. If it is too weak, the agent forgets project details after a few turns. OpenClaw’s approach suggests a middle path: keep memory tied to files, update the index when those files change, and only search when the retrieval backend is actually configured.

The practical upside is that developers can reason about the system. A memory file is a file. An index update is an update. Search is either enabled or disabled based on configuration. That is much easier to debug than a hidden state machine that silently mutates context behind the scenes.

It also fits the way many teams already work. Notes, prompts, task histories, and project facts often live in Markdown or plain text. A watcher-based system can track those files and turn them into searchable context without asking the user to manually rebuild anything.
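To make "track those files" concrete, here is a polling-based sketch that uses only the standard library. It is an assumption-laden stand-in: a real watcher would more likely rely on OS file notifications, and the `snapshot` and `changed_paths` helpers, along with the `*.md` and `*.txt` patterns, are hypothetical choices rather than anything the source confirms.

```python
from pathlib import Path
from typing import Dict, Sequence, Set, Tuple

# path -> (modification time in ns, size in bytes)
Snapshot = Dict[str, Tuple[int, int]]


def snapshot(root: Path, patterns: Sequence[str] = ("*.md", "*.txt")) -> Snapshot:
    """Record a cheap signature for every memory file under root."""
    snap: Snapshot = {}
    for pattern in patterns:
        for path in root.rglob(pattern):
            if path.is_file():
                stat = path.stat()
                snap[str(path)] = (stat.st_mtime_ns, stat.st_size)
    return snap


def changed_paths(old: Snapshot, new: Snapshot) -> Set[str]:
    """Return paths that were added, modified, or removed between snapshots."""
    touched = {path for path, sig in new.items() if old.get(path) != sig}
    removed = set(old) - set(new)
    return touched | removed
```

Comparing two snapshots taken a moment apart gives the set of files that need reindexing, which is the input a debounced update step would consume.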

“The best code is no code at all.” — Jeff Atwood

That quote from Jeff Atwood is old, but it maps neatly onto memory systems like this one. If the index refreshes itself when files change, the user gets less manual bookkeeping and fewer chances to forget a sync step.

How OpenClaw compares with other memory setups

OpenClaw’s memory flow is simpler than the kind of multi-layer memory stack you see in some agent frameworks. Some tools mix vector stores, long-term summaries, and automatic prompt injection. That can be powerful, but it can also become hard to audit.

Here, the tradeoff is clearer: OpenClaw appears to favor predictable file-backed retrieval over a more elaborate memory architecture. That means fewer hidden transitions and a smaller surface area for bugs.

It also helps that the system is compatible with multiple model backends. The summary mentions local model support alongside OpenAI, Gemini, Voyage AI, and Mistral. That matters because memory quality is often tied to the embedding or retrieval layer, and different teams will want different providers depending on cost, latency, and deployment constraints.

- OpenAI is often chosen for broad model availability and tooling support

- Gemini API fits teams already working inside Google’s ecosystem

- Voyage AI focuses on embedding and retrieval quality

- Mistral appeals to teams that want strong open model options
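One common way to keep providers swappable is to treat each as an embedding function and rank memory chunks by cosine similarity. The source does not say OpenClaw ranks results this way, so read the following as a generic sketch: `Embedder`, `top_k`, and the keyword-counting `toy_embed` are all hypothetical, and a real provider would return dense vectors from an API or local model.

```python
import math
from typing import Callable, Dict, List, Sequence, Tuple

# Any provider, hosted or local, is reduced to: text -> vector.
Embedder = Callable[[str], Sequence[float]]


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity; 0.0 when either vector has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def top_k(
    query: str, chunks: Dict[str, str], embed: Embedder, k: int = 3
) -> List[Tuple[str, float]]:
    """Rank memory chunks against the query, highest similarity first."""
    query_vec = embed(query)
    scored = [(name, cosine(query_vec, embed(text))) for name, text in chunks.items()]
    return sorted(scored, key=lambda item: item[1], reverse=True)[:k]


def toy_embed(text: str) -> List[float]:
    """Stand-in embedder that counts a few keywords, for illustration only."""
    words = text.lower().split()
    return [float(words.count(w)) for w in ("deploy", "database", "auth")]
```

Swapping providers then means passing a different `embed` callable; the ranking code stays the same, which is what makes cost and latency comparisons between backends practical.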

Compared with a fully managed memory service, OpenClaw’s approach gives developers more visibility into what changed and when. Compared with a purely manual note system, it removes the need to rebuild context by hand. That middle position is where a lot of useful developer tools end up living.

What developers should watch next

The most interesting part of OpenClaw’s memory system is not the fact that it has memory. It is the way it treats memory as a background index with explicit failure modes. That design will matter more as agent workflows become more stateful and teams expect tools to remember project context without slowing down.

If OpenClaw keeps this direction, the next questions are straightforward: how good is the retrieval quality, how often do debounced updates miss important changes, and how well does the system behave when memory files grow large? Those are the questions that decide whether this stays a neat implementation detail or turns into a feature developers depend on every day.

My read is simple: if you are building an agent tool, start with a memory model you can explain in one minute. OpenClaw’s file-watcher-plus-index approach is a good example of that philosophy, and it is likely to age better than a memory stack that hides too much behind automation.

If the project publishes benchmarks or retrieval traces next, that would be the real proof point. Until then, the takeaway is clear: memory systems win when they are boring to operate and easy to inspect.
