Superpowers Hits 121K Stars as Agents Get Structured

Superpowers hit 121K GitHub stars in five months by forcing AI agents into test-first, skill-based workflows that ship cleaner code.

Superpowers just crossed 121,000 GitHub stars, and the pace is the real headline. GitHub Trending had it at #2 while the repo picked up 2,292 stars in a single day, the kind of number that suggests developers are not casually bookmarking it. They are trying it because their current AI coding setup is not holding up.

Built by Jesse Vincent, a former Perl 5 project lead and the founder of Keyboardio, Superpowers takes a stricter path than frameworks like LangChain or autonomous agents such as AutoGPT. Instead of giving agents a pile of tools and hoping they behave, it wraps them in Markdown-based skills, enforced workflows, and test-first rules that delete code when the process is violated.

That sounds severe, but it matches the problem developers keep running into: AI can feel fast while producing more cleanup work later. The METR research cited in the article points to a 39-point gap between perceived and measured productivity: experienced developers estimated that AI had made them about 20% faster, while their measured task completion was 19% slower. Superpowers is basically a response to that problem.

Why Superpowers Is Growing So Fast


The easiest way to understand Superpowers is to think of it less like a general-purpose agent framework and more like a disciplined operating system for coding tasks. Skills are not optional helpers. They are mandatory workflows stored in Markdown files with YAML frontmatter, plus scripts and templates that agents invoke automatically when the task fits.
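To make the file format concrete, here is a minimal sketch of reading a Markdown skill with YAML frontmatter. The skill text, the `name` and `description` fields, and the `parse_skill` helper are illustrative assumptions rather than content taken from the Superpowers repository, and the parser only handles flat `key: value` lines, not full YAML.

```python
from dataclasses import dataclass

# Hypothetical skill file for illustration; not copied from the Superpowers repo.
EXAMPLE_SKILL = """---
name: test-driven-development
description: Use when implementing any feature or bugfix, before writing code
---
# Test-Driven Development

1. Write a failing test.
2. Write the minimal code that makes it pass.
3. Refactor.
"""


@dataclass
class Skill:
    metadata: dict[str, str]  # flat frontmatter fields
    body: str                 # Markdown instructions shown to the agent


def parse_skill(text: str) -> Skill:
    """Split a skill file into frontmatter fields and a Markdown body."""
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        raise ValueError("skill file must start with a '---' fence")
    try:
        end = lines.index("---", 1)  # position of the closing fence
    except ValueError:
        raise ValueError("frontmatter fence is never closed") from None

    metadata: dict[str, str] = {}
    for line in lines[1:end]:
        if not line.strip():
            continue
        key, sep, value = line.partition(":")
        if not sep:
            raise ValueError(f"not a 'key: value' line: {line!r}")
        metadata[key.strip()] = value.strip()

    body = "\n".join(lines[end + 1:]).strip()
    return Skill(metadata=metadata, body=body)


skill = parse_skill(EXAMPLE_SKILL)
print(skill.metadata["name"])
```

The frontmatter is what lets a tool decide whether a skill applies without loading the whole body, which is one plausible reason for keeping the metadata short and the instructions in plain Markdown.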

That matters because most AI coding tools still assume the human will catch mistakes later. Superpowers flips that assumption. If an agent writes code before tests, the framework deletes the code. If a task needs planning, it forces planning. If review is required, review happens before completion. The point is to make the agent follow the same habits a careful senior engineer would insist on during a code review.
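The test-first rule can be illustrated with a small check over a session log. This is a sketch of the rule as described here, not the framework's actual enforcement mechanism; the `Write` record and the `violations` function are hypothetical names, and the function only reports files instead of deleting anything.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Write:
    path: str      # file the agent wrote
    is_test: bool  # True when the file is a test


def violations(writes: list[Write]) -> list[str]:
    """Return implementation files written before any test existed."""
    seen_test = False
    rejected: list[str] = []
    for write in writes:
        if write.is_test:
            seen_test = True
        elif not seen_test:
            # Implementation code arrived before a test: flag it for removal.
            rejected.append(write.path)
    return rejected


session = [
    Write("src/cart.py", is_test=False),        # written too early
    Write("tests/test_cart.py", is_test=True),
    Write("src/cart.py", is_test=False),        # rewritten after the test exists
]
print(violations(session))  # ['src/cart.py']
```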

The growth curve is striking because it suggests developers are tired of tools that promise autonomy but deliver cleanup. Five months from launch to 121K stars is not normal even in AI land. It says there is a large audience for a framework that prioritizes correctness, repeatability, and shipping code that does not collapse under the first regression test.

- 121,000 GitHub stars in roughly five months

- 2,292 stars added in a single day

- #2 trending on GitHub during the spike

- Launched in October 2025

- Crossed 40,000 stars by January 2026

The Philosophy: Skills Over Free-Form Agents

Superpowers is built around a simple idea: agent behavior should be encoded as skills, not improvised every time. That puts it closer to a workflow engine than a chatty assistant. The framework’s v2.0 split skills into a separate repository, obra/superpowers-skills, so updates can land without reinstalling the whole plugin. That modular move matters because it keeps the system maintainable as the skill set grows.

There is also a practical reason this model is catching on. Anthropic’s own engineering work on GitHub MCP tooling showed how quickly schema-heavy tool catalogs can become token-hungry. The article cites 90+ tools consuming 50,000+ tokens of JSON schemas. Skills avoid some of that weight by packaging domain knowledge as concise instructions rather than giant tool manifests.
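A quick back-of-the-envelope calculation shows why those figures matter. The tool and token counts come from the text and are treated as rough values, so the per-tool number is only an approximate average; the 200,000-token context window is an assumption added for illustration.

```python
tools = 90              # "90+ tools" in the text, used as a rough value
schema_tokens = 50_000  # "50,000+ tokens" in the text, used as a rough value

# Approximate average schema cost per tool.
per_tool = schema_tokens / tools
print(round(per_tool))  # 556

# Share of a hypothetical 200,000-token context window spent on schemas
# before the agent has read a single line of the task.
context_window = 200_000  # assumption, not from the source
share = schema_tokens / context_window
print(f"{share:.0%}")  # 25%
```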

“It makes me a better developer by forcing TDD.”

That quote captures the appeal better than any architecture diagram. Developers are not adopting Superpowers because they want an agent to be more creative. They are adopting it because they want the agent to stop freelancing and start following the same discipline they would expect from a teammate.

The comparison with LangChain and AutoGPT is useful here. LangChain gives you primitives and leaves the workflow design to you. AutoGPT pushes toward open-ended autonomy. Superpowers makes the workflow itself the product. That difference explains a lot of the enthusiasm.

What the 7-Phase Workflow Actually Does

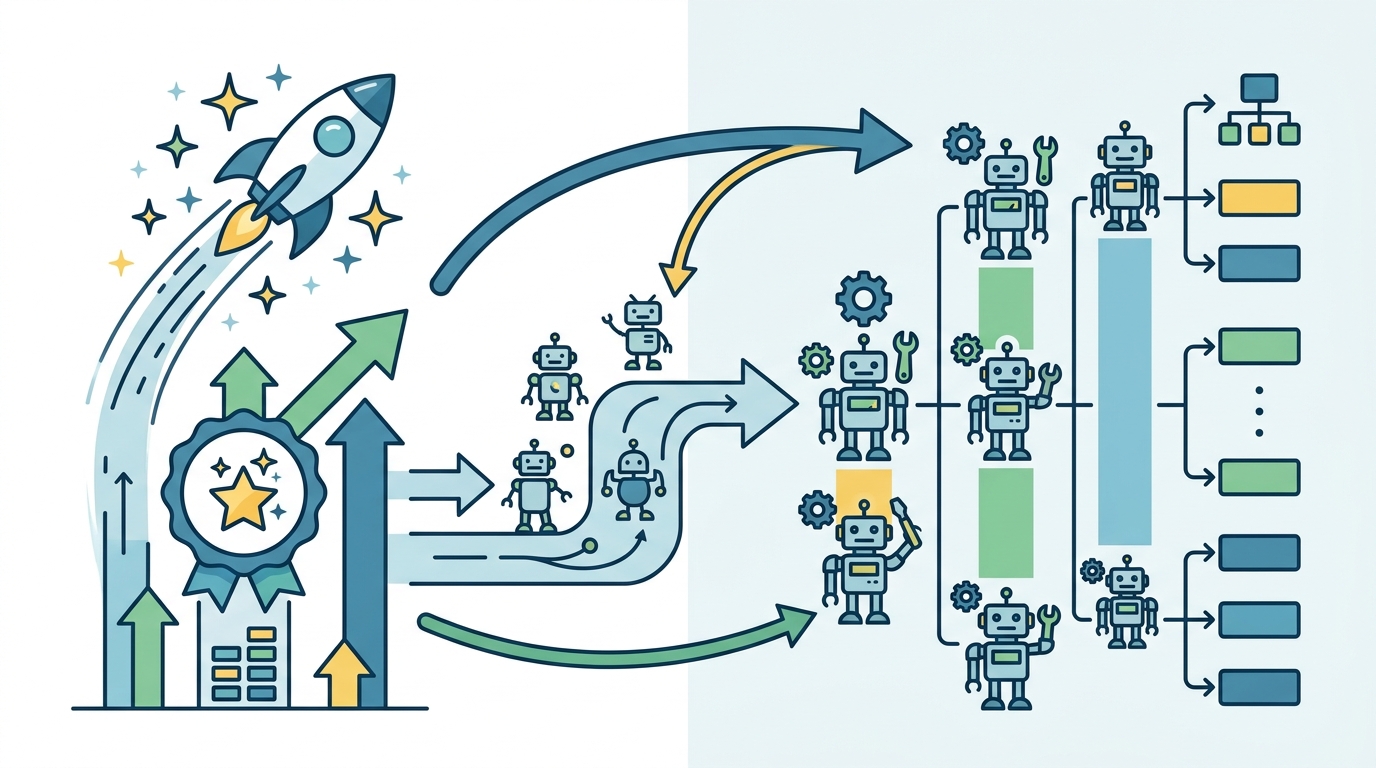

Superpowers uses a seven-step process that is intentionally opinionated. It starts with Socratic brainstorming, moves into Git worktree isolation, then micro-task planning, parallel subagent execution, test-driven development, systematic code review, and branch completion. Each phase forces the agent to finish one kind of thinking before moving to the next.
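One way to picture "finish one phase before the next" is a small state machine that refuses to skip ahead. The phase names follow the list above, but the `Phase` enum and `Workflow` class are hypothetical and are not how Superpowers is implemented.

```python
from enum import IntEnum


class Phase(IntEnum):
    BRAINSTORM = 1  # Socratic brainstorming
    WORKTREE = 2    # Git worktree isolation
    PLAN = 3        # micro-task planning
    EXECUTE = 4     # parallel subagent execution
    TDD = 5         # test-driven development
    REVIEW = 6      # systematic code review
    COMPLETE = 7    # branch completion


class Workflow:
    def __init__(self) -> None:
        self.current = Phase.BRAINSTORM

    def advance(self, to: Phase) -> None:
        """Move to the next phase; reject any attempt to skip or go back."""
        if to != self.current + 1:
            raise ValueError(
                f"cannot move from {self.current.name} to {to.name}"
            )
        self.current = to


wf = Workflow()
wf.advance(Phase.WORKTREE)      # allowed: the next phase in order
try:
    wf.advance(Phase.REVIEW)    # rejected: skips planning, execution, and TDD
except ValueError as err:
    print(err)
```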

That structure is why people report unusually high test coverage. The article claims developers see 85% to 95% coverage with Superpowers, compared with 30% to 50% using standard Claude Code workflows. Even if you treat those numbers as anecdotal, the direction is familiar: when tests are mandatory, coverage rises. When tests are a suggestion, coverage drops.

There is also a speed story here, but it is more nuanced than “AI does everything faster.” One developer reportedly built a Notion clone in 45 to 60 minutes with 87% test coverage, including rich-text editing, tables, Kanban boards, and PostgreSQL persistence. The trick was parallel subagents handling UI, API, database, and tests at the same time, while the framework kept the process locked to TDD.
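The parallel part of that story is ordinary fan-out and fan-in. The sketch below uses `asyncio.gather` to run four stand-in subagents concurrently; `run_subagent` is a hypothetical placeholder that sleeps instead of calling a model, so it shows the shape of the coordination, not real agent work.

```python
import asyncio


async def run_subagent(area: str) -> str:
    # Stand-in for real agent work; a real subagent would call a model here.
    await asyncio.sleep(0.01)
    return f"{area}: done"


async def run_all(areas: list[str]) -> list[str]:
    # gather runs the coroutines concurrently and returns results
    # in the same order as the inputs.
    return await asyncio.gather(*(run_subagent(area) for area in areas))


results = asyncio.run(run_all(["ui", "api", "database", "tests"]))
print(results)
```

Because the four tasks overlap, total wall-clock time is close to the slowest task rather than the sum of all four, which is where the reported speedup would come from.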

- 7 phases from brainstorming to branch completion

- 45 to 60 minutes for a Notion-style app prototype

- 87% test coverage in that example

- 85% to 95% reported coverage with Superpowers

- Standard AI coding workflows cited at 30% to 50% coverage

The important detail is that the first 10 to 20 minutes are slower because the plan is real. After that, implementation and debugging move faster because the codebase is already shaped around tests and review. That is the tradeoff Superpowers is betting on: spend more time up front, spend less time fixing chaos later.

How It Compares With Other Agent Tools

If you want open-ended research or a bot that can wander through a problem space, AutoGPT still makes sense. If you need a flexible framework for custom chains, retrieval, and app-specific orchestration, LangChain remains a strong option. Superpowers is narrower, and that narrowness is the point.

It is built for teams that care about production quality more than improvisation. That makes it a better fit for shipping code than for experimenting with broad, fuzzy agent behavior. It also explains why the framework’s supporters keep talking about TDD, code review, and branch hygiene instead of “agent magic.”

The broader industry is starting to move in the same direction. The article notes that Spring AI adopted an Agent Skills pattern in January 2026, and that other ecosystems are exploring similar modular approaches. This is the kind of shift that usually starts with developer pain and then spreads once a cleaner pattern proves it can scale.

- LangChain: flexible primitives for custom workflows

- AutoGPT: more autonomous, less prescribed task flow

- Superpowers: enforced skills and test-first execution

- Spring AI: enterprise framework now exploring agent skills

There is a clear takeaway for developers choosing tools right now. If your team keeps fighting flaky AI-generated code, Superpowers is worth a look. If you are prototyping a one-off script or exploring a vague idea, its strictness may feel heavy. The framework is opinionated because the problem it solves is opinionated too: shipping code that works.

What This Says About AI Coding Next

Superpowers hitting 121K stars is less about one repo going viral and more about a shift in what developers want from AI coding tools. The market is rewarding systems that make agents behave like accountable collaborators, not improvisers with a terminal. That matters because the next wave of adoption will probably come from teams that care about test coverage, review discipline, and repeatable delivery.

My bet is simple: the next popular agent tools will copy the parts Superpowers gets right, especially enforced workflows and skill modularity. The question for teams is whether they want to keep asking AI to “help,” or whether they want to define exactly how help is allowed to happen.

If your current setup produces fast demos but slow cleanup, the answer is probably obvious. Try a workflow that treats tests, review, and planning as non-negotiable. That is where the real productivity gain lives.
