Why asset tokenization is still a legal problem, not a blockchain sho…

Asset tokenization in the US is a legal classification problem first and a blockchain design problem second.

Asset tokenization does not escape US law, and the governing principle is still “same risk, same rules.” That means a tokenized share, note, fund interest, or payment claim is judged not by the novelty of its ledger but by what it represents, who can hold it, how it trades, and which obligations attach to the underlying arrangement. Thomson Reuters’ Practical Law guide gets the core point right: the first job in any real-world asset tokenization project is classification, because classification determines the compliance stack.

First argument: the token’s legal category controls everything

In the US, a token is not a magic wrapper that erases the law of the asset inside it. If the token is a security, securities law applies. If it is a payment instrument, money transmission and payments rules come into play. If it represents a fund interest, then fund, custody, transfer, and disclosure issues follow. The blockchain layer changes the rail, not the legal character of the thing being moved.
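For engineers, the point of the paragraph above can be sketched as a lookup keyed on legal category rather than on chain. This is a minimal illustration, not a legal taxonomy: the `TokenCategory` enum, the `REGIMES_BY_CATEGORY` table, and the `applicable_regimes` function are hypothetical names, and the regime labels simply paraphrase the paragraph.

```python
from enum import Enum


class TokenCategory(Enum):
    """Hypothetical categories, taken from the three examples in the text."""
    SECURITY = "security"
    PAYMENT_INSTRUMENT = "payment_instrument"
    FUND_INTEREST = "fund_interest"


# Illustrative labels only; they paraphrase the article, not any statute.
REGIMES_BY_CATEGORY = {
    TokenCategory.SECURITY: ["securities law"],
    TokenCategory.PAYMENT_INSTRUMENT: ["money transmission", "payments rules"],
    TokenCategory.FUND_INTEREST: ["fund", "custody", "transfer", "disclosure"],
}


def applicable_regimes(category: TokenCategory, ledger: str) -> list[str]:
    """Return the regimes for a category.

    `ledger` is accepted but deliberately ignored: the chain is the rail,
    and it does not change the legal character of the asset.
    """
    return list(REGIMES_BY_CATEGORY[category])


# Same category on two different (made-up) ledgers gives the same answer.
on_chain_a = applicable_regimes(TokenCategory.SECURITY, ledger="chain-a")
on_chain_b = applicable_regimes(TokenCategory.SECURITY, ledger="chain-b")
print(on_chain_a == on_chain_b)  # True
```

The design choice worth noticing is that the ledger is an input the function never reads. In a real project the lookup table would come from counsel, not from code.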

This is why early-stage tokenization teams keep getting tripped up when they start with smart contracts and end with counsel. The right sequence is the reverse: identify the asset, map the rights the token conveys, then test the structure against federal and state regimes. A project that skips that step can build a technically elegant system that is legally unusable on day one. The classification question decides whether the product needs registration, exemptions, broker-dealer involvement, transfer restrictions, or all of the above.

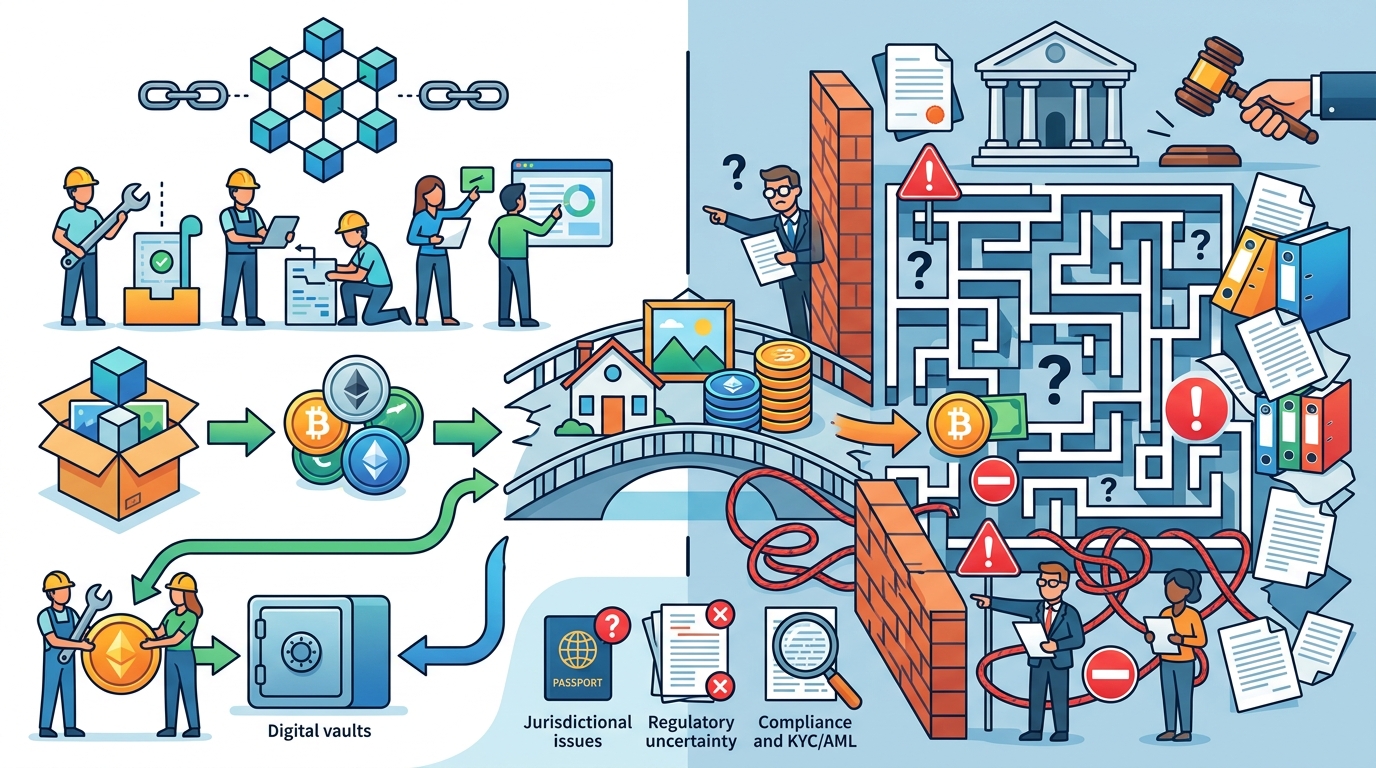

Second argument: compliance costs do not disappear, they relocate

Tokenization is often sold as a way to reduce friction, but in regulated markets the friction usually moves from back-office reconciliation into front-end controls. That is not a bad thing, but it is the real tradeoff. If a tokenized asset must be sold only to accredited investors, the system still needs eligibility checks. If transfers are restricted, the ledger must enforce those rules. If custody rules apply, the platform must solve for that obligation instead of pretending the chain itself is the custodian.
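A rough sketch of what "front-end controls" can look like in practice: the transfer path itself refuses moves that fail eligibility or restriction checks. Everything here is an assumption made for illustration. The `Holder` and `RestrictedToken` types are hypothetical, the `accredited` flag is assumed to be set by an off-chain verification process, and an allowlist is only one of several ways a ledger could enforce transfer restrictions.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Holder:
    holder_id: str
    accredited: bool  # assumed to be set by an off-chain eligibility check


@dataclass
class RestrictedToken:
    accredited_only: bool        # offering limited to accredited investors
    transfers_restricted: bool   # receivers must be pre-approved
    allowlist: set = field(default_factory=set)  # approved holder ids

    def check_transfer(self, receiver: Holder) -> tuple[bool, str]:
        """Return (allowed, reason) for a proposed transfer to `receiver`."""
        if self.accredited_only and not receiver.accredited:
            return False, "receiver is not an accredited investor"
        if self.transfers_restricted and receiver.holder_id not in self.allowlist:
            return False, "receiver is not on the transfer allowlist"
        return True, "ok"


token = RestrictedToken(
    accredited_only=True,
    transfers_restricted=True,
    allowlist={"alice"},
)
print(token.check_transfer(Holder("alice", accredited=True)))
# (True, 'ok')
print(token.check_transfer(Holder("bob", accredited=False)))
# (False, 'receiver is not an accredited investor')
```

The checks did not disappear; they moved from a back-office review into the code path that every transfer must pass through. Custody obligations are left out of the sketch because they cannot be reduced to a boolean on the ledger.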

We have already seen this pattern in adjacent markets. Stablecoin issuers, for example, have not escaped AML, sanctions, reserve, and consumer-protection scrutiny simply because the instrument lives on-chain. The same is true for tokenized treasuries, private credit, or real estate interests. The promise is not fewer rules. The promise is tighter automation of the rules that already exist. That is an operational advantage, not a regulatory exemption.

The counter-argument

Supporters of tokenization make a serious point: blockchain can improve settlement speed, reduce recordkeeping errors, widen access, and create programmable compliance that traditional systems struggle to match. They are right that a well-designed token can lower operational cost and make transfer logic more transparent. In some cases, the technology can even improve compliance by making restrictions enforceable at the protocol level instead of relying on manual checks.

There is also a broader market argument. If the US makes every tokenized asset behave exactly like its off-chain counterpart, innovation will slow and projects will stay trapped in pilot mode. That concern is real. Excessive legal caution can freeze useful products before they reach scale, especially when issuers are trying to tokenize assets with clear economic value but unclear regulatory fit.

But that counter-argument fails on the central issue: efficiency does not change legal substance. A faster settlement system is still a settlement system. A programmable transfer restriction is still a transfer restriction. Regulators are not rejecting tokenization; they are rejecting the idea that tokenization creates a new asset class outside existing law. The practical limit is simple: where the asset is regulated today, the token will be regulated tomorrow. Innovation survives only when it is built around that fact, not against it.

What to do with this

If you are an engineer, PM, or founder, stop treating tokenization as a product decision and start treating it as a legal architecture decision. Before you choose a chain, choose the asset classification. Before you write the smart contract, define the rights the token carries, the transfer restrictions it must enforce, the parties who can hold it, and the compliance obligations that attach at issuance, custody, and secondary transfer. Build the system so that law is encoded in the workflow, not patched on later. In the US, that is the difference between a viable tokenization business and a demo that never leaves the lab.
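One way to make that ordering concrete is a pre-build checklist that blocks contract work until the legal decisions are recorded. The sketch below is an assumption about how a team might do this, not a prescribed process: `TokenSpec`, `STAGES`, and `missing_decisions` are hypothetical names, and the fields mirror the items listed in the paragraph above.

```python
from dataclasses import dataclass, field
from typing import Optional

# The three points at which the article says obligations attach.
STAGES = ("issuance", "custody", "secondary_transfer")


@dataclass
class TokenSpec:
    asset_classification: Optional[str] = None
    rights: list = field(default_factory=list)
    # None means "not decided yet"; an empty list means "decided: none apply".
    transfer_restrictions: Optional[list] = None
    eligible_holders: list = field(default_factory=list)
    obligations: dict = field(default_factory=dict)  # stage -> obligations


def missing_decisions(spec: TokenSpec) -> list:
    """List the decisions still open; an empty list means ready to build."""
    missing = []
    if not spec.asset_classification:
        missing.append("asset_classification")
    if not spec.rights:
        missing.append("rights")
    if spec.transfer_restrictions is None:
        missing.append("transfer_restrictions")
    if not spec.eligible_holders:
        missing.append("eligible_holders")
    for stage in STAGES:
        if stage not in spec.obligations:
            missing.append(f"obligations:{stage}")
    return missing


# A spec with only the classification filled in still has six open items.
draft = TokenSpec(asset_classification="security")
print(missing_decisions(draft))
```

A team could run a check like this in its planning workflow and refuse to start chain selection or contract design until the list comes back empty. The values that fill the spec are legal determinations and would come from counsel.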
