Why frontend teams should stop treating AI as a rewrite machine

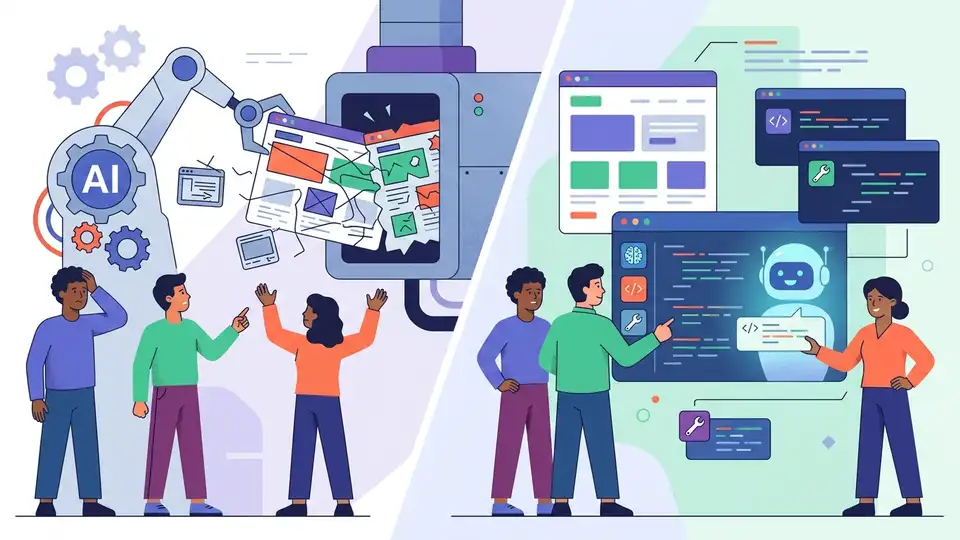

Frontend teams should stop using AI assistants as broad refactoring engines and start using them as constrained editors, because the real gains now come from precision, not wholesale rewrites.


The evidence is already visible in the tools people are shipping and the failures they are documenting. A Habr digest covers wasm-xlsxwriter generating Excel files in the browser, Cloudflare’s Agent Readiness Score for AI-facing sites, and automated E2E test generation, yet one of its most useful AI findings is not about bigger models or fancier agents. It is about a smaller, harsher truth: code assistants often over-edit. The digest cites a study that measured this “excessive editing” on 400 BigCodeBench tasks and found that a prompt such as “keep the original code” reduces unnecessary churn across most models. That is the pattern worth noticing. Teams do not need AI to rewrite stable code into a fresh shape. They need it to make the smallest correct change, preserve intent, and avoid turning a one-line fix into a new bug.

First argument: rewriting destroys the thing frontend code depends on most, which is local predictability


Frontend systems are full of code that looks trivial until a tool rewrites it. A component may be only 40 lines long, but those lines often encode layout behavior, accessibility hooks, event ordering, and framework-specific assumptions. When an assistant replaces a narrow patch with a broader refactor, it changes more than syntax. It changes the mental model the team uses to reason about the UI. The Habr digest’s note about chain calls in JavaScript points in the same direction: once a chain gets past two or three steps, readability and debugging suffer. AI-driven rewrites cause the same damage at scale. They spread the change across more lines, more branches, and more names, which makes the resulting diff harder to review than the bug it was meant to fix.
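
To make the chain-call point concrete, here is a small TypeScript sketch. The cart type and both function names are hypothetical, invented for illustration. The two functions compute the same total, but the second names its intermediate values, so a breakpoint, a log line, or a reviewer can inspect each step on its own.

```typescript
// Hypothetical cart line type, used only for this illustration.
interface CartLine {
  sku: string;
  qty: number;
  unitPriceCents: number;
  giftWrap: boolean;
}

// Four chained steps in one expression: the intermediate arrays are never
// named, so there is no obvious place to inspect them while debugging.
function giftWrapTotalChained(lines: CartLine[]): number {
  return lines
    .filter((l) => l.qty > 0)
    .filter((l) => l.giftWrap)
    .map((l) => l.qty * l.unitPriceCents)
    .reduce((sum, cents) => sum + cents, 0);
}

// Same logic with named intermediate values: each one can be logged or
// inspected, and a diff shows exactly which step changed.
function giftWrapTotalNamed(lines: CartLine[]): number {
  const wrapped = lines.filter((l) => l.qty > 0 && l.giftWrap);
  const lineTotals = wrapped.map((l) => l.qty * l.unitPriceCents);
  return lineTotals.reduce((sum, cents) => sum + cents, 0);
}
```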

The study cited in the digest is especially important because it did not rely on vibes. It measured token-level Levenshtein distance and cognitive complexity on 400 tasks. That matters because frontend teams live and die by diff quality. A small fix to a button state, a validation rule, or a hook dependency should not come back as a renamed helper, a reordered guard, and three new null checks. The digest also notes that models with reasoning did better at following the “preserve the original code” instruction. That is the right lesson: reasoning is useful when it helps the model stay inside the boundaries of the task. It is not a license to expand the task.
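
For readers who want to see what “token-level Levenshtein distance” measures, here is a minimal TypeScript sketch. The tokenizer is an assumption (the digest does not say how the study split code into tokens), and the helper names are hypothetical. It scores a minimal fix to a button’s disabled state against an over-edited version of the same line.

```typescript
// Rough tokenizer, an assumption for this sketch: identifiers, numbers, and
// single non-whitespace characters (so "||" becomes two "|" tokens).
function tokenize(source: string): string[] {
  return source.match(/[A-Za-z_$][\w$]*|\d+(?:\.\d+)?|\S/g) ?? [];
}

// Standard dynamic-programming Levenshtein distance over token arrays,
// keeping only two rows of the table in memory.
function tokenLevenshtein(a: string[], b: string[]): number {
  let prev: number[] = Array.from({ length: b.length + 1 }, (_, j) => j);
  for (let i = 1; i <= a.length; i++) {
    const curr: number[] = [i];
    for (let j = 1; j <= b.length; j++) {
      const cost = a[i - 1] === b[j - 1] ? 0 : 1;
      curr[j] = Math.min(
        prev[j] + 1, // delete a[i - 1]
        curr[j - 1] + 1, // insert b[j - 1]
        prev[j - 1] + cost, // substitute, or keep when tokens match
      );
    }
    prev = curr;
  }
  return prev[b.length];
}

// Hypothetical helper: token edit distance between two versions of a snippet.
function tokenEditDistance(before: string, after: string): number {
  return tokenLevenshtein(tokenize(before), tokenize(after));
}

const original = "const disabled = isLoading || !isValid;";
const minimalFix = "const disabled = isLoading || !isValid || isSubmitting;";
const overEdit =
  "const isButtonDisabled = Boolean(isSubmitting || isLoading || !formIsValid);";

console.log(tokenEditDistance(original, minimalFix)); // 3
console.log(tokenEditDistance(original, overEdit)); // 8
```

With this tokenizer, the minimal fix costs three inserted tokens, while the over-edit costs eight because of the rename, the wrapper call, and the reordered guard, even though both implement the same fix. A production metric would likely want a language-aware tokenizer; this sketch only shows the shape of the measurement.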

Second argument: the best AI use cases in frontend are already constrained, and they outperform open-ended generation

Look at the other stories in the digest. wasm-xlsxwriter is valuable because it moves a specific server-side job into WebAssembly and keeps the boundary clear: generate .xlsx files in the browser and in Node.js, nothing more. The same logic applies to AI in frontend work. The strongest cases are the ones with tight inputs, tight outputs, and measurable success criteria. Cloudflare’s Agent Readiness Score is a good example of this mindset. It checks discoverability, content negotiation, access control, and capabilities against concrete standards such as robots.txt, Markdown fallback, and API catalog support. It does not ask a model to “improve the site.” It asks a system to verify specific properties. That is the right shape for AI-assisted engineering too.
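
A minimal sketch of that verification shape, assuming the relevant responses have already been fetched into a snapshot object. The snapshot fields and the two checks are hypothetical illustrations, not Cloudflare’s actual checks or schema. Each check asserts one concrete, testable property instead of asking a model to “improve” anything.

```typescript
// Hypothetical snapshot of what a checker already fetched from a site.
// Field names are illustrative, not Cloudflare's schema.
interface SiteSnapshot {
  robotsTxt: string | null; // body of /robots.txt, or null if it was missing
  markdownContentType: string | null; // Content-Type returned for Accept: text/markdown
}

interface CheckResult {
  name: string;
  passed: boolean;
}

// Each check verifies exactly one property and reports pass/fail.
const checks: Array<(s: SiteSnapshot) => CheckResult> = [
  (s) => ({
    name: "robots.txt present with a User-agent line",
    passed: s.robotsTxt !== null && /^user-agent:/im.test(s.robotsTxt),
  }),
  (s) => ({
    name: "Markdown served when requested",
    passed:
      s.markdownContentType?.toLowerCase().startsWith("text/markdown") ?? false,
  }),
];

function runChecks(snapshot: SiteSnapshot): CheckResult[] {
  return checks.map((check) => check(snapshot));
}
```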

There is also a performance argument. The digest mentions Cloudflare’s own documentation changes: after the docs were adjusted to the new standards, an agent running Kimi-k2.5 through OpenCode answered technical questions 66% faster and used 31% fewer tokens. That is a clean example of leverage coming from structure, not from freeform generation. When the input space is organized, agents become cheaper and more accurate. When the input space is messy, they answer with unearned confidence and produce sprawling edits. Frontend teams should copy the structural approach: constrain the task, define the expected output, and measure the result. AI belongs in code review assistance, test generation, docs migration, and small mechanical edits. It does not belong in broad “make this better” prompts that invite redesign by accident.
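
One way to “measure the result” for small mechanical edits is an edit budget on the diff an assistant proposes. The sketch below is a hypothetical guard, not a tool mentioned in the digest: it counts added and removed lines in a unified diff and rejects patches that exceed a team-chosen limit.

```typescript
interface DiffStats {
  added: number;
  removed: number;
}

// Counts changed lines in a unified diff. File headers ("---", "+++") are
// skipped; hunk headers ("@@") and context lines (" ") are not counted.
// Simplification: a removed line whose content starts with "--" would also
// be skipped, which is acceptable for a rough budget check.
function diffStats(unifiedDiff: string): DiffStats {
  let added = 0;
  let removed = 0;
  for (const line of unifiedDiff.split("\n")) {
    if (line.startsWith("+++") || line.startsWith("---")) continue;
    if (line.startsWith("+")) added++;
    else if (line.startsWith("-")) removed++;
  }
  return { added, removed };
}

// Hypothetical budget for an "edit mode" task; the limit is a placeholder.
interface EditBudget {
  maxChangedLines: number;
}

function withinBudget(unifiedDiff: string, budget: EditBudget): boolean {
  const { added, removed } = diffStats(unifiedDiff);
  return added + removed <= budget.maxChangedLines;
}
```

The limit is a placeholder a team would tune per task type. A diff that fails the check is a signal to ask for a narrower patch, or to reclassify the work as a refactor ticket.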

The counter-argument

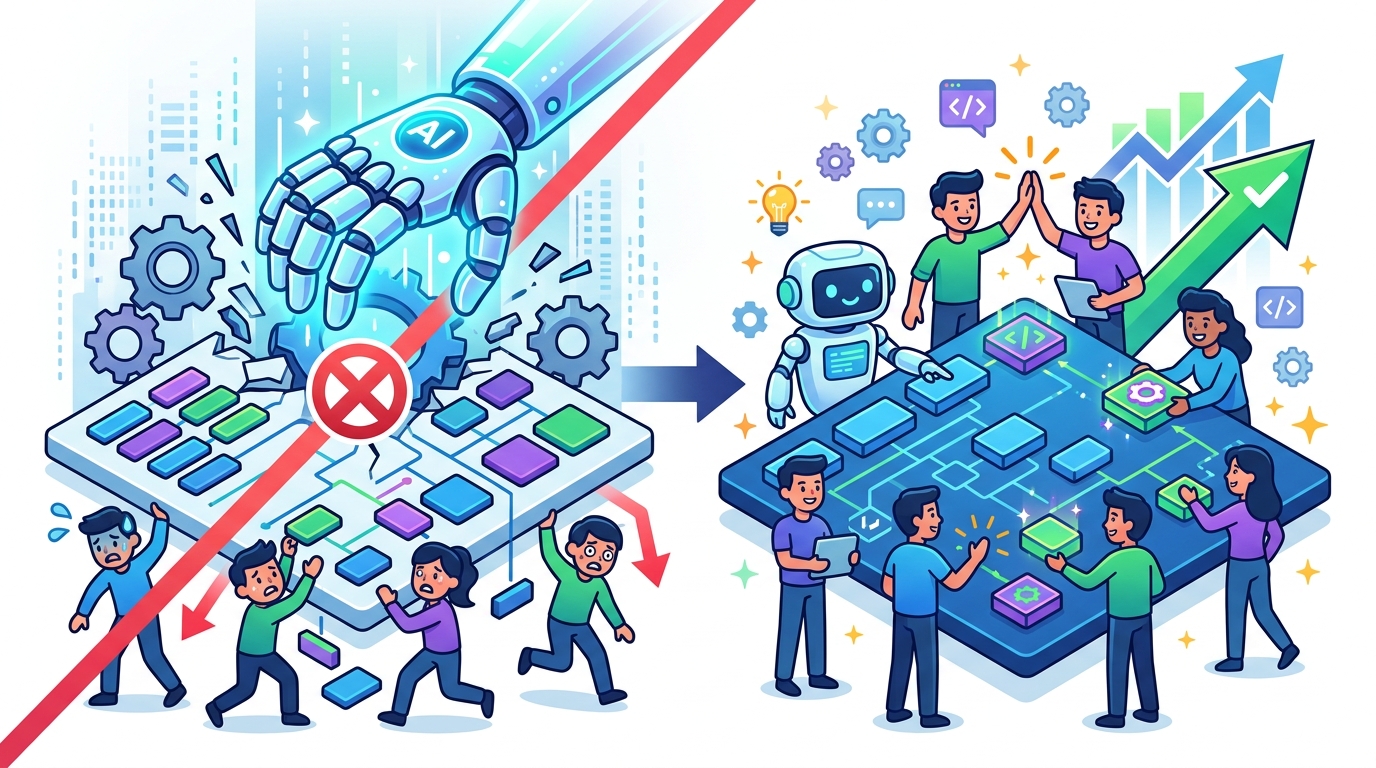

The strongest case for AI as a rewrite engine is speed. A senior engineer can often read a component, identify a cleaner architecture, and apply it in one pass. A good assistant can imitate that pattern and save time on repetitive work. In large frontend codebases, there are plenty of places where the current code is already brittle, duplicated, or over-coupled. If a tool rewrites a module into a cleaner shape, the argument goes, the team gets better code and faster delivery in one move.

That argument is real, and it is strongest when the code is isolated, the tests are strong, and the team already plans a refactor. It is weakest in day-to-day product work, where the primary goal is not elegance but safe change. The digest’s evidence cuts against the rewrite-first mindset: broad edits increase cognitive load, while explicit preservation instructions reduce churn. The right limit is simple. Use AI to propose refactors, not to perform them blindly. Accept larger rewrites only when the change is already scoped as a refactor ticket, with tests in place and review time set aside. For ordinary frontend fixes, the assistant must stay in edit mode, not transformation mode.

What to do with this

If you are an engineer, change your prompts and your review habits now. Ask the model to preserve structure, avoid renaming unless necessary, and touch the smallest possible surface area. If you are a PM, stop measuring AI success by how dramatic the output looks and start measuring it by escaped defects, review time, and rollback rate. If you are a founder, invest in workflow integration over demo-friendly magic. The winning AI tools in frontend will look less like autonomous rewrite bots and more like disciplined assistants that respect existing code, existing standards, and existing intent. That is how they reduce risk instead of manufacturing it.
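
As a starting point for those prompt changes, here is a hypothetical TypeScript helper that wraps a task in the constraints above. The exact wording is an assumption, not the prompt used in the study the digest cites.

```typescript
// Hypothetical helper: wraps a task and its code in "edit mode" constraints.
// The wording is illustrative, not a validated or study-sourced prompt.
function constrainedEditPrompt(task: string, code: string): string {
  return [
    "Make the smallest change that completes the task below.",
    "Keep the original code: preserve structure, formatting, and ordering.",
    "Do not rename identifiers unless the task requires it.",
    "Do not refactor, reformat, or add unrelated checks.",
    "Return only the modified code.",
    "",
    `Task: ${task}`,
    "",
    "Code:",
    code,
  ].join("\n");
}
```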
