Z.ai’s GLM-5V-Turbo Beats Claude on Design2Code

Z.ai’s GLM-5V-Turbo scores 94.8 on Design2Code, tops Claude Opus 4.6, and trains entirely on Huawei Ascend chips.

Z.ai just put a very specific number on a very specific bet: 94.8. That is the Design2Code score for GLM-5V-Turbo, its new native multimodal vision coding model, and it lands well above Claude Opus 4.6 at 77.3. For anyone building UI agents, screenshot-to-code tools, or design automation workflows, that gap is the part that matters.

The model also arrives with a technical detail that makes it more interesting than a standard benchmark flex: Z.ai says GLM-5V-Turbo was trained entirely on Huawei Ascend chips using MindSpore, with zero NVIDIA dependency. That matters for supply chain strategy, model economics, and the broader AI hardware race.

A vision model built for code, not just chat

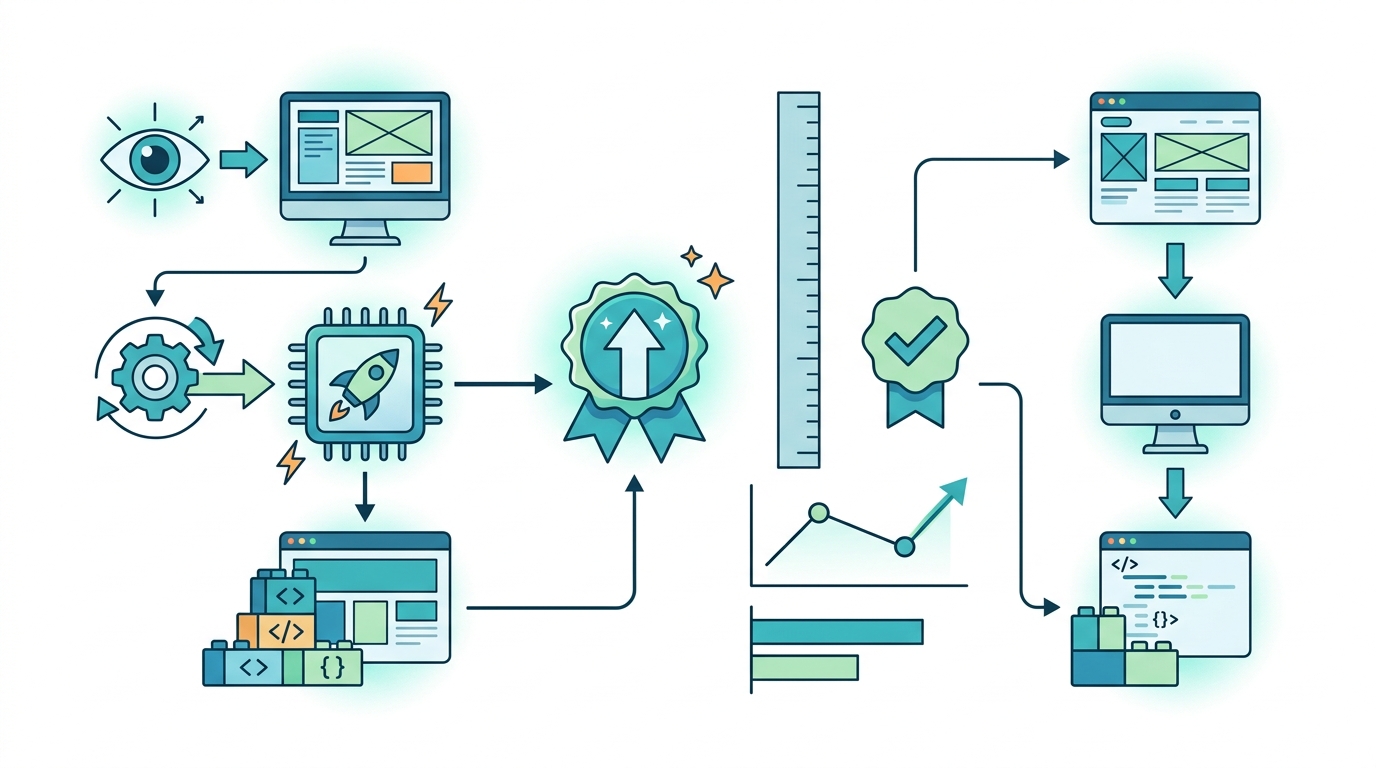

GLM-5V-Turbo is not a general chatbot with image support bolted on later. Z.ai built it for vision-to-code work and GUI agent tasks from the start. The model takes screenshots, design drafts, document layouts, images, and video frames, then turns that visual input into code or interface actions.
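Here is what "screenshot in, code out" can look like from the client side: a minimal Python sketch that packages one screenshot and one instruction into a chat request. It assumes an OpenAI-style chat-completions message format with a base64 `image_url` content part. The model identifier `glm-5v-turbo` and the payload shape are assumptions for illustration, not taken from Z.ai’s documentation. The sketch only builds the request and does not send it.

```python
import base64
import json


def build_screenshot_to_code_request(png_bytes: bytes, instructions: str,
                                     model: str = "glm-5v-turbo") -> dict:
    """Build a chat-style request pairing one screenshot with a coding instruction.

    Assumption: the endpoint accepts OpenAI-style messages whose content is a
    list of parts, with images passed as base64 data URLs. The default model
    name is hypothetical; check the provider's docs for the real identifier.
    """
    data_url = "data:image/png;base64," + base64.b64encode(png_bytes).decode("ascii")
    return {
        "model": model,
        "messages": [
            {
                "role": "user",
                "content": [
                    {"type": "image_url", "image_url": {"url": data_url}},
                    {"type": "text", "text": instructions},
                ],
            }
        ],
    }


if __name__ == "__main__":
    # Placeholder bytes stand in for a real PNG read from disk.
    payload = build_screenshot_to_code_request(
        b"\x89PNG\r\n\x1a\n", "Recreate this layout as one HTML file with inline CSS."
    )
    print(json.dumps(payload, indent=2)[:200])
```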

Under the hood, GLM-5V-Turbo extends the GLM-5 family, which Z.ai describes as a 745-billion-parameter Mixture of Experts (MoE) model with 256 experts and about 44 billion active parameters per inference. The vision version adds CogViT, a new vision encoder that gives the model native visual understanding rather than relying on a fragile image wrapper.
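The idea behind a Mixture of Experts model is that a learned router sends each token to only a few experts, so most weights sit idle on any given step. The Python sketch below shows generic top-k gating over 256 experts. It is not Z.ai’s implementation, and `TOP_K = 8` is a hypothetical value because the article does not say how many experts fire per token. In typical MoE transformers the active-parameter count (here about 44B of 745B, roughly 6%) also includes always-on components such as attention and embedding weights, so it need not equal `TOP_K / 256`.

```python
import math

NUM_EXPERTS = 256  # expert count reported for GLM-5
TOP_K = 8          # hypothetical: experts activated per token (not stated in the article)


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def route(router_logits, k=TOP_K):
    """Return (expert_index, weight) pairs for the k highest-scoring experts.

    Weights are renormalized over the selected experts only.
    """
    top = sorted(range(len(router_logits)), key=lambda i: router_logits[i], reverse=True)[:k]
    weights = softmax([router_logits[i] for i in top])
    return list(zip(top, weights))


def moe_forward(x, experts, router_logits, k=TOP_K):
    """Weighted sum of the chosen experts' outputs; unselected experts never run."""
    return sum(w * experts[i](x) for i, w in route(router_logits, k))


if __name__ == "__main__":
    # Toy experts: expert i just scales its input by (i + 1).
    experts = [lambda x, s=i + 1: s * x for i in range(NUM_EXPERTS)]
    logits = [0.0] * NUM_EXPERTS
    logits[3], logits[10] = 2.0, 1.0  # pretend the router strongly prefers experts 3 and 10
    print(route(logits, k=2))
    print(moe_forward(1.0, experts, logits, k=2))
    print(f"Reported active share: {44 / 745:.1%}")
```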

The context window is large enough to matter in real work: 200K tokens in, 128K tokens out. That is the kind of capacity you want when a model has to inspect a long product spec, compare multiple screens, or trace a UI bug across a codebase and a screenshot.

- Design2Code score: 94.8

- Claude Opus 4.6 on the same benchmark: 77.3

- Model family: GLM-5

- Total parameters: 745B

- Experts: 256

- Active parameters per inference: about 44B

- Context window: 200K tokens

- Output window: 128K tokens
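As a rough planning aid for the long-context cases described above, the sketch below checks whether a bundle of inputs fits the reported limits of 200K input and 128K output tokens. The per-item token counts are caller-supplied estimates and are hypothetical in the example; in practice you would measure them with the provider’s tokenizer, and how screenshots are counted against the window is an assumption to verify.

```python
MAX_INPUT_TOKENS = 200_000   # reported input window
MAX_OUTPUT_TOKENS = 128_000  # reported output window


def check_budget(parts: dict[str, int], requested_output: int) -> tuple[int, bool]:
    """Sum estimated input tokens and report whether the request fits both limits."""
    total_input = sum(parts.values())
    fits = total_input <= MAX_INPUT_TOKENS and requested_output <= MAX_OUTPUT_TOKENS
    return total_input, fits


if __name__ == "__main__":
    # Hypothetical token estimates for tracing a UI bug across a spec, screens, and code.
    parts = {
        "product_spec": 60_000,
        "screenshot_before": 3_000,
        "screenshot_after": 3_000,
        "relevant_source_files": 90_000,
    }
    total, fits = check_budget(parts, requested_output=16_000)
    print(total, fits)
```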

Why the benchmark gap matters

Design2Code is a cleaner signal than a generic chat leaderboard because it checks whether a model can reproduce a visual design as functional code. That is the exact job many teams want an AI to do when they hand it a Figma mockup, a screenshot, or a rough wireframe. A 94.8 score suggests GLM-5V-Turbo is doing far more than describing pixels.

Z.ai also says the model leads on AndroidWorld and WebVoyager, two GUI-agent environments that test whether a model can operate software rather than just talk about it. It also posts strong results on CC-Bench-V2 and PinchBench.
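GUI-agent benchmarks like these exercise an observe, decide, act loop: capture the screen, let the model choose an action, execute it, and repeat until the task is done. The Python sketch below shows that loop with a toy environment and a scripted policy standing in for the model call. The `Action` vocabulary, `FakeLoginPage`, and `scripted_policy` are hypothetical and do not reflect the actual interfaces of AndroidWorld or WebVoyager.

```python
from dataclasses import dataclass


@dataclass
class Action:
    kind: str        # hypothetical vocabulary: "click", "type", "done", ...
    target: str = ""
    text: str = ""


def run_agent(env, policy, goal, max_steps=20):
    """Observe the screen, ask the policy for an action, execute it, repeat.

    Returns (succeeded, action_history). Stops when the policy says "done"
    or when max_steps is exhausted.
    """
    history = []
    for _ in range(max_steps):
        screenshot = env.screenshot()
        action = policy(goal, screenshot, history)
        history.append(action)
        if action.kind == "done":
            return True, history
        env.execute(action)
    return False, history


class FakeLoginPage:
    """Toy stand-in for a phone or browser; its 'screenshot' is just text."""

    def __init__(self):
        self.logged_in = False

    def screenshot(self):
        return "Welcome, dashboard" if self.logged_in else "Login button"

    def execute(self, action):
        if action.kind == "click" and action.target == "Login":
            self.logged_in = True


def scripted_policy(goal, screenshot, history):
    # Placeholder for the model call: a real agent would send the screenshot
    # and goal to the vision model and parse its chosen action.
    if "dashboard" in screenshot:
        return Action("done")
    return Action("click", target="Login")


if __name__ == "__main__":
    ok, steps = run_agent(FakeLoginPage(), scripted_policy, goal="log in")
    print(ok, [a.kind for a in steps])
```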

“The future of AI will be about systems that can act, not just answer.” — Satya Nadella

That quote from Microsoft CEO Satya Nadella keeps showing up in product strategy discussions for a reason. The market is moving from text-only assistants toward agents that can inspect interfaces, click through workflows, and output usable code. GLM-5V-Turbo is aimed squarely at that shift.

There is an important caveat, though. Z.ai’s own positioning suggests Claude still holds an edge in pure text coding tasks such as backend development and repository exploration. So this is not a blanket win. It is a targeted win in the vision-to-code pipeline: screenshot in, working interface out.

Pricing is part of the product strategy

Z.ai is pricing GLM-5V-Turbo aggressively. The API is listed at $1.20 per million input tokens and $4.00 per million output tokens, with cached reads at $0.24 per million. That puts the model roughly 60% to 80% below Western alternatives for comparable workloads, depending on the task mix and cache use.

For teams running agent loops, that pricing difference matters more than model spec sheets. GUI agents burn tokens fast because they inspect screens, reason about state, call tools, and revise outputs. A model that is cheaper by a wide margin can be used more freely in production, especially when every iteration involves multiple screenshots and tool calls.
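To see how the listed rates add up across an agent loop, here is a small Python cost estimator. It assumes that cached tokens are the portion of each step’s input billed at the $0.24 cached-read rate instead of the $1.20 input rate. The loop shape in the example (steps, tokens per step, cache-hit rate) is hypothetical, and actual billing rules should be checked against Z.ai’s pricing page.

```python
# Reported GLM-5V-Turbo API prices, USD per million tokens.
INPUT_PER_M = 1.20
OUTPUT_PER_M = 4.00
CACHED_PER_M = 0.24


def request_cost(input_tokens: int, output_tokens: int, cached_tokens: int = 0) -> float:
    """Cost of one call; cached_tokens is assumed to be a subset of input_tokens."""
    if not 0 <= cached_tokens <= input_tokens:
        raise ValueError("cached_tokens must be between 0 and input_tokens")
    fresh = input_tokens - cached_tokens
    return (fresh * INPUT_PER_M + cached_tokens * CACHED_PER_M
            + output_tokens * OUTPUT_PER_M) / 1_000_000


def agent_loop_cost(steps: int, input_per_step: int, output_per_step: int,
                    cache_hit_rate: float = 0.0) -> float:
    """Total cost of an agent loop that resends a similar context on every step."""
    cached = int(input_per_step * cache_hit_rate)
    return steps * request_cost(input_per_step, output_per_step, cached)


if __name__ == "__main__":
    # Hypothetical loop: 20 steps, 30K input tokens (screenshots + state) and 1.5K output per step.
    print(f"no cache:  ${agent_loop_cost(20, 30_000, 1_500):.3f}")
    print(f"50% cache: ${agent_loop_cost(20, 30_000, 1_500, cache_hit_rate=0.5):.3f}")
```

With these made-up numbers the loop costs about $0.84 without caching and about $0.55 when half of each step’s input is served from cache, which shows why cache use weighs so heavily in the effective price.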

OpenRouter’s early telemetry adds another useful signal. GLM-5V-Turbo reportedly logged about 59,000 requests in its first two days, along with 63.6 million reasoning tokens and 18,900 tool function calls. That looks less like hobbyist traffic and more like real developer experimentation.

- Input pricing: $1.20 per million tokens

- Output pricing: $4.00 per million tokens

- Cached reads: $0.24 per million tokens

- Reported early requests on OpenRouter: about 59,000 in two days

- Reasoning tokens: 63.6 million

- Tool function calls: 18,900
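Dividing the reported OpenRouter figures gives a rough per-request picture. This is simple averaging over approximate totals, so treat the results as ballpark numbers. The dollar figure additionally assumes, without confirmation from the source, that reasoning tokens are billed at the $4.00 output rate.

```python
requests = 59_000               # reported, approximate
reasoning_tokens = 63_600_000   # reported
tool_calls = 18_900             # reported
OUTPUT_PER_M = 4.00             # listed output price, USD per million tokens

reasoning_per_request = reasoning_tokens / requests   # about 1,078 tokens
tool_calls_per_request = tool_calls / requests        # about 0.32 calls
# Assumption: reasoning tokens are billed like output tokens.
reasoning_cost_usd = reasoning_tokens / 1_000_000 * OUTPUT_PER_M  # about $254

print(f"{reasoning_per_request:,.0f} reasoning tokens per request")
print(f"{tool_calls_per_request:.2f} tool calls per request")
print(f"${reasoning_cost_usd:,.2f} if billed at the output rate")
```

Roughly one tool call for every three requests suggests a meaningful share of early traffic exercised tool use, though two-day averages hide a lot of variation between users.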

What this says about Z.ai’s strategy

Z.ai is not trying to beat every frontier model at every task. It is picking a lane where visual reasoning, interface manipulation, and code generation overlap. That is a smart move because the developer market is fragmenting into specialized workflows: one model for long-context text, another for code review, another for UI reconstruction, another for agentic automation.

The hardware story matters too. Training on Ascend chips gives Z.ai a clean narrative around domestic infrastructure and less dependence on U.S. GPU supply. It also signals that serious frontier-style training is no longer limited to NVIDIA-based stacks. If the company can keep quality high while staying on that hardware path, it will have a supply and cost advantage that competitors cannot ignore.

There is also the business backdrop. Z.ai completed its Hong Kong IPO in January 2026 and raised about $558 million. That kind of capital gives a company room to spend on training runs, inference infrastructure, and product distribution at the same time.

For developers, the practical question is simple: does your workflow start with a screenshot, a mockup, a document, or a browser? If yes, GLM-5V-Turbo looks worth testing. If your day is mostly backend logic, codebase spelunking, and text-heavy refactors, Claude and other general-purpose models may still be the better fit.
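That rule of thumb amounts to a simple router keyed on how a task starts. The Python sketch below encodes it. The model identifiers are hypothetical placeholders, and a production router would likely also weigh cost, latency, and evaluation results on your own tasks.

```python
# Hypothetical model identifiers; replace with the names your provider or gateway uses.
VISION_CODER = "glm-5v-turbo"
TEXT_CODER = "claude-opus-4.6"

# Task starting points the article associates with GLM-5V-Turbo.
VISUAL_STARTS = {"screenshot", "mockup", "document", "browser"}


def pick_model(task_inputs) -> str:
    """Send tasks that begin from visual input to the vision coder, everything else to the text coder."""
    return VISION_CODER if VISUAL_STARTS & set(task_inputs) else TEXT_CODER


if __name__ == "__main__":
    print(pick_model(["mockup", "style_guide"]))       # vision-to-code job
    print(pick_model(["backend_repo", "bug_report"]))  # text-heavy job
```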

Conclusion: the niche is the point

GLM-5V-Turbo is a reminder that AI progress is getting more specialized, not less. The model’s 94.8 Design2Code score, Ascend-only training stack, and low token pricing point to a very specific product thesis: win the workflows where visual input turns into code and agent actions.

The next question is whether Z.ai can turn that benchmark lead into sticky developer habits. If the model keeps its edge in real UI work, expect more teams to route design-to-code and browser automation jobs to GLM-5V-Turbo while keeping other models for text-heavy reasoning. That split could become the default in AI engineering shops much sooner than people expect.