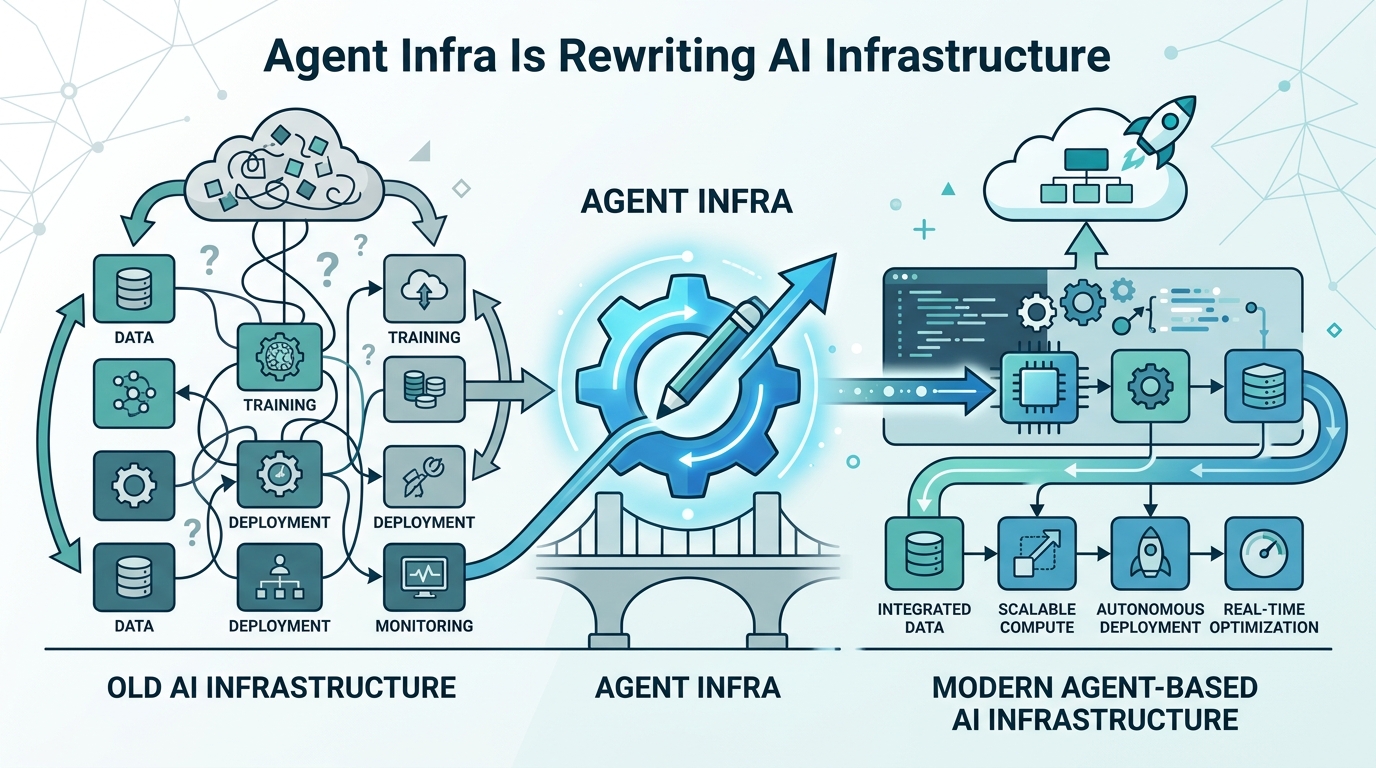

Agent Infra Is Rewriting AI Infrastructure

SWE-agent, Anthropic, and MCP show why agent performance now depends on interfaces, state, and scheduling, not model size alone.

In 2024, SWE-agent made a sharp point: agent performance is not just a function of model quality. The way an agent talks to its tools, remembers state, and gets scheduled across tasks can change results substantially. Then Anthropic pushed that idea further with the Model Context Protocol (MCP), which turned tool connectivity into an open standard instead of a one-off integration problem.

That shift matters because “AI infrastructure” is no longer just about serving tokens cheaply. The stack is being rewritten around agents that act, call tools, keep context, and coordinate work across systems. If you are building products, the next bottleneck is often the agent layer itself.

Why 2024 changed the agent conversation

The old framing treated the model as the center of gravity. Bigger models, more parameters, better benchmarks, end of story. But agentic systems exposed a different reality: once a model can plan, call APIs, inspect files, and retry actions, the surrounding infrastructure starts to matter as much as the model.

SWE-agent became one of the clearest public demonstrations of this. It showed that software engineering tasks depend heavily on the agent-computer interface: the exact prompts, tool formats, and feedback loops affect whether the agent can complete a task at all.

Anthropic’s public guidance on agents made the same point from a product angle. In production, the most reliable systems tend to use simple, composable patterns. That is a pretty direct warning against building giant, magical agent frameworks before you have a reason to.

- Agent quality depends on model capability plus interface design.

- Tool use changes the infra problem from pure inference to systems orchestration.

- Production reliability improves when agent logic stays small and composable.

- Open standards matter once multiple tools and vendors enter the stack.
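To make the interface-design point concrete, here is a minimal Python sketch of one tool an agent might call: a file viewer that returns a bounded, line-numbered window instead of dumping a whole file into context. The function name `view_window` and its output format are illustrative assumptions, not SWE-agent's actual commands.

```python
from typing import List


def view_window(lines: List[str], start: int, window: int = 5) -> str:
    """Return a bounded, line-numbered slice of a file as an agent observation.

    Hypothetical tool: the header and footer tell the model where it is
    and how much content it has not seen yet.
    """
    # Clamp the 1-based start line into the valid range.
    start = max(1, min(start, max(len(lines), 1)))
    end = min(start + window - 1, len(lines))
    body = [f"{n}: {lines[n - 1]}" for n in range(start, end + 1)]
    header = f"[showing lines {start}-{end} of {len(lines)}]"
    if end < len(lines):
        footer = f"[{len(lines) - end} more lines below]"
    else:
        footer = "[end of file]"
    return "\n".join([header, *body, footer])


# Usage: a 12-line file, viewed three lines at a time from line 4.
source = [f"line {i}" for i in range(1, 13)]
observation = view_window(source, start=4, window=3)
print(observation)
```

The design choice is that the tool, not the model, decides how much text comes back. A fixed window keeps each observation small and predictable, which is the kind of feedback-loop detail the bullet on interface design refers to.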

Serving is no longer the whole story

Classic AI infrastructure focused on serving: model hosts, batching, quantization, latency control, and GPU utilization. Those are still important, but agents add a second layer of demand. Now the system has to handle tool calls, state updates, retries, branching plans, and sometimes multiple concurrent workers.

That creates a different set of tradeoffs. A fast model is useful, but a fast model with poor tool routing can still fail. A cheap inference stack is useful, but a cheap stack that loses state between steps can waste more money in retries than it saves in compute.

This is why agent infra is starting to look like a blend of runtime, workflow engine, and database coordination layer. The model is one component. The agent system around it is the product.

Some of the most important design questions now look like this:

- How often should the agent checkpoint state?

- Which actions should happen synchronously versus in the background?

- How do you retry a failed tool call without duplicating side effects?

- What should be cached, and what must always be recomputed?
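The retry question above is easier to reason about with a small sketch. The following Python example assumes a hypothetical `IdempotentToolRunner` that records completed tool calls under an idempotency key, so a repeated request for the same logical action returns the stored result instead of running the side effect again. The class, the key format, and the in-memory store are all illustrative assumptions.

```python
import time
from typing import Callable, Dict


class IdempotentToolRunner:
    """Hypothetical helper: retry failed tool calls, never repeat completed ones."""

    def __init__(self) -> None:
        # Assumption: an in-memory dict stands in for a durable store.
        self._results: Dict[str, object] = {}

    def run(
        self,
        key: str,
        tool: Callable[[], object],
        max_attempts: int = 3,
        base_delay: float = 0.5,
    ) -> object:
        # A completed call is returned from the store, not executed again.
        if key in self._results:
            return self._results[key]
        last_error = None
        for attempt in range(max_attempts):
            try:
                result = tool()
            except Exception as exc:
                last_error = exc
                if attempt < max_attempts - 1:
                    # Exponential backoff between attempts.
                    time.sleep(base_delay * (2 ** attempt))
                continue
            self._results[key] = result
            return result
        raise RuntimeError(
            f"tool call {key!r} failed after {max_attempts} attempts"
        ) from last_error


# Usage: a tool that fails once, then succeeds.
calls = {"count": 0}


def flaky_send_email() -> str:
    calls["count"] += 1
    if calls["count"] == 1:
        raise ConnectionError("transient failure")
    return "sent"


runner = IdempotentToolRunner()
first = runner.run("task-42:send-email", flaky_send_email, base_delay=0.0)
second = runner.run("task-42:send-email", flaky_send_email, base_delay=0.0)
print(first, second, calls["count"])
```

This only covers failures that happen before the side effect lands. If a tool can fail after it has already acted, the downstream service also needs to honor the same key, which this sketch does not attempt.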

Anthropic’s MCP moved tools toward a standard

When Anthropic introduced MCP in November 2024, the important part was not branding. It was the attempt to standardize how agents connect to tools and external data. That matters because every custom connector adds friction: different schemas, different auth flows, different tool descriptions, different failure modes.

MCP gives developers a cleaner way to expose capabilities to agents. Instead of wiring every app into every model with bespoke glue, teams can define a protocol layer that multiple clients can understand. That is a big deal for companies that want portability across models and vendors.

It also changes the economics of integration. If your internal tools can speak a shared protocol, you spend less time rewriting adapters and more time improving the actual agent behavior. That is the kind of boring infrastructure work that usually decides whether a system scales beyond a demo.
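As a rough illustration of what a shared protocol layer buys you, the Python sketch below registers one tool with a name, description, and JSON Schema for its inputs, then serves list and call requests over JSON-RPC-style messages. It is loosely modeled on the message shapes MCP uses, but it is not the MCP SDK: the registry, the `lookup_ticket` tool, and the exact field names should be treated as assumptions and checked against the specification.

```python
import json
from typing import Any, Callable, Dict

# Hypothetical in-process registry: tool name -> descriptor and handler.
TOOLS: Dict[str, Dict[str, Any]] = {}


def register_tool(
    name: str,
    description: str,
    input_schema: Dict[str, Any],
    handler: Callable[..., Any],
) -> None:
    descriptor = {
        "name": name,
        "description": description,
        "inputSchema": input_schema,
    }
    TOOLS[name] = {"descriptor": descriptor, "handler": handler}


def _error(req_id: Any, code: int, message: str) -> str:
    error = {"code": code, "message": message}
    return json.dumps({"jsonrpc": "2.0", "id": req_id, "error": error})


def handle_request(raw: str) -> str:
    """Answer one JSON-RPC-style request with a JSON string."""
    req = json.loads(raw)
    req_id = req.get("id")
    method = req.get("method")
    if method == "tools/list":
        result = {"tools": [t["descriptor"] for t in TOOLS.values()]}
    elif method == "tools/call":
        params = req.get("params", {})
        tool = TOOLS.get(params.get("name"))
        if tool is None:
            # -32602 is the JSON-RPC "invalid params" code.
            return _error(req_id, -32602, "unknown tool")
        output = tool["handler"](**params.get("arguments", {}))
        result = {"content": [{"type": "text", "text": str(output)}]}
    else:
        # -32601 is the JSON-RPC "method not found" code.
        return _error(req_id, -32601, "method not found")
    return json.dumps({"jsonrpc": "2.0", "id": req_id, "result": result})


# Usage: expose one hypothetical internal tool, then list and call it.
register_tool(
    "lookup_ticket",
    "Return the status of a support ticket.",
    {
        "type": "object",
        "properties": {"ticket_id": {"type": "string"}},
        "required": ["ticket_id"],
    },
    lambda ticket_id: f"ticket {ticket_id} is open",
)

listing = json.loads(
    handle_request(json.dumps({"jsonrpc": "2.0", "id": 1, "method": "tools/list"}))
)
call = json.loads(
    handle_request(
        json.dumps(
            {
                "jsonrpc": "2.0",
                "id": 2,
                "method": "tools/call",
                "params": {
                    "name": "lookup_ticket",
                    "arguments": {"ticket_id": "T-7"},
                },
            }
        )
    )
)
print(listing["result"]["tools"][0]["name"])
print(call["result"]["content"][0]["text"])
```

The point of the sketch is the shape, not the transport: any client that understands the list and call messages can discover and use the tool without a custom adapter.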

“The future of AI is not about one model to rule them all. It is about many models, many tools, and a standard way for them to talk.” — Dario Amodei

That quote captures the direction of travel well. The center of value is shifting from model access alone to the connective tissue around it.

State and scheduling are becoming first-class problems

Once agents start doing real work, state stops being an implementation detail. You need to know what the agent has seen, what it already tried, which tool outputs are trustworthy, and where a task can safely resume after interruption. That pushes AI systems closer to distributed systems engineering.
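One simple way to make "where a task can safely resume" concrete is to checkpoint after every completed step. The Python sketch below is a minimal version under stated assumptions: steps are plain functions, state is JSON-serializable, and a local file stands in for whatever durable store a real system would use. The function name `run_with_checkpoints` is hypothetical.

```python
import json
import os
import tempfile
from typing import Callable, List


def run_with_checkpoints(steps: List[Callable[[dict], None]], path: str) -> dict:
    """Run steps in order, saving progress after each so a rerun can resume."""
    state = {"next_step": 0, "data": {}}
    if os.path.exists(path):
        # Resume from the last completed step instead of starting over.
        with open(path) as f:
            state = json.load(f)
    for index in range(state["next_step"], len(steps)):
        steps[index](state["data"])
        state["next_step"] = index + 1
        # Write to a temp file, then rename, so a crash mid-write
        # cannot leave a half-written checkpoint behind.
        tmp_path = path + ".tmp"
        with open(tmp_path, "w") as f:
            json.dump(state, f)
        os.replace(tmp_path, path)
    return state


# Usage: the second step is interrupted once, then the task is rerun.
log = []
crash = {"armed": True}


def fetch(data: dict) -> None:
    log.append("fetch")
    data["issue"] = "bug report"


def plan(data: dict) -> None:
    if crash["armed"]:
        crash["armed"] = False
        raise RuntimeError("simulated interruption")
    log.append("plan")
    data["plan"] = "patch " + data["issue"]


checkpoint_path = os.path.join(tempfile.mkdtemp(), "task.json")
try:
    run_with_checkpoints([fetch, plan], checkpoint_path)
except RuntimeError:
    pass  # the first run dies partway through

final = run_with_checkpoints([fetch, plan], checkpoint_path)
print(log, final["data"])
```

On the second run, `fetch` is skipped because its result is already in the checkpoint. Checkpointing after every step is the most conservative policy; a real system would trade checkpoint frequency against write cost.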

Scheduling matters for the same reason. If you run one agent per user request, you may get away with simple queues. If you run hundreds or thousands of long-lived agents, you need policies for priority, concurrency, memory pressure, and tool contention. The infra challenge shifts from “can the model answer?” to “can the system coordinate many partial actions without collapsing under its own complexity?”

That is why agent infra is starting to split into distinct layers:

- Serving: model inference, batching, latency, and cost control.

- State: memory, checkpoints, task history, and recovery.

- Scheduling: queues, worker allocation, retries, and parallel execution.

- Tooling: connectors, permissions, schemas, and protocol support.
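For the scheduling layer in the list above, a minimal Python sketch using the standard library's `asyncio.PriorityQueue` shows the two basic policies in play: priority ordering and a cap on concurrent workers. The task names, priorities, and the `run_scheduler` function are illustrative assumptions, and `asyncio.sleep` stands in for real agent work.

```python
import asyncio
from typing import List, Tuple


async def run_scheduler(
    tasks: List[Tuple[int, str, float]], max_workers: int
) -> List[str]:
    """Run (priority, name, duration) tasks; lower priority numbers go first.

    max_workers caps how many tasks run at the same time.
    """
    queue: asyncio.PriorityQueue = asyncio.PriorityQueue()
    for seq, (priority, name, duration) in enumerate(tasks):
        # seq breaks ties so equal priorities keep submission order.
        queue.put_nowait((priority, seq, name, duration))

    started: List[str] = []

    async def worker() -> None:
        while True:
            try:
                _priority, _seq, name, duration = queue.get_nowait()
            except asyncio.QueueEmpty:
                return  # nothing left to do
            started.append(name)
            await asyncio.sleep(duration)  # placeholder for agent work

    await asyncio.gather(*(worker() for _ in range(max_workers)))
    return started


# Usage: with one worker, start order follows priority exactly.
jobs = [
    (5, "reindex-docs", 0.01),
    (1, "user-request", 0.01),
    (3, "nightly-eval", 0.01),
]
order = asyncio.run(run_scheduler(jobs, max_workers=1))
print(order)
```

Raising `max_workers` lets several tasks overlap, at which point the start order still follows priority but completion order depends on task duration. Memory pressure and tool contention, which the text also mentions, would need additional limits that this sketch leaves out.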

These layers are already visible in the tooling ecosystem. LangGraph focuses on graph-based agent workflows, while OpenAI’s Agents SDK pushes a more structured way to build multi-step agent apps. Different opinions, same direction: the runtime around the model is becoming a product category on its own.

What this means for builders right now

If you are building agent products, the practical takeaway is simple: stop treating the model as the whole stack. The real wins now come from reducing coordination failures, clarifying tool contracts, and designing stateful workflows that can survive retries and interruptions.

That also means teams should be careful about overbuilding. A lot of agent demos look impressive because they chain together many abstractions. In production, each extra layer adds failure modes. The systems that last are usually the ones that keep the control flow understandable and the interfaces explicit.

My read is that agent infra will keep moving toward standard protocols, stateful runtimes, and scheduling systems that look more like distributed application infrastructure than chat app plumbing. The next question is not whether agents can call tools. It is which teams can make those tool calls reliable enough to trust with real work.

If you are deciding where to invest, look at the seams: state persistence, protocol support, task orchestration, and recovery after failure. That is where the next wave of differentiation will show up.
