A Better Way to Seed New LM Tokens

GTI grounds new vocabulary tokens before fine-tuning, aiming to preserve distinctions that mean initialization tends to collapse.

Language models keep getting stretched beyond their original vocabularies. This paper looks at what happens when you add new learnable tokens for generative recommendation, and argues that the usual “just initialize them to the mean” approach is leaving performance on the table. The paper’s core idea is simple: give new tokens a meaningful starting point in embedding space before supervised fine-tuning, so the model has something structured to build on.

For engineers working on recommendation systems or any LM setup that extends the vocabulary with domain-specific symbols, the practical takeaway is clear: initialization is not a throwaway detail. The authors argue it can become a bottleneck that affects how much the model can actually learn from the new tokens later.

What problem this paper is trying to fix

The setting here is generative recommendation, where language models are extended with new learnable vocabulary tokens such as Semantic-ID tokens. These tokens are meant to carry domain-specific meaning, but they do not come pretrained the way ordinary language tokens do. The standard workaround is to initialize them as the mean of existing vocabulary embeddings, then rely on supervised fine-tuning to teach them useful representations.
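As a concrete reference point, mean initialization can be sketched in a few lines of NumPy. The matrix `vocab`, the function name `mean_init`, and the sizes are hypothetical stand-ins, not taken from the paper; a real pipeline would operate on the model's actual embedding table.

```python
import numpy as np

def mean_init(embeddings: np.ndarray, num_new: int) -> np.ndarray:
    """Append num_new rows, each set to the mean of the existing rows."""
    mean_vec = embeddings.mean(axis=0, keepdims=True)   # shape (1, d)
    new_rows = np.repeat(mean_vec, num_new, axis=0)     # shape (num_new, d)
    return np.concatenate([embeddings, new_rows], axis=0)

rng = np.random.default_rng(0)
vocab = rng.normal(size=(1000, 64))    # stand-in for a pretrained embedding matrix
extended = mean_init(vocab, num_new=8)
new_tokens = extended[-8:]             # the rows that belong to the new tokens
```

Every row in `new_tokens` is the same vector, which is the starting condition the paper takes issue with.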

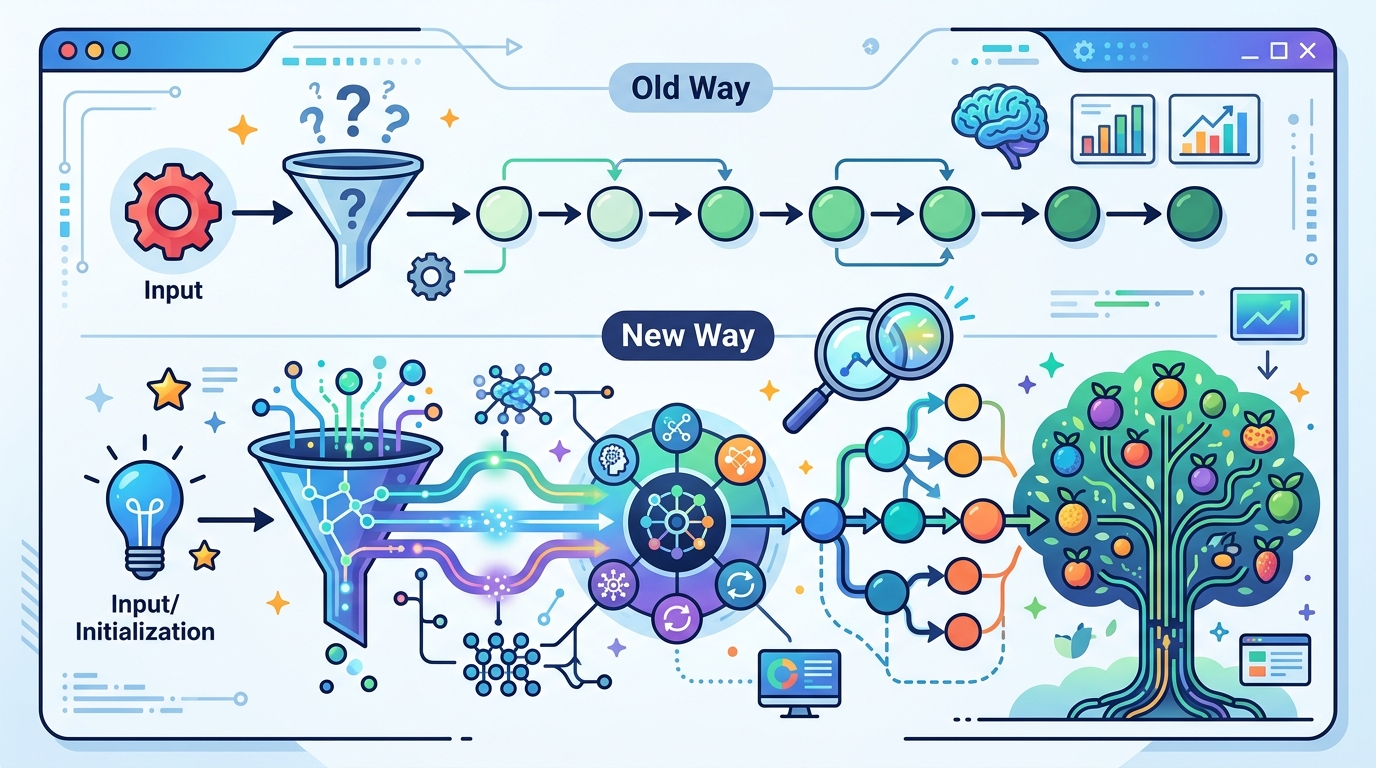

The paper claims that this common practice has a hidden cost. Using spectral and geometric diagnostics, the authors show that mean initialization collapses all new tokens into a degenerate subspace. Put simply, the new tokens start out nearly identical to one another, which erases distinctions the model needs in order to separate different items or concepts.

That matters because if the tokens begin life in nearly the same place, fine-tuning has to do extra work just to untangle them. The authors argue that later training struggles to fully recover the structure that was lost at initialization.
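The collapse can be illustrated with a simple spectral check. This is a minimal sketch, not the paper's actual diagnostic: `effective_rank` is a hypothetical helper that counts singular values above a relative tolerance, and the embedding matrix is random stand-in data.

```python
import numpy as np

def effective_rank(x: np.ndarray, tol: float = 1e-8) -> int:
    """Count singular values larger than tol times the largest one."""
    s = np.linalg.svd(x, compute_uv=False)   # singular values, descending
    if s[0] == 0:
        return 0
    return int((s / s[0] > tol).sum())

rng = np.random.default_rng(0)
vocab = rng.normal(size=(1000, 64))          # stand-in pretrained embeddings

# Eight new tokens, all set to the vocabulary mean.
mean_rows = np.repeat(vocab.mean(axis=0, keepdims=True), 8, axis=0)
# Eight distinct vectors for comparison (here simply existing rows).
distinct_rows = vocab[:8]

rank_mean = effective_rank(mean_rows)
rank_distinct = effective_rank(distinct_rows)
```

Identical rows span a single direction, so `rank_mean` comes out as 1, while eight independent vectors in 64 dimensions give a rank of 8.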

How the method works in plain English

To address this, the paper proposes the Grounded Token Initialization Hypothesis: if novel tokens are linguistically grounded in the pretrained embedding space before fine-tuning, the model can better reuse its general-purpose knowledge for the new domain. The idea is not to invent a new training regime from scratch, but to give the model a more informative starting map.

The implementation is called GTI, short for Grounded Token Initialization. It is described as a lightweight grounding stage that runs before fine-tuning and maps new tokens to distinct, semantically meaningful locations in the pretrained embedding space. It relies only on paired linguistic supervision.

That makes GTI conceptually different from the usual mean-based initialization. Instead of placing every new token at the same bland average point, GTI tries to position each one so that it already reflects some meaningful relationship to the pretrained vocabulary. In practice, that should give the model more structure to preserve and refine during downstream training.
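The abstract does not spell out how the grounding stage is computed, so the following is not GTI itself. It is a simpler stand-in that illustrates the general idea of grounding: each new token starts at the average pretrained embedding of the words in a text description paired with it. The names `description_init`, `token_to_id`, and the toy vocabulary are all assumptions made for this sketch.

```python
import numpy as np

def description_init(embeddings, token_to_id, descriptions):
    """Stand-in grounding: mean embedding of each description's known words.

    Falls back to the global vocabulary mean when no word is recognized.
    """
    fallback = embeddings.mean(axis=0)
    rows = []
    for text in descriptions:
        ids = [token_to_id[w] for w in text.lower().split() if w in token_to_id]
        rows.append(embeddings[ids].mean(axis=0) if ids else fallback)
    return np.stack(rows)

# Toy vocabulary and random stand-in embeddings (hypothetical data).
words = ["red", "running", "shoes", "wireless", "headphones"]
token_to_id = {w: i for i, w in enumerate(words)}
rng = np.random.default_rng(0)
emb = rng.normal(size=(len(words), 16))

# One description per new token, in the order the tokens are added.
descriptions = ["red running shoes", "wireless headphones"]
new_rows = description_init(emb, token_to_id, descriptions)
```

Unlike mean initialization, the two new rows land at different points, each tied to the pretrained words that describe it.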

What the paper actually shows

The paper’s main evidence comes from diagnostics and comparative evaluation, not from a single headline benchmark number. The abstract does not provide specific metric values, so there are no numbers to quote here. What it does say is that GTI outperforms both mean initialization and existing auxiliary-task adaptation methods in the majority of evaluation settings.

Those evaluations span multiple generative recommendation benchmarks, including both industry-scale and public datasets. That breadth matters because it suggests the effect is not tied to one narrow dataset or one carefully tuned setup. The paper is making a broader claim about vocabulary extension itself.

Beyond raw performance, the authors also analyze the internal geometry of the learned embeddings. They report that grounded embeddings produce richer inter-token structure that persists through fine-tuning. In other words, the benefit is not just that GTI starts in a better place; the structure it introduces seems to survive training rather than getting washed out immediately.

This is an important point for practitioners. If a method only helps at initialization but disappears after a few updates, it is mostly a curiosity. The paper argues GTI has a more durable effect, which supports the idea that the initialization stage is genuinely shaping downstream learning.
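One rough way to check whether inter-token structure exists, and whether it survives training, is to track the average pairwise cosine similarity among the new token embeddings at each checkpoint. This is an assumed diagnostic for illustration, not necessarily the one the authors use, and the data below is random.

```python
import numpy as np

def mean_pairwise_cosine(x: np.ndarray) -> float:
    """Average cosine similarity over all distinct pairs of rows."""
    unit = x / np.linalg.norm(x, axis=1, keepdims=True)
    sims = unit @ unit.T
    off_diag = sims[~np.eye(x.shape[0], dtype=bool)]   # drop self-similarity
    return float(off_diag.mean())

rng = np.random.default_rng(0)
vocab = rng.normal(size=(1000, 64))

collapsed = np.repeat(vocab.mean(axis=0, keepdims=True), 8, axis=0)  # mean init
spread = vocab[:8]                                                   # distinct vectors

sim_collapsed = mean_pairwise_cosine(collapsed)
sim_spread = mean_pairwise_cosine(spread)
```

A value near 1.0 means the tokens are effectively indistinguishable; a lower value means they occupy different directions. Computing the same number before and after fine-tuning would show whether the initial structure persists.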

Why developers should care

If you build systems that extend LMs with domain-specific tokens, this paper is a reminder that representation quality begins before the first supervised step. A weak initialization can force the model to spend capacity undoing a bad starting geometry instead of learning the task itself.

That is especially relevant in generative recommendation, where token semantics are not just labels but part of the model’s interface to items, entities, or catalog structure. When new tokens are too compressed or too similar, the model may have a harder time separating candidates and preserving fine-grained distinctions.

GTI is also attractive from an engineering perspective because the paper describes it as lightweight. It does not read like a heavyweight architecture change or a large auxiliary system bolted onto the training stack. Instead, it is a pre-fine-tuning grounding step that aims to improve the quality of the vocabulary extension itself.

- Mean initialization may be too lossy for new vocabulary tokens.

- Grounding tokens in pretrained embedding space can preserve useful distinctions.

- The effect appears to hold across multiple generative recommendation benchmarks.

- The paper does not give benchmark numbers in the abstract, so the exact gains are not available here.

Limits and open questions

The abstract gives a strong high-level story, but it leaves out the details developers would want before adopting the method. We do not get concrete benchmark values, training cost, or implementation complexity in the source provided here. We also do not see how sensitive GTI is to the quality of the paired linguistic supervision it relies on.

Another open question is whether the same approach transfers cleanly beyond generative recommendation. The paper’s motivation is clearly tied to Semantic-ID tokens and similar domain-specific vocabularies, so the generality to other LM extension problems is plausible but not demonstrated in the abstract.

Still, the paper’s broader message is useful: when you add new tokens to a pretrained LM, the initialization strategy is not just a minor setup choice. It can shape the geometry of the learned space, the separability of the new tokens, and how much the model can recover during fine-tuning.

For teams building LM-based recommendation stacks, that means vocabulary extension deserves the same care as any other model design choice. GTI is one more piece of evidence that the “small” details in embedding initialization can have outsized effects on the final system.

The paper, “Grounded Token Initialization for New Vocabulary in LMs for Generative Recommendation,” is therefore less about a flashy new architecture and more about fixing a foundational step that many pipelines probably take for granted.
