HPE’s new ProLiant gear targets edge AI

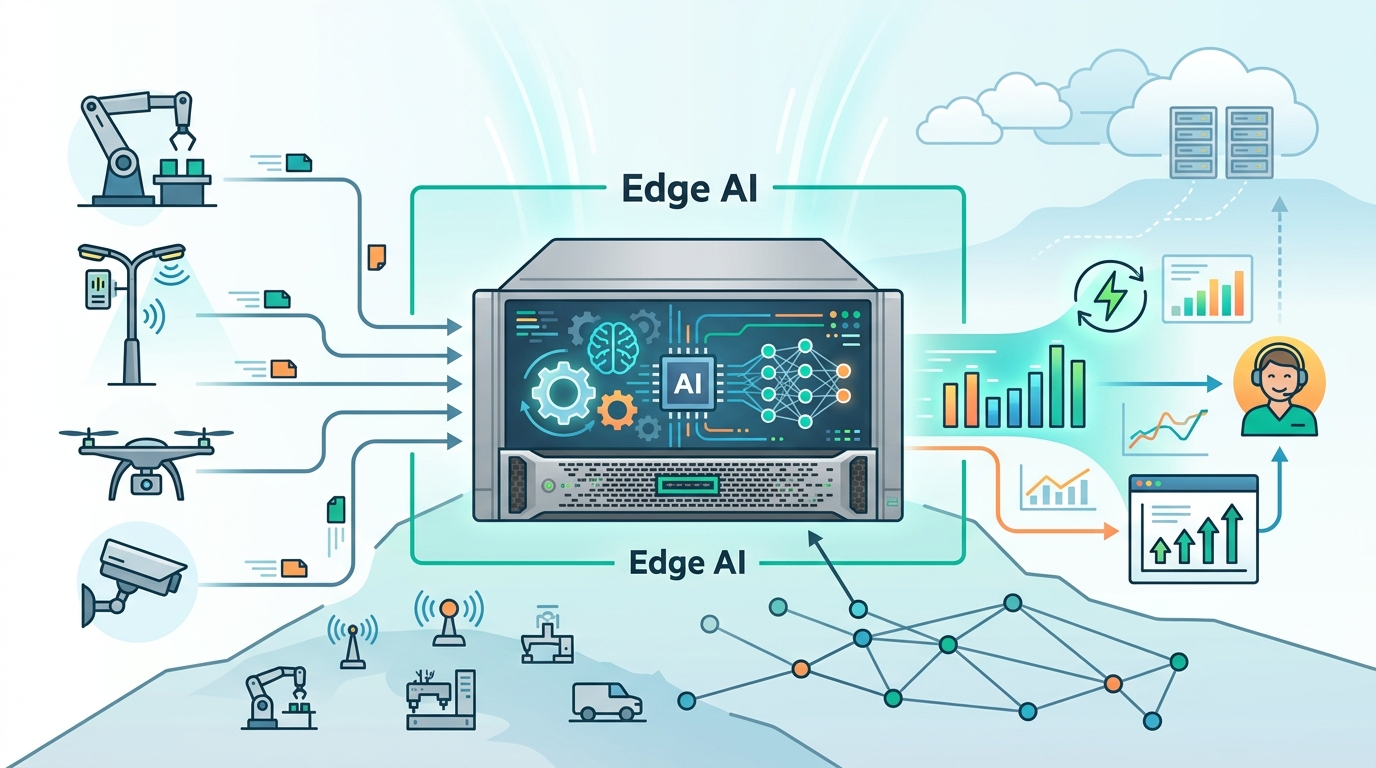

HPE added ProLiant edge servers for AI, analytics, and automation in warehouses, stores, factories, and other distributed sites.


Hewlett Packard Enterprise announced the new lineup on April 30, 2026, with the ProLiant family aimed at places where data is created, not just stored. The pitch is simple: if a store, factory, or telecom site needs local inference and automation, the server should live there too.

The new hardware centers on the ProLiant Compute EL2000 chassis, plus two Gen12 nodes called the EL220 and EL240. HPE also updated the ProLiant DL145 Gen11 for harsher deployments that need quieter operation, more GPU headroom, and better remote management.

| Product | Key detail | Deployment focus |
|---|---|---|
| ProLiant Compute EL2000 | New chassis for EL220 and EL240 nodes | Distributed edge sites |
| EL220 | Low-profile node, two fit in one chassis | Space-constrained locations |
| EL240 | More expansion room, supports Nvidia RTX Pro 4500 and 6000 GPUs | AI and graphics-heavy edge workloads |
| DL145 Gen11 | Powered by AMD EPYC 8005, supports up to 3 GPUs, ruggedized to 55°C | Retail, manufacturing, telecom, field use |

HPE is betting that edge AI needs different hardware


HPE’s framing matters because it is not treating edge computing like a smaller version of the data center. Krista Satterthwaite, senior vice president and general manager of compute, said, “Every edge is different and edge is hard.” That is the right diagnosis. A warehouse with patchy connectivity, a retail back room with little rack space, and a defense site with air-gapped systems all need different tradeoffs.

The company is aiming at workloads that care about latency, local autonomy, and data gravity. That includes video analytics, industrial automation, and on-site AI inference where sending everything back to a central cloud would add delay, cost, or both. HPE’s message is that the edge is becoming a real compute tier, not a side project.

- EL220 is compact enough that two nodes can fit in one EL2000 chassis.

- EL240 adds room for extra storage and Nvidia RTX Pro 4500 or 6000 GPUs.

- DL145 Gen11 now uses AMD’s EPYC 8005 series processor.

- The updated DL145 can be ruggedized for temperatures up to 55 degrees Celsius.

Management software is the part HPE wants you to notice

Hardware gets the headline, but the management layer is where this story gets practical. HPE says Integrated Lights-Out, or iLO, provides built-in security and remote visibility, while HPE Compute Ops Management extends control into the cloud so distributed systems can be handled like one fleet. That matters when the servers are scattered across dozens or thousands of sites.

“The portfolio is great, but it’s only as good as the management that comes along with it,” said John Carter, vice president of mainstream compute at HPE.

That quote gets to the real buying decision. Edge hardware is easy to sell in a demo; it is much harder to support when the nearest technician is hours away. Remote provisioning, policy enforcement, and fleet-wide monitoring often decide whether an edge rollout is manageable or a headache.

HPE is also trying to reduce friction for customers already tied into Microsoft’s stack. The company said the Azure Local Disconnected Operations option is available for the DL145 Gen11 Premier Solution, which matters for isolated and air-gapped environments. That makes the system more appealing to organizations that want local compute without giving up familiar cloud tooling.

The specs tell you who these systems are for

Compared with a standard cloud server, the ProLiant edge lineup is built around physical constraints first. The EL220 is about density. The EL240 is about compute expansion. The DL145 Gen11 is about durability, quiet operation, and GPU support in places where rack space and cooling are limited.

Here is the practical comparison:

- EL220: best fit for compact deployments where every rack unit matters.

- EL240: better for AI inference and graphics workloads that need more room for expansion.

- DL145 Gen11: better for environments that need ruggedness, quieter acoustics, and up to three GPUs.

- Azure Local Disconnected Operations: useful for private or isolated sites that want familiar Azure-style management without a live cloud connection.

That mix lines up with the customers HPE named: RaceTrac, Bosch, CieloVision, and Bell Food Group. Those are not abstract edge use cases. They are retail, industrial, spatial intelligence, and food processing environments where local compute can cut latency and keep operations moving.

The bigger signal here is that HPE thinks edge infrastructure is maturing into a repeatable product category, with chassis, nodes, GPUs, management software, and cloud integration all bundled into one story. If the company can make deployment and fleet control simple enough, the next buying question will be less about whether edge AI is useful and more about which sites justify the spend first.

What to watch next

The key test is whether HPE can turn these systems into a standard template for distributed AI deployments instead of a one-off set of niche boxes. If EL2000-based systems start showing up in stores, factories, and telecom closets with the same management tooling, the edge market gets easier to buy and easier to run.

For IT teams, the takeaway is practical: if your workload needs local inference, low latency, or operation at disconnected sites, this is the kind of hardware stack worth comparing against your current x86 edge servers and GPU appliances. The real question now is whether HPE’s management story is strong enough to make large fleets feel boring, because boring is what edge operations usually need.
