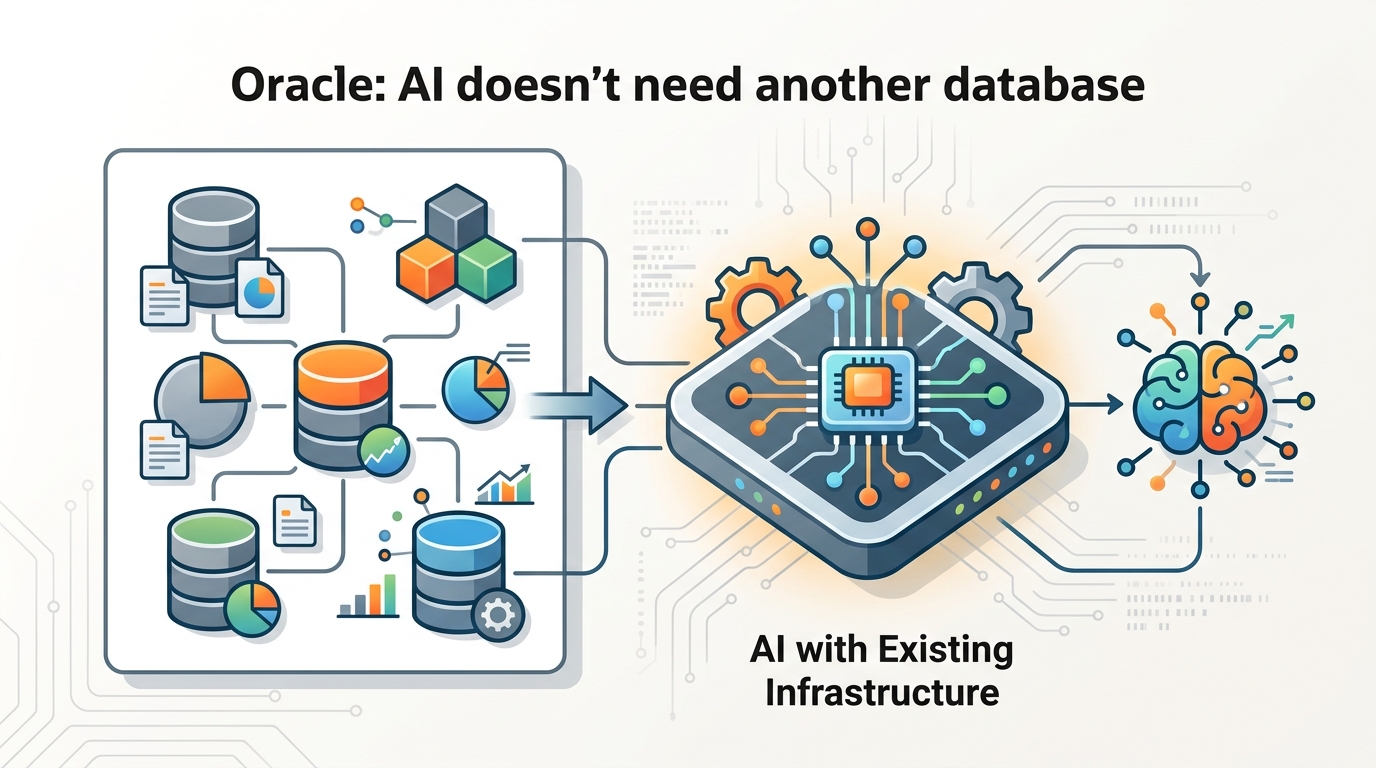

Oracle: AI doesn’t need another database

InfoWorld argues that most AI apps should run vector search inside the databases they already use rather than in a separate vector store, as vendors like Pinecone shift their focus toward retrieval quality.

For enterprise AI, the case for a standalone vector database is getting weaker. In an InfoWorld opinion piece published May 11, 2026, Matt Asay argues that vector support is now common across major databases, so teams should add embeddings to the systems they already run instead of creating a second source of truth.

| Item | Value |
|---|---|
| Publication date | May 11, 2026 |
| DB-Engines tracked databases | 434 |
| Pinecone launch year | 2021 |
| Postgres pgvector release | 2021 |
| SQL Server version | 2025 |
| Oracle AI Database version | 26ai |

What changed

The article says the old pitch for vector databases has faded: traditional databases now support vector types, vector search, and vector indexes. Asay cites Oracle AI Database 26ai, SQL Server 2025, MongoDB Atlas Vector Search, and pgvector as examples of vector support moving into mainstream data platforms.

That shift changes the debate. The value is no longer just storing embeddings; it is retrieval quality, freshness, permissions, and operational simplicity. The article says vendors such as Pinecone, Weaviate, Milvus, and Qdrant are moving up the stack toward those problems.

- Vector search matters for RAG, recommendations, personalization, and agent memory.

- Production AI needs context tied to transactions, documents, policies, and access rules.

- Separate systems can create stale embeddings and inconsistent answers.

- Asay says retrieval should sit close to governed data, not in a detached side system.
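
The stale-embedding point can be illustrated with a minimal sketch. It is not from the article: it uses SQLite only because it ships with Python, the `docs` table is illustrative, and `toy_embed` is a hypothetical stand-in for a real embedding model. The idea is that when the text and its embedding live in the same row and are written in one transaction, an update cannot leave an old vector behind.

```python
import hashlib
import json
import sqlite3


def toy_embed(text: str, dims: int = 8) -> list[float]:
    """Hypothetical stand-in for an embedding model: a deterministic hash-based vector."""
    digest = hashlib.sha256(text.encode("utf-8")).digest()
    return [b / 255.0 for b in digest[:dims]]


def upsert_document(conn: sqlite3.Connection, doc_id: int, body: str) -> None:
    """Write the text and its embedding together so they cannot drift apart."""
    with conn:  # commits on success, rolls back if an exception is raised
        conn.execute(
            "INSERT OR REPLACE INTO docs (id, body, embedding) VALUES (?, ?, ?)",
            (doc_id, body, json.dumps(toy_embed(body))),
        )


conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE docs (id INTEGER PRIMARY KEY, body TEXT NOT NULL, embedding TEXT NOT NULL)"
)

# The policy text changes; the embedding is replaced in the same write.
upsert_document(conn, 1, "Refunds are allowed within 30 days.")
upsert_document(conn, 1, "Refunds are allowed within 14 days.")

body, stored = conn.execute("SELECT body, embedding FROM docs WHERE id = 1").fetchone()
is_fresh = json.loads(stored) == toy_embed(body)  # stored vector matches the current text
row_count = conn.execute("SELECT COUNT(*) FROM docs").fetchone()[0]
```

With a separate vector store, the second write would instead need a sync job or queue, and any lag or failure there is where stale answers could come from.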

Why it matters

For developers, the practical message is simple: if the data already lives in a database, start there. That reduces glue code, avoids syncing multiple stores, and keeps permissions and freshness aligned with the source of truth. The article argues this is especially important when AI assistants answer from customer records, invoices, support tickets, or policy documents.
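
As a rough illustration of keeping permissions aligned with retrieval, the sketch below runs the access filter and the similarity ranking in a single query. Everything here is an assumption made for the example: SQLite stands in for whatever database a team already runs, the `tickets` table and `tenant` column are hypothetical, the vectors are hand-picked, and the brute-force `cosine` function replaces the native vector types and indexes a production database would provide.

```python
import json
import math
import sqlite3


def cosine(a_json: str, b_json: str) -> float:
    """Cosine similarity between two JSON-encoded vectors (brute force, no index)."""
    a, b = json.loads(a_json), json.loads(b_json)
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


conn = sqlite3.connect(":memory:")
conn.create_function("cosine", 2, cosine)  # expose the Python function to SQL
conn.execute(
    "CREATE TABLE tickets (id INTEGER PRIMARY KEY, tenant TEXT, body TEXT, embedding TEXT)"
)

rows = [
    (1, "acme", "Invoice total is wrong", [0.9, 0.1, 0.0]),
    (2, "acme", "Cannot reset password", [0.0, 0.2, 0.9]),
    (3, "globex", "Invoice was charged twice", [0.95, 0.05, 0.0]),
]
conn.executemany(
    "INSERT INTO tickets VALUES (?, ?, ?, ?)",
    [(i, tenant, body, json.dumps(vec)) for i, tenant, body, vec in rows],
)


def search(conn: sqlite3.Connection, tenant: str, query_vec: list[float], k: int = 2):
    """Rank only the rows the caller may see; the filter and ranking share one query."""
    return conn.execute(
        "SELECT id, body FROM tickets WHERE tenant = ? "
        "ORDER BY cosine(embedding, ?) DESC LIMIT ?",
        (tenant, json.dumps(query_vec), k),
    ).fetchall()


results = search(conn, "acme", [1.0, 0.0, 0.0])
result_ids = [row[0] for row in results]
```

Ticket 3 is the closest match to the query vector, but it belongs to another tenant, so it never reaches the ranking step. In a two-store setup the same guarantee would depend on copying access rules into the vector store and keeping them current.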

Standalone vector databases still make sense when retrieval is the product, not just a feature. Search platforms, large multitenant RAG services, and systems with strict latency or ranking needs may still justify specialized infrastructure. But the default architecture for most enterprise apps is shifting toward embedded vector support inside existing databases.

The market takeaway is that vector vendors now have to win on more than storage. They need to prove they can deliver better retrieval, lower latency, stronger developer experience, and cleaner operations than the database teams enterprises already trust.

The question now is not whether vector search matters. It is whether teams really need another database, or just better retrieval inside the one they already have.
