Span, Nvidia, Pulte: Mini AI Data Centers in Homes

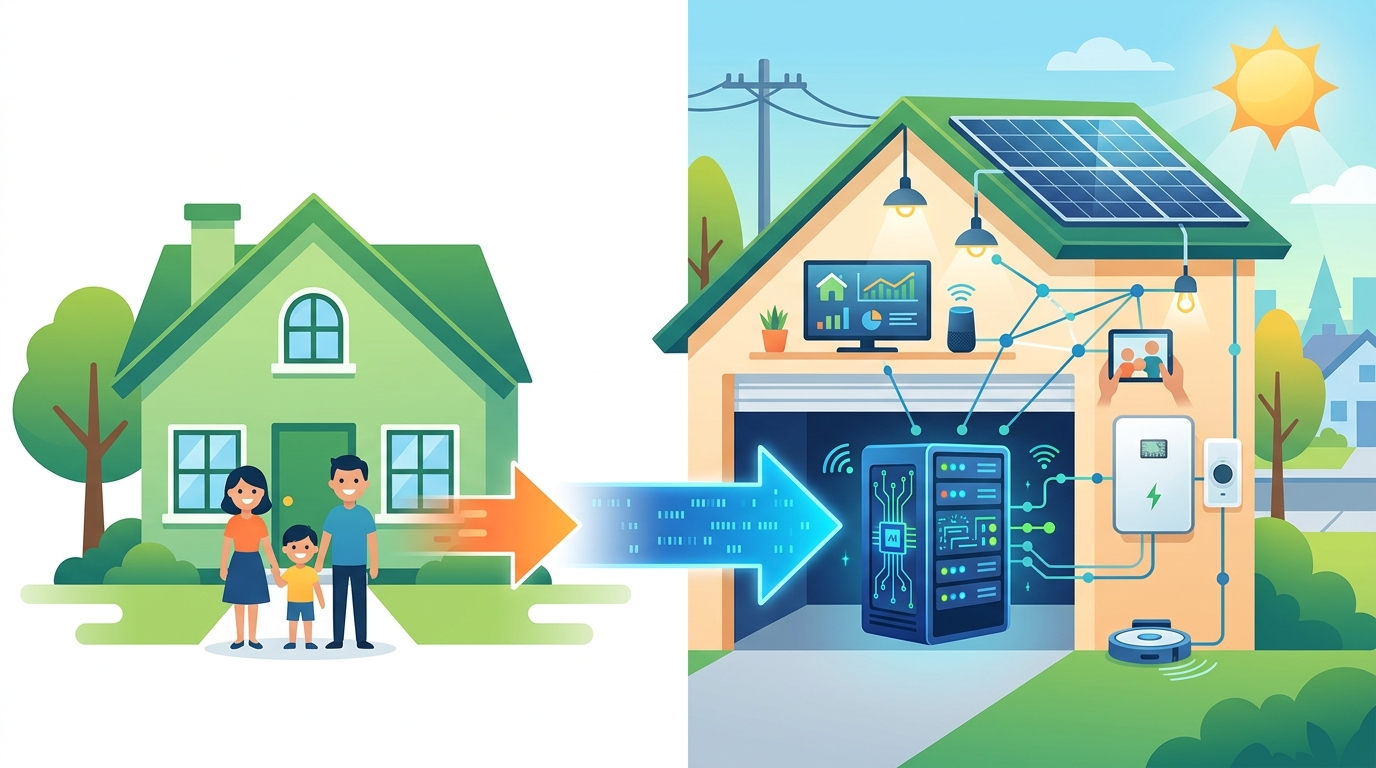

Span plans to pilot home-based AI inference nodes totaling 1.25 MW across 100 homes, and says the approach can cut build time from years to months.

Span is trying something that sounds odd until you look at the numbers: 1.25 megawatts of AI inference capacity spread across 100 newly built homes. The company says its new XFRA Node can be installed at no upfront cost to homeowners, who instead pay a fixed $150 monthly fee for energy and internet.

This is not a toy project. Span says the same 100-megawatt block that can take three to five years and more than $15 million per megawatt to build in a traditional data center could be assembled through 8,000 homes in roughly six months at about $3 million per megawatt. That is a very different answer to the same problem: AI compute is growing faster than power infrastructure.

What Span is actually building


Span’s core business is the smart electrical panel, which monitors and manages a home’s power use in real time. The new XFRA Node adds a distributed compute system to that setup, plus a whole-home battery. Span says its orchestration software can route AI workloads across nodes based on latency needs and available energy capacity.
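Span has not described how its orchestration software actually makes routing decisions. As a purely hypothetical illustration of routing "based on latency needs and available energy capacity," the sketch below keeps only the nodes that meet a job's latency limit and power requirement, then picks the lowest-latency one. The `Node` and `Job` types, the `route` function, and every number are invented for this example; a real scheduler would also have to account for battery state, hardware failures, and household load that changes minute to minute.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Node:
    # Hypothetical model of one home-based compute node.
    node_id: str
    latency_ms: float    # round-trip latency from this node to the requesting user
    available_kw: float  # power headroom left after household load and battery reserve


@dataclass
class Job:
    # Hypothetical inference job with a latency limit and a power requirement.
    job_id: str
    max_latency_ms: float
    power_kw: float


def route(job: Job, nodes: List[Node]) -> Optional[Node]:
    """Return the lowest-latency node that satisfies the job's latency and power needs."""
    eligible = [
        n for n in nodes
        if n.latency_ms <= job.max_latency_ms and n.available_kw >= job.power_kw
    ]
    if not eligible:
        return None  # no node qualifies; the caller decides what to do next
    # Prefer lower latency; break ties by choosing the node with more spare power.
    best = min(eligible, key=lambda n: (n.latency_ms, -n.available_kw))
    best.available_kw -= job.power_kw  # reserve capacity on the chosen node
    return best


nodes = [
    Node("home-a", latency_ms=8.0, available_kw=2.0),
    Node("home-b", latency_ms=5.0, available_kw=0.5),   # fastest, but too little power
    Node("home-c", latency_ms=12.0, available_kw=6.0),  # plenty of power, but too slow
]
job = Job("chat-123", max_latency_ms=10.0, power_kw=1.0)
chosen = route(job, nodes)
print(chosen.node_id if chosen else "no node available")
```

Returning `None` leaves the fallback to the caller, for example queuing the job or sending it to a centralized data center.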

The company is pitching this as a way to use spare capacity in the existing electrical distribution network. Arch Rao, Span’s founder and CEO, said the network nominally operates at only 40% to 45% utilization. In his view, that leaves enough room to put compute closer to where power already exists instead of waiting years for new substations, transformers, and transmission upgrades.

The practical idea is simple: if the grid already has headroom in some places, why build every inference workload into a giant centralized building? Span wants to place small compute modules where homes already have utility connections, solar, batteries, and modern electrical controls.
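Rao's headroom argument comes down to simple arithmetic: if a circuit averages 40% to 45% utilization, roughly 55% to 60% of its rated capacity sits unused on average. The sketch below applies that to a hypothetical 10 MW feeder, a size invented purely for illustration, and divides the spare capacity by the 12.5 kW per-home average implied by Span's pilot (1.25 MW across 100 homes). Average utilization is not the same as peak utilization, so this shows the shape of the argument, not how many nodes any real feeder could host.

```python
def headroom_kw(rated_kw: float, utilization: float) -> float:
    """Spare capacity on a circuit, given its rated capacity and average utilization (0 to 1)."""
    if not 0.0 <= utilization <= 1.0:
        raise ValueError("utilization must be between 0 and 1")
    return rated_kw * (1.0 - utilization)


FEEDER_RATED_KW = 10_000  # hypothetical feeder size, for illustration only
NODE_KW = 12.5            # average per home implied by Span's pilot: 1.25 MW / 100 homes

for util in (0.40, 0.45):
    spare = headroom_kw(FEEDER_RATED_KW, util)
    nodes_that_fit = int(spare // NODE_KW)  # whole nodes that fit in the average headroom
    print(f"{util:.0%} utilized: {spare:,.0f} kW spare, room for about {nodes_that_fit} nodes")
```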

- 1.25 MW pilot planned for 100 newly built homes

- 1,600 direct-liquid-cooled inference GPUs in the pilot

- 3 to 5 years to build a 100 MW traditional data center

- About 6 months to deploy the same capacity through 8,000 homes, according to Span

- More than $15 million per MW for conventional builds, versus about $3 million per MW for Span’s model
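Span's headline figures are internally consistent, which a few lines of arithmetic can confirm. This minimal sketch uses only the numbers Span has stated: 1.25 MW over 100 homes is 12.5 kW per home, and 100 MW at that rate requires 8,000 homes, matching Span's figure. The per-GPU number assumes the full 1.25 MW is split evenly across the 1,600 GPUs, so it is an average all-in budget that also has to cover cooling and networking, not a chip power rating. Because the conventional cost is "more than" $15 million per MW, the traditional total is a lower bound.

```python
# Figures as stated by Span; these are company claims, not measured results.
PILOT_MW = 1.25
PILOT_HOMES = 100
PILOT_GPUS = 1_600
TARGET_MW = 100
TRADITIONAL_COST_PER_MW = 15_000_000  # "more than" this, so totals are a lower bound
SPAN_COST_PER_MW = 3_000_000          # "about" this

kw_home = PILOT_MW * 1_000 / PILOT_HOMES             # average power per home, in kW
w_per_gpu = PILOT_MW * 1_000_000 / PILOT_GPUS        # even split of pilot power per GPU, in W
homes = round(TARGET_MW * 1_000 / kw_home)           # homes needed to reach the target
traditional_total = TARGET_MW * TRADITIONAL_COST_PER_MW
span_total = TARGET_MW * SPAN_COST_PER_MW
cost_ratio = TRADITIONAL_COST_PER_MW / SPAN_COST_PER_MW

print(f"{kw_home:.1f} kW per home")
print(f"{w_per_gpu:.0f} W per GPU, all-in average")
print(f"{homes:,} homes for {TARGET_MW} MW")
print(f"${traditional_total / 1e9:.1f}B+ traditional vs ${span_total / 1e9:.1f}B Span ({cost_ratio:.0f}x)")
```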

Why this matters for AI and the grid

The timing is obvious. AI demand is rising faster than new power infrastructure can be built. Interconnection queues stretch for years, equipment is scarce, and local opposition can slow large projects even more. Latitude Media cited research from Sightline Climate showing that up to half of data centers worldwide may be delayed this year.

That pressure has pushed more attention toward grid utilization, a broad bucket of ideas that includes demand response, behind-the-meter batteries, and shifting load to places where power is already available. Span’s pitch fits squarely inside that trend. Instead of asking utilities to deliver a giant new lump of power to one site, it distributes the load across many homes.

For inference workloads, latency matters more than it does for model training. Chatbots, autonomous driving systems, and medical imaging tools need to respond quickly. Span says putting compute closer to users can reduce latency while also avoiding some of the cost and delay of building massive centralized sites.

“Our hypothesis at Span has been that the existing distribution network operates at only 40 to 45% utilization, nominally,” Arch Rao told Latitude Media. “So there’s plenty of headroom on the existing system that can be used in a more effective way. And then we’re able to deliver compute much faster.”

The homeowner pitch is cheaper power, not free money

Span says installation costs nothing for the homeowner, but the household pays a fixed $150 monthly fee that covers energy and internet. The company says that price is below what a typical household pays each month for those services. In exchange, Span owns the compute assets and backup battery storage, then sells computing power to hyperscalers, neocloud companies, and AI firms.

That model is familiar if you have seen third-party solar financing. The household gets lower upfront friction and predictable payments, while the provider owns the equipment and monetizes the output. The difference here is that Span is selling compute, not just electricity.

There is also a housing angle. Rao said Pulte Homes, the third-largest homebuilder in the U.S., already installs Span’s smart panels. He said the panel technology lets homes stay on 200-amp electrical service, which reduces the size of the utility interconnection and the amount of copper wiring in the home. Adding XFRA Nodes to some homes, he argued, could make them more affordable by bundling discounted energy and internet into the offer.

- Pulte Homes is already using Span’s smart panels in some builds

- Span says 200-amp homes need less copper and smaller utility interconnections

- The XFRA model pairs compute with battery storage owned by Span

- Homeowners pay a fixed monthly fee instead of buying the hardware outright
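To put the 200-amp figure in context, a 200-amp service at the nominal U.S. residential voltage of 240 volts has a nameplate capacity of 48 kW (treating volt-amperes as watts, which assumes unity power factor). The sketch compares that with the 12.5 kW per-home average implied by Span's pilot numbers. It assumes, without confirmation from the article, that the node draws power through the home's own service; Span has not said how the node is wired or how the panel balances it against household load.

```python
SERVICE_AMPS = 200
SERVICE_VOLTS = 240  # nominal U.S. split-phase residential voltage
NODE_KW = 12.5       # average per home implied by 1.25 MW / 100 homes (assumed to share the service)

service_kw = SERVICE_AMPS * SERVICE_VOLTS / 1_000  # nameplate capacity, assuming unity power factor
node_share = NODE_KW / service_kw                  # fraction of the service the node would use
remaining_kw = service_kw - NODE_KW                # capacity left for the household itself

print(f"Service capacity: {service_kw:.1f} kW")
print(f"Node share: {node_share:.0%}, leaving {remaining_kw:.1f} kW for the household")
```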

How this compares with traditional data centers

Span is careful not to oversell the idea. These home-based nodes will not replace large training campuses. Training frontier models still needs huge centralized sites with dense power delivery, cooling, and networking. The XFRA Node is aimed at inference, where distributed placement may make more sense.

The comparison is less about who wins and more about which workload belongs where. Training wants scale and concentration. Inference wants proximity and speed. That split is why the distributed model is interesting: it turns homes, batteries, and smart panels into small pieces of a compute network.

Here is the tradeoff in plain terms:

- Traditional data centers concentrate power, cooling, and networking in one place

- Span spreads compute across many homes with existing grid connections

- Centralized builds are better for training large models

- Distributed nodes may be better for latency-sensitive inference

- Span’s model depends on software that can route jobs across many small sites

Span is also planning a commercial XFRA Node for early 2027, aimed at businesses and office buildings. That suggests the company sees this as more than a residential experiment. If the home pilot works, the next test is whether commercial sites can absorb enough distributed load to matter for enterprise AI demand.

What to watch next

The real question is whether the economics hold when the pilot scales beyond a neat demo. The home model depends on stable utility relationships, hardware reliability, and enough AI demand to keep the distributed nodes busy. It also depends on homeowners being comfortable with a system that mixes power infrastructure, batteries, and compute hardware inside a house.

If Span can prove that a home can host a tiny inference node without turning into a maintenance headache, it may open a new category of distributed infrastructure. If it cannot, the idea will still have done something useful: it will have forced the AI industry to think harder about where compute should live, and who should pay for the power behind it.

My bet is that the first winners will be inference-heavy workloads in places where grid access is already tight. The next question is whether other companies copy Span’s model or whether utilities and homebuilders decide to build their own versions first.
