Why Streaming Platforms Must Kill AI Slop Remixes Fast

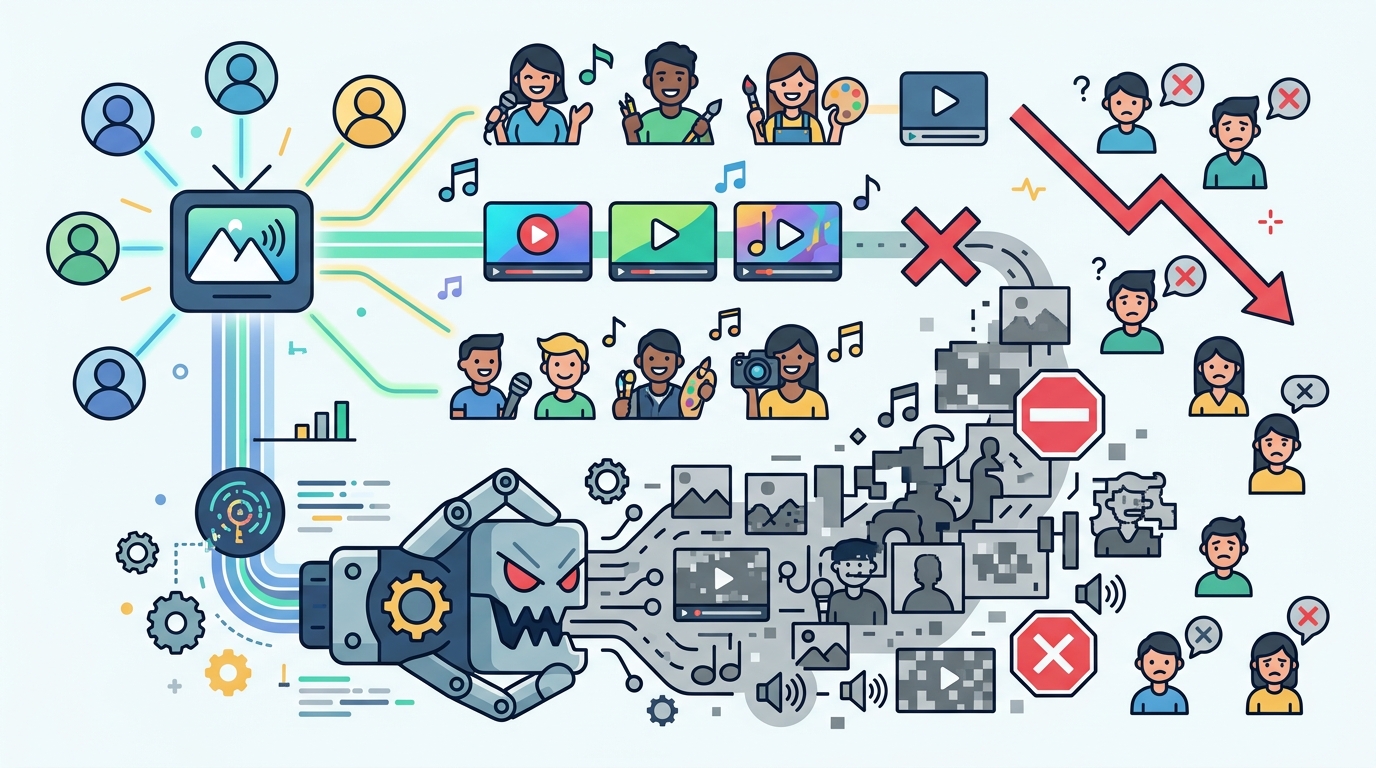

Streaming services should aggressively block AI slop remixes and stop paying royalties to anonymous upload farms.

The music industry should treat AI slop remixes as fraud, not fandom, and streaming platforms should remove them before they distort charts, siphon royalties, and hijack artists’ names.

Stick Figure’s recent ordeal shows the problem in plain sight. A seven-year-old reggae track suddenly surged to the top of iTunes charts in multiple countries, but the spike was driven by unauthorized remixes that the band says were generated in one click and uploaded without permission. One version pulled more than 1.8 million YouTube views in five days. The band got the attention, the imitators got the traffic, and the actual creators got none of the money.

First argument: AI slop remixes are a royalty theft machine

The core harm is economic, and it is immediate. Deezer says the share of AI songs it detects daily jumped from 18 percent in 2025 to 44 percent in 2026, or more than 2 million tracks a month. Deezer also estimates that 85 percent of those tracks are fraudulent, built specifically to siphon royalties. That is not experimentation at the edges. That is industrial-scale extraction from the same royalty pool that artists and rights holders already split into fractions of a cent per stream.

Streaming platforms already know how to respond when manipulation is obvious, and they should act the same way here. Spotify said in September that it had removed more than 75 million spammy tracks over the previous 12 months, and it is testing an artist protection feature to keep AI-generated music from being attributed to real artists. That is the right instinct. If a platform can identify spam at that scale, it can also stop rewarding uploads that copy a recognizable song, recycle its lyrics, and use a new account to launder the result into the royalty system.

Second argument: chart distortion is not a harmless side effect

These remixes do more than steal money. They corrupt the signals that tell listeners, labels, and platforms what people actually want. Stick Figure’s song did not rise because of a new creative wave from the band. It rose because a swarm of unauthorized versions spread across TikTok, YouTube, and streaming services, creating the appearance of organic momentum. That kind of fake virality can force artists into responding to a trend they did not start and do not control.

The industry has seen a version of this before, but the scale is different now. When sped-up remixes of Steve Lacy’s “Bad Habit” took off on TikTok, his label eventually leaned into the trend and released an official version. That was messy, but it still left the artist in the driver’s seat. AI remix tools flip the script. They let anonymous uploaders manufacture derivative versions at volume, then capture the attention before the original artist can even decide whether to participate.

The counter-argument

The best defense of these uploads is that remix culture has always been part of music. Mashups, covers, edits, and fan-made versions have long pushed songs into new audiences. The Grey Album became a cultural object precisely because it crossed legal and artistic lines. In that view, aggressive takedowns risk flattening legitimate experimentation and giving platforms too much power to decide what counts as acceptable transformation.

That argument has real weight, and it should not be dismissed. But it fails on the facts that matter here. A fan mashup is usually legible as a remix and often credits the source; AI slop is built to impersonate, scale, and monetize without consent. The issue is not transformation. It is deception plus automated extraction. Once a system starts producing millions of near-zero-effort copies that exploit recognizable songs, the burden shifts from artists to platforms to verify origin and block abuse.

What to do with this

If you build a streaming product, a distributor, or a catalog tool, treat provenance as a first-class feature. Scan uploads against copyrighted audio and lyrics, require stronger identity checks for repeat uploaders, label synthetic or derivative content clearly, and withhold monetization until ownership is verified. If you are a founder, do not wait for a public scandal to design trust and safety into the product. If you are a PM or engineer, optimize for fewer false claims on artist pages, faster takedowns, and tighter controls on anonymous mass uploads. The platform that protects real artists first will earn the right to scale.
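For engineers, the checklist above can be sketched as a small upload gate. This is a minimal illustration, not any platform's actual pipeline: the `Upload` fields, the `review` function, the word n-gram overlap measure, and the 0.6 threshold are all hypothetical choices made for the example. A real system would also need audio fingerprinting, which is omitted here.

```python
from dataclasses import dataclass, field


def shingles(text: str, n: int = 3) -> set[tuple[str, ...]]:
    """Return the set of lowercase word n-grams in the text."""
    words = text.lower().split()
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def lyric_overlap(upload_lyrics: str, catalog_lyrics: str, n: int = 3) -> float:
    """Fraction of the upload's n-grams that also appear in a catalog lyric."""
    upload_grams = shingles(upload_lyrics, n)
    if not upload_grams:
        return 0.0
    return len(upload_grams & shingles(catalog_lyrics, n)) / len(upload_grams)


@dataclass
class Upload:
    # All fields are hypothetical stand-ins for real platform signals.
    lyrics: str
    uploader_verified: bool   # uploader passed an identity check
    ownership_verified: bool  # rights to the recording were confirmed
    declared_synthetic: bool  # uploader disclosed AI generation


@dataclass
class Decision:
    monetize: bool
    hold_for_review: bool
    labels: list[str] = field(default_factory=list)


def review(upload: Upload, catalog: list[str], threshold: float = 0.6) -> Decision:
    """Label the upload and decide whether it may earn royalties."""
    best = max((lyric_overlap(upload.lyrics, c) for c in catalog), default=0.0)
    matches_catalog = best >= threshold  # threshold is an assumed value

    labels = []
    if upload.declared_synthetic:
        labels.append("synthetic")
    if matches_catalog:
        labels.append("derivative")

    # Withhold monetization until ownership is verified, and hold anything
    # that resembles catalog lyrics and comes from an unverified source.
    monetize = upload.ownership_verified
    hold = matches_catalog and not (
        upload.ownership_verified and upload.uploader_verified
    )
    return Decision(monetize=monetize, hold_for_review=hold, labels=labels)


if __name__ == "__main__":
    catalog = ["the sun is shining on the world below the sea"]
    copycat = Upload(
        lyrics="The sun is shining on the world below the sea",
        uploader_verified=False,
        ownership_verified=False,
        declared_synthetic=True,
    )
    print(review(copycat, catalog))
```

The design choice worth noting is that monetization defaults to off: an upload earns nothing until ownership is confirmed, so a false negative in the similarity check delays payment to a bad actor rather than granting it.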
