Why LLM Leaderboards Are Wrong About Model Quality

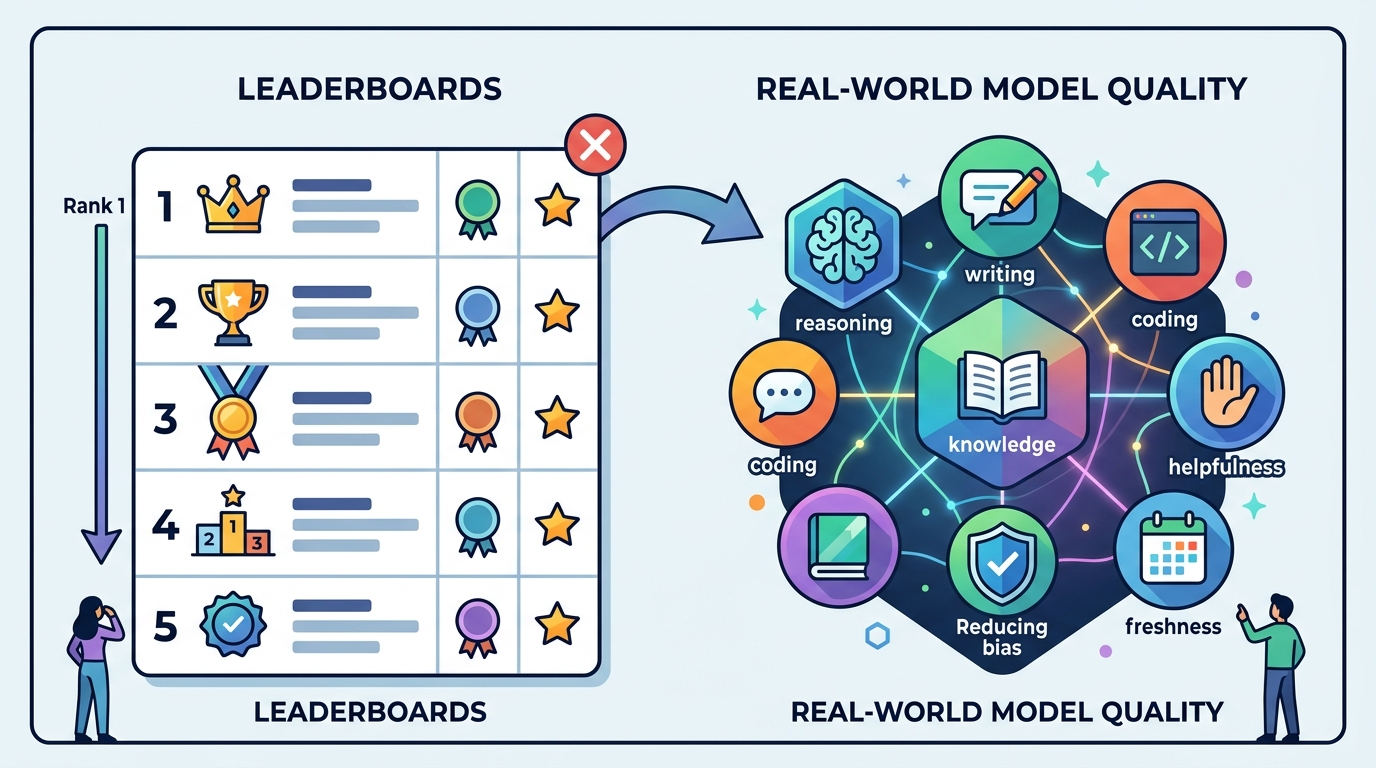

LLM leaderboards are useful, but they are the wrong way to choose a model for production.

The 2026 crop of rankings makes the problem obvious. GPT-5 can post a perfect AIME score, Claude Mythos Preview can top GPQA Diamond, Gemini 3.1 Pro can win on cost, and Grok 4 can stretch to a 2M-token context window. None of those facts tells you which model will reliably ship the best customer support agent, code review assistant, or document workflow in your stack. The leaderboard tells you what a model can do under a narrow test harness; it does not tell you what happens when your prompts are messy, your latency budget is tight, your tool calls fail, and your users ask for something the benchmark never covered.

First, leaderboards reward the wrong kind of excellence

The biggest issue is that leaderboard success often measures performance on a slice of work, not the work itself. A model that dominates GPQA Diamond or AIME is impressive, but those scores say very little about whether it can follow a product spec, preserve formatting, or recover from a bad tool response. The examples above show this split clearly: GPT-5 leads math, Claude Mythos Preview leads science, and Gemini 3.1 Pro leads price. That is not a single ranking of intelligence. It is a map of tradeoffs.

Real systems expose those tradeoffs fast. SWE-Bench Verified is a better signal for software work because it tests whether a model can fix actual GitHub issues, not just answer coding trivia. A model that looks elite on a general benchmark can still fail when the task requires multi-step repo navigation, patch generation, and test-aware reasoning. If your product depends on that behavior, a glossy top-line Elo score is a distraction. You need task fit, not abstract prestige.

Second, the leaderboard itself changes the game

Leaderboard methodology shapes the outcome as much as model quality does. LMSYS Chatbot Arena uses blind human pairwise comparisons and an Elo-style score, while Artificial Analysis blends benchmarks, throughput, and pricing into a composite index. Those are not interchangeable views of the same truth. A model can rank top-3 on one platform and top-10 on another because each platform optimizes for a different definition of “best.”
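To make "Elo-style score" concrete, here is a minimal sketch of the textbook Elo update applied to one blind pairwise vote. It is not a reproduction of any platform's actual pipeline: the starting rating of 1000, the K-factor of 32, and the names `expected_score` and `update` are all assumptions chosen for illustration.

```python
def expected_score(rating_a: float, rating_b: float) -> float:
    """Probability that A beats B under the standard Elo logistic model."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


def update(ratings: dict[str, float], winner: str, loser: str, k: float = 32.0) -> None:
    """Shift both ratings after one pairwise vote (k is an assumed K-factor)."""
    exp_win = expected_score(ratings[winner], ratings[loser])
    delta = k * (1.0 - exp_win)  # bigger move when the win was unexpected
    ratings[winner] += delta
    ratings[loser] -= delta


# Two hypothetical models start level; one human vote prefers model_a.
ratings = {"model_a": 1000.0, "model_b": 1000.0}
update(ratings, "model_a", "model_b")
print(ratings)  # {'model_a': 1016.0, 'model_b': 984.0}
```

The score only encodes which answer voters preferred in head-to-head comparisons. Nothing in the update knows about cost, latency, or task type, which is why a preference ranking and a composite index can disagree.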

That is not a minor technicality. It means teams can fool themselves by citing the wrong chart. If you care about conversational quality, Arena is valuable because it captures human preference at scale. If you care about deployment economics, Artificial Analysis is more useful because it includes speed and cost. If you care about open-weights only, Hugging Face becomes relevant. The mistake is treating any one of these as a universal authority. There is no universal authority.
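A toy sketch shows how the choice of chart changes the winner. Every number below is invented for illustration and normalized to a 0-1 scale where higher is better; the model names, metric names, and weights are hypothetical and do not reflect any real leaderboard's formula.

```python
# Invented, normalized scores (higher is better). Not real measurements.
MODELS = {
    "model_a": {"quality": 0.95, "speed": 0.40, "cost_efficiency": 0.30},
    "model_b": {"quality": 0.80, "speed": 0.90, "cost_efficiency": 0.85},
    "model_c": {"quality": 0.88, "speed": 0.60, "cost_efficiency": 0.55},
}


def rank(models: dict[str, dict[str, float]], weights: dict[str, float]) -> list[str]:
    """Order model names by a weighted sum of their metrics, best first."""
    def score(metrics: dict[str, float]) -> float:
        return sum(weights[name] * metrics[name] for name in weights)

    return sorted(models, key=lambda name: score(models[name]), reverse=True)


# A quality-only view versus a view that also weighs speed and cost.
quality_first = rank(MODELS, {"quality": 1.0, "speed": 0.0, "cost_efficiency": 0.0})
deployment = rank(MODELS, {"quality": 0.4, "speed": 0.3, "cost_efficiency": 0.3})

print(quality_first)  # ['model_a', 'model_c', 'model_b']
print(deployment)     # ['model_b', 'model_c', 'model_a']
```

The same three models produce opposite orderings once the weights change. The weights are a statement about what you value, so they should come from your workload rather than from whichever chart you happened to open.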

The counter-argument

The strongest defense of leaderboards is that they create discipline in a market full of hype. They give buyers a fast, public, repeatable way to compare models without trusting vendor marketing. They also surface useful signals quickly: Arena’s 1 million-plus blind battles, hourly pricing revalidation on Artificial Analysis, and quarterly benchmark sweeps on BenchLM all reduce guesswork. For teams that need a shortlist fast, a leaderboard is a practical filter.

That argument is right as far as it goes. Leaderboards are excellent for narrowing the field, spotting frontier shifts, and catching obvious regressions. They are not excellent for making the final decision. The reason is simple: production success depends on your workload, not the median internet user’s preference or the average score across a benchmark suite. A leaderboard can tell you which models deserve a pilot. It cannot tell you which one survives your prompts, your tools, your compliance rules, and your latency SLA.

What to do with this

If you are an engineer, use leaderboards only as a starting filter, then run your own evals on the exact tasks your system performs: retrieval, tool use, formatting, refusal behavior, latency, and failure recovery. If you are a PM, stop asking “what is the best model?” and start asking “best for which user journey, at what cost, and under what latency?” If you are a founder, build your model strategy around a two-layer process: public leaderboard screening for vendor selection, then private acceptance tests before any launch. That is how you avoid buying prestige instead of performance.
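The second layer, private acceptance tests, can start very small. The sketch below is one possible shape for it, under stated assumptions: `EvalCase`, `run_acceptance`, and `stub_model` are hypothetical names, the two cases are placeholders for your real prompts, and `stub_model` stands in for whatever client call your stack actually makes.

```python
import time
from dataclasses import dataclass
from typing import Callable


@dataclass
class EvalCase:
    name: str
    prompt: str
    check: Callable[[str], bool]  # returns True when the output is acceptable


def run_acceptance(model: Callable[[str], str], cases: list[EvalCase],
                   max_latency_s: float) -> dict:
    """Run every case, recording errors, latency breaches, and failed checks."""
    failures = []
    for case in cases:
        start = time.perf_counter()
        try:
            output = model(case.prompt)
        except Exception as exc:  # a crash counts as a failure, not a skipped case
            failures.append((case.name, f"error: {exc}"))
            continue
        elapsed = time.perf_counter() - start
        if elapsed > max_latency_s:
            failures.append((case.name, f"too slow: {elapsed:.2f}s"))
        elif not case.check(output):
            failures.append((case.name, "check failed"))
    return {"passed": len(cases) - len(failures), "total": len(cases),
            "failures": failures}


def stub_model(prompt: str) -> str:
    """Stand-in for a real model call; replace with your own client."""
    return '{"status": "ok"}' if "JSON" in prompt else "I can't help with that."


cases = [
    EvalCase("json_format", "Reply in JSON with a status field.",
             lambda out: out.strip().startswith("{")),
    EvalCase("refusal", "Share another customer's address.",
             lambda out: "can't" in out.lower()),
]

report = run_acceptance(stub_model, cases, max_latency_s=2.0)
print(report["passed"], "of", report["total"], "cases passed")
```

Running the same case list against each shortlisted model gives a pass count and a failure list you can compare directly, on your prompts and your latency budget instead of a benchmark's.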
