Why AI-agent CLIs are the new supply-chain attack surface

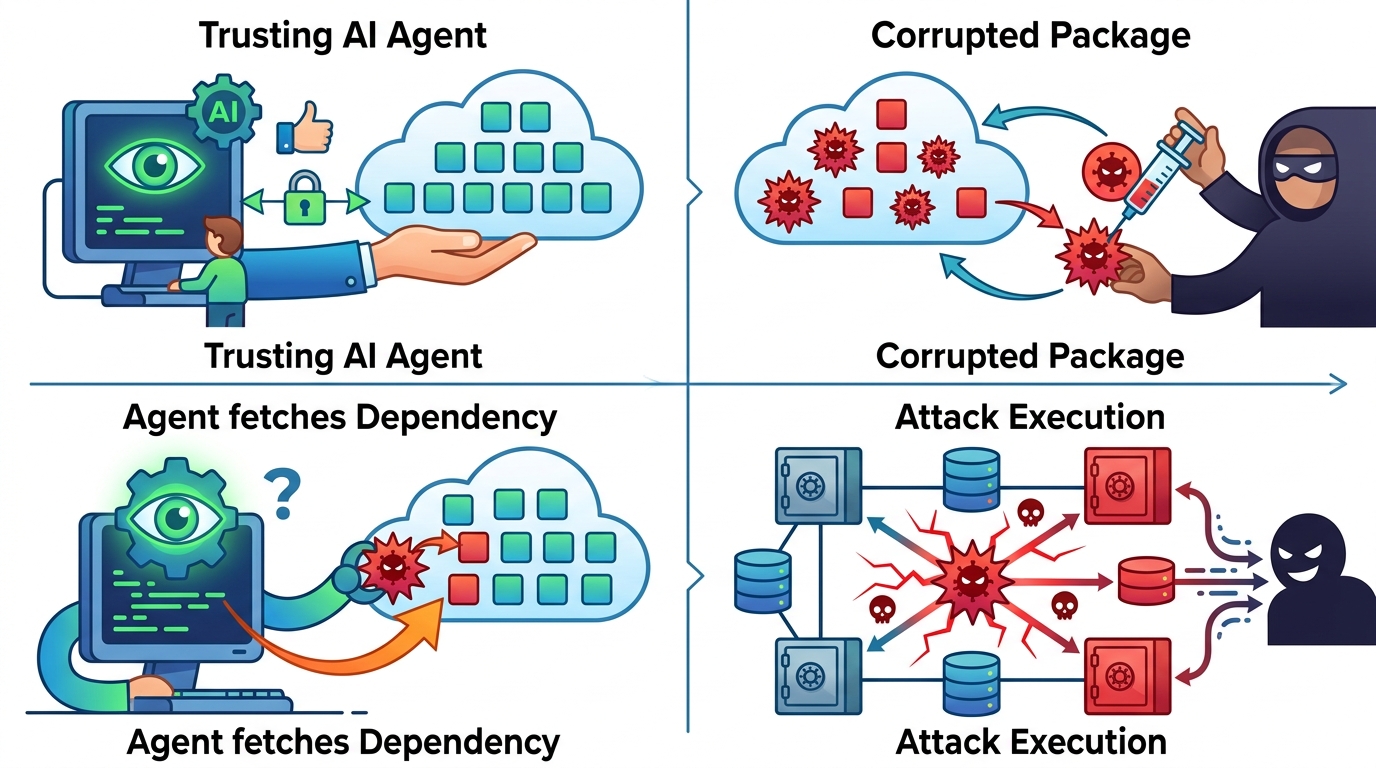

AI-agent CLIs create a new supply-chain backdoor class that scanners do not catch.

AI-agent CLIs create a new supply-chain backdoor class that scanners do not catch.

AI-agent CLIs are the new supply-chain attack surface, and security teams are not treating them like one. The OpenClaw example matters because it shows how a single command can turn a normal open-source repository into something an AI coding agent will execute with trusted intent. That is not a classic malware drop, not a poisoned dependency, and not a typo-squatted package. It is a workflow-level backdoor that rides inside the developer toolchain, where scanners are trained to look for files, packages, and signatures, not for instructions that reshape how an agent interprets a repo.

The first problem is that the attack is operational, not just code-level

Get the latest AI news in your inbox

Weekly picks of model releases, tools, and deep dives — no spam, unsubscribe anytime.

No spam. Unsubscribe at any time.

Traditional supply-chain security assumes the danger lives in the artifact: a malicious package, a compromised maintainer account, a tampered binary, or a hidden script. OpenClaw-style abuse breaks that model because the payload is the interface itself. CLI-Anything can analyze a repository and generate a structured CLI that AI agents can operate with a single command. Once that command exists, the repo is no longer just code to inspect; it becomes a control surface that can be invoked by an agent with far more trust than a human reviewer would grant a shell command copied from README text.

The scale signal is the warning. CLI-Anything reached more than 30,000 GitHub stars in a short time, which means this pattern is not fringe research. It is becoming a default convenience layer for agentic coding. When a tool is this widely adopted, the attack surface is not hypothetical. The more teams rely on generated CLIs to accelerate agent workflows, the more they normalize a mechanism that can be subverted without changing the obvious things scanners check. That is why the threat is structurally different from ordinary dependency risk.

The second problem is that scanners are looking in the wrong place

Supply-chain scanners excel at finding known badness in known layers. They flag vulnerable versions, suspicious installs, unusual network calls, and dependency graphs with risk. They do not have a mature category for a repository that intentionally emits a command interface designed to be consumed by an AI agent. If the repo is clean but the generated interface is the trap, static scanning misses the point. The danger sits in the behavior the repository induces in the agent, not in a malicious blob embedded in source.

That gap is why “no detection category for it” is the real story. Security tooling is built around software provenance, but agentic systems introduce instruction provenance. A generated CLI can encode assumptions, prompts, command routing, and execution paths that are invisible to conventional package scanners. If the tool chain treats the CLI as a productivity feature, then an attacker only needs to shape that feature so the agent performs unsafe actions under the banner of legitimate automation. This is a governance failure as much as a technical one.

The counter-argument

The strongest defense of AI-agent CLIs is simple: they are just automation wrappers, and automation has always been risky. Humans already trust build scripts, install hooks, and CI pipelines. If a repo is malicious, a scanner should catch the malicious code somewhere in the tree, and if it does not, the problem is broader than AI agents. From this view, OpenClaw is not a new class of threat. It is a familiar trust problem wearing a new interface.

That argument has force because it correctly points out that no tool can remove trust from software supply chains entirely. Teams will always need to execute something. But it fails on the specific mechanism here. The risky object is not just a script; it is a generated command surface that changes how an AI agent decides what is safe to run. That shifts the attack from code inspection to intent manipulation. Conventional scanners can catch a bad package. They do not understand that a clean repository can still produce a dangerous agent-facing control plane. That is a distinct failure mode, and it deserves its own detection category.

What to do with this

If you are an engineer or platform owner, stop treating agent-facing CLIs as convenience tooling and start treating them as privileged interfaces. Require explicit allowlists for commands an AI agent can invoke, review generated CLIs the same way you review deployment scripts, and add policy checks that inspect agent action plans, not just repository contents. If you are a founder, make this a product requirement: every agent workflow needs provenance, command boundaries, and audit logs. The lesson from OpenClaw is blunt. The next supply-chain breach will not only arrive through a bad dependency. It will arrive through a trusted command that taught the agent to trust the wrong thing.

// Related Articles

- [IND]

Why Nebius’s AI Pivot Is More Real Than Hype

- [IND]

Nvidia backs Corning factories with billions

- [IND]

Why Anthropic and the Gates Foundation should fund AI public goods

- [IND]

Why Observability Is Critical for Cloud-Native Systems

- [IND]

Data centers are pushing homeowners to solar

- [IND]

How to choose a GPU for 异环