How AI Is Changing Social Media in 2026

AI now shapes social feeds, moderation, ads, and deepfake risk, while chatbot use keeps pulling attention away from posting.

AI now decides more of what people see, post, and trust on social media.

In 2026, the shift is measurable, not theoretical. Adobe says 87% of marketers used generative AI in at least one recurring workflow in Q1 2026, up from 51% in Q1 2024, while Ofcom says 49% of UK adult social media users actively post, share, or comment, down from 61% in 2024.

| Metric | 2024 | 2025 | 2026 | Source |
|---|---|---|---|---|
| Marketers using generative AI in a recurring workflow | 51% | — | 87% | Adobe Digital Trends |
| UK adult social users who actively post, share, or comment | 61% | — | 49% | Ofcom Online Nation |
| US adults who feel more concerned than excited about AI | — | — | 50% | Pew Research Center |
| Deepfake cases tracked on YouTube | — | — | 29.9% | Keepnet Labs |
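To make the size of those two shifts concrete, here is a minimal Python sketch that separates the change in percentage points from the relative change. The helper names `point_change` and `relative_change` are illustrative, and the only inputs are the Adobe and Ofcom figures quoted above.

```python
def point_change(old: float, new: float) -> float:
    """Change between two survey shares, in percentage points."""
    return new - old


def relative_change(old: float, new: float) -> float:
    """Change relative to the starting share, expressed as a percentage."""
    return (new - old) / old * 100


# (2024 share, 2026 share) as reported in the article
figures = {
    "Marketers using generative AI": (51.0, 87.0),
    "UK social users actively posting": (61.0, 49.0),
}

for label, (share_2024, share_2026) in figures.items():
    points = point_change(share_2024, share_2026)
    relative = relative_change(share_2024, share_2026)
    print(f"{label}: {points:+.0f} points, {relative:+.1f}% relative")
```

Marketer adoption rose by 36 points, roughly a 71% relative increase. Active posting fell by 12 points, roughly a 20% relative decline.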

AI now decides what rises in the feed


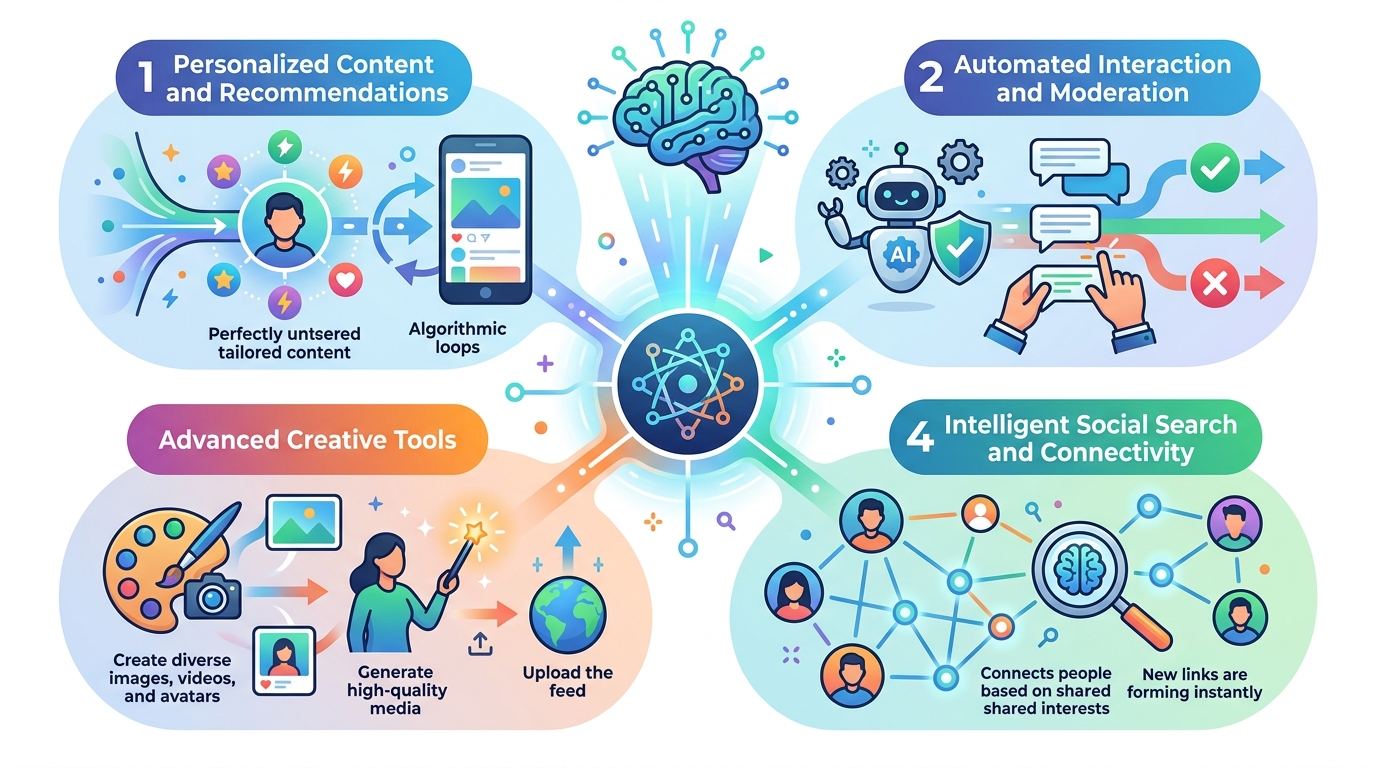

Social platforms have spent years telling us the feed is personalized. In 2026, that personalization is mostly an AI ranking problem. The system watches what you pause on, what you replay, what you skip, and what you finish, then uses those signals to predict the next post most likely to keep you scrolling.

TikTok, Instagram, YouTube, Facebook, and X all use machine learning to rank content, but the inputs differ. Hootsuite’s algorithm review points to watch time, engagement rate, and relevance as the common signals that matter most across platforms.

That matters because ranking is no longer just a sorting layer. It shapes behavior. When the feed gets better at predicting what you want, you spend more time inside it. When the feed gets less personalized, usage drops.

- TikTok uses watch time plus computer vision and speech recognition to understand clips.

- YouTube relies heavily on watch time and click-through behavior.

- Instagram Reels and Facebook weigh engagement and predicted meaningful interactions.

- X relies more on engagement velocity and media-text signals.

The practical effect is simple: creators no longer depend only on follower counts. Discovery now comes from the machine deciding a post has enough potential to hold attention, even if the account is small.
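A toy version of that idea can be sketched in a few lines of Python. This is not any platform's actual formula. The `Candidate` fields, the weights, and the sample numbers are all assumptions chosen for illustration, loosely based on the watch time, engagement rate, and relevance signals named above.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    post_id: str
    predicted_watch_fraction: float  # expected share of the clip watched, 0..1
    engagement_rate: float           # interactions per impression, 0..1
    relevance: float                 # topical match to the viewer, 0..1
    follower_count: int              # carried along but deliberately not scored


# Hypothetical weights; real systems learn these and use far more signals.
WEIGHTS = {"watch": 0.5, "engagement": 0.3, "relevance": 0.2}


def score(c: Candidate) -> float:
    """Weighted sum of the three illustrative signals."""
    return (
        WEIGHTS["watch"] * c.predicted_watch_fraction
        + WEIGHTS["engagement"] * c.engagement_rate
        + WEIGHTS["relevance"] * c.relevance
    )


def rank(candidates: list[Candidate]) -> list[Candidate]:
    """Order candidates from highest to lowest score."""
    return sorted(candidates, key=score, reverse=True)


feed = rank([
    Candidate("big_account_clip", 0.35, 0.04, 0.50, follower_count=2_000_000),
    Candidate("small_account_clip", 0.80, 0.12, 0.70, follower_count=900),
])
print([c.post_id for c in feed])
```

Because `follower_count` never enters the score, the small account's clip ranks first here on predicted attention alone, which is the dynamic the paragraph above describes.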

Generative AI is flooding social content

The content firehose got wider because production got cheaper. Adobe says 87% of marketers used generative AI in at least one recurring workflow in Q1 2026, up from 51% in Q1 2024, and 59% of creators said they use generative AI tools to streamline content creation. That helps explain why social feeds now contain far more machine-assisted copy, images, captions, and edits.

Adobe is one of the clearest data sources here because it surveys both marketers and creators. Its 2026 report and creator survey suggest AI has moved from occasional helper to ordinary production software.

“Most content moderation decisions are now made by machines, not human beings.” — Oversight Board

That quote matters because it captures the other side of the same shift. More AI-generated content means more automated review, more automated ad targeting, and more automated detection of abuse. Platforms are trying to scale moderation with machines because the volume is too high for human review alone.

The problem is that scale comes with trade-offs. Automated systems are good at catching spam and obvious policy violations. They are much weaker when context matters, which is exactly where political speech, satire, or borderline harassment often live.

- Static visuals: feed posts, carousels, and ad creative

- Video workflows: auto-cuts, captions, narration, and voice cloning

- Copy tasks: first-pass captions, scripts, and reply drafts

- Ad testing: dozens of variations from one brief

For creators, this means speed. For audiences, it means more content with less obvious human authorship. For platforms, it means a harder moderation problem and a stronger incentive to automate every layer they can.

Moderation is getting more automated, and less forgiving

Content moderation is where the tension gets sharpest. The Oversight Board says most moderation decisions are now made by machines, and it warned that enforcement based only on automation can overreach because machines still struggle with context. That is not an abstract concern. It affects what gets removed, what stays up, and how quickly borderline content disappears.

Meta said it reduced enforcement mistakes in the United States by about 50% from Q4 2024 to Q1 2025. At the same time, the Center for Countering Digital Hate argued that policy changes could stop 97% of Meta’s content enforcement in key areas, especially hate speech. Those two claims point in different directions, which is exactly why moderation has become such a contested topic.

The real-world outcome for users is a mix of slower takedowns in some areas and more aggressive automation in others. Platforms want fewer false positives, but they also want to keep spam, CSAM, and terror content off the service at scale. That balance is hard, and AI does not remove the trade-off.
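One common way to picture that balance is a pair of confidence thresholds: automate only the clearest cases and send the uncertain middle to people. The sketch below assumes a hypothetical classifier that returns a violation score between 0 and 1. The `route` function, the threshold values, and the sample scores are illustrative and do not describe how any named platform works.

```python
def route(violation_score: float,
          remove_at: float = 0.95,
          review_at: float = 0.60) -> str:
    """Route a post based on a hypothetical classifier's violation score."""
    if violation_score >= remove_at:
        return "auto_remove"    # high confidence: act without a human
    if violation_score >= review_at:
        return "human_review"   # uncertain: context likely matters
    return "allow"


# Made-up scores for three kinds of post
samples = {
    "obvious spam link": 0.99,
    "satirical political post": 0.72,
    "holiday photo": 0.03,
}

for description, violation_score in samples.items():
    print(f"{description}: {route(violation_score)}")
```

Lowering `remove_at` removes more content automatically but sweeps in more borderline posts, such as the satirical example, while raising it pushes more work to human reviewers. That is the trade-off in miniature.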

It also changes who gets the final say. In the U.S., Meta moved from third-party fact-checking toward Community Notes-style correction. That swaps one kind of institutional judgment for another, with the public now doing more of the visible correction work.

Deepfakes are now part of normal social risk

Deepfakes used to feel like a niche fraud problem. In 2026, they are a standard social-media risk. Keepnet Labs says more than 500,000 deepfakes were shared on social media in 2023, with 8 million projected to circulate in 2025. It also cites a human detection rate of just 24.5% for high-quality deepfake video.

The platform split is telling. Keepnet’s aggregation of industry data says YouTube accounts for 29.9% of tracked deepfake cases, followed by Instagram at 26.8%, Facebook at 18.8%, TikTok at 18.3%, and WhatsApp at 6.3%. Longer videos give attackers more room for face swaps, while short-form apps often see voice cloning and impersonation in comments, DMs, and replies.

- Average deepfake fraud incident cost: about USD 500,000

- YouTube share of tracked cases: 29.9%

- Instagram share of tracked cases: 26.8%

- Human detection rate for high-quality deepfake video: 24.5%

That is why the safest user behavior is boring and repetitive: verify a clip through the person’s official account, distrust urgent voice messages, and assume a viral video may be synthetic until proven otherwise. On social media in 2026, skepticism is a basic security habit.

Chatbots are pulling attention away from posting

One of the quieter changes is that AI chatbots are starting to compete with social apps for the same daily attention. Pew Research Center says about two-thirds of U.S. teens ages 13 to 17 have used an AI chatbot at least sometimes, while 22% of U.S. adults say they get health information from AI chatbots sometimes or more. Pew also found that half of U.S. adults feel more concerned than excited about AI’s growing presence in daily life.

Ofcom adds another useful signal: 54% of UK adults now use AI tools such as ChatGPT, Copilot, or Gemini, while the share of UK adult social media users who actively post, share, or comment fell to 49% from 61% in 2024. The two figures are measured on different bases, but they are moving in opposite directions.

That does not mean social media is dying. It means the center of gravity is shifting. People still consume social content, but more of them are using AI tools for answers, summaries, writing help, and quick advice instead of posting their own updates.

- UK adults using AI tools: 54%

- UK adult social media users actively posting, sharing, or commenting: 49%

- U.S. adults getting health info from AI chatbots: 22%

- U.S. adults getting health info from social media: 36%

What 2026 really means for social platforms

The biggest change is not one feature or one app. It is the way AI now sits inside every layer of social media: ranking, creation, moderation, fraud detection, and user support. The feed is more predictive, content is cheaper to produce, moderation is more automated, and trust is harder to maintain.

If you run a brand, the takeaway is practical: assume AI will shape both distribution and review. If you create content, expect machine-assisted production to be the norm rather than the exception. If you use social media like most people do, expect more synthetic content, more automated enforcement, and more reasons to verify what you see before you share it.

The next question is not whether AI will keep changing social media. It already has. The real question is which platforms can keep user trust while automating more of the system that decides what billions of people see every day.
