MCP Standard in 2026: Integrating AI Tools

MCP in 2026: how teams adopt the Model Context Protocol for AI tool integration, roll it out safely, and connect hosts with servers.

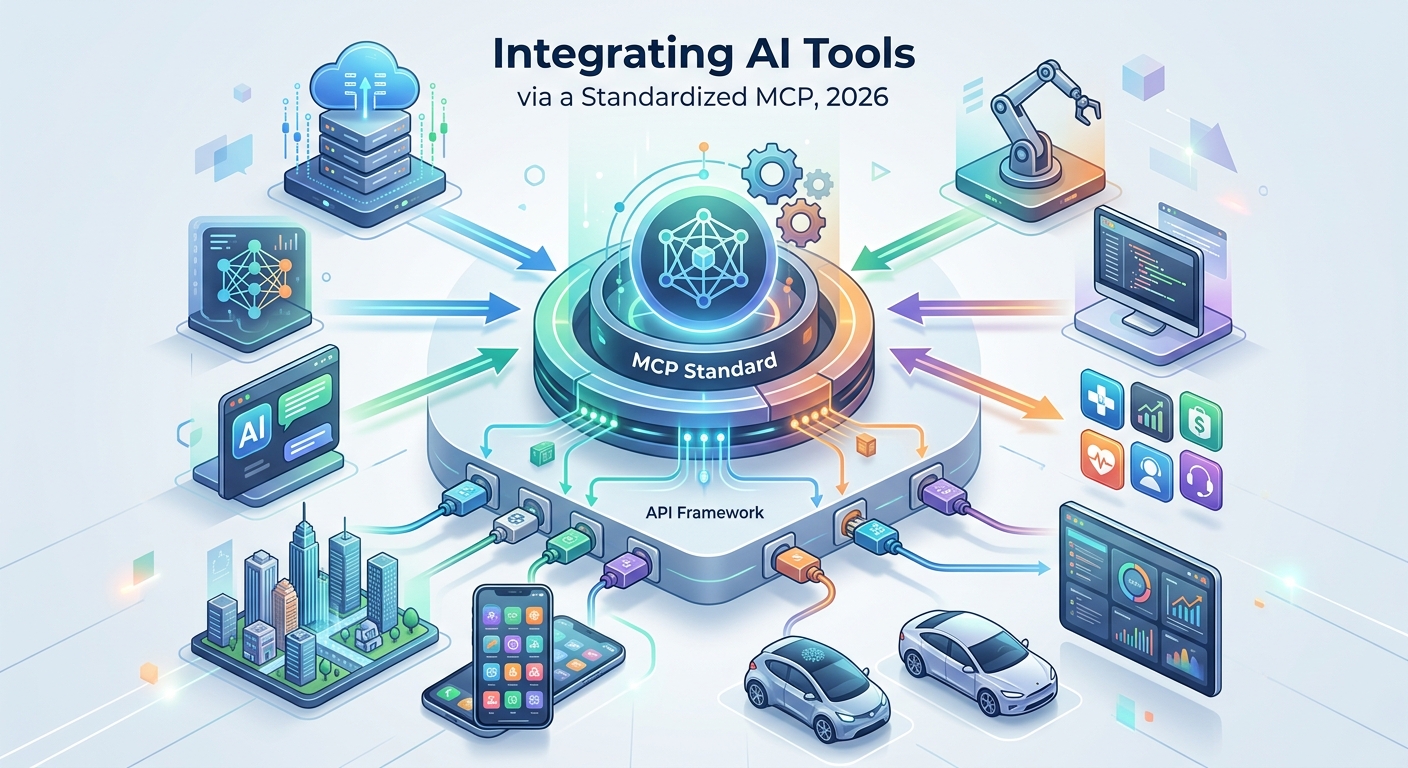

By 2026, the Model Context Protocol (MCP) has become a key bridge that lets AI hosts, such as IDEs and desktop assistants, discover and call tools running in separate server processes. This standardized boundary lets teams integrate across systems without replacing their existing databases or scrapers.

MCP's Role in Production

Many teams have grown tired of writing ad hoc "agent glue" for every new product, which typically means duplicated tool schemas and inconsistent logging. MCP moves this work into a few server processes that advertise their tools to any host that speaks the protocol. This standardizes tool orchestration: hosts manage the conversation, while servers handle retrieval and side effects.

- MCP provides a reusable integration surface.

- Tools and resources are advertised by server processes.

- Hosts orchestrate interaction while servers manage execution.
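To make the host/server split concrete, here is a minimal sketch of how a server might answer the two JSON-RPC 2.0 methods MCP defines for tools, `tools/list` (advertise the catalog) and `tools/call` (execute one tool). It uses only the Python standard library rather than an official SDK, skips the `initialize` handshake and the transport layer entirely, and the `search_docs` tool is hypothetical.

```python
# Hypothetical tool catalog; "search_docs" is illustrative, not part of MCP.
TOOLS = [
    {
        "name": "search_docs",
        "description": "Search internal documentation by keyword.",
        "inputSchema": {
            "type": "object",
            "properties": {"query": {"type": "string"}},
            "required": ["query"],
        },
    }
]


def search_docs(query: str) -> str:
    # Stand-in for real retrieval; a production server would query a datastore.
    return f"0 results for {query!r}"


def handle(request: dict) -> dict:
    """Dispatch one JSON-RPC 2.0 request the way an MCP tool server might."""
    req_id = request.get("id")
    method = request.get("method")
    if method == "tools/list":
        # The host discovers tools by reading this catalog.
        result = {"tools": TOOLS}
    elif method == "tools/call":
        params = request.get("params", {})
        if params.get("name") != "search_docs":
            return {"jsonrpc": "2.0", "id": req_id,
                    "error": {"code": -32602, "message": "Unknown tool"}}
        # The server, not the host, executes the tool and owns its side effects.
        text = search_docs(**params.get("arguments", {}))
        result = {"content": [{"type": "text", "text": text}], "isError": False}
    else:
        return {"jsonrpc": "2.0", "id": req_id,
                "error": {"code": -32601, "message": "Method not found"}}
    return {"jsonrpc": "2.0", "id": req_id, "result": result}


# Usage: the host lists tools, then calls one by name with JSON arguments.
listing = handle({"jsonrpc": "2.0", "id": 1, "method": "tools/list"})
call = handle({"jsonrpc": "2.0", "id": 2, "method": "tools/call",
               "params": {"name": "search_docs", "arguments": {"query": "retries"}}})
print(call["result"]["content"][0]["text"])  # 0 results for 'retries'
```

Keeping the catalog as plain data is what lets several hosts share one server: each host reads the same `tools/list` result instead of maintaining its own copy of the schema.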

Understanding the MCP Standard

MCP originated as an Anthropic initiative and is publicly documented. It is an open protocol with SDKs for languages such as TypeScript and Python. However, it has no ISO/IEC number, so it should be described as an open specification rather than an international standard.

"MCP is not an ISO standard, but an open specification gaining industry traction," says Yassine El Haddad, a software developer and automation specialist.

Hosts like Claude Desktop and Cursor connect to MCP servers over transports such as stdio or HTTP/SSE, depending on what each host version supports.
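With the stdio transport, the host launches the server as a subprocess and the two exchange JSON-RPC messages over stdin and stdout, one message per line with no embedded newlines. The sketch below assumes that newline-delimited framing and shows one way to write and read such messages; an in-memory buffer stands in for the real pipes, and the `echo` tool name is hypothetical.

```python
import io
import json


def write_message(stream, message: dict) -> None:
    # json.dumps without indent never emits a raw newline (newlines inside
    # strings are escaped as \n), so each message occupies exactly one line.
    stream.write(json.dumps(message, separators=(",", ":")) + "\n")
    stream.flush()


def read_messages(stream):
    """Yield one decoded JSON-RPC message per non-empty line."""
    for line in stream:
        line = line.strip()
        if line:
            yield json.loads(line)


# Usage: in a real host these would be the server subprocess's stdin/stdout.
buf = io.StringIO()
write_message(buf, {"jsonrpc": "2.0", "id": 1, "method": "tools/list"})
write_message(buf, {"jsonrpc": "2.0", "id": 2, "method": "tools/call",
                    "params": {"name": "echo", "arguments": {"text": "a\nb"}}})
buf.seek(0)
msgs = list(read_messages(buf))
print(len(msgs))  # 2
```

HTTP-based transports carry the same JSON-RPC messages but frame them differently, so only this framing layer changes between transports.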

Comparing MCP with Other Integration Methods

MCP offers a structured way to share servers across multiple hosts and maintain persistent tool catalogs. Inline function calls, by contrast, suit single-app deployments where prompt code and tools are tightly coupled.

- MCP: Shared servers, persistent catalogs, operational ownership.

- Inline function calls: Single app, single deployment, tight coupling.

- Workflow automation: Durable schedules, human approvals, branching.

MCP can trigger workflow automation systems like Make and n8n, but it does not replace them: they provide visual automation when steps extend beyond a single chat session.

A Practical Rollout Checklist

Implementing MCP requires a security-first mindset. Here are key considerations:

- Allowlist tools to minimize the attack surface.

- Store secrets outside prompts, where the model cannot read or leak them.

- Set timeouts and make tool calls idempotent so that failures and retries are handled safely.

- Log tool names, parameters, and outcomes for auditing.

- Pin server versions so that upgrades cannot silently change permissions.
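Several of these checks can be enforced in a single wrapper on the host side. The following is a minimal Python sketch, not an MCP SDK feature: `guarded_call`, `ALLOWED_TOOLS`, `PINNED_SERVER_VERSION`, and the `DOCS_API_TOKEN` environment variable are all hypothetical names, and the tool function is a local stand-in for a real server call.

```python
import logging
import os
from concurrent.futures import ThreadPoolExecutor, TimeoutError as FutureTimeout

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("mcp.audit")

# Hypothetical policy values; adjust to your own servers and tools.
ALLOWED_TOOLS = {"search_docs"}
PINNED_SERVER_VERSION = "1.4.2"
CALL_TIMEOUT_S = 5.0

_executor = ThreadPoolExecutor(max_workers=4)
_results: dict[str, str] = {}  # in-memory idempotency cache: key -> earlier result


def _get_secret() -> str:
    # Secrets come from the environment, never from the prompt or tool arguments.
    return os.environ.get("DOCS_API_TOKEN", "")


def guarded_call(server_version, tool, args, idempotency_key, fn):
    """Apply version pin, allowlist, idempotency, timeout, and audit logging."""
    if server_version != PINNED_SERVER_VERSION:
        raise RuntimeError(f"server version {server_version} is not pinned")
    if tool not in ALLOWED_TOOLS:
        log.warning("blocked tool=%s args=%s", tool, args)
        raise PermissionError(f"tool {tool!r} is not allowlisted")
    if idempotency_key in _results:
        # A retry with the same key returns the earlier result instead of
        # repeating the side effect.
        log.info("replay tool=%s key=%s", tool, idempotency_key)
        return _results[idempotency_key]
    future = _executor.submit(fn, _get_secret(), **args)
    try:
        result = future.result(timeout=CALL_TIMEOUT_S)
    except FutureTimeout:
        # The caller gets an error, but the worker thread keeps running.
        log.error("timeout tool=%s args=%s", tool, args)
        raise
    _results[idempotency_key] = result
    log.info("ok tool=%s args=%s outcome=%s", tool, args, result)
    return result


# Usage with a local stand-in for a real tool call.
calls = []


def search_docs(token, query):
    calls.append(query)
    return f"results for {query}"


guarded_call("1.4.2", "search_docs", {"query": "retries"}, "req-1", search_docs)
guarded_call("1.4.2", "search_docs", {"query": "retries"}, "req-1", search_docs)
print(len(calls))  # 1: the retry was replayed from the idempotency cache
```

The cache here lives in process memory, so it only deduplicates retries within one host session. A shared store would be needed for retries that cross process restarts.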

Ultimately, MCP in 2026 simplifies integration by reducing the need for bespoke glue code and by making clear who owns each side effect. Paired with web-data infrastructure such as Apify or Firecrawl, it lets teams bring open web data into their AI applications.

Looking Ahead

MCP adoption will likely keep growing as more teams look for a consistent way to manage tool interactions. Teams that track revisions to the specification and keep their hosts and servers aligned with them will be best placed to benefit.
