Why Databricks Model Serving is the right default for production infe…

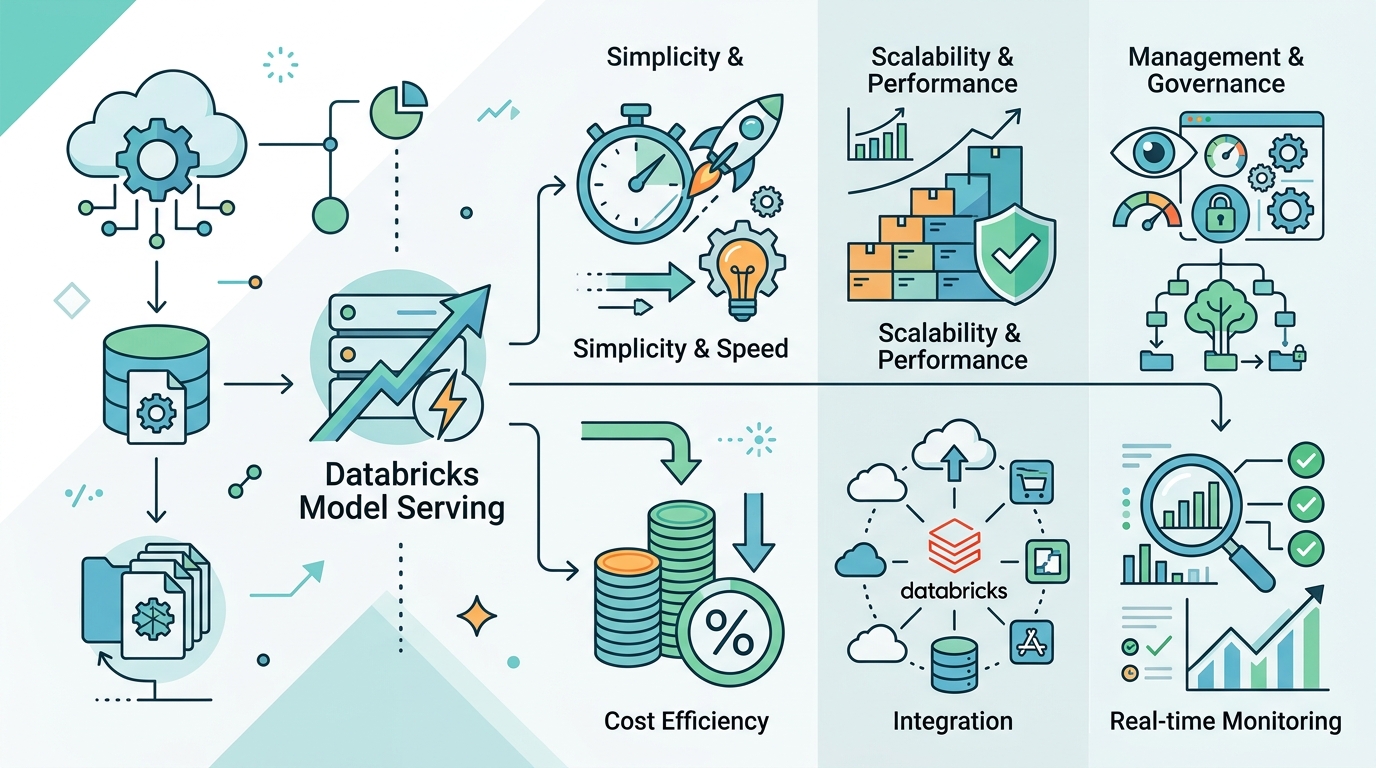

Databricks Model Serving is the right default for production inference because it unifies deployment, governance, and scaling across model types.

Databricks Model Serving is the right default for teams that need to put models into production without building a separate platform for every model, provider, and workload. The appeal is not just that it serves custom MLflow models, foundation models, and external LLMs from one place. It is that it turns model operations into a managed system with a single REST API, a single UI, autoscaling serverless compute, and built-in governance. That matters because the real cost of model deployment is not the first endpoint. It is the sprawl that follows when every team wires up its own infrastructure, access controls, observability, and patching process.

One platform beats a patchwork of model stacks

The strongest case for Model Serving is consolidation. Databricks lets you manage custom models, hosted foundation models, and external models through the same serving layer, which means one operational pattern instead of three. A team can register an MLflow model in Unity Catalog, expose it as a REST endpoint, and later add an external model like GPT-4 through the same governance surface. That reduces the number of tools engineers must learn and the number of integration points that can fail.
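
To make the "one operational pattern" point concrete, here is a minimal Python sketch of what calling a served model over REST can look like. The workspace URL, endpoint name, token, and feature names are hypothetical placeholders. The `/serving-endpoints/<name>/invocations` path and the `dataframe_records` body follow the scoring request format documented for custom models, but check it against your own workspace before relying on it. The request is built here, not sent.

```python
import json
import urllib.request


def build_invocation_request(workspace_url, endpoint_name, token, records):
    """Build (but do not send) a scoring request for a serving endpoint.

    Every value is supplied by the caller; nothing here refers to a
    real workspace or model.
    """
    url = f"{workspace_url.rstrip('/')}/serving-endpoints/{endpoint_name}/invocations"
    body = json.dumps({"dataframe_records": records}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        method="POST",
        headers={
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
    )


# Hypothetical values for illustration only.
request = build_invocation_request(
    "https://example.cloud.databricks.com/",
    "churn-classifier",
    "dapi-REDACTED",
    [{"tenure_months": 12, "plan": "pro"}],
)
print(request.full_url)
# Sending it would be: urllib.request.urlopen(request)
```

The useful property is that only the endpoint name and the payload vary between models, so the same client code and the same credential handling can be reused across teams.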

This matters most when a company moves from experiments to production. A proof of concept can tolerate one-off scripts and hand-built API wrappers. A production system cannot. Once product, analytics, and ML teams all need model access, a unified serving layer becomes a force multiplier. Databricks also exposes AI Functions and `ai_query` for batch inference directly from SQL, which is a practical advantage for organizations that already run data pipelines in the warehouse. The platform is not just serving inference. It is collapsing the distance between data, model, and application.
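
As a rough illustration of batch inference from SQL, the sketch below builds a query that calls `ai_query` with an endpoint name and a column to score. The endpoint, table, and column names are hypothetical, and the helper `batch_inference_sql` is invented for this example. It only assembles the statement; in a Databricks notebook the string could then be passed to `spark.sql`.

```python
def batch_inference_sql(endpoint_name, source_table, text_column):
    """Return a SQL statement that scores every row with ai_query.

    Identifiers are interpolated directly, so pass only trusted values.
    """
    return (
        f"SELECT {text_column}, "
        f"ai_query('{endpoint_name}', {text_column}) AS prediction "
        f"FROM {source_table}"
    )


# Hypothetical endpoint, table, and column names.
sql = batch_inference_sql("sentiment-endpoint", "main.reviews.raw", "review_text")
print(sql)
```

Because the scoring call is just another SQL expression, it can sit inside an existing pipeline without a separate inference service.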

Governance is the real product, not just hosting

Model Serving is compelling because it treats governance as a first-class feature rather than an afterthought. The Serving UI centralizes permissions, usage limits, and monitoring for all endpoints, including externally hosted ones. That is a serious advantage in enterprises where model access must be controlled across teams and vendors. If one group uses an internal classifier, another uses a hosted foundation model, and a third uses an external API, the default failure mode is policy fragmentation. Databricks pushes against that by putting all of it behind AI Gateway and a single control plane.
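
To show what a usage limit means in practice, here is a conceptual fixed-window rate limiter in Python. It is not Databricks' implementation and does not use any Databricks API. The class name, the per-team key, and the limit values are assumptions chosen for illustration.

```python
from collections import defaultdict


class FixedWindowLimiter:
    """Allow at most `limit` calls per key within each time window."""

    def __init__(self, limit, window_seconds):
        self.limit = limit
        self.window_seconds = window_seconds
        self._counts = defaultdict(int)  # (key, window index) -> calls seen

    def allow(self, key, now):
        """Return True and count the call if `key` is under its limit."""
        window = int(now // self.window_seconds)
        bucket = (key, window)
        if self._counts[bucket] >= self.limit:
            return False
        self._counts[bucket] += 1
        return True


# Two calls per minute for a hypothetical team; timestamps are in seconds.
limiter = FixedWindowLimiter(limit=2, window_seconds=60)
decisions = [limiter.allow("team-a", now=t) for t in (0, 10, 20, 61)]
print(decisions)  # [True, True, False, True]
```

The argument for a central control plane is that this kind of logic lives in one place and applies to every endpoint, instead of being reimplemented per team. A real limiter would also expire old windows, which this sketch omits.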

Security claims are only useful when they are operationalized, and Databricks does that in ways that map to real enterprise concerns. Requests are logically isolated, authenticated, and authorized. Data is encrypted at rest with AES-256 and in transit with TLS 1.2+. For paid accounts, user inputs and outputs are not used to train Databricks services. Those are not decorative assurances. They are the baseline requirements for any organization that wants to let internal teams use models without creating a compliance fire drill. The same is true of network policies for serverless egress control, which give security teams a lever they can actually enforce.

Production inference needs scale, but it also needs discipline

Autoscaling and low-latency serving are useful only if they are stable under real load, and that is where Model Serving earns its keep. Databricks says the service can support over 25K queries per second with overhead latency under 50 ms. Whether every deployment needs that level of throughput is beside the point. The point is that the platform is designed for high-availability production use, so teams are not forced to choose between developer convenience and operational seriousness. Serverless compute also means infrastructure does not become the bottleneck every time traffic shifts.
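
One rough way to read those figures is Little's law, which says average concurrency equals arrival rate multiplied by time in the system. The sketch below applies it to the quoted numbers, treating 50 ms as an upper bound on overhead. The 150 ms model execution time is an assumed figure for illustration, not something Databricks states.

```python
def in_flight_requests(queries_per_second, latency_ms):
    """Little's law: average concurrency = arrival rate x time in system."""
    return queries_per_second * latency_ms / 1000


# 25K QPS with 50 ms of serving overhead alone.
overhead_only = in_flight_requests(25_000, 50)
# Same load with a hypothetical 150 ms of model execution time added.
with_model_time = in_flight_requests(25_000, 50 + 150)
print(overhead_only, with_model_time)  # 1250.0 5000.0
```

At that scale, thousands of requests are in flight at any moment, which is why the capacity question belongs with an autoscaling platform and not with a hand-sized fleet.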

There is another detail that reveals the platform’s production posture: Databricks does not patch existing model images in place. A new model image created from a new model version carries the latest patches, but the old image stays untouched to avoid destabilizing live deployments. That is the correct tradeoff. Model serving is not a laptop app, and production inference should not behave like a rolling desktop update. Stability matters more than forcing every endpoint onto the newest image immediately. The cost of breaking a live model is higher than the cost of requiring a new version for patched runtime changes.

The counter-argument

The best objection is that Model Serving centralizes too much and can make teams dependent on one vendor’s abstractions, pricing, and limits. That concern is legitimate. If an organization only needs a single custom model behind a simple API, a lighter-weight deployment stack may be cheaper and easier to reason about. There is also a real risk that a managed serving layer encourages teams to move faster than their governance model can support, especially when they start mixing internal models with external LLMs.

Another fair criticism is that managed serving can hide complexity rather than eliminate it. Autoscaling, throughput tuning, model versioning, region constraints, and endpoint limits still exist. If a team wants absolute control over runtime behavior, custom networking, or image lifecycle, they may prefer to own the serving stack directly. In that sense, Databricks is not the answer to every deployment problem. It is a strong answer to the problem of operating many models across many teams with real security and governance needs.

That counter-argument does not defeat the platform. It defines its boundary. Model Serving is the right default when the problem is organizational scale, not hobbyist simplicity. The moment a company needs one policy surface for multiple model types, one scalable endpoint model, and one place to monitor access and cost, the managed approach wins. The vendor tradeoff is real, but so is the operational debt of stitching together separate tools for hosting, auth, scaling, and monitoring. In production, that debt compounds faster than platform lock-in.

What to do with this

If you are an engineer, treat Model Serving as the baseline for production inference when your team needs fast rollout, governed access, and support for more than one model type. If you are a PM, push for a serving strategy that includes permissions, usage limits, cost visibility, and versioning from day one instead of bolting them on after launch. If you are a founder, optimize for the operating model you will need at ten models and three teams, not the one that looks cheapest for your first demo. The right question is not whether you can host a model yourself. It is whether you want to own the full burden of operating it safely at scale.
