Why OpenAI’s cyber model will matter more than Anthropic’s Mythos

OpenAI’s GPT-5.5-Cyber is a gated, practical security tool, and that makes it the right move.

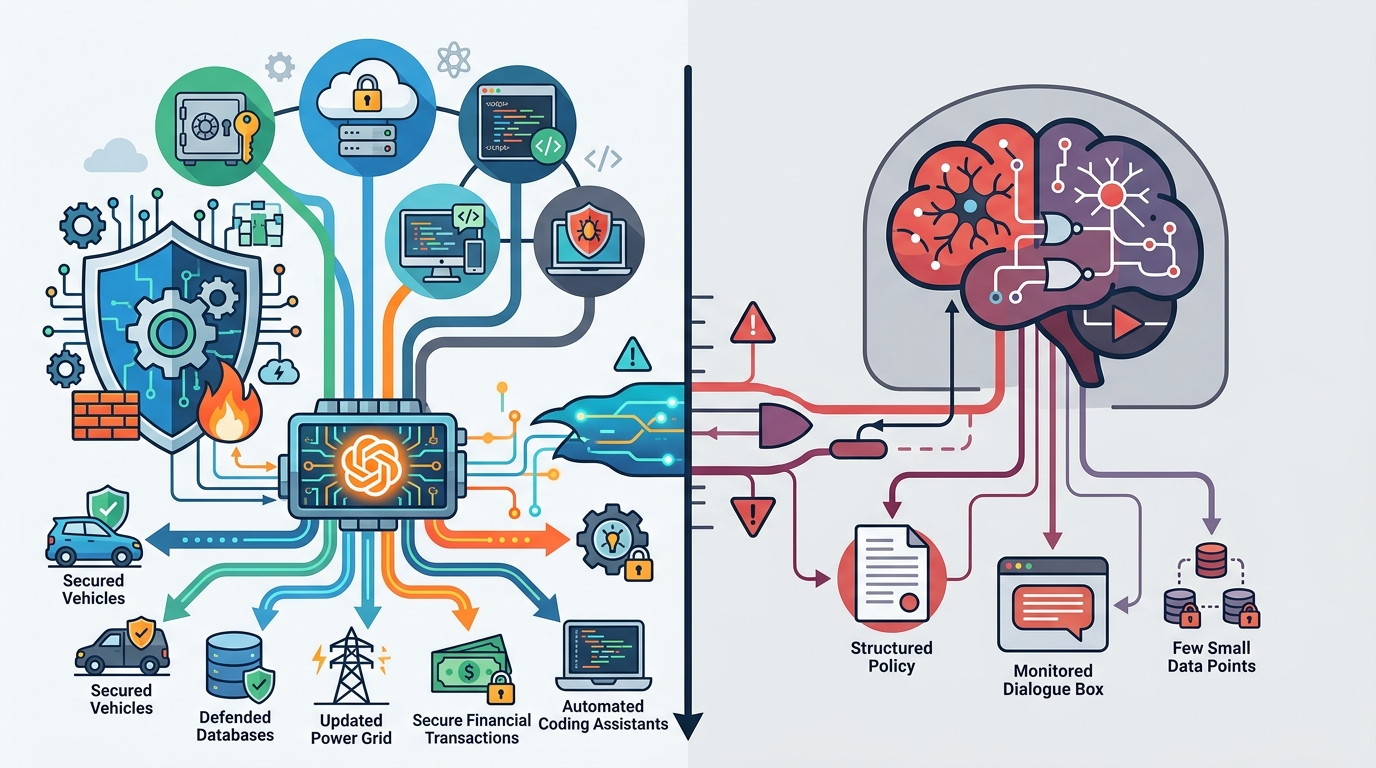

OpenAI’s GPT-5.5-Cyber is a gated security tool built to find and patch vulnerabilities.

OpenAI is right to ship GPT-5.5-Cyber as a restricted, professional-grade security model, because the first useful AI cyber products will be the ones that help trusted practitioners work faster, not the ones that promise broad autonomy to everyone. According to a Politico report, the model is initially limited to vetted cybersecurity professionals and is designed to help find and patch security vulnerabilities. That is the correct product shape. Cybersecurity is one of the few domains where access control is not a feature add-on but the core of the product, and OpenAI is treating it that way.

First argument: security tools need narrow distribution before they need scale

OpenAI is starting with vetted users because cyber models are not generic chatbots with a new label. They can be used for defense, but they can also lower the barrier to abuse. A model that helps identify weaknesses in code, systems, or configurations is only responsible if the initial audience is limited to people who already operate under professional norms, legal obligations, and audit trails. That is why the gated rollout matters more than the model name.

The industry has already learned this lesson in reverse. When broad-purpose models were released without tight workflow controls, vendors spent months bolting on guardrails after the fact. In cyber, that sequence is dangerous. A model that can assist with patching, triage, and analysis should be embedded inside a controlled environment from day one, because the downside of misuse is immediate and the upside of speed is only real when the user is accountable.

Second argument: the real competition is not capability, it is workflow ownership

The headline comparison to Anthropic’s Mythos is less important than the product strategy underneath it. OpenAI is not merely trying to prove it can generate better security advice. It is trying to become the place where security teams do their work. If GPT-5.5-Cyber helps analysts move from alert to root cause to patch recommendation inside one interface, that is a stronger moat than a benchmark win. Security teams buy time, not demos.

That is why the “find and patch” framing is so important. Most cyber tools stop at detection or explanation. The value is in closing the loop: identify the issue, validate the risk, propose the fix, and hand the human a concrete next step. A model that can participate in that full cycle is not just another assistant. It becomes part of the operating system of a security team, which is where durable product power lives.

The counter-argument

The strongest case against OpenAI’s approach is that gated access slows adoption and leaves the field to competitors that package security help more aggressively. Security teams are already overloaded, and they often prefer tools that are easy to deploy and easy to share across the organization. A restricted model can look cautious to the point of irrelevance if it takes too long to prove value or if only a small subset of practitioners can use it.

There is also a legitimate worry that “vetted professionals only” becomes a marketing shield rather than a meaningful safety control. If the model is simply a larger general model with a cyber wrapper, then the gate buys optics, not protection. In that case, competitors that build specialized workflows, stronger logging, and tighter permissioning will win because they solve the operational problem instead of just naming it.

That critique is real, but it does not outweigh the case for a gated rollout. Cyber is one of the few markets where trust, provenance, and controlled access are product features, not bureaucracy. OpenAI does not need mass adoption on day one; it needs credible adoption among people who can validate the model under real constraints. If the tool is actually good at finding and patching issues, the restricted launch is an advantage because it prevents reckless use while generating high-quality feedback from the users who matter most.

What to do with this

If you are an engineer, PM, or founder building in security, stop chasing the broadest possible launch and design for controlled usefulness first. Build access controls, audit logs, role-based permissions, and human-in-the-loop workflows into the product from the start. Optimize for completing one painful security job faster and with fewer errors, then expand only after you can prove the model improves outcomes inside a real team. In cyber, trust is not a go-to-market tactic; it is the product.
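To make that advice concrete, here is a minimal sketch of what role-based permissions, an audit log, and a human approval step might look like around a model-suggested patch. It is an illustration only, not a description of how GPT-5.5-Cyber or any real product works: the `Role` values, the `PERMISSIONS` map, and the `Workflow` class are all hypothetical names invented for this example.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from enum import Enum
from typing import Dict, List, Optional


class Role(Enum):
    ANALYST = "analyst"
    REVIEWER = "reviewer"


# Hypothetical permission map: which roles may perform which actions.
PERMISSIONS = {
    "propose_patch": {Role.ANALYST, Role.REVIEWER},
    "approve_patch": {Role.REVIEWER},
}


@dataclass
class PatchProposal:
    finding: str
    diff: str
    proposed_by: str
    approved_by: Optional[str] = None  # stays None until a human signs off


@dataclass
class Workflow:
    roles: Dict[str, Role]                       # user name -> assigned role
    log: List[dict] = field(default_factory=list)  # append-only audit trail

    def _authorize(self, user: str, action: str, extra_ok: bool = True) -> bool:
        # Unknown users have no role, so they are denied by default.
        allowed = self.roles.get(user) in PERMISSIONS[action] and extra_ok
        # Every attempt is logged, including the denied ones.
        self.log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "user": user,
            "action": action,
            "allowed": allowed,
        })
        return allowed

    def propose(self, user: str, finding: str, diff: str) -> PatchProposal:
        if not self._authorize(user, "propose_patch"):
            raise PermissionError(f"{user} may not propose patches")
        return PatchProposal(finding, diff, proposed_by=user)

    def approve(self, user: str, proposal: PatchProposal) -> PatchProposal:
        # Human in the loop: the approver must be a different person
        # from whoever proposed the patch.
        independent = user != proposal.proposed_by
        if not self._authorize(user, "approve_patch", extra_ok=independent):
            raise PermissionError(f"{user} may not approve this patch")
        proposal.approved_by = user
        return proposal


wf = Workflow({"ana": Role.ANALYST, "rev": Role.REVIEWER})
patch = wf.propose("ana", "SQL injection in login handler", "<diff text>")
wf.approve("rev", patch)  # an analyst calling approve() would raise PermissionError
```

The design choice worth noticing is that denial is the default and logging is unconditional: an unknown user or a self-approval attempt fails, and the failed attempt still lands in the audit trail.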
