Why DeepMind workers are right to unionize over Pentagon AI

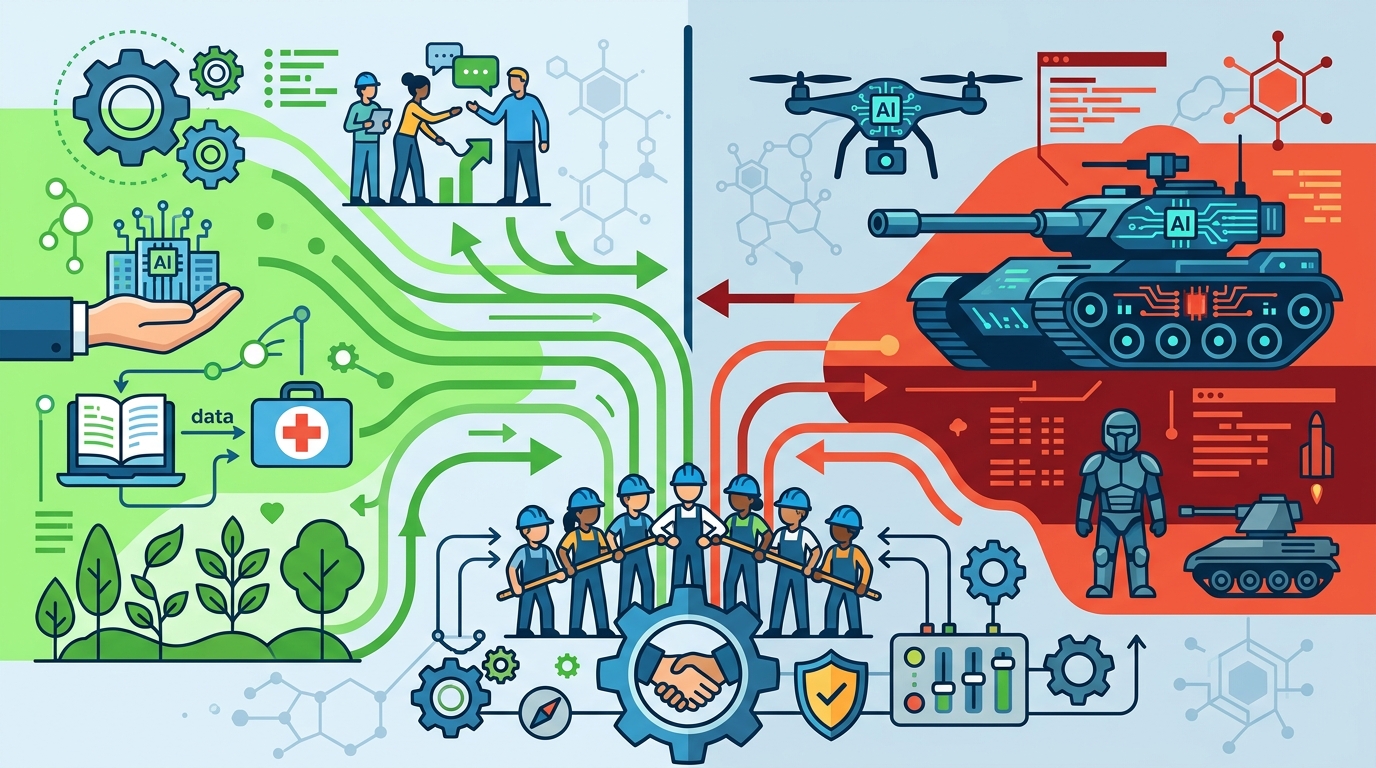

DeepMind workers should unionize because AI labs need worker power to resist military misuse, not just management ethics promises.

DeepMind’s UK staff are right to unionize, and they are right to make the Pentagon deal part of the case.

Google’s latest defense contract is not a side issue or a PR distraction. It is a concrete example of why AI workers no longer trust executive assurances about “responsible” deployment. The company has already dropped its pledge not to develop militarized AI, and the Pentagon agreement’s language against domestic mass surveillance and autonomous weapons is explicitly non-binding. That is not a safeguard. It is a statement of intent with no enforcement teeth.

Worker power is the only leverage that matches AI risk

The first argument for unionization is simple: the people building frontier systems need a formal way to slow down harmful use before it becomes policy. AI labs move fast, management moves faster, and individual objections get swallowed by hierarchy. A union gives engineers, researchers, and product staff the collective leverage to draw lines they will not cross. In a field where one model release can shape surveillance, targeting, and information control across governments, “speak up internally” is not enough.

Google has already shown how limited informal protest is. In 2018, employee backlash over Project Maven helped push the company to drop out of a Pentagon drone-footage analysis contract by declining to renew it, and to publish AI principles. That episode proved two things at once: workers can influence military AI decisions, and management will reverse course only when pressure becomes costly. Union recognition turns that episodic pressure into durable power instead of a one-off revolt that fades once the news cycle moves on.

Ethics policies without labor rights are theater

The second argument is that AI ethics boards and public principles fail when they are not backed by worker rights. Google’s own history makes the point. It once promised not to design AI for weapons, then later abandoned that pledge. That is the lifecycle of corporate ethics in a competitive market: announce restraint, redefine the language, then ship the contract. If staff cannot refuse work on moral grounds, the company can always route the most troubling projects through people who are least able to push back.

The Google DeepMind workers are asking for exactly the kind of protections that make ethics real: a commitment not to build technology whose primary purpose is to harm people, an independent ethics oversight body, and an individual right to refuse morally objectionable projects. Those are not radical demands. They are the minimum needed when the same tools can support scientific research, state surveillance, and battlefield targeting. The workers’ concern is not abstract. They are responding to a world in which the Pentagon wants AI on classified networks with fewer restrictions, and where companies compete to supply it.

The counter-argument

The strongest objection is that unionizing a frontier AI lab risks politicizing research and slowing work that might be valuable for medicine, science, or national security. Google can argue, with some force, that it needs flexibility to serve lawful government customers and that employees should not be able to veto every controversial deployment. The company also says it values constructive dialogue, and it is true that not every defense-related project is automatically abusive.

That argument fails because the issue is not whether governments can buy AI. The issue is whether the people building the system have any meaningful say when the buyer is a military apparatus with a record of using technology in ways workers find unacceptable. The Pentagon deal is especially hard to defend as “just another customer” when the contract language is non-binding and the administration is pushing for fewer restrictions on classified use. A union does not ban research. It creates a check on the most dangerous applications, and that check is exactly what a company cannot be trusted to provide for itself.

What to do with this

If you are an engineer or researcher in AI, stop treating labor organizing as separate from safety work. Join the union drive, push for binding refusal rights, and insist that deployment review includes the people who understand the system best. If you are a PM or founder, build processes that survive pressure from defense customers: clear escalation paths, enforceable use restrictions, and worker representation in high-risk decisions. If you are serious about “responsible AI,” give workers power, not slogans.