Why Microsoft’s agentic security model beats single-model AI

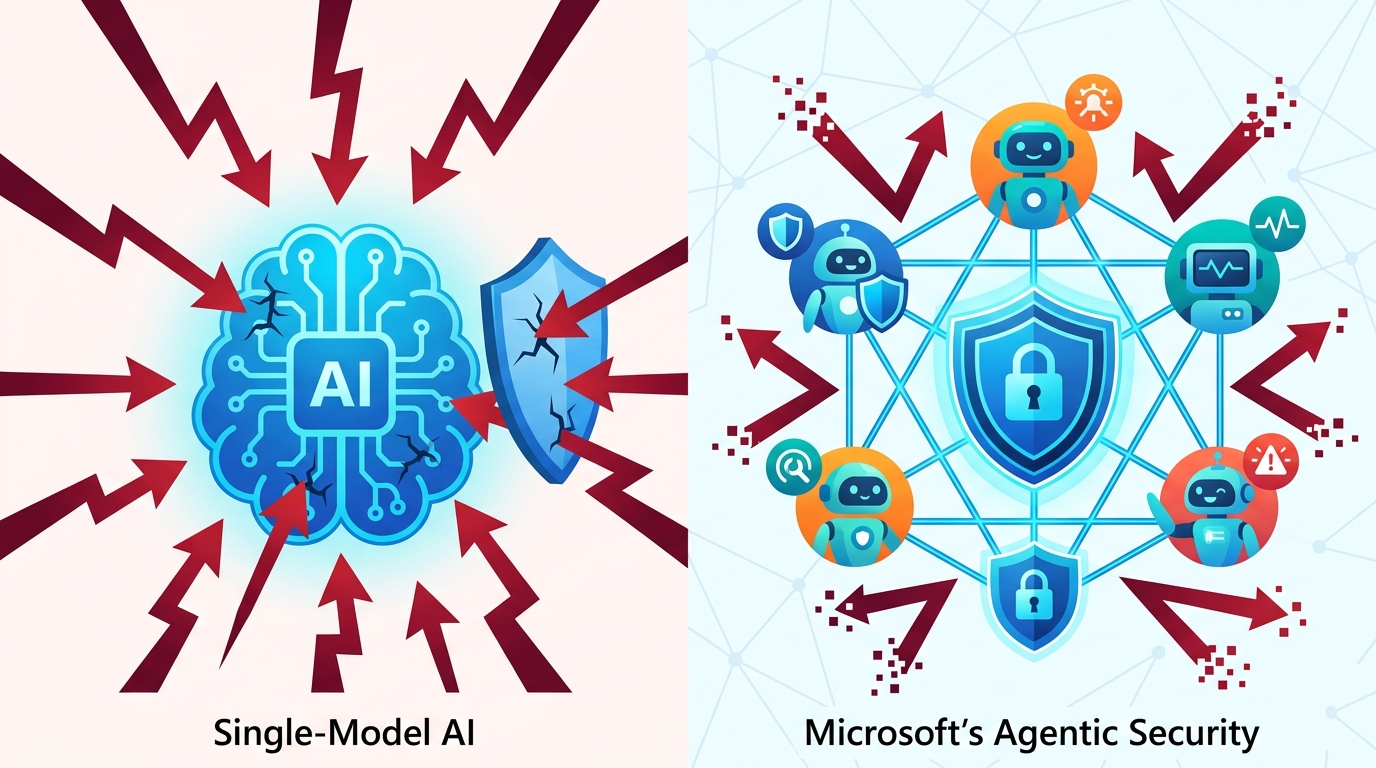

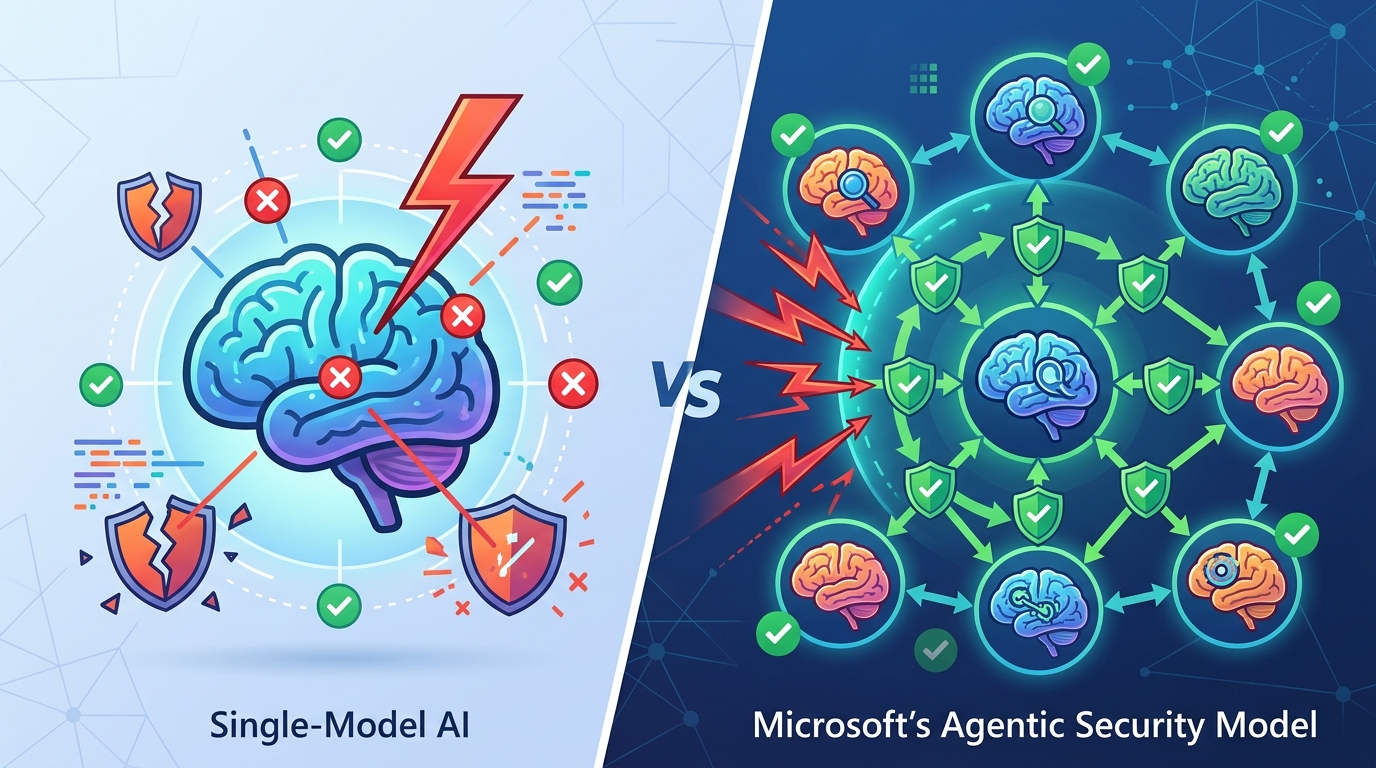

Microsoft is right: multi-model agentic security beats single-model AI for real vulnerability discovery.

Microsoft’s new agentic security stack is not a demo. It is the model for how AI should find real bugs: through a multi-model pipeline that validates, debates, deduplicates, and proves findings before anyone treats them as signal. The company says its MDASH harness found 16 new Windows networking and authentication vulnerabilities, including four Critical remote code execution flaws, and scored 88.45% on CyberGym, the public benchmark of 1,507 real-world vulnerabilities. The benchmark score is not the point by itself. The point is that Microsoft is showing the industry that the winning security system is an orchestrated workflow, not a single frontier model with a clever prompt.

First argument: security work needs a system, not a chat prompt

MDASH’s core design choice is the right one. Microsoft says the harness runs more than 100 specialized agents across a pipeline that prepares the target, scans candidate paths, validates reachability, deduplicates equivalents, and then proves exploitability where possible. That is exactly what serious vulnerability research looks like in practice. A human researcher does not jump from source code to exploit on the first read, and an AI system should not either. The value is in the chain of custody from suspicion to proof.
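
To make the staged flow concrete, here is a minimal Python sketch of the validate, deduplicate, and prove steps. It is not Microsoft's implementation: the `Finding` fields, the function names, and the sample data are all hypothetical, and the reachability and proof results are assumed to come from upstream agents that are not modeled here.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Finding:
    # All field names here are hypothetical, chosen for illustration.
    function: str                # where the suspected bug lives
    bug_class: str               # e.g. "heap-overflow"
    reachable: bool              # result of an assumed reachability check
    proof: Optional[str] = None  # reproducer or crash input, if any


def validate(findings: List[Finding]) -> List[Finding]:
    """Keep only candidates that attacker-controlled input can reach."""
    return [f for f in findings if f.reachable]


def deduplicate(findings: List[Finding]) -> List[Finding]:
    """Collapse findings that share a function and bug class."""
    unique = {}
    for f in findings:
        key = (f.function, f.bug_class)
        # Prefer the copy that already carries a proof.
        if key not in unique or (f.proof and not unique[key].proof):
            unique[key] = f
    return list(unique.values())


def prove(findings: List[Finding]):
    """Separate findings that carry a proof from those that do not."""
    proven = [f for f in findings if f.proof]
    unproven = [f for f in findings if not f.proof]
    return proven, unproven


def run_pipeline(candidates: List[Finding]):
    return prove(deduplicate(validate(candidates)))


# Hypothetical candidates, as if emitted by a scanning stage.
candidates = [
    Finding("parse_header", "heap-overflow", True),
    Finding("parse_header", "heap-overflow", True, proof="crash-001.bin"),
    Finding("log_event", "use-after-free", False),
    Finding("auth_check", "integer-overflow", True),
]
proven, unproven = run_pipeline(candidates)
print([f.function for f in proven])    # ['parse_header']
print([f.function for f in unproven])  # ['auth_check']
```

Four raw candidates shrink to one proven finding and one that still needs work. The unreachable candidate never reaches triage, and the duplicate is folded into the copy that has a reproducer.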

The StorageDrive test makes that case concrete. Microsoft used a private driver with 21 deliberately injected vulnerabilities and reports that MDASH found all 21 with zero false positives. That is the kind of result that matters to a security team, because false positives are not a rounding error in this field. They waste triage time, erode trust, and bury real issues under noise. A system that can stay precise on unseen code is not merely impressive; it is operationally useful.
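
In metric terms, the reported StorageDrive result is perfect precision and perfect recall. The short Python sketch below works through that arithmetic with the article's figures (21 planted bugs, 21 found, zero false positives). The second call uses invented numbers for a hypothetical noisy scanner, included only for contrast.

```python
def precision_recall(true_positives, false_positives, false_negatives):
    """Return (precision, recall) from raw triage counts."""
    reported = true_positives + false_positives
    actual = true_positives + false_negatives
    precision = true_positives / reported if reported else 0.0
    recall = true_positives / actual if actual else 0.0
    return precision, recall


# StorageDrive as reported: 21 planted, 21 found, 0 false positives.
print(precision_recall(21, 0, 0))   # (1.0, 1.0)

# Hypothetical noisy scanner: same 21 real bugs, plus 79 false alarms.
print(precision_recall(21, 79, 0))  # (0.21, 1.0)
```

Both tools have the same recall, but with the hypothetical scanner a triage engineer would have to read roughly five reports to find each real bug.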

Second argument: the ensemble beats the solo model

Microsoft’s strongest claim is not that one model got smarter. It is that the harness got better by mixing model roles. The company says MDASH uses frontier models as heavy reasoners, distilled models for high-volume passes, and a separate frontier model as an independent counterpoint. That architecture is the real breakthrough. In security, disagreement is not a bug in the process. It is evidence. If one model flags a path and another cannot refute it, the finding gets stronger. That is a much better decision rule than trusting whichever model sounds most confident.
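
The decision rule described above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not MDASH's actual logic: the `debate` function and the stub challengers are hypothetical stand-ins for independent models, and a real system would call models rather than match keywords.

```python
from typing import Callable, Dict, List, Optional

# A challenger returns an objection string, or None if it cannot refute.
Challenger = Callable[[str], Optional[str]]


def debate(finding: str, challengers: List[Challenger]) -> Dict[str, object]:
    """A finding survives only if no challenger produces an objection."""
    objections = [c(finding) for c in challengers]
    objections = [o for o in objections if o is not None]
    return {
        "finding": finding,
        "survived": not objections,
        "objections": objections,
    }


# Stub challengers standing in for independent models (hypothetical logic).
def bounds_checker(finding: str) -> Optional[str]:
    return "length is checked by the caller" if "checked" in finding else None


def reachability_checker(finding: str) -> Optional[str]:
    return "code path is dead" if "unused" in finding else None


challengers = [bounds_checker, reachability_checker]
strong = debate("unbounded memcpy in parse_header", challengers)
weak = debate("checked copy in unused helper", challengers)
print(strong["survived"])  # True
print(weak["objections"])
```

Note that no confidence score appears anywhere in the rule. A finding is promoted because it survived attempts to refute it, and the recorded objections give a human reviewer a starting point when it did not.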

The benchmark numbers back that up. Microsoft reports 96% recall against five years of confirmed MSRC cases in clfs.sys and 100% in tcpip.sys, plus the top CyberGym score by roughly five points over the next entry. Those are not vanity metrics. They show that a multi-model pipeline can generalize across real historical bugs, not just toy examples or curated challenge sets. For defenders, that is the difference between a flashy lab result and a system that can sit inside an engineering workflow and keep producing value as the codebase, the model mix, and the threat surface change.

The counter-argument

The best objection is straightforward: this is still Microsoft grading Microsoft. The company owns the code, the benchmark story, the validation pipeline, and the release narrative. Private codebases and planted bugs are not the same as hostile, messy, external reality. A vendor can optimize for its own environment and still miss the kinds of bugs that matter most in open ecosystems, third-party software, or attacker-adaptive settings. There is also a real cost question. More than 100 agents, multiple models, plugins, proof stages, and human oversight sound expensive and complex compared with a single-model scanner that is cheaper to run.

That objection is fair, but it does not defeat the argument. Security is not judged by elegance; it is judged by whether the system finds exploitable bugs without drowning teams in junk. Microsoft is not claiming MDASH replaces humans or every other scanner. It is claiming the opposite: the model is only one part of the product, and the harness is what makes the model useful. If the system can demonstrate high recall, zero false positives on planted vulnerabilities, and proven findings in a production codebase that ships to billions of users, then the added complexity is justified. In security, a more complex pipeline that works is better than a simple one that guesses.

What to do with this

If you are an engineer or security leader, stop buying AI security tools as if the model alone is the product. Ask vendors how they validate findings, how they deduplicate, how they prove exploitability, what kinds of agents they use, and how their pipeline handles model churn. If you are a founder building in this space, copy the operating logic, not the marketing: make the system multi-stage, make disagreement part of the signal, and make proof the finish line. The lesson from MDASH is blunt: AI security becomes real when it behaves like a disciplined research team, not a single autocomplete box.
