Why OpenAI’s cookie defaults are a trust mistake

OpenAI should not enable marketing cookies by default for free ChatGPT users.

OpenAI’s decision to turn on marketing cookies by default for free users is a trust mistake, not a harmless growth tweak.

The company told users it will now use cookies to promote OpenAI products and services on other websites, and WIRED found those settings were on by default for free accounts. That matters because ChatGPT is not a casual content site. It is a product people use to draft emails, write code, and handle sensitive work. When a company that sits inside that workflow starts nudging free users across the web, the privacy line stops looking like a product detail and starts looking like a business model.

Default settings reveal the real power balance

Defaults matter because most people never change them. OpenAI knows that, which is why switching marketing tracking on by default for free users is so revealing. To opt out, a user has to go hunting through Settings > Data Controls > Marketing Privacy. That is not a neutral choice architecture. It is a deliberate funnel that turns passive consent into an acquisition channel.

The company's reassurance that these users' conversations are not shared with marketing partners does not make the default easier to defend. The issue is not just chat transcripts. OpenAI says it may share limited identifiers, such as cookie IDs or device IDs, to measure ad conversions and promote its products on third-party properties. That is still tracking, still profiling, and still a one-way extraction of value from free users, who are paying with attention and behavioral data.

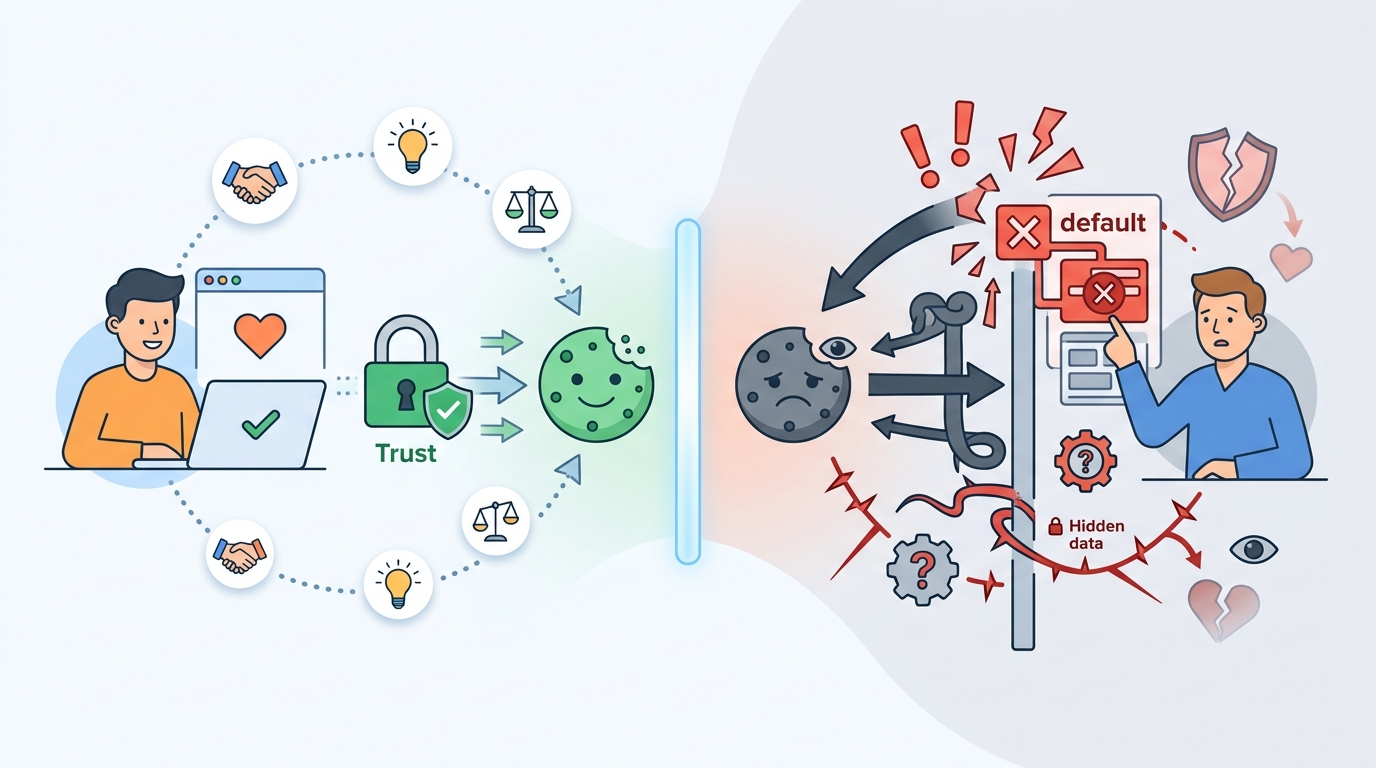

Privacy promises lose force when the business shifts

OpenAI has spent years building its brand around usefulness and restraint, but this policy change pushes it closer to the ad-tech playbook. The company is already experimenting with ads inside ChatGPT, and now it is extending that logic outside the app. The pattern is clear: free users are no longer just product users; they are a growth segment to be retargeted until they convert.

That creates a credibility problem. If a company says it does not sell personal data but also says it may share limited information with select marketing partners for targeted advertising, the distinction is legalistic, not reassuring. Users do not care whether the label is "sale," "sharing," or "cross-context behavioral advertising." They care that their use of a tool is being turned into ad infrastructure. Once that happens, every privacy pledge sounds conditional.

The ad-tech logic does not belong in a trust product

OpenAI is not a social network where surveillance ads are the price of admission. It is a utility that people invite into work, study, and personal planning. That makes the move more corrosive than the same tracker on an ordinary website. A search engine can argue that ads are part of the bargain. A chatbot that drafts sensitive text and stores user history cannot pretend the same bargain feels natural.

There is also a strategic cost. The more OpenAI behaves like a conventional ad platform, the more it invites the same distrust that has damaged the rest of the internet. Users already worry about prompt retention, model training, and data leakage. Adding default marketing cookies tells them, correctly, that the company sees their free usage as a monetization surface first and a relationship second.

The counter-argument

OpenAI’s defense is straightforward: free services need revenue, and targeted marketing is cheaper than forcing every user into a subscription. The company also says it does not share conversations with advertisers, only limited identifiers, and that users can opt out in settings. On that reading, the policy is a standard growth tactic, not a privacy breach.

There is a real business case here. OpenAI faces massive infrastructure costs, is expanding ads inside ChatGPT, and is looking for a path to an eventual IPO. If free users are going to remain free, the company needs a way to convert them, and off-platform marketing is a familiar route. The logic is coherent.

But coherent does not mean acceptable. The limit is simple: a company cannot build a trust-dependent product and then rely on default tracking to monetize the people least able to pay. Opt-out tracking shifts the burden onto users and exploits inertia. If OpenAI wants marketing cookies, it should ask for opt-in consent up front. Anything less is a choice to prioritize conversion over credibility.

What to do with this

If you are an engineer, PM, or founder, treat default privacy settings as product strategy, not compliance trivia. If your product handles sensitive or high-intent user behavior, never turn on ad tracking by default for free users. Use opt-in consent, keep marketing data separate from core product data, and assume every shortcut will be read as a statement of values. The fastest way to lose trust is to monetize first and explain later.
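For teams that want to see what "opt-in by default" looks like in practice, here is a minimal sketch in Python. Every name in it (ConsentSettings, User, grant_marketing_consent, marketing_identifier) is hypothetical and not taken from OpenAI or any real system. It assumes only two ideas from the advice above: marketing flags start off for every plan, and the marketing path receives an identifier, never conversation data.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class ConsentSettings:
    # Hypothetical flags: every marketing-related setting starts off,
    # and only an explicit user action flips it on.
    marketing_cookies: bool = False
    ad_conversion_measurement: bool = False


@dataclass
class User:
    user_id: str
    plan: str  # e.g. "free" or "paid"; the consent default ignores the plan
    consent: ConsentSettings = field(default_factory=ConsentSettings)


def grant_marketing_consent(user: User) -> None:
    """Record an explicit opt-in, e.g. after the user accepts a consent prompt."""
    user.consent.marketing_cookies = True


def marketing_identifier(user: User, cookie_id: str) -> Optional[str]:
    """Return an identifier for the marketing pipeline only if the user opted in.

    This function only ever receives a cookie ID, never conversation data,
    which keeps marketing inputs separate from core product data.
    """
    if not user.consent.marketing_cookies:
        return None
    return cookie_id
```

The design choice is that the default lives in the data model itself, so a new free account starts untracked and only a deliberate user action changes that, rather than relying on the user to find an opt-out later.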
