Why Pinecone’s compiled vector artifacts are the right move for AI ag…

Pinecone is right: AI agents need precompiled knowledge artifacts, not raw vector hunting.

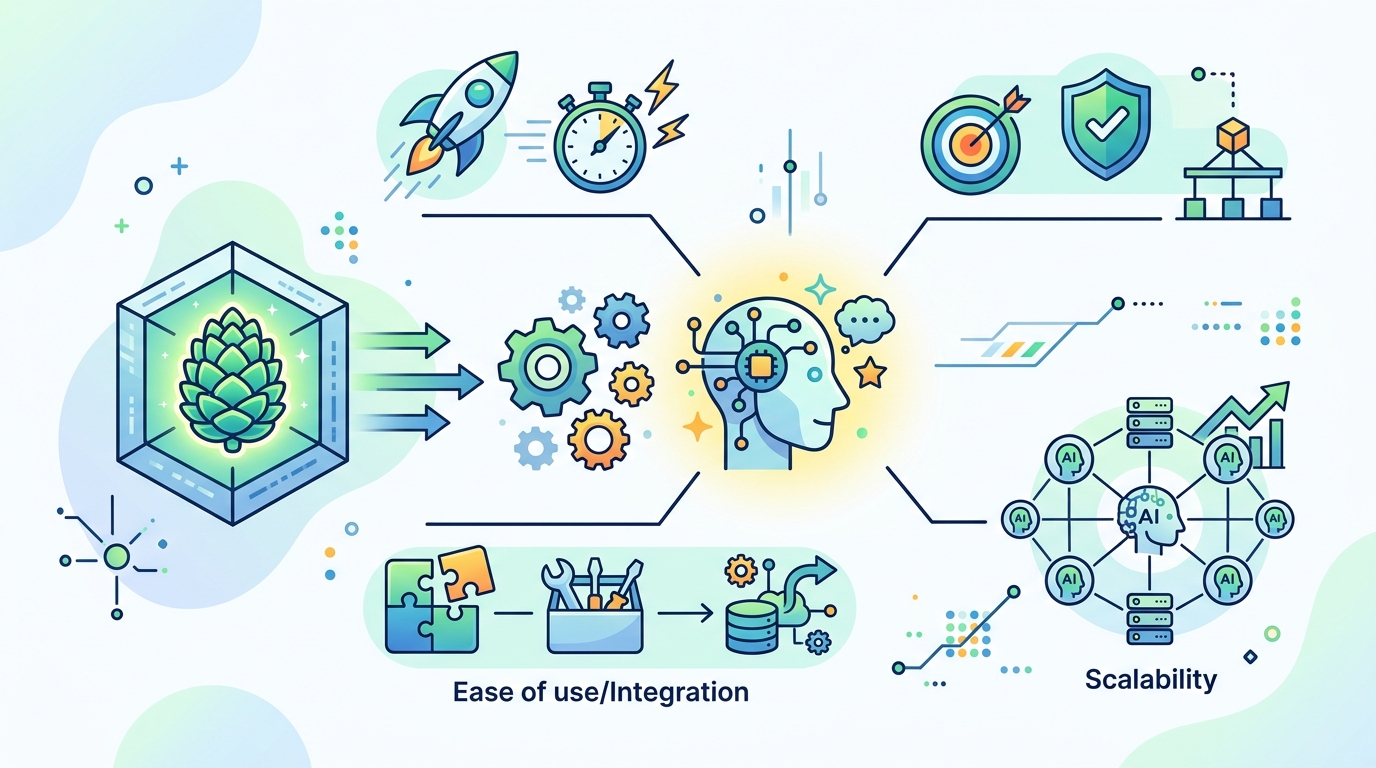

Pinecone’s Nexus is not a gimmick. It is a direct response to the mess most agent systems are already hitting: raw retrieval is too slow, too expensive, and too inconsistent for production workloads. When a system is forced to sift through vectors on every call, token bills climb, latency becomes unpredictable, and “agentic” workflows collapse into brute-force search. Pinecone’s bet is that the next layer in AI infrastructure is not another model trick, but a knowledge layer that compiles context ahead of time.

Precompiled context beats repeated retrieval

The strongest argument for Nexus is simple economics. Pinecone says task completion rates are stuck at 50 to 60 percent when agents operate on raw vector data, and that its compiled artifacts can cut token usage by up to 90 percent. If those figures hold up outside Pinecone’s own testing, that is not a small optimization. It is the difference between an agent system that looks clever in a demo and one that survives real usage across thousands of requests.
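To make the economics concrete, here is a back-of-the-envelope sketch in Python. The request volume, tokens per request, and per-token price are all made-up assumptions, not Pinecone figures; only the 90 percent reduction comes from Pinecone’s claim, and it is an upper bound.

```python
def token_cost_usd(requests: int, tokens_per_request: int,
                   usd_per_million_tokens: float) -> float:
    """Total spend for a batch of agent calls at a flat per-token price."""
    return requests * tokens_per_request * usd_per_million_tokens / 1_000_000


# Hypothetical workload: none of these three numbers come from Pinecone.
REQUESTS = 100_000
RAW_TOKENS_PER_REQUEST = 8_000
USD_PER_MILLION_TOKENS = 3.0

# "Up to 90 percent" is the vendor's best case, so this is an optimistic bound.
REDUCTION = 0.90
compiled_tokens_per_request = round(RAW_TOKENS_PER_REQUEST * (1 - REDUCTION))

raw_cost = token_cost_usd(REQUESTS, RAW_TOKENS_PER_REQUEST, USD_PER_MILLION_TOKENS)
compiled_cost = token_cost_usd(REQUESTS, compiled_tokens_per_request,
                               USD_PER_MILLION_TOKENS)

print(f"raw: ${raw_cost:,.2f}  compiled: ${compiled_cost:,.2f}")
```

Swap in your own volumes and prices. The point is that the gap scales linearly with request count, which is why it matters more in production than in a demo.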

There is a reason compilers exist in software engineering: work done once is cheaper than work repeated endlessly at runtime. Pinecone is applying that same logic to knowledge retrieval. Instead of making every inference call rediscover the same contextual relationships, the Context Compiler packages task-specific artifacts with provenance, RBAC scoping, versioning, and PII tags. That is the right shape for enterprise AI, where correctness and auditability matter as much as speed.
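What might such an artifact look like? The following is a minimal sketch, not Pinecone’s actual schema or API. `CompiledArtifact`, `ArtifactStore`, and `compile_sales_brief` are hypothetical names, and the fields simply mirror the four properties listed above (provenance, RBAC scoping, versioning, PII tags).

```python
from dataclasses import dataclass
from typing import Callable, Dict, FrozenSet, Tuple


@dataclass(frozen=True)
class CompiledArtifact:
    task: str
    version: int
    content: str
    provenance: Tuple[str, ...]        # identifiers of the sources used
    allowed_roles: FrozenSet[str]      # RBAC scope
    pii_tags: FrozenSet[str] = frozenset()


class ArtifactStore:
    """Compiles each (task, version) once and serves it to later agent runs."""

    def __init__(self, compile_fn: Callable[[str, int], CompiledArtifact]) -> None:
        self._compile_fn = compile_fn
        self._cache: Dict[Tuple[str, int], CompiledArtifact] = {}
        self.compile_calls = 0

    def get(self, task: str, version: int, role: str) -> CompiledArtifact:
        key = (task, version)
        if key not in self._cache:
            # The expensive work happens here, once per (task, version).
            self.compile_calls += 1
            self._cache[key] = self._compile_fn(task, version)
        artifact = self._cache[key]
        if role not in artifact.allowed_roles:
            raise PermissionError(f"role {role!r} may not read {task!r}")
        return artifact


def compile_sales_brief(task: str, version: int) -> CompiledArtifact:
    # Stand-in for the real compile step (retrieval, summarization, linking).
    return CompiledArtifact(
        task=task,
        version=version,
        content="Account summary and open objections.",
        provenance=("call-transcript-001", "chat-thread-042"),
        allowed_roles=frozenset({"sales"}),
        pii_tags=frozenset({"contact_name"}),
    )


store = ArtifactStore(compile_sales_brief)
first = store.get("sales-brief", 1, role="sales")
second = store.get("sales-brief", 1, role="sales")  # served from cache
```

Keying the cache on the version is the design choice that matters here: bumping the version forces a recompile, which is one simple way to keep artifacts from going stale.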

Agents need specialized knowledge, not generic vector soup

Pinecone is also right to reject the idea that one retrieval layer should serve every agent equally. A sales agent does not need the same context as a finance agent, and a CEO agent does not need the same signal mix as a marketing agent. The examples Pinecone gives are persuasive because they mirror how real teams work: Gong transcripts and Slack threads for sales, billing schedules and usage thresholds for finance, campaign touches and product-qualified signals for marketing.

That specialization matters because agent failures usually come from missing structure, not missing data. A generic vector search might surface related documents, but it does not enforce the decision frame the agent needs. Pinecone’s KnowQL primitives, especially intent, provenance, confidence, and budget, are a better contract between agent and retrieval system than “search and hope.” If agents are going to act on behalf of humans, the retrieval layer must be opinionated about what counts as relevant and what level of certainty is acceptable.
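To illustrate what a contract built on intent, provenance, confidence, and budget could enforce, here is a hedged Python sketch. It is not KnowQL syntax, which this article does not show. `ContextRequest`, `Candidate`, and `select_context` are hypothetical names, and the sample data is invented.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Candidate:
    text: str
    source: str        # provenance; empty string means unknown origin
    confidence: float  # 0.0 to 1.0, however the retrieval layer scores it
    tokens: int
    intent: str


@dataclass(frozen=True)
class ContextRequest:
    intent: str
    min_confidence: float
    token_budget: int
    require_provenance: bool = True


def select_context(request: ContextRequest,
                   candidates: List[Candidate]) -> List[Candidate]:
    """Keep the most confident candidates that satisfy the request's contract."""
    eligible = [
        c for c in candidates
        if c.intent == request.intent
        and c.confidence >= request.min_confidence
        and (c.source or not request.require_provenance)
    ]
    chosen: List[Candidate] = []
    remaining = request.token_budget
    # Greedy fill: highest confidence first, skipping anything over budget.
    for c in sorted(eligible, key=lambda c: c.confidence, reverse=True):
        if c.tokens <= remaining:
            chosen.append(c)
            remaining -= c.tokens
    return chosen


request = ContextRequest(intent="renewal-risk", min_confidence=0.7, token_budget=500)
candidates = [
    Candidate("Usage fell last quarter.", "billing-export", 0.92, 300, "renewal-risk"),
    Candidate("Champion left the company.", "", 0.95, 100, "renewal-risk"),
    Candidate("Renewal is due soon.", "crm", 0.80, 250, "renewal-risk"),
    Candidate("Open support ticket.", "helpdesk", 0.75, 150, "renewal-risk"),
    Candidate("Old pricing page.", "wiki", 0.40, 50, "renewal-risk"),
]
selected = select_context(request, candidates)
print([c.source for c in selected])
```

The highest-confidence candidate is rejected because it has no provenance. That is the opinionated behavior "search and hope" lacks.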

The counter-argument

The best objection is that Pinecone is building a proprietary abstraction on top of a problem that foundation models and better retrieval methods will eventually solve natively. Critics will say precompiled artifacts risk becoming stale, that task-specific pipelines add operational overhead, and that the market does not need a new knowledge compiler when RAG, better embeddings, and larger context windows are all improving. They are right about one thing: if the compilation layer is not maintained, it becomes a liability.

But that does not weaken Pinecone’s thesis. It strengthens it. Enterprise AI is not a lab exercise, and the bottleneck is not just model intelligence. It is governance, cost control, and repeatable execution over proprietary data. Pinecone’s own pitch addresses the real failure mode: agents wasting tokens and time by rediscovering the same context under inconsistent conditions. A compiled artifact layer is not a workaround for bad retrieval. It is the retrieval architecture production systems need when the same knowledge must be reused safely across many agent runs.

What to do with this

If you are an engineer or PM building agent workflows, stop treating retrieval purely as a live-query problem. Separate discovery from execution. Precompute the context your agents repeatedly need, attach provenance and access controls, and measure success on latency, token spend, and task completion, not just recall. If your use case is enterprise-facing, Pinecone’s direction is the right one: compile knowledge once, then let agents consume a structured artifact instead of paying to reconstruct context every time.
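A small Python sketch of that measurement, under stated assumptions: `AgentRun` and `summarize` are hypothetical names, the sample runs are invented, and "tokens per completed task" is one reasonable way to combine spend and completion, not a metric Pinecone defines.

```python
from dataclasses import dataclass
from statistics import median
from typing import Dict, List


@dataclass(frozen=True)
class AgentRun:
    latency_ms: float
    tokens: int
    completed: bool


def summarize(runs: List[AgentRun]) -> Dict[str, float]:
    """Report latency, token spend, and task completion for a set of runs."""
    if not runs:
        raise ValueError("need at least one run")
    completed = sum(1 for r in runs if r.completed)
    total_tokens = sum(r.tokens for r in runs)
    return {
        "completion_rate": completed / len(runs),
        "median_latency_ms": median(r.latency_ms for r in runs),
        "tokens_per_run": total_tokens / len(runs),
        # Failed runs still cost tokens, so charge them to the successes.
        "tokens_per_completed_task": (
            total_tokens / completed if completed else float("inf")
        ),
    }


# Invented sample data for illustration only.
runs = [
    AgentRun(latency_ms=120.0, tokens=1000, completed=True),
    AgentRun(latency_ms=300.0, tokens=3000, completed=False),
    AgentRun(latency_ms=180.0, tokens=2000, completed=True),
    AgentRun(latency_ms=240.0, tokens=2000, completed=True),
]
report = summarize(runs)
print(report)
```

Run the same summary over a raw-retrieval baseline and a precompiled variant of the same workflow. Comparing the two reports tells you whether compilation pays for itself in your setting.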
