Why RAG is ending for agentic AI

RAG is the wrong layer for agentic AI, and compilation-stage knowledge systems will replace it.

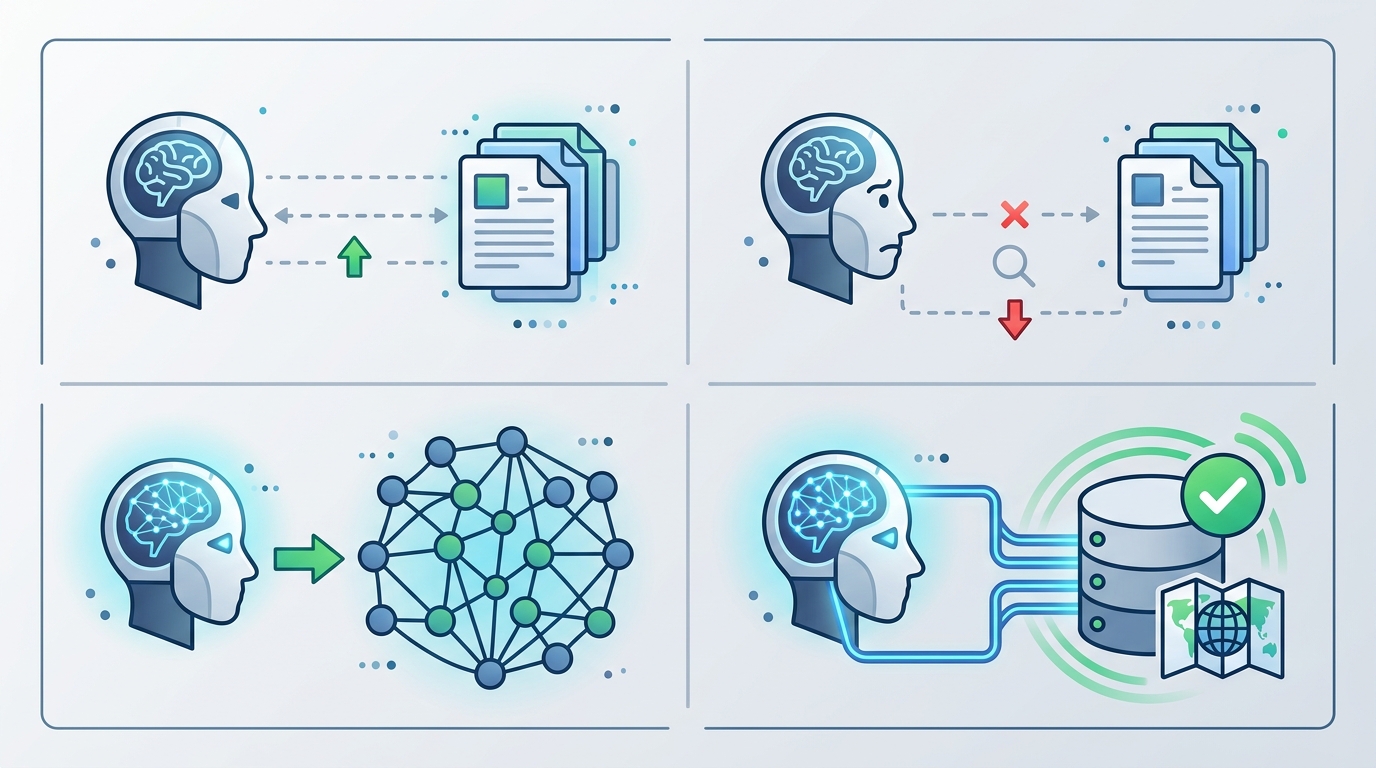

RAG is ending as the default architecture for agentic AI. The replacement is not a better vector search stack but a compilation-stage knowledge layer that turns raw enterprise data into durable task artifacts before an agent ever asks a question. That is the real signal in Pinecone’s Nexus announcement: the company is not polishing retrieval; it is redefining the unit of knowledge from chunks to compiled context, with KnowQL giving agents a way to ask for output shape, confidence, and latency instead of just “find relevant text.” The market is already moving in that direction. VentureBeat’s Q1 2026 Pulse survey shows standalone vector databases losing adoption share while hybrid retrieval intent has tripled to 33.3 percent, and Pinecone’s own benchmark claims a financial analysis task dropped from 2.8 million tokens to 4,000. Whether that exact number holds in production is secondary. The architectural verdict is clear: agents need compiled knowledge, not raw retrieval.

First argument: agents need stable artifacts, not fresh search every turn

Human-facing chatbots can tolerate live retrieval because the user is the compiler. The user reads, compares, and decides what matters. Agents do not work that way. They need a stable internal representation they can reuse across steps, because a multi-step task breaks when the model has to re-derive the same context on every turn. Pinecone’s description of a context compiler is important here: it does not just retrieve passages; it creates persistent, task-specific knowledge artifacts before the agent starts acting. That shifts the system from “search and answer” to “prepare and execute,” which is the right order for autonomous work.

The token economics make the case even more sharply. Pinecone says one financial analysis task went from 2.8 million tokens to 4,000 tokens under Nexus, a reduction of more than 99 percent on those figures. Even if the benchmark is vendor-run and not yet production-validated, the direction matters more than the exact ratio. Agentic systems fail when they drag huge context windows through every step, because latency, cost, and error accumulation rise together. A compilation layer attacks all three at once by front-loading the expensive reasoning into a reusable artifact. That is not an incremental improvement to RAG. It is a different operating model.
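To make the arithmetic concrete, here is a rough Python sketch. The first function only computes the ratio between the two figures Pinecone quotes. The other two are a toy cost model, and every number fed to them (step count, tokens per step, compile cost) is a made-up assumption for illustration, not a measurement from Nexus or any other system.

```python
def reduction_percent(before: int, after: int) -> float:
    """Percentage reduction when going from `before` tokens to `after` tokens."""
    return (before - after) / before * 100


def retrieve_every_step_total(steps: int, retrieved_tokens_per_step: int) -> int:
    """Toy model: the agent pulls fresh context into the prompt on every step."""
    return steps * retrieved_tokens_per_step


def compile_once_total(steps: int, compile_tokens: int, artifact_tokens_per_step: int) -> int:
    """Toy model: pay a one-time compile cost, then reuse a small artifact per step."""
    return compile_tokens + steps * artifact_tokens_per_step


if __name__ == "__main__":
    # Ratio between the two figures quoted in the benchmark claim.
    print(f"{reduction_percent(2_800_000, 4_000):.2f}% fewer tokens")

    # Hypothetical 10-step task; all three inputs are illustrative assumptions.
    print(retrieve_every_step_total(steps=10, retrieved_tokens_per_step=50_000))
    print(compile_once_total(steps=10, compile_tokens=200_000, artifact_tokens_per_step=4_000))
```

In this toy model the compiled approach only wins once the task has enough steps to amortize the up-front compile cost, which fits the argument that compilation suits repeatable multi-step work rather than one-off questions.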

Second argument: retrieval without structure is too weak for enterprise work

Enterprise data is not a pile of documents waiting to be ranked. It is contracts, policies, tables, tickets, reports, and logs, each with conflicting versions, missing fields, and different trust levels. Traditional RAG treats this mess as text to be embedded and ranked. That works for broad recall, but it fails when the agent needs deterministic answers with provenance. Pinecone’s Nexus adds field-level citations and deterministic conflict resolution, which is the right response to enterprise reality. If an agent is drafting a compliance memo or reconciling financial inputs, “top-k similar chunks” is not enough. It needs structured knowledge with traceable lineage.
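As a minimal sketch of what field-level citations and deterministic conflict resolution could look like, consider the Python below. It is not Pinecone’s implementation. The `FieldValue` type, the integer `trust` scale, and the ranking rule (highest trust, then most recent, then source ID as a stable tie-break) are all assumptions chosen for illustration.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class FieldValue:
    """One extracted value for one field, carrying its own citation (hypothetical schema)."""
    field: str
    value: str
    source_id: str  # document the value was extracted from
    trust: int      # higher means more authoritative (assumed scale)
    as_of: date     # date the source asserts the value


def _rank(v: FieldValue) -> tuple:
    # Total order over candidates: trust first, then recency, then source ID.
    return (v.trust, v.as_of, v.source_id)


def resolve(candidates: list[FieldValue]) -> dict[str, FieldValue]:
    """Pick exactly one winner per field, independent of input order."""
    resolved: dict[str, FieldValue] = {}
    for candidate in candidates:
        best = resolved.get(candidate.field)
        if best is None or _rank(candidate) > _rank(best):
            resolved[candidate.field] = candidate
    return resolved


if __name__ == "__main__":
    values = [
        FieldValue("payment_terms", "net 30", "contract_v1.pdf", 2, date(2024, 1, 5)),
        FieldValue("payment_terms", "net 45", "contract_v2.pdf", 2, date(2025, 3, 1)),
        FieldValue("payment_terms", "net 60", "email_thread", 1, date(2025, 6, 1)),
    ]
    winner = resolve(values)["payment_terms"]
    print(winner.value, "cited from", winner.source_id)
```

The point of the design is that the same inputs always yield the same winner, and the winner keeps a pointer to its source, which top-k similarity ranking alone does not guarantee.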

The broader market is already voting against pure vector search. VentureBeat’s survey finding that every standalone vector database is losing share while hybrid retrieval intent has tripled to 33.3 percent is not a minor preference shift. It shows buyers want retrieval plus structure, not retrieval alone. That is exactly why the next layer should sit above raw indexing and compile data into task-ready forms. In practice, that means the system should precompute summaries, schema-aware facts, contradictions, and citations, then expose them through a declarative interface. A vector store can still be part of the stack, but it stops being the product story. The product story becomes knowledge compilation.

The counter-argument

The strongest defense of RAG is that it is simple, flexible, and already ubiquitous. Teams know how to chunk documents, embed them, retrieve top results, and feed them to an LLM. That pipeline is cheap to prototype and easy to explain, which matters in a market where most agent projects still live in experimentation. A compilation layer sounds heavier. It introduces preprocessing, task-specific artifacts, and new query semantics. For many teams, especially those with modest scale or low-stakes use cases, RAG remains the fastest path from idea to demo.

There is also a legitimate concern about overfitting the knowledge layer to specific tasks. If you compile too aggressively, you risk baking in assumptions that do not generalize. Retrieval is forgiving because it keeps the source material close at hand. Compiled artifacts can drift, and if the upstream data changes quickly, the system needs refresh logic and governance. In other words, RAG is messier but more elastic.

That critique is real, but it does not save RAG as the dominant architecture for agentic AI. It only defines where RAG still belongs: early-stage prototypes, open-ended exploration, and low-risk assistants. Once an agent must execute repeatable work across steps, the cost of re-retrieval and context sprawl overwhelms the simplicity advantage. Compilation is not overengineering when the task demands determinism, provenance, and reuse. It is the minimum viable architecture for agents that do more than answer questions.

What to do with this

If you are building agents, stop treating retrieval as the core product and start designing the knowledge layer as a compiler for action. Engineers should separate source ingestion, artifact generation, and agent query surfaces. PMs should measure task completion, token burn, contradiction handling, and citation quality, not just retrieval accuracy. Founders should position around durable knowledge objects and declarative agent queries, because that is where the market is heading. Keep vector search in the stack if it helps, but do not confuse the index with the intelligence layer. The next winners will compile enterprise context into something agents can actually use.
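The separation of source ingestion, artifact generation, and agent query surface can be sketched in a few lines of Python. Everything here is hypothetical: the type names, the toy `key: value` extractor, the placeholder confidence of 0.9, and the `Query` shape are illustrative assumptions. None of it is KnowQL or any vendor API.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class SourceRecord:
    source_id: str
    text: str


@dataclass(frozen=True)
class Fact:
    key: str
    value: str
    source_id: str
    confidence: float


@dataclass
class Artifact:
    task: str
    facts: dict[str, Fact] = field(default_factory=dict)


@dataclass(frozen=True)
class Query:
    """Declarative request: which fields the agent wants and a confidence floor."""
    fields: tuple[str, ...]
    min_confidence: float = 0.0


def ingest(raw: dict[str, str]) -> list[SourceRecord]:
    """Stage 1: normalize raw documents into records, in a stable order."""
    return [SourceRecord(sid, text.strip()) for sid, text in sorted(raw.items())]


def compile_artifact(task: str, records: list[SourceRecord]) -> Artifact:
    """Stage 2: build the task artifact once. Toy extractor: lines shaped 'key: value'."""
    artifact = Artifact(task)
    for record in records:
        for line in record.text.splitlines():
            key, sep, value = line.partition(":")
            if sep:
                # 0.9 is a placeholder; a real system would estimate confidence.
                # Later records overwrite earlier ones for the same key.
                artifact.facts[key.strip()] = Fact(key.strip(), value.strip(), record.source_id, 0.9)
    return artifact


def answer(artifact: Artifact, query: Query) -> dict[str, dict[str, str]]:
    """Stage 3: serve the agent from the artifact without touching raw sources."""
    result: dict[str, dict[str, str]] = {}
    for name in query.fields:
        fact = artifact.facts.get(name)
        if fact is not None and fact.confidence >= query.min_confidence:
            result[name] = {"value": fact.value, "source": fact.source_id}
    return result


if __name__ == "__main__":
    records = ingest({"q3_report.txt": "revenue: 12.4M\nheadcount: 310"})
    artifact = compile_artifact("quarterly_summary", records)
    print(answer(artifact, Query(fields=("revenue",))))
```

Because the agent only ever calls `answer`, the extraction logic in stage 2 can be swapped or refreshed without changing the query surface, which is the practical benefit of keeping the three stages apart.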
