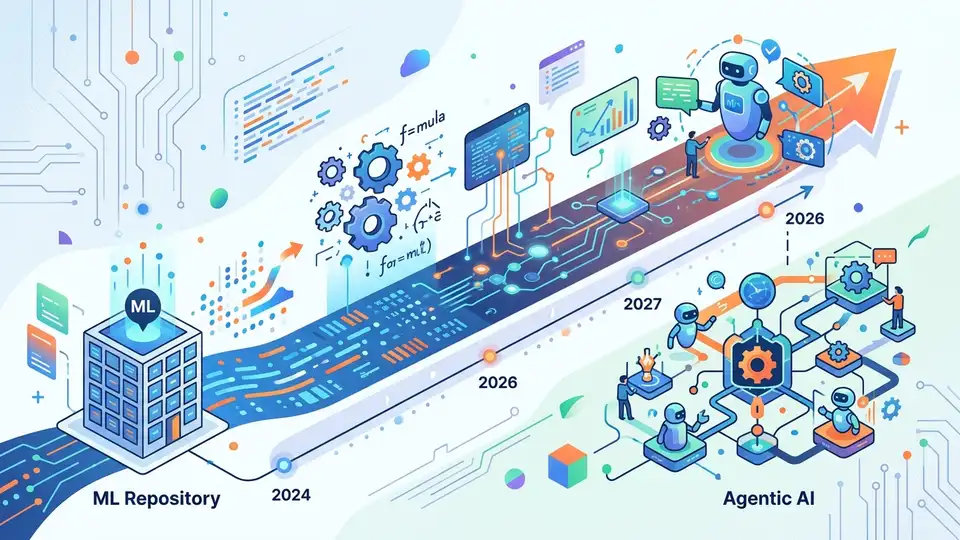

2026 AI roadmap repo maps ML to agentic AI

A tiny GitHub repo with 1 star lays out a 2026 path from ML basics to agentic AI, with tools, projects, and roles.

A GitHub repository with 1 star and 2 forks is trying to do something ambitious: map the road from math fundamentals to agentic AI in one place. The repo is called 2026-ROADMAP-FOR-ADVANCE-ML-AI-GENERATIVE-AI-GENERATIVE-AI-AGENTIC-AI, and its pitch is simple: if you want a job in ML, GenAI, or agent workflows in 2026, here is the order to learn things.

That kind of repository is easy to dismiss because the internet is full of “learn AI in 30 days” content. But this one is more useful than the average checklist because it spans the full stack: math, Python, deployment, large language models, and the newer wave of autonomous systems built around tools, memory, and planning.

What this roadmap actually covers

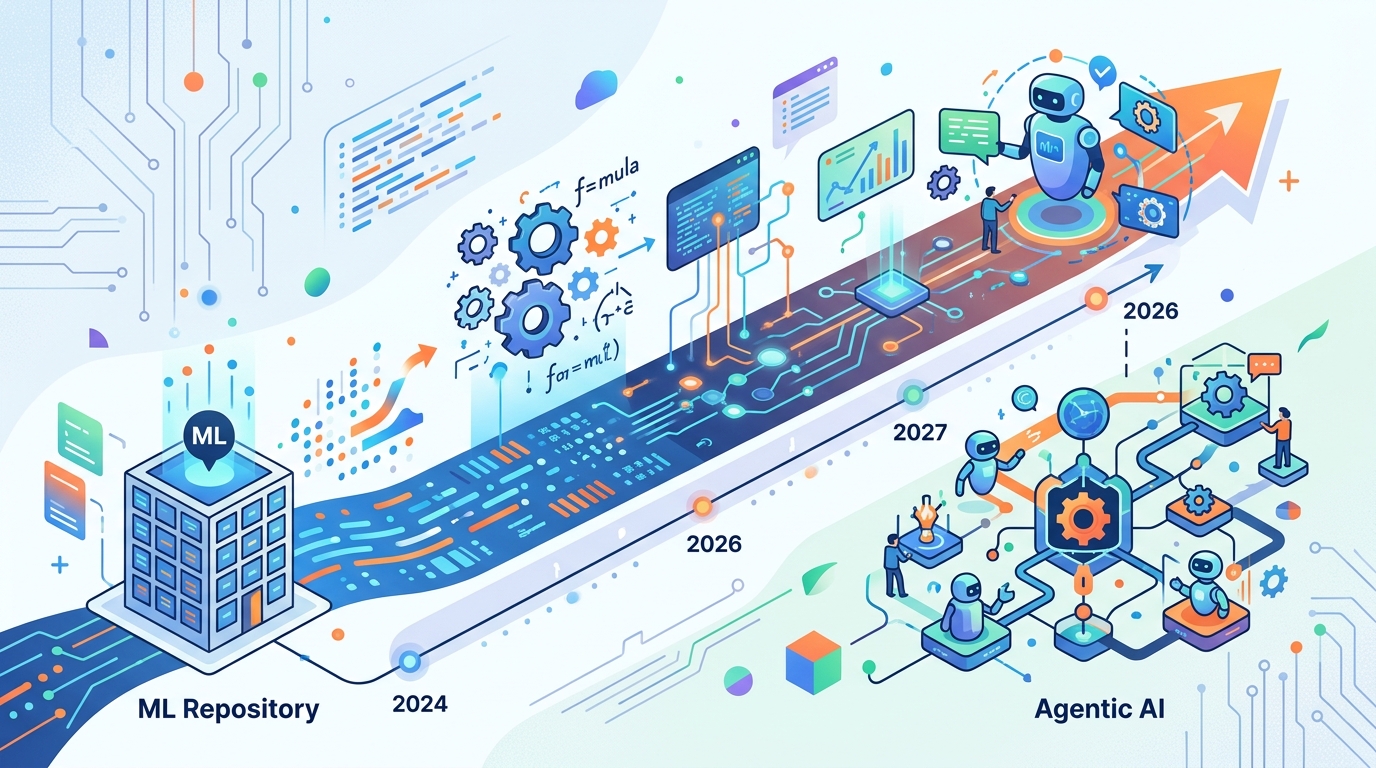

The README breaks the year into phases, starting with foundations in January through March and ending with agentic AI work from October through December. That structure matters. A lot of people jump straight to prompt engineering or building chatbots, then hit a wall when they need to debug a model pipeline or explain why a classifier is drifting in production.
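One common way to put a number on “why a classifier is drifting” is the population stability index (PSI), which compares the distribution of a feature or model score at training time with what the model sees in production. The roadmap does not prescribe a drift metric, so treat this as a minimal sketch under stated assumptions: the bin edges and score samples are made up, and the 0.2 alert threshold is a widely used rule of thumb, not a standard.

```python
import bisect
import math
from typing import Sequence


def bin_fractions(values: Sequence[float], edges: Sequence[float]) -> list[float]:
    """Share of values in each bin [edges[i], edges[i+1]); out-of-range values are clamped."""
    counts = [0] * (len(edges) - 1)
    for v in values:
        idx = bisect.bisect_right(edges, v) - 1   # bin whose left edge is <= v
        idx = min(max(idx, 0), len(counts) - 1)   # clamp below the first / above the last edge
        counts[idx] += 1
    return [c / len(values) for c in counts]


def psi(expected: Sequence[float], actual: Sequence[float],
        edges: Sequence[float], eps: float = 1e-6) -> float:
    """Population stability index between a reference sample and a new sample."""
    e_frac = bin_fractions(expected, edges)
    a_frac = bin_fractions(actual, edges)
    total = 0.0
    for e, a in zip(e_frac, a_frac):
        e, a = max(e, eps), max(a, eps)           # floor empty bins so log() never sees 0
        total += (a - e) * math.log(a / e)
    return total


edges = [0.0, 0.25, 0.5, 0.75, 1.0]
train_scores = [0.1, 0.2, 0.3, 0.4, 0.6, 0.7, 0.8, 0.9]     # hypothetical training-time scores
prod_scores = [0.6, 0.65, 0.7, 0.75, 0.8, 0.85, 0.9, 0.95]  # hypothetical production scores
drift = psi(train_scores, prod_scores, edges)
print(f"PSI = {drift:.3f}", "-> investigate" if drift > 0.2 else "-> looks stable")
```

Each PSI term (a - e) * ln(a / e) is non-negative, so identical distributions score 0 and the total grows as production data moves away from the training data. The `eps` floor avoids log(0) when a bin is empty, but it also means a bin that empties out completely contributes a large term, which is usually what you want an alert to catch.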

The roadmap begins where most serious AI work begins: linear algebra, probability, optimization, Python, SQL, Git, Docker, and basic system design. Then it moves into supervised and unsupervised learning, time series, and deployment. After that, it shifts into deep learning, generative AI, and finally agent frameworks like LangGraph, AutoGen, CrewAI, and smolagents.

- Foundations: linear algebra, probability, optimization, Python, SQL, Git, Docker

- ML: regression, classification, clustering, dimensionality reduction, time series

- Deep learning: CNNs, RNNs, attention, transformers, PyTorch, TensorFlow

- GenAI: LLMs, diffusion models, fine-tuning, RLHF, prompt patterns

- Agentic AI: tool use, memory, planning, human-in-the-loop workflows

That progression is sensible because it mirrors how real teams hire. A hiring manager for an ML engineer role usually cares about data prep, evaluation, and deployment before they care about whether you can name every new model family. A GenAI engineer, meanwhile, needs to understand retrieval, inference cost, latency, and how to connect a model to external tools.
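Inference cost is mostly arithmetic on token counts, and retrieval feeds directly into it because every retrieved passage adds input tokens to each request. Here is a minimal sketch, assuming separate per-million-token prices for input and output; the prices and traffic figures are hypothetical placeholders, not any provider’s actual rates.

```python
def monthly_cost_usd(requests_per_day: int, input_tokens: int, output_tokens: int,
                     input_price_per_m: float, output_price_per_m: float,
                     days: int = 30) -> float:
    """Estimate monthly spend from average tokens per request and per-million-token prices."""
    per_request = (input_tokens * input_price_per_m
                   + output_tokens * output_price_per_m) / 1_000_000
    return per_request * requests_per_day * days


# Hypothetical numbers: 10k requests/day, 1,500 input tokens (prompt + retrieved context),
# 300 output tokens, placeholder prices in USD per million tokens.
cost = monthly_cost_usd(requests_per_day=10_000, input_tokens=1_500, output_tokens=300,
                        input_price_per_m=0.50, output_price_per_m=1.50)
print(f"~${cost:,.2f} per month")
```

With these placeholder numbers the estimate is about $360 a month; doubling the retrieved context to 3,000 input tokens pushes it to about $585, which is the kind of retrieval-versus-cost tradeoff the role is expected to reason about.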

The repo also calls out a practical toolchain: MLflow, DVC, Hugging Face Transformers, Hugging Face Hub, FastAPI, Streamlit, and cloud deployment on AWS, GCP, or Azure. That mix tells you the author is thinking about production work, not just notebooks.

Why the 2026 timing matters

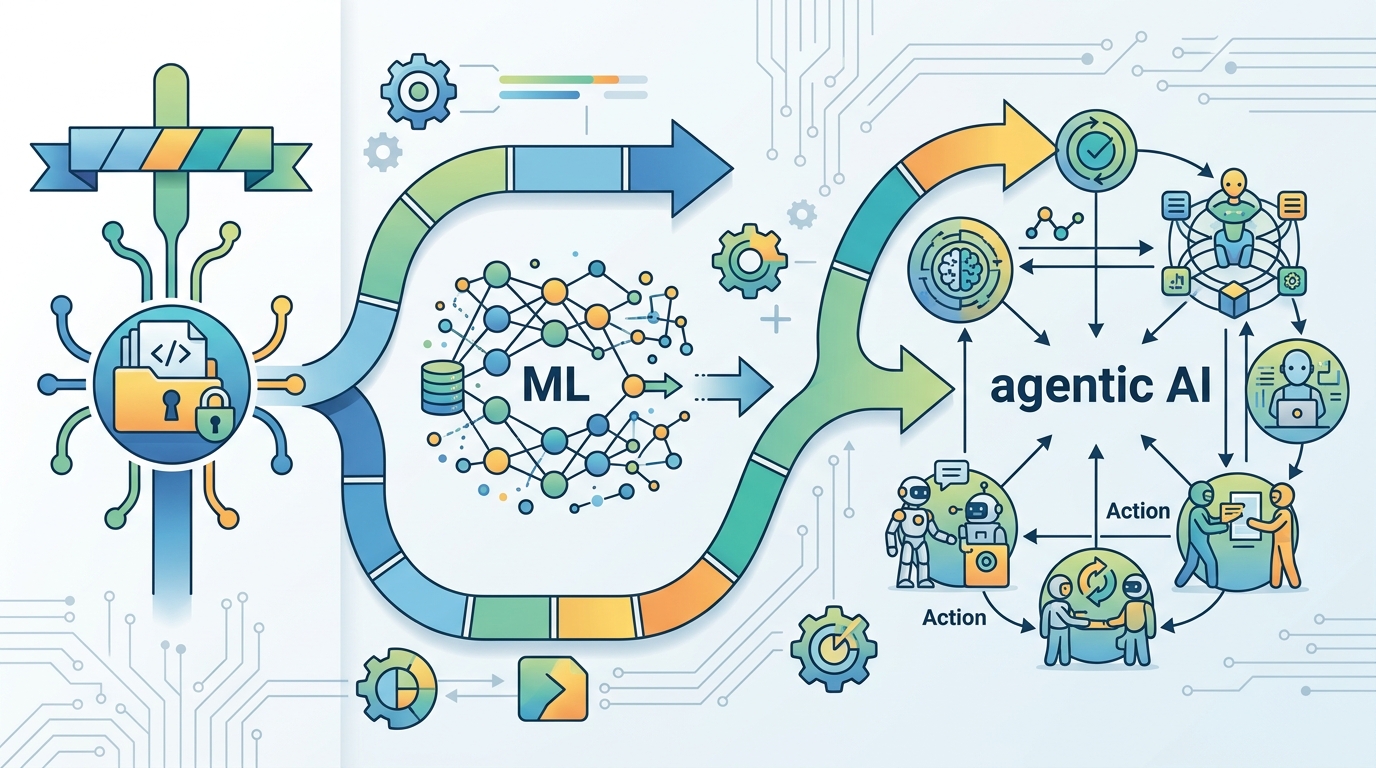

The title says 2026, but the roadmap is really about the shift already happening now: the move from single-model demos to systems that can plan, call tools, inspect outputs, and keep state across steps. That is the difference between a chatbot that answers a question and an assistant that can schedule tasks, summarize a repo, or draft code changes across a workflow.
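To make “plan, call tools, inspect outputs, and keep state” concrete, here is a minimal, framework-free sketch of an agent loop. The planner is a hard-coded stand-in for an LLM call, and the tools (`search_repo`, `summarize`) and their toy data are hypothetical. Frameworks such as LangGraph, AutoGen, CrewAI, or smolagents wrap this pattern in their own APIs, which this sketch does not attempt to reproduce.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


# Hypothetical tools: plain functions the agent is allowed to call.
def search_repo(query: str) -> str:
    files = {"README.md": "2026 roadmap: foundations, ML, DL, GenAI, agents"}
    hits = [name for name, text in files.items() if query.lower() in text.lower()]
    return ", ".join(hits) or "no matches"


def summarize(text: str) -> str:
    return text[:40] + ("..." if len(text) > 40 else "")


TOOLS: dict[str, Callable[[str], str]] = {"search_repo": search_repo, "summarize": summarize}


@dataclass
class AgentState:
    goal: str
    history: list[tuple[str, str, str]] = field(default_factory=list)  # (tool, input, output)


def plan_next_step(state: AgentState) -> Optional[tuple[str, str]]:
    """Stand-in for an LLM planner: reads prior outputs from state, returns the next call or None."""
    if not state.history:
        return ("search_repo", "agents")
    if len(state.history) == 1:
        return ("summarize", state.history[-1][2])  # act on the previous tool's output
    return None  # treat the goal as met


def run_agent(goal: str, max_steps: int = 5) -> AgentState:
    state = AgentState(goal=goal)
    for _ in range(max_steps):  # hard step limit guards against runaway loops
        step = plan_next_step(state)
        if step is None:
            break
        tool_name, tool_input = step
        if tool_name not in TOOLS:  # check the plan before acting on it
            state.history.append((tool_name, tool_input, "error: unknown tool"))
            continue
        output = TOOLS[tool_name](tool_input)
        state.history.append((tool_name, tool_input, output))  # state persists across steps
    return state


if __name__ == "__main__":
    final = run_agent("Find where the roadmap mentions agents")
    for tool, arg, out in final.history:
        print(f"{tool}({arg!r}) -> {out!r}")
```

The step limit and the unknown-tool check are the parts quick demos tend to skip; they keep a loop like this from running indefinitely or calling something it was never given.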

OpenAI has made that direction clear in its public messaging around agents. In a post on its official site, the company wrote:

“We believe that there is a lot of value in AI systems that can take actions on behalf of users.”

That sentence captures the whole point of the roadmap’s final section: agentic AI is about action, not just text generation.

The roadmap’s emphasis on OpenAI, Google Gemini, and NVIDIA’s NIM microservices also signals a market reality. The stack is getting more modular. Teams are mixing hosted APIs, open-weight models, retrieval layers, and workflow engines instead of betting on one monolith.

That matters for learners because the skills that age well are the ones that survive across vendors. Python, evaluation, data handling, deployment, and systems thinking matter whether you use GPT-style APIs, Llama-based models, or a smaller model running behind an internal gateway.

- OpenAI: API-based model access and a stated push toward agents

- Google Gemini: multimodal model family with API access

- Hugging Face: model hosting, datasets, and deployment tools

- NVIDIA NIM: packaged inference services for enterprise use

- LangGraph: workflow-based agent orchestration

How it compares with real-world AI work

The strongest part of the repo is that it does not stop at model theory. It includes projects like churn prediction, loan default modeling, image classification, neural machine translation, text-to-image generation, and code review agents. That project mix is close to what junior and mid-level AI teams actually build.

Compare that with what employers ask for in practice. A data scientist may spend a week cleaning messy tabular data and another week explaining metrics to stakeholders. An ML engineer may spend as much time on CI/CD and feature pipelines as on model choice. A GenAI engineer may spend hours tuning retrieval quality, testing prompt templates, or reducing latency on an inference endpoint.

The repo’s checklist also includes Weights & Biases, Docker, Kubernetes, Snowflake, Databricks, and vector databases like FAISS and Pinecone. That is a decent signal of what production AI work looks like in 2026: model training, experiment tracking, deployment, retrieval, and monitoring all matter at the same time.
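The core operation behind vector databases like FAISS and Pinecone is nearest-neighbor search over embedding vectors. The sketch below does exact cosine-similarity search in pure Python to show the idea; the three-dimensional “embeddings” are made-up toy values standing in for a real embedding model, and production systems replace the linear scan with an index (often an approximate one) to stay fast at scale.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_k(query: list[float], corpus: dict[str, list[float]], k: int = 2) -> list[tuple[str, float]]:
    """Exact search: score every document against the query and keep the k best."""
    scored = [(doc_id, cosine(query, vec)) for doc_id, vec in corpus.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]


# Toy embeddings (hypothetical values, not produced by a real model).
corpus = {
    "mlops_notes": [0.9, 0.1, 0.0],
    "agent_design": [0.1, 0.9, 0.2],
    "diffusion_intro": [0.0, 0.2, 0.9],
}
query = [0.2, 0.8, 0.1]
results = top_k(query, corpus, k=2)
print(results)
```

This linear scan costs one similarity computation per stored vector per query, which is fine for a few thousand documents and is exactly the cost that dedicated indexes are built to avoid.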

Here is the comparison that matters most:

- Notebook-only learning: fast to start, weak on deployment and debugging

- Project-based learning: slower, but closer to real hiring expectations

- Roadmap-based learning: useful if it pushes you into shipping artifacts, not collecting bookmarks

- Agentic AI work: demands API integration, state, error handling, and evaluation, not just prompts

There is also a subtle hiring signal in the roles listed by the repo: ML Engineer, AI Engineer, GenAI Engineer, Agentic AI Engineer, LLM Fine-Tuning Specialist, MLOps Engineer, and AI-focused Data Scientist. Those titles are already spreading across job boards, but the winning candidates will likely be the ones who can explain tradeoffs, not the ones who only know model names.

Is this roadmap worth following?

Yes, with one warning: a roadmap is only useful if you turn it into a sequence of shipped work. If you spend six months collecting concepts without building a classifier, a retrieval app, and one agent workflow, you will still feel behind when you apply for jobs.

The repository is useful because it gives structure to a crowded field. It says, in effect, learn the math, learn the tooling, build the projects, then learn how to connect models to real tasks. That is a better plan than chasing every new model release.

If you want a practical next step, pick one item from each stage: a tabular ML project, one deep learning experiment in PyTorch, one GenAI app with retrieval, and one agent that uses tools. Publish the code, write down the tradeoffs, and measure something concrete like latency, accuracy, or cost.
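For the “measure something concrete” step, even a tiny harness beats anecdotes. This sketch times each prediction with `time.perf_counter` and reports accuracy, median latency, and a nearest-rank p95 latency. The `predict` function is a hypothetical keyword rule standing in for a real churn model, and the labeled examples are made up for illustration.

```python
import math
import statistics
import time
from typing import Callable


def evaluate(predict: Callable[[str], str], examples: list[tuple[str, str]]) -> dict:
    """Run predict over labeled examples; return accuracy and latency stats in milliseconds."""
    latencies_ms = []
    correct = 0
    for text, label in examples:
        start = time.perf_counter()
        pred = predict(text)
        latencies_ms.append((time.perf_counter() - start) * 1000)
        correct += int(pred == label)
    latencies_ms.sort()
    p95_index = max(0, math.ceil(0.95 * len(latencies_ms)) - 1)  # nearest-rank percentile
    return {
        "accuracy": correct / len(examples),
        "median_ms": statistics.median(latencies_ms),
        "p95_ms": latencies_ms[p95_index],
    }


def predict(text: str) -> str:
    # Hypothetical stand-in for a real model call.
    return "churn" if "cancel" in text.lower() else "stay"


examples = [
    ("I want to cancel my plan", "churn"),
    ("Love the new features", "stay"),
    ("How do I cancel?", "churn"),
    ("Billing is too high, thinking of leaving", "churn"),
]
report = evaluate(predict, examples)
print(f"accuracy={report['accuracy']:.2f} "
      f"median={report['median_ms']:.3f}ms p95={report['p95_ms']:.3f}ms")
```

The keyword rule misses the “thinking of leaving” example, which is the point: a written-down number makes that gap visible and gives you something to improve against when you swap in a real model.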

My prediction is simple: the people who benefit most from roadmaps like this will be the ones who treat them as a build list, not a reading list. If you are choosing where to start this month, ask yourself one question: which project would prove you can move from model to product without hand-waving?
