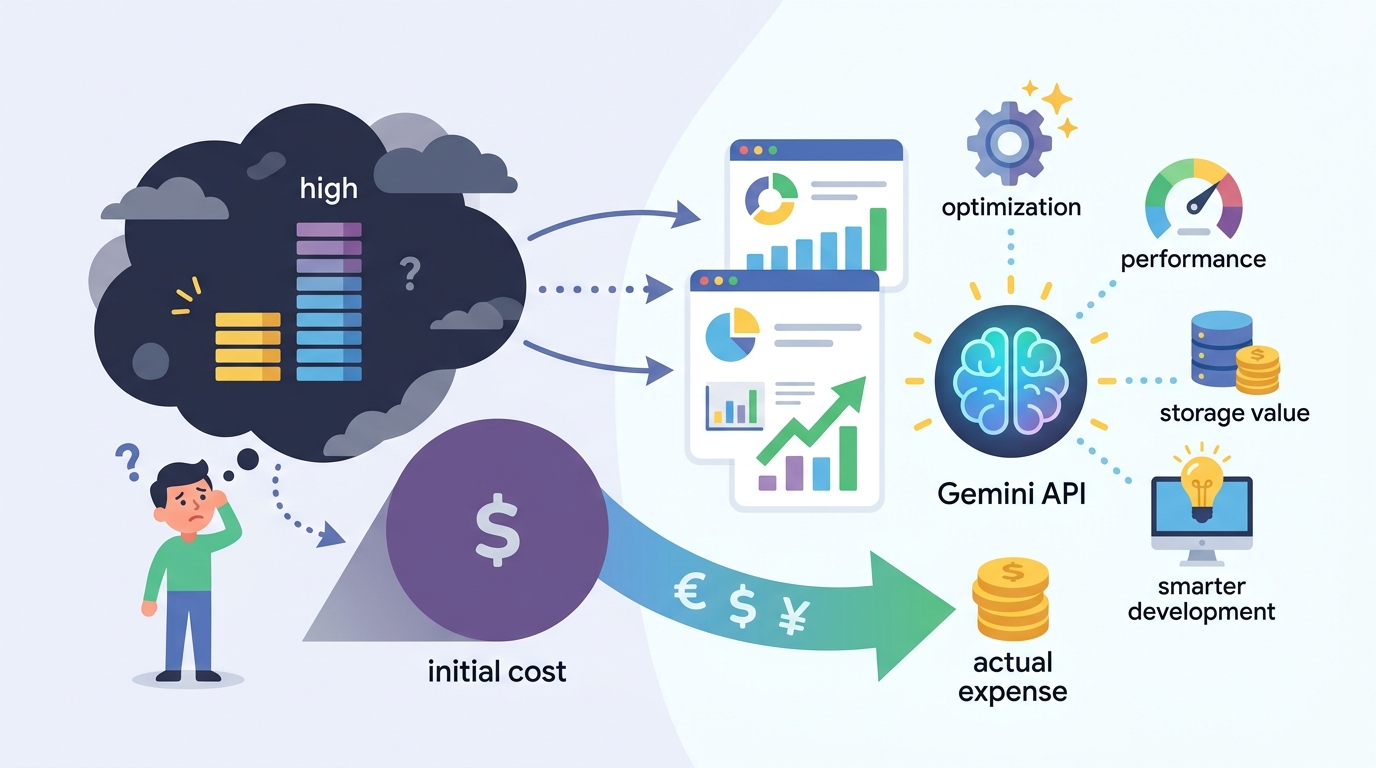

Why Gemini API pricing is cheaper than it looks

Gemini’s sticker price is low, but the real cost is integration, caching, and model choice.

Google’s Gemini API is not expensive in the way teams usually mean expensive. The raw token rates are competitive, and the latest 3.1 family pushes flagship reasoning into a range that undercuts the most premium alternatives. What makes Gemini costly is not the invoice line for tokens; it is the engineering work around it, from prompt design to context management to feature-specific charges like grounding and media generation.

First, the model prices are only the headline

On paper, Gemini 3.1 Pro starts at $2 per million input tokens and $12 per million output tokens for contexts at or below 200K, with a higher tier at $4 and $18 once prompts go past that threshold. That sounds simple until you compare it to the actual workload. A product team shipping a support agent, coding assistant, or research workflow does not buy “tokens”; it buys answers, and answers depend on how much context you stuff into the request.
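To make the two-tier structure concrete, here is a minimal sketch of a per-request cost estimate using the rates quoted above. It assumes the tier is selected by prompt size and that the higher rates then apply to the whole request; the function name and the example token counts are hypothetical.

```python
# Rates quoted above, in USD per million tokens.
# Each entry: (largest prompt size covered, input rate, output rate).
PRO_TIERS = [
    (200_000, 2.00, 12.00),
    (float("inf"), 4.00, 18.00),
]


def pro_request_cost(input_tokens: int, output_tokens: int) -> float:
    """Estimate the USD cost of one Gemini 3.1 Pro request.

    Assumption: the prompt size picks the tier, and that tier's rates
    apply to every input and output token in the request.
    """
    for max_prompt, in_rate, out_rate in PRO_TIERS:
        if input_tokens <= max_prompt:
            return (input_tokens * in_rate + output_tokens * out_rate) / 1_000_000
    raise ValueError("input_tokens must be a finite number")


# A 50K-token prompt versus a 250K-token prompt, each with a 1K-token answer.
small = pro_request_cost(50_000, 1_000)
large = pro_request_cost(250_000, 1_000)
print(f"50K prompt:  ${small:.3f}")
print(f"250K prompt: ${large:.3f}")
```

Under these assumptions the prompt grows fivefold but the request costs roughly nine times as much, because crossing the threshold doubles the input rate as well.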

The pricing table itself proves the point. Gemini 3.1 Flash-Lite sits at $0.25 per million input tokens and $1.50 per million output tokens, while Gemini 3 Flash costs $0.50 and $3.00. That spread is not a minor discount. It is a decision tree. If your use case tolerates lower reasoning depth, moving from Pro to Flash-Lite cuts both the input and the output rate by a factor of eight without leaving the Gemini ecosystem. If you do not make that choice deliberately, you will overpay by default.
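The spread between tiers is easier to judge against a workload than against a rate card. The sketch below prices the same hypothetical traffic on each model, using the rates quoted in this article; the request volume and token counts are invented for illustration, and the Pro figure assumes every prompt stays at or below 200K tokens.

```python
# USD per million tokens (input, output), as quoted in this article.
RATES = {
    "Gemini 3.1 Pro": (2.00, 12.00),        # tier for prompts <= 200K tokens
    "Gemini 3 Flash": (0.50, 3.00),
    "Gemini 3.1 Flash-Lite": (0.25, 1.50),
}


def monthly_cost(model: str, requests: int, in_tokens: int, out_tokens: int) -> float:
    """USD per month for `requests` calls of the given average size."""
    in_rate, out_rate = RATES[model]
    per_request = (in_tokens * in_rate + out_tokens * out_rate) / 1_000_000
    return requests * per_request


# Hypothetical workload: 1M requests a month, 2,000 tokens in, 500 tokens out.
for model in RATES:
    cost = monthly_cost(model, 1_000_000, 2_000, 500)
    print(f"{model:<24} ${cost:,.0f}")
```

For this made-up workload the bill ranges from about $10,000 a month on Pro to about $1,250 on Flash-Lite, which is the eightfold gap in the rates carried straight through to the invoice.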

Second, context is the real budget killer

Gemini 3.1 Pro advertises a 2 million token context window, which is the kind of feature leaders love to mention in demos and finance teams hate to see in production. The moment you cross 200K tokens, the model jumps to the higher price tier. That means the same product can swing from reasonable to wasteful based on a single architectural choice: whether you resend a giant prompt every turn or design the system to retrieve only what is relevant.

Google’s own pricing makes the fix obvious. Context caching on Gemini 3.1 Pro drops repeated-context costs to $0.20 or $0.40 per million tokens depending on the context tier, one tenth of the standard $2 and $4 input rates. For applications that resend a large prompt on every call, that works out to input savings of up to 90 percent. That is not a nice-to-have optimization. It is the difference between a prototype that looks affordable and a production system that survives a real usage spike.
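A rough sketch of what caching does to the input side of a single call, using the lower-tier rates above. It deliberately ignores output tokens and any cache storage or cache-creation charges, which this article does not cover; the function name and token counts are hypothetical.

```python
INPUT_RATE = 2.00   # USD per million uncached input tokens (<= 200K tier)
CACHED_RATE = 0.20  # USD per million cached tokens (<= 200K tier)


def input_cost(shared_tokens: int, fresh_tokens: int, use_cache: bool) -> float:
    """USD input cost of one call.

    shared_tokens: context repeated on every call (system prompt, documents).
    fresh_tokens:  the part that changes per call (the user's question).
    Assumption: cached tokens are billed at CACHED_RATE and nothing else.
    """
    shared_rate = CACHED_RATE if use_cache else INPUT_RATE
    return (shared_tokens * shared_rate + fresh_tokens * INPUT_RATE) / 1_000_000


# Hypothetical call: 100K tokens of repeated context plus a 500-token question.
without_cache = input_cost(100_000, 500, use_cache=False)
with_cache = input_cost(100_000, 500, use_cache=True)
savings = 1 - with_cache / without_cache
print(f"uncached: ${without_cache:.3f}  cached: ${with_cache:.3f}  saved: {savings:.1%}")
```

The saving approaches 90 percent only as the repeated context dominates the prompt; the fresh tokens in each call are still billed at the full input rate.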

Third, batch and tier selection change the economics

Batch API pricing cuts costs by 50 percent for asynchronous workloads. Gemini 3.1 Pro falls to $1 and $6 per million tokens at the lower context tier, or $2 and $9 at the higher one. For teams running offline summarization, document extraction, enrichment, or backfill jobs, paying full price is a mistake. If the work does not need an immediate response, you should not pay for immediacy.
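The batch figures follow directly from halving the standard rates, which a few lines of arithmetic can confirm. The backfill job at the end is a hypothetical example, and the helper names are illustrative rather than part of any Google SDK.

```python
from typing import Tuple

BATCH_DISCOUNT = 0.5  # 50 percent off for asynchronous workloads

# Standard Gemini 3.1 Pro rates (input, output) in USD per million tokens.
STANDARD_RATES = {"<=200K": (2.00, 12.00), ">200K": (4.00, 18.00)}


def batch_rates(tier: str) -> Tuple[float, float]:
    """Apply the batch discount to a tier's standard rates."""
    in_rate, out_rate = STANDARD_RATES[tier]
    return in_rate * BATCH_DISCOUNT, out_rate * BATCH_DISCOUNT


def job_cost(n_docs: int, in_tokens: int, out_tokens: int,
             rates: Tuple[float, float]) -> float:
    """USD cost of processing n_docs documents of the given average size."""
    in_rate, out_rate = rates
    return n_docs * (in_tokens * in_rate + out_tokens * out_rate) / 1_000_000


# Hypothetical backfill: 100,000 documents, 3,000 tokens in, 300 tokens out.
standard = job_cost(100_000, 3_000, 300, STANDARD_RATES["<=200K"])
batch = job_cost(100_000, 3_000, 300, batch_rates("<=200K"))
print(f"standard: ${standard:,.0f}  batch: ${batch:,.0f}")
```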

The same logic applies to the older but still useful 2.5 family. Gemini 2.5 Flash-Lite lands at $0.10 per million input tokens and $0.40 per million output tokens, and the Batch version halves that again. For high-volume, low-stakes tasks like classification, routing, or simple extraction, this is the rational default. The expensive model is not “better” in the abstract. It is only better when the task actually needs its reasoning depth.
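Making the cheap model the default can be as simple as a routing function in front of the API call. The sketch below is one possible shape: the task categories are hypothetical, the strings are the model names used in this article rather than API model IDs, and the mid-tier fallback is an assumption of this example.

```python
# Task types cheap enough in stakes to go to the smallest model (hypothetical labels).
LOW_STAKES_TASKS = {"classification", "routing", "simple_extraction"}


def pick_model(task_type: str, needs_deep_reasoning: bool = False) -> str:
    """Choose the cheapest model tier that fits the task."""
    if needs_deep_reasoning:
        return "Gemini 3.1 Pro"
    if task_type in LOW_STAKES_TASKS:
        return "Gemini 2.5 Flash-Lite"
    # Assumed middle ground for everything else.
    return "Gemini 3 Flash"


print(pick_model("classification"))
print(pick_model("contract_review", needs_deep_reasoning=True))
```

The point of the design is that Pro has to be requested explicitly; nothing reaches the expensive tier by accident.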

The counter-argument

The strongest case against this view is that Gemini pricing is still complicated enough to create hidden costs. Grounding with Google Search and audio, image, video, and music generation all carry separate charges, and pricing on Vertex AI differs from the direct API. A team can absolutely misread the bill if it treats the token table as the whole story. In that sense, “cheap” is a dangerous word because it invites sloppy planning.

That criticism is fair, but it does not overturn the conclusion. Complexity is not the same as high cost. In fact, Gemini is cheaper precisely because Google exposes so many levers: model tier, context size, batch mode, caching, and modality-specific pricing. A disciplined team will use those levers to lower spend. The only teams that get burned are the ones that refuse to design for cost from the start.

What to do with this

If you are an engineer, build cost into the architecture before you ship. Route simple requests to Flash-Lite, reserve Pro for hard reasoning, cache repeated context, and move non-urgent jobs to Batch. If you are a PM or founder, stop asking whether Gemini is cheap in general and start asking what one successful user action costs at your expected volume. That is the number that matters. If you cannot answer it, you do not have a pricing strategy, you have a guess.
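The cost of one successful user action can be estimated with a single formula: model calls per attempt, times cost per call, divided by the share of attempts that succeed. The sketch below uses invented numbers, and treating every failed attempt as fully paid for is an assumption of this example.

```python
def cost_per_successful_action(calls_per_action: float,
                               cost_per_call: float,
                               success_rate: float) -> float:
    """USD spent per successful user action.

    Total spend is attempts * calls_per_action * cost_per_call, and
    successes are attempts * success_rate, so attempts cancel out.
    """
    if not 0 < success_rate <= 1:
        raise ValueError("success_rate must be in (0, 1]")
    return calls_per_action * cost_per_call / success_rate


# Hypothetical support agent: 4 model calls per ticket at $0.01 each,
# and 80 percent of tickets resolved without a human.
unit_cost = cost_per_successful_action(4, 0.01, 0.80)
monthly = unit_cost * 200_000  # expected successful resolutions per month
print(f"per resolved ticket: ${unit_cost:.3f}  per month: ${monthly:,.0f}")
```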
