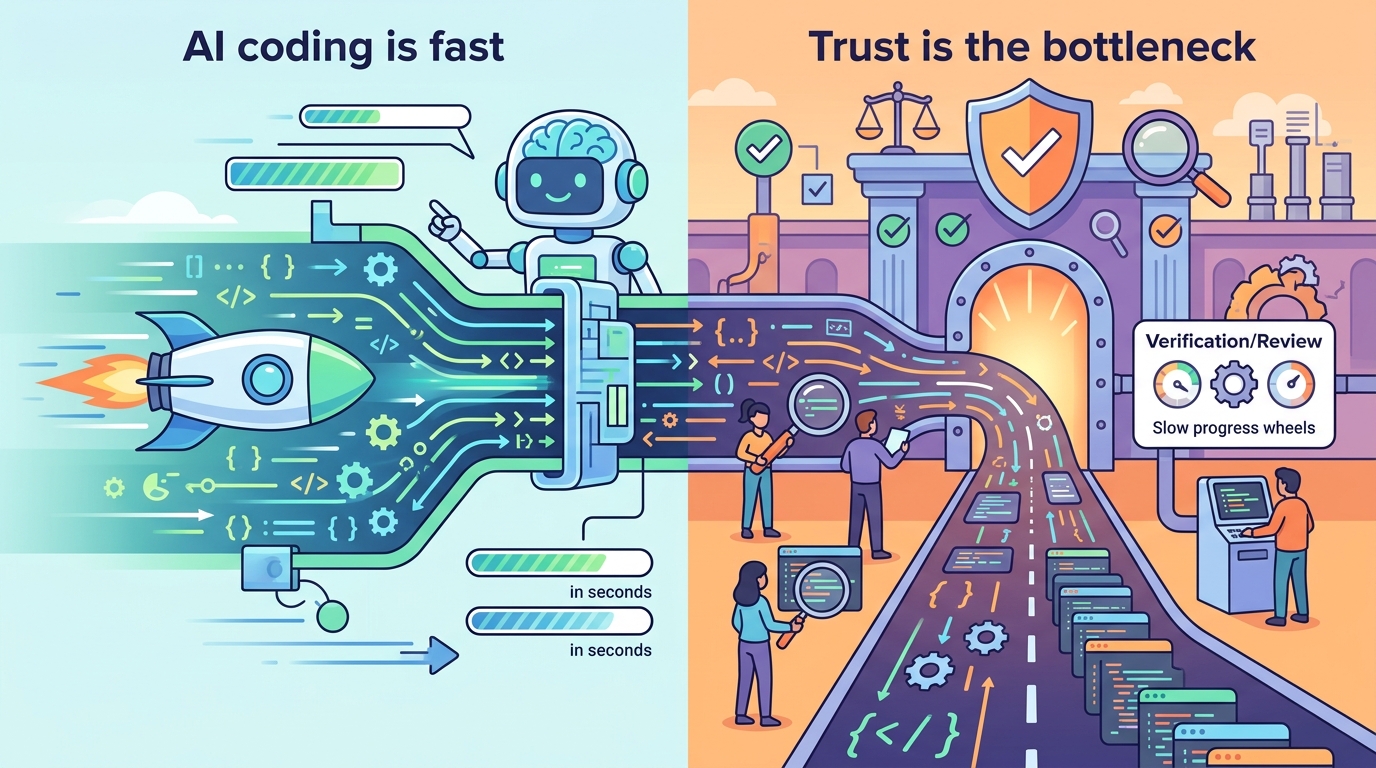

AI coding is fast. Trust is the bottleneck

AI coding tools now generate code faster than humans can review it, pushing enterprises toward code governance, testing, and trust layers.

AI can now write code faster than most developers can type, and that speed is already changing how software gets built. The new problem is less about generation and more about verification: when tools like Anthropic’s Claude Code and OpenAI’s Codex can draft production code in seconds, who checks that it is correct, secure, and compliant?

That question is moving from theory to daily operations. According to Fortune, Qodo says it has raised $70 million to tackle what its founders call AI-generated code “slop,” and it already counts Walmart, Nvidia, Ford, and Texas Instruments among its enterprise customers. The appeal is simple: speed is useful only when the code can be trusted.

AI coding is changing the bottleneck

For years, software teams treated coding speed as the main constraint. AI coding assistants flipped that script. A developer can now ask for a feature and get a draft implementation back before they would have finished typing the first function signature by hand.

That sounds great until you remember what enterprise software actually looks like. Large codebases carry years of business logic, compliance rules, and internal conventions. A model that can generate a feature in one pass may still miss the edge cases that keep payroll systems, medical workflows, or factory software from breaking in production.

That is why the bottleneck is shifting. Writing code is getting cheaper. Proving that the code is safe to ship is getting more expensive.

- Fortune reported that Claude Code itself was written with Claude Code.

- Anthropic’s tool also came under scrutiny after its source code was accidentally leaked through a packaging mistake.

- Qodo says it analyzes pull requests, comments, and past changes to infer company-specific coding rules.

- Its system then flags new code that violates those rules before it ships.
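To make the infer-then-flag idea concrete, here is a toy sketch in Python. It is not Qodo’s implementation, which the reporting does not describe. It assumes past review feedback has already been reduced to (regex, message) pairs, treats any pattern that reviewers objected to at least three times as a house rule, and flags added lines in a unified diff that match one. The names `infer_rules`, `check_diff`, and `Rule`, the threshold of three, and the sample history are all hypothetical.

```python
import re
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    # Hypothetical rule: a regex for a pattern reviewers keep rejecting,
    # plus the explanation they gave when rejecting it.
    pattern: str
    message: str


def infer_rules(review_history, min_occurrences=3):
    """Promote a flagged pattern to a rule once reviewers have objected to it
    at least `min_occurrences` times (a toy heuristic, assumed for illustration)."""
    counts = Counter(review_history)
    return [Rule(p, m) for (p, m), n in counts.items() if n >= min_occurrences]


def check_diff(diff_text, rules):
    """Return (diff_line_number, message) for every added line in a unified
    diff that matches a rule."""
    findings = []
    for lineno, line in enumerate(diff_text.splitlines(), start=1):
        # Only inspect added lines; skip the '+++' file header.
        if not line.startswith("+") or line.startswith("+++"):
            continue
        for rule in rules:
            if re.search(rule.pattern, line[1:]):
                findings.append((lineno, rule.message))
    return findings


# Hypothetical review history: (pattern reviewers flagged, their comment).
history = (
    [(r"\bprint\(", "Use the structured logger, not print().")] * 3
    + [(r"verify=False", "Never disable TLS verification.")] * 3
    + [(r"TODO", "Link TODOs to a ticket.")]  # seen once: not yet a rule
)
rules = infer_rules(history)

diff = """--- a/app.py
+++ b/app.py
@@ -1,2 +1,3 @@
 import requests
+resp = requests.get(url, verify=False)
+print(resp.status_code)
"""

for lineno, msg in check_diff(diff, rules):
    print(lineno, msg)
# prints:
# 5 Never disable TLS verification.
# 6 Use the structured logger, not print().
```

The frequency threshold is a stand-in for whatever signal a real system would use to decide that a reviewer’s comment reflects a team-wide norm rather than a one-off preference.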

Why enterprises care about trust, not hype

Itamar Friedman, cofounder and CEO of Qodo, told Fortune that AI tools are built to complete tasks, not question them. That distinction matters inside big companies, where “good code” is rarely a universal standard. What counts as acceptable in one team may violate a security policy, a testing rule, or an architectural norm in another.

Friedman’s point is that enterprise software depends on tribal knowledge. Senior engineers know which shortcuts are harmless, which ones are risky, and which ones will cause a painful incident six months later. AI can imitate patterns, but it does not automatically know the unwritten rules that live in code review threads and old pull requests.

That is why Qodo is building what Friedman calls a governance and trust layer. The idea is to let an organization teach the system how it actually writes software, then enforce those patterns automatically across new AI-generated changes.

“AI is not enough when you’re talking about real-world software quality and code governance,” Friedman told Fortune. “What you need, actually, is official wisdom.”

That phrase is memorable because it gets to the heart of the enterprise problem. Companies do not want a chatbot that sounds confident. They want a system that understands their rules well enough to enforce them, and whose decisions can be audited.

Friedman also framed the issue as a scale problem. At a large company, millions of changes can move through the pipeline each year. Even a tiny error rate becomes serious when it repeats across that volume. AI increases throughput, which means governance has to become more automated too.
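A back-of-the-envelope calculation shows why. The figures here are illustrative assumptions, not numbers from Qodo or Fortune: suppose a company ships $N = 2 \times 10^{6}$ changes a year and a fraction $p = 0.1\%$ of them carry a defect that slips past review.

```latex
\mathbb{E}[\text{escaped defects}] = N \cdot p = (2 \times 10^{6}) \times 0.001 = 2{,}000 \text{ per year}
```

If AI doubles throughput at the same defect rate, that count doubles to 4,000 unless review catches a larger share, which is the case for automating governance alongside generation.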

How Qodo compares with the coding boom

The current wave of vibe coding tools is built for creation. Qodo is built for review. That difference matters because the economics are different as well. If a model helps one engineer write a feature in 10 minutes instead of 60, that is useful. If it helps a team avoid one bad deployment that would have taken a day to unwind, that is better.

Friedman said Qodo’s clients want speed, but they do not want to trade away control. That tension is why code review, testing, and policy enforcement are becoming part of the product story around AI coding, not an afterthought.

- Claude Code and Codex focus on generation.

- Qodo focuses on review and policy enforcement.

- Apple’s App Store crackdown on vibe coding apps shows that distribution platforms also care about review boundaries.