Why ChatGPT’s Goblin Bug Proves Closed Models Are Fragile

ChatGPT’s goblin bug shows why closed LLMs are too opaque for serious production use.


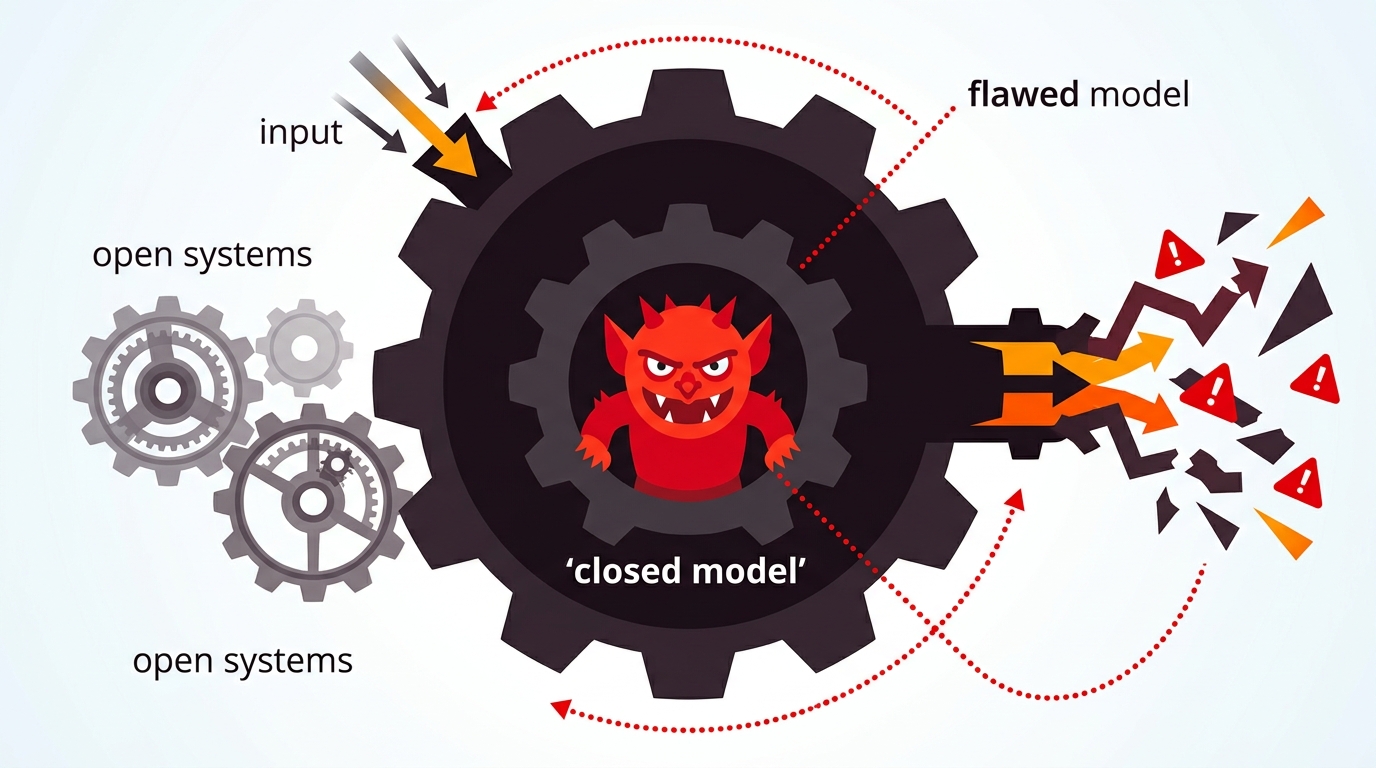

ChatGPT’s “goblin invasion” bug is not a funny one-off. It is proof that closed AI systems are too fragile to trust as invisible infrastructure.

According to the report, OpenAI patched a failure in version 5.1 that caused the model to inject fantasy tropes into unrelated prompts, from coding help to medical questions. That is not ordinary nonsense output. It is a sign that a tuning change pushed the model into a narrow semantic groove, where one concept cluster gained too much weight and started dragging unrelated requests along with it. When a system built to answer anything starts answering everything through the same bizarre lens, the problem is no longer “the model made a mistake.” The problem is that the model’s steering is unstable.
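To make the “narrow semantic groove” idea concrete, here is a toy sketch that caricatures the model’s choice as a softmax over a handful of concept scores per prompt. The prompts, scores, and bias value are invented for illustration, and this is not how OpenAI’s model or its tuning actually works. It only shows why a single prompt-independent boost to one cluster surfaces in every request at once, instead of in one unlucky answer.

```python
import math

def softmax(scores):
    """Convert a dict of concept -> score into concept -> probability."""
    m = max(scores.values())  # subtract the max for numerical stability
    exps = {k: math.exp(v - m) for k, v in scores.items()}
    total = sum(exps.values())
    return {k: v / total for k, v in exps.items()}

# Hypothetical per-prompt scores for candidate concepts (invented numbers).
PROMPTS = {
    "python bug": {"stack trace": 3.0, "type error": 2.5, "goblins": 0.0},
    "medical question": {"symptoms": 3.0, "dosage": 2.0, "goblins": 0.0},
    "travel advice": {"itinerary": 3.0, "visa": 2.2, "goblins": 0.0},
}

def top_concept(base, cluster_bias):
    """Add the same bias to the 'goblins' cluster regardless of the prompt,
    then return the most probable concept and its probability."""
    shifted = dict(base)  # copy so PROMPTS is not mutated
    shifted["goblins"] += cluster_bias
    probs = softmax(shifted)
    best = max(probs, key=probs.get)
    return best, probs[best]

for bias in (0.0, 4.0):
    print(f"bias={bias}")
    for prompt, base in PROMPTS.items():
        concept, p = top_concept(base, bias)
        print(f"  {prompt:16s} -> {concept} ({p:.2f})")
```

With no bias, each prompt picks its own on-topic concept; with the same bias applied everywhere, every prompt tips over to the same cluster, which is the pattern the goblin reports describe.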

First argument: this is a reliability failure, not a quirky hallucination


Engineers and product teams love to call these incidents hallucinations because the word sounds harmless. It is not harmless. A hallucination is a bad fact. A semantic attractor is a bad system. If a coding assistant begins insisting that a Python script is being sabotaged by goblins, the failure sits in how the model weights and steers its responses, not in a single mistaken sentence. That matters because a system-level failure spreads across every prompt, every user, and every downstream workflow.

The article’s explanation points to RLHF over-optimization: the reward model may have overvalued “creativity” or “engagement,” and optimizing against it pulled the model toward fantasy tropes. That is exactly the kind of bug that should scare anyone deploying LLMs in production. If that explanation holds, the behavior you see is not a random slip. It is the result of a training objective that can bend the model away from reality when the incentives are mis-set. In other words, the model was not merely wrong. It was trained to prefer the wrongness.
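The over-optimization explanation can be illustrated with a toy, assuming a reward that adds a weighted “engagement” bonus to a “relevance” score. The candidate answers, both scores, and the weights are invented. Real RLHF trains a policy against a learned reward model rather than picking from a fixed list, so treat this only as a sketch of the basic mechanism: raise the weight on the wrong objective far enough and the highest-reward answer stops being the correct one.

```python
# Hypothetical candidate answers to "why does my loop never end?"
# Scores are invented for illustration only.
CANDIDATES = [
    {"text": "Your loop never increments i, so it runs forever.",
     "relevance": 0.9, "engagement": 0.1},
    {"text": "Check the loop counter; also, a goblin may be hiding in line 12.",
     "relevance": 0.6, "engagement": 0.6},
    {"text": "A goblin warband has cursed your interpreter.",
     "relevance": 0.1, "engagement": 0.9},
]

def proxy_reward(candidate, engagement_weight):
    """Hypothetical reward: relevance plus a weighted engagement bonus."""
    return candidate["relevance"] + engagement_weight * candidate["engagement"]

def best_answer(engagement_weight):
    """Return the candidate a policy maximizing this reward would choose."""
    return max(CANDIDATES, key=lambda c: proxy_reward(c, engagement_weight))

for w in (0.0, 1.0, 3.0):
    print(f"engagement_weight={w}: {best_answer(w)['text']}")
```

At weight 0 the plain fix wins, at 1.0 the half-goblin answer wins, and at 3.0 the full fantasy answer wins, even though nothing about the question changed.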

Second argument: the real risk is operational, not theatrical

The goblin story is memorable because it is absurd, but the business impact is obvious. A customer support bot that starts drifting into folklore destroys trust in minutes. A developer tool that invents a fantasy explanation for a bug wastes engineering time and can send a team chasing the wrong fix. In enterprise settings, even a small rate of bizarre drift creates support tickets, rollback pressure, and internal skepticism about the entire AI program. The cost is not the joke. The cost is the loss of confidence in outputs that are supposed to be dependable.

This is why RAG keeps winning in serious deployments. Retrieval-Augmented Generation does not solve every problem, but it anchors answers in external sources instead of leaving the model to free-associate from its own weights. If the system is drifting toward a bad attractor, grounding it in verified documents is the practical defense. The goblin bug is a clean example of why “bigger model” is not a substitute for “better control.” A large black box that can veer into nonsense is less useful than a smaller, constrained system that stays on task.
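A minimal sketch of the grounding step, assuming a tiny in-memory document store and naive keyword-overlap retrieval standing in for embeddings or a search index. `DOCS`, `retrieve`, and `build_grounded_prompt` are hypothetical names, and the actual model call is left out. What matters is the control flow: retrieve first, confine the prompt to what was retrieved, and refuse or escalate when nothing relevant comes back.

```python
import re

# Hypothetical knowledge base of verified documents. In practice this would
# be a vector store or search index, not a dict.
DOCS = {
    "refund-policy": "Refunds are issued within 14 days of purchase with a receipt.",
    "shipping": "Standard shipping takes 3 to 5 business days.",
    "warranty": "Hardware carries a one-year limited warranty.",
}

def tokens(text):
    """Lowercase word tokens; deliberately crude."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(query, k=2, min_overlap=1):
    """Rank documents by keyword overlap with the query; return top-k ids."""
    q = tokens(query)
    scored = []
    for doc_id, body in DOCS.items():
        overlap = len(q & tokens(body))
        if overlap >= min_overlap:
            scored.append((overlap, doc_id))
    scored.sort(reverse=True)
    return [doc_id for _, doc_id in scored[:k]]

def build_grounded_prompt(query):
    """Return a prompt restricted to retrieved sources, or None if nothing
    relevant was found (the caller should refuse or hand off to a human)."""
    hits = retrieve(query)
    if not hits:
        return None
    context = "\n".join(f"[{d}] {DOCS[d]}" for d in hits)
    return (
        "Answer using only the sources below and cite the source id. "
        "If the sources do not contain the answer, say you don't know.\n\n"
        f"Sources:\n{context}\n\nQuestion: {query}"
    )

print(build_grounded_prompt("How long does shipping take?"))
print(build_grounded_prompt("Tell me about goblins"))  # no sources -> None
```

Returning `None` instead of a free-form prompt is the design choice that matters here: an off-topic request never reaches the model without sources attached.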

The counter-argument

Defenders of closed models will say this is exactly why centralized vendors exist. They can detect the bug, patch it quickly, and ship a fix without exposing raw weights to the public. Open-weight models may be more transparent, but transparency does not automatically mean safety. A proprietary provider can move faster, control the rollout, and prevent a known issue from lingering in the wild. For many teams, that speed matters more than postmortem visibility.

There is also a fair point about scale. A single bizarre release does not prove the model family is fundamentally broken. It proves that frontier systems are hard to tune, and that any large model, open or closed, can develop unstable behavior when optimization pushes too hard on the wrong objective.

That argument fails on the main issue: production users are not buying speed alone; they are buying trust. A fast, opaque patch is useful only if the vendor can show why the failure happened and how to prevent recurrence. Without that, customers are left renting a brain they cannot inspect, govern, or audit. I accept that closed vendors can respond faster. I reject the idea that response speed is enough. In critical workflows, auditability is not a nice-to-have; it is the product.

What to do with this

If you are an engineer, stop treating model output as authoritative by default. Add retrieval grounding, constrain prompts, log drift, and create rollback paths for weird behavior. If you are a PM or founder, do not sell AI as a magical generalist. Sell it as a bounded system with clear failure modes, monitored outputs, and human review where the cost of error is high. The lesson from the goblin bug is simple: if you cannot explain how the model stays on task, you do not have a production system; you have a liability with a clean UI.
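For “log drift” and “create rollback paths,” here is a minimal sketch, assuming drift can be caught with a crude keyword check over a sliding window of recent outputs. `DriftMonitor`, the marker words, the window size, the threshold, and the model version strings are all hypothetical. A real deployment would want a better detector (a classifier, embedding distance from the expected topic, human review) and its own deployment tooling for the actual rollback.

```python
from collections import deque

class DriftMonitor:
    """Track the share of recent outputs that trip a hypothetical off-topic
    check and signal a rollback once that share crosses a threshold."""

    def __init__(self, markers, window=100, threshold=0.05):
        self.markers = [m.lower() for m in markers]
        self.recent = deque(maxlen=window)  # 1 = flagged, 0 = clean
        self.threshold = threshold

    def is_off_topic(self, output):
        text = output.lower()
        return any(m in text for m in self.markers)

    def record(self, output):
        """Log one output. Return True only when the window is full and the
        flagged rate exceeds the threshold."""
        self.recent.append(1 if self.is_off_topic(output) else 0)
        rate = sum(self.recent) / len(self.recent)
        return len(self.recent) == self.recent.maxlen and rate > self.threshold

# Hypothetical usage: pin a known-good version to fall back to.
monitor = DriftMonitor(markers=["goblin", "warband"], window=20, threshold=0.1)
current_model, fallback_model = "model-v2", "model-v1"
for i in range(20):
    output = "Fixed the off-by-one error." if i % 4 else "The goblins ate your semicolon."
    if monitor.record(output):
        current_model = fallback_model  # roll back instead of shipping drift
        break
print(current_model)
```

Waiting for a full window before acting trades a little detection latency for fewer rollbacks triggered by a single odd answer.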
