Claude Code hits limits faster than users expect

Anthropic says it is fixing Claude Code limits after users reported burning through tokens far faster than expected, including on paid plans.

Anthropic’s Claude Code is getting a lot of attention for the wrong reason: users say they are hitting usage limits far faster than they expected. The company says it is investigating the problem and called the fix its top priority after complaints spread on Reddit. One user on a $100-a-month plan said their free account lasted longer than the paid tier.

This matters because coding assistants are now part of daily developer workflows, not side experiments. When a tool burns through tokens in minutes, it can interrupt debugging, code review, and refactoring in the middle of a work session.

What users are seeing

The complaints are pretty specific. Developers say simple prompts are consuming a surprising amount of quota, and some are seeing usage jump in ways that do not match the size of the task. Anthropic said on Reddit that it was looking into the issue and that fixing it was the team’s top priority.

That wording matters. Anthropic is not treating this like a cosmetic bug. It is treating it like a billing and reliability problem, which is exactly what it is for people who depend on the tool to ship code.

- Claude Pro costs $20 per month

- Higher-usage plans cost $100 or $200 per month

- Some business customers pay custom enterprise rates

- Users report quota drops after short sessions and simple replies

The core irritation is transparency. Token-based pricing is common across AI tools, but the amount consumed by each prompt is often hard to predict. If a one-line response can eat a large chunk of a monthly allowance, developers cannot plan around it.

That uncertainty is especially painful in coding, where a small loop, a broken test, or a bad prompt can trigger a chain reaction of extra tokens. One Reddit user described a session where a loop drained a daily budget in minutes. Another said a simple one-sentence reply took usage from 59% to 100%.

Anthropic’s pricing problem is bigger than one bug

Anthropic built Claude Code to sit inside real developer workflows, so every pricing hiccup lands harder than it would in a casual chat app. Developers are used to paying for speed and quality, but they want predictable costs too. When those costs swing sharply, trust drops fast.

The timing is awkward because Anthropic also introduced peak-hour throttling on Claude last week, which means tokens can be consumed faster when demand is higher. That makes the service feel less like a fixed monthly tool and more like a meter that speeds up when traffic gets heavy.

“One session in a loop can drain your daily budget in minutes,” a user wrote on Reddit.

That quote captures the problem better than any corporate statement. AI coding tools are useful when they save time, but they become frustrating when the pricing model feels like it punishes normal development work.

Anthropic has not publicly broken down the exact cause of the issue, but the complaint pattern suggests something more than a single bad prompt. It could be metering, model behavior, or the interaction between usage caps and throttling. Whatever the root cause, the user experience is the same: the meter is moving too fast.

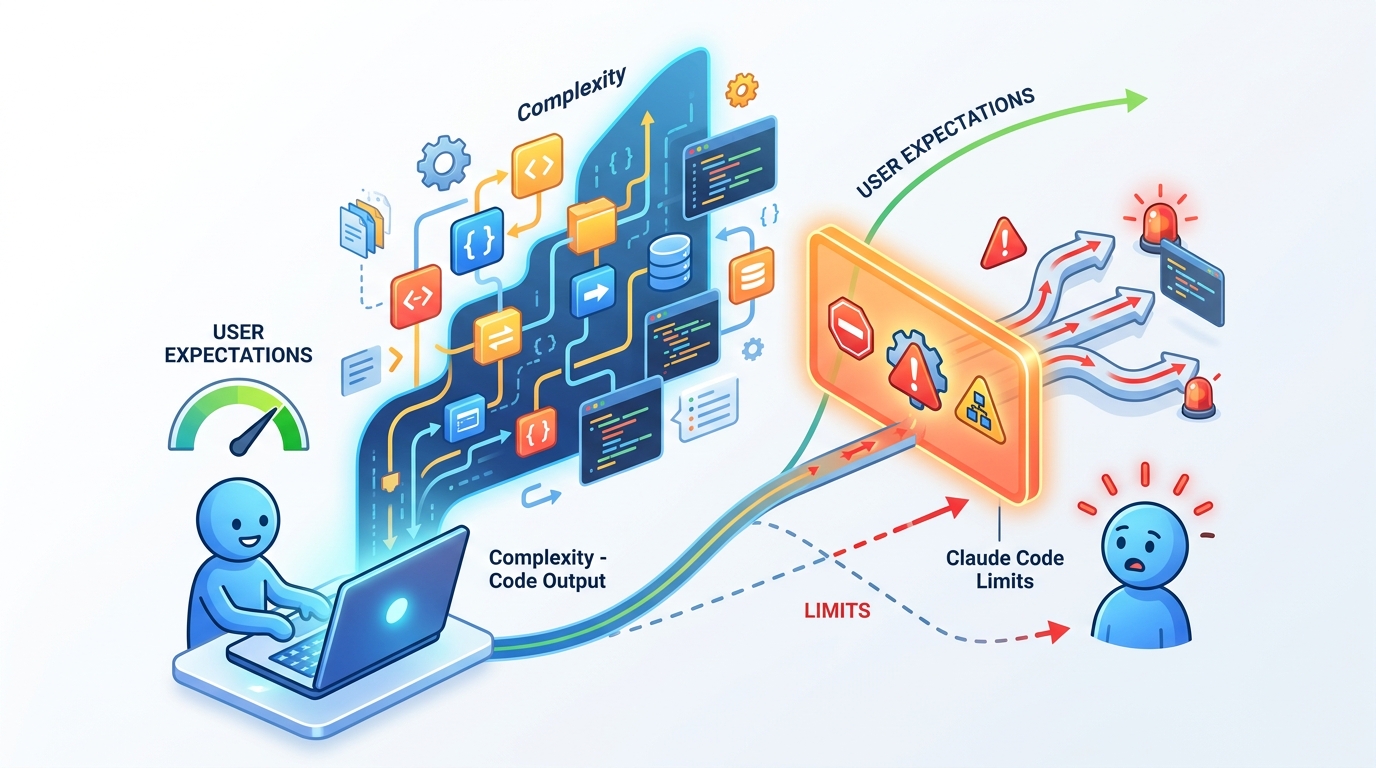

How Claude Code compares with other AI coding tools

Claude Code is part of a crowded field that includes ChatGPT, GitHub Copilot, and Cursor. Each one uses a different mix of subscriptions, rate limits, and model access rules, but the trade-off is the same: more power usually means more complexity around billing.

Anthropic’s public pricing is easy to understand on paper. The real problem is usage predictability. If you are comparing tools for day-to-day coding, the best plan on a spreadsheet is not always the best plan in a long debugging session.

- Claude Pro: $20 per month

- Claude higher tier: $100 per month

- Claude top tier: $200 per month

- GitHub Copilot: typically priced around $10 per month for individuals

- Cursor: individual plans start around $20 per month, with higher tiers for heavier use

Those numbers explain why this issue is getting attention. A $20 subscription feels manageable if it behaves like a steady utility. A $100 or $200 plan feels very different if a few prompts can wipe out the monthly allowance.

There is also a reputational angle. Anthropic recently disclosed that part of its internal source code for Claude Code was released due to human error, not a security breach. The company said no sensitive customer data or credentials were exposed. That incident did not create the current quota problem, but it adds to the sense that Claude Code is under stress from both product growth and operational mistakes.

Why developers care so much about token limits

Token limits sound abstract until they interrupt work. In practice, they decide whether an AI assistant is a helpful pair programmer or an expensive distraction. A developer can tolerate the occasional slow response. A developer cannot tolerate a tool that eats a monthly budget during one debugging loop.

That is why this story matters beyond Anthropic. AI tools are moving deeper into software engineering, and pricing clarity is becoming part of product quality. If the meter is unpredictable, adoption slows, especially inside teams that need to justify every recurring expense.

For Anthropic, the fix needs to do two things at once: stop the immediate quota issue and make usage easier to understand. If the company only patches the bug without improving reporting, the complaints will probably return the next time demand spikes or a prompt spirals.

The broader industry will be watching too. Developers have already shown they will switch tools quickly when costs become hard to predict. Claude Code can still keep its fans, but only if Anthropic makes the billing behavior legible enough for people to trust it during real work.

What happens next

Anthropic says the fix is its top priority, so the next few days should tell us whether this is a short-lived metering bug or a deeper pricing problem. My guess: the company will patch the immediate issue, then quietly tighten how it explains usage so users can see what is eating their tokens.

If you rely on Claude Code for daily work, the practical move is simple: track your usage closely, test short sessions before committing to a higher tier, and compare it with other tools if your budget is tight. The question now is not whether AI coding assistants are useful. It is whether their pricing can stay predictable enough for developers to keep trusting them.
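One way to track usage closely is to note the usage percentage after each step of a session and flag any step where it jumps more than expected. The Python sketch below is only an illustration under stated assumptions: the readings are typed in by hand, the `Reading` and `flag_jumps` names and the 10-point threshold are hypothetical, and it does not call any Anthropic or Claude Code API. The first two sample readings are invented for the example. The last step mirrors the 59% to 100% jump one Reddit user reported.

```python
from dataclasses import dataclass


@dataclass
class Reading:
    label: str           # what you just did, e.g. "one-sentence reply"
    percent_used: float  # usage percentage, copied by hand from your usage display


def flag_jumps(readings, threshold=10.0):
    """Return (label, delta) for each step where usage rose by more than `threshold` points."""
    jumps = []
    # Compare each reading with the one immediately before it.
    for prev, curr in zip(readings, readings[1:]):
        delta = curr.percent_used - prev.percent_used
        if delta > threshold:
            jumps.append((curr.label, delta))
    return jumps


# Illustrative log: the first two readings are made up for this example.
log = [
    Reading("session start", 55.0),
    Reading("small refactor", 59.0),
    Reading("one-sentence reply", 100.0),
]

for label, delta in flag_jumps(log):
    print(f"{label}: +{delta:.0f} points")  # prints "one-sentence reply: +41 points"
```

A log like this will not explain why the meter moved, but it gives you a concrete record to compare across sessions or to attach to a support request.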

For related coverage, see our report on Anthropic’s source code leak and our analysis of AI coding tool pricing.
