What FerresDB Shipped for Production Rust Search

FerresDB adds PolarQuant, HNSW auto-tuning, PITR, reranking, and Raft-backed distributed storage for production vector search.

FerresDB has moved past the “cool prototype” stage. In the latest update, the Rust vector database added HNSW auto-tuning, PolarQuant compression, point-in-time recovery, cross-encoder reranking, namespace isolation, and a distributed Raft foundation.

That is a lot of surface area for one project, but the direction is clear: this is no longer just about finding nearest neighbors quickly. It is about running vector search with the boring stuff included, the stuff teams usually discover only after their first incident.

From vector search demo to production system


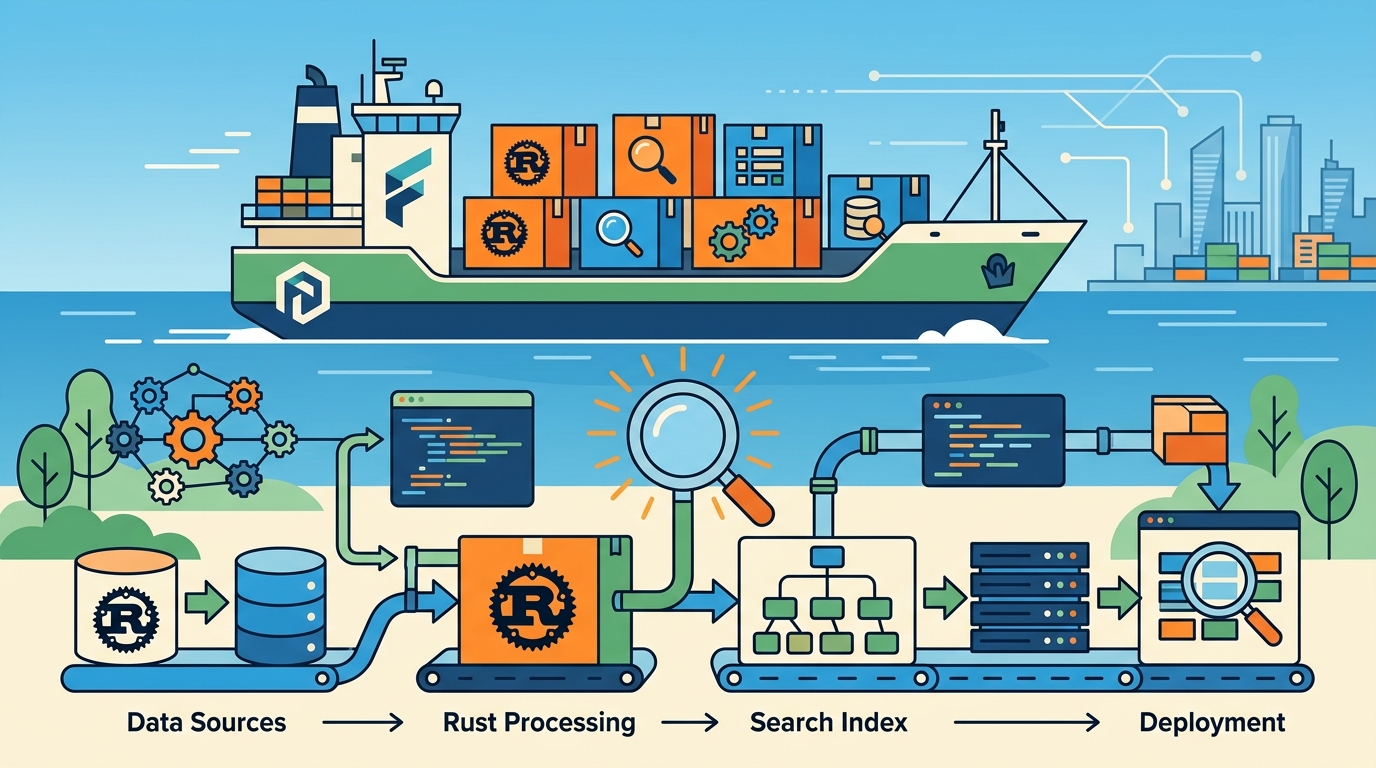

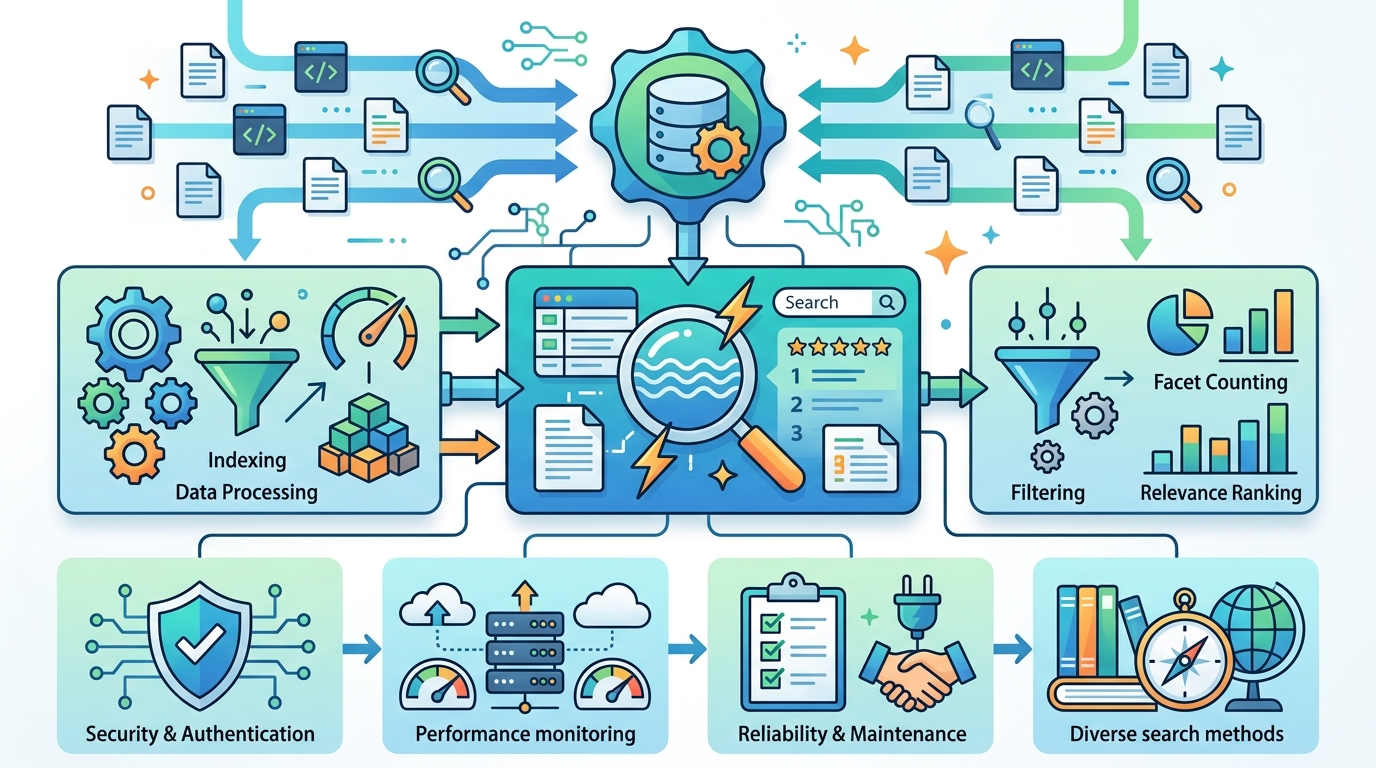

The base FerresDB already had a serious feature set before this round of work landed. It used an HNSW-style index for vector search, a write-ahead log, periodic snapshots, hybrid search with BM25, scalar quantization, tiered storage, WebSocket streaming, OpenTelemetry tracing, and RBAC with API keys.

That matters because the new features build on top of a real storage engine, not a toy index. The update reads like what happens when a search system starts answering hard questions from real users: How do we shrink memory use? How do we recover from mistakes? How do we keep latency predictable when traffic changes?

- HNSW supports cosine, Euclidean, and dot product metrics

- Scalar quantization compresses f32 vectors to u8 and cuts memory by about 4×

- Tiered storage keeps hot data in memory-mapped files

- OpenTelemetry spans track each search operation

- RBAC can restrict access with API keys and collection permissions

If you have built RAG systems or semantic search before, this list probably feels familiar. The difference is that FerresDB is trying to package these pieces into one Rust-native system instead of asking teams to stitch together a search library, a cache, a backup script, and a permissions layer.

PolarQuant changes the compression tradeoff

The most interesting storage change is PolarQuant, FerresDB’s new vector compression method. The older SQ8 approach calibrates each dimension from sampled vectors before the index can be used. That gives good accuracy, but it adds setup cost and keeps extra calibration data around.
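To make the calibration cost concrete, here is a minimal sketch of per-dimension scalar quantization. It is not FerresDB's code: the `Sq8Calibration` type, its fields, and the min/max calibration rule are assumptions chosen for illustration.

```rust
/// Per-dimension value ranges learned from a sample of vectors.
/// Hypothetical layout; FerresDB's SQ8 format is not described in the article.
pub struct Sq8Calibration {
    pub min: Vec<f32>,
    pub max: Vec<f32>,
}

impl Sq8Calibration {
    /// Scan the sample once and record the range of every dimension.
    /// Assumes every vector in the sample has the same length.
    pub fn fit(sample: &[Vec<f32>]) -> Self {
        let dim = sample.first().map_or(0, |v| v.len());
        let mut min = vec![f32::INFINITY; dim];
        let mut max = vec![f32::NEG_INFINITY; dim];
        for v in sample {
            for (i, &x) in v.iter().enumerate() {
                min[i] = min[i].min(x);
                max[i] = max[i].max(x);
            }
        }
        Self { min, max }
    }

    /// Map each f32 component onto 0..=255 within its dimension's range.
    pub fn encode(&self, v: &[f32]) -> Vec<u8> {
        v.iter()
            .enumerate()
            .map(|(i, &x)| {
                let range = (self.max[i] - self.min[i]).max(f32::EPSILON);
                let t = ((x - self.min[i]) / range).clamp(0.0, 1.0);
                (t * 255.0).round() as u8
            })
            .collect()
    }

    /// Approximate reconstruction of the original components.
    pub fn decode(&self, codes: &[u8]) -> Vec<f32> {
        codes
            .iter()
            .enumerate()
            .map(|(i, &c)| self.min[i] + (c as f32 / 255.0) * (self.max[i] - self.min[i]))
            .collect()
    }
}
```

The `min` and `max` vectors are the "extra calibration data" in this sketch: they must exist before the first vector can be encoded, and they have to be stored alongside the codes.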

PolarQuant takes a different route. It stores a vector as a radius plus a sequence of angles, then quantizes those angles into u8 values using fixed boundaries. There is no calibration phase, no warm-up sample, and no waiting around before the index can accept traffic.

“The key insight: angular boundaries are mathematically fixed. There’s no calibration step, no per-block parameters, and no warm-up phase before the index is ready.”
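The article does not spell out the exact transform, so the sketch below uses standard hyperspherical coordinates as a stand-in: one radius, then angles quantized against fixed intervals. The `PolarCode` type and the `encode`/`decode` functions are hypothetical, and FerresDB's real encoding may differ.

```rust
use std::f32::consts::PI;

/// A vector stored as its length plus quantized angles (hypothetical layout).
pub struct PolarCode {
    pub radius: f32,
    pub angles: Vec<u8>,
}

/// Quantize `x` from the fixed interval [lo, hi] onto 0..=255.
fn quantize(x: f32, lo: f32, hi: f32) -> u8 {
    (((x - lo) / (hi - lo)).clamp(0.0, 1.0) * 255.0).round() as u8
}

/// Inverse of `quantize`, up to rounding error.
fn dequantize(c: u8, lo: f32, hi: f32) -> f32 {
    lo + (c as f32 / 255.0) * (hi - lo)
}

pub fn encode(v: &[f32]) -> PolarCode {
    let n = v.len();
    assert!(n >= 2, "need at least two dimensions");
    // tail[i] is the norm of v[i..]; tail[0] is the full radius.
    let mut tail = vec![0.0f32; n + 1];
    for i in (0..n).rev() {
        tail[i] = (tail[i + 1] * tail[i + 1] + v[i] * v[i]).sqrt();
    }
    let mut angles = Vec::with_capacity(n - 1);
    // All angles except the last lie in [0, PI]: a boundary known in advance.
    for i in 0..n - 2 {
        angles.push(quantize(tail[i + 1].atan2(v[i]), 0.0, PI));
    }
    // The last angle carries the sign of the final component: [-PI, PI].
    angles.push(quantize(v[n - 1].atan2(v[n - 2]), -PI, PI));
    PolarCode { radius: tail[0], angles }
}

pub fn decode(code: &PolarCode) -> Vec<f32> {
    let n = code.angles.len() + 1;
    let mut out = Vec::with_capacity(n);
    let mut scale = code.radius; // radius times the product of sines so far
    for (i, &c) in code.angles.iter().enumerate() {
        let a = if i + 2 < n {
            dequantize(c, 0.0, PI)
        } else {
            dequantize(c, -PI, PI)
        };
        out.push(scale * a.cos());
        scale *= a.sin();
    }
    out.push(scale);
    out
}
```

Nothing in `encode` depends on other vectors. The intervals `[0, PI]` and `[-PI, PI]` hold for any input, which is why a scheme like this needs no sampling pass before the first insert.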

That design choice is easy to appreciate if you have ever shipped a system that looks fine in benchmarks but gets awkward during startup. Immediate usability is a practical win, especially in environments where collections get created on demand.

FerresDB also added a Criterion benchmark called quantization_comparison that measures build time, search latency, recall@10, and memory footprint at dimensions 128 and 384. The project’s own conclusion is refreshingly honest: PolarQuant initializes faster, while SQ8 can hold a slight accuracy edge at higher dimensions because its calibration adapts to the data distribution.
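For readers who have not computed recall@10 by hand, it is the share of the true top 10 neighbors that the approximate search also returned. A minimal sketch follows; the `recall_at_k` function is illustrative and is not taken from the FerresDB benchmark.

```rust
use std::collections::HashSet;

/// recall@k: fraction of the exact top-k ids that also appear in the
/// approximate top-k. Both slices are assumed to be sorted best-first.
pub fn recall_at_k(exact: &[u64], approx: &[u64], k: usize) -> f64 {
    let k = k.min(exact.len());
    if k == 0 {
        return 0.0;
    }
    let truth: HashSet<u64> = exact.iter().take(k).copied().collect();
    let hits = approx
        .iter()
        .take(k)
        .filter(|id| truth.contains(id))
        .count();
    hits as f64 / k as f64
}
```

In a quantization comparison, `exact` would come from a brute-force search over the original f32 vectors and `approx` from the compressed index.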

- SQ8 uses per-dimension calibration and stores extra parameters

- PolarQuant uses fixed angular boundaries and skips calibration

- PolarQuant is ready immediately after vectors are ingested

- SQ8 can be slightly more accurate at higher dimensions

That is the kind of tradeoff production teams actually care about. A method that is, say, 2% better on recall but adds startup friction may be the wrong fit for ephemeral workloads, while a faster-starting format can be a better choice for multi-tenant systems that create and destroy collections often.

Auto-tuning HNSW instead of freezing ef_search

FerresDB’s FerresEngine now adjusts HNSW’s ef_search automatically. That parameter controls the recall-latency tradeoff during search: higher values improve recall, but they also increase CPU work.

The implementation is intentionally simple. Every 60 seconds, the server checks the P95 latency for each collection and nudges ef_search up or down depending on whether the system has headroom. If latency is low, it raises the setting. If latency climbs, it backs off.

This is a small detail with big operational implications. Most teams set HNSW parameters once and then live with the result, even as traffic patterns shift. FerresDB is trying to adapt the index to the workload instead of forcing the workload to fit a fixed tuning choice.

- Latency is sampled from query_stats every 60 seconds

- ef_search increases when P95 latency leaves headroom

- ef_search decreases when CPU pressure rises

- The current value is exposed in /api/v1/collections/{name}/stats

There is also a clear product decision here: the tuner is conservative. It is a proportional controller, not a full PID loop. That means the system prefers stability over chasing the last few points of recall. For a database, that is usually the right bias.
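A proportional controller of this kind fits in a few lines. The sketch below is an assumption about how one tuning tick could work: the `TunerConfig` fields, the target latency, the gain, and the bounds are all hypothetical and do not come from FerresDB.

```rust
/// Hypothetical tuner settings; names and values are illustrative only.
pub struct TunerConfig {
    /// P95 latency the tuner tries to stay near.
    pub target_p95_ms: f64,
    /// Fraction of the relative error applied on each tick.
    pub gain: f64,
    pub min_ef: usize,
    pub max_ef: usize,
}

/// One tuning tick: returns the next ef_search for a collection.
pub fn next_ef_search(current: usize, observed_p95_ms: f64, cfg: &TunerConfig) -> usize {
    // Positive error means headroom (latency under target);
    // negative error means the collection is over budget.
    let error = (cfg.target_p95_ms - observed_p95_ms) / cfg.target_p95_ms;
    // Proportional term only: no integral or derivative memory.
    let delta = cfg.gain * error * current as f64;
    let next = (current as f64 + delta).round();
    next.clamp(cfg.min_ef as f64, cfg.max_ef as f64) as usize
}
```

With a target of 50 ms and a gain of 0.2, an observed P95 of 25 ms moves ef_search from 100 to 110, while an observed P95 of 100 ms moves it from 100 to 80. The clamp keeps a single bad minute from driving the setting to an extreme.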

Recovery, reranking, and the parts teams forget

Two of the most production-minded additions are point-in-time recovery and cross-encoder reranking. The first is about fixing mistakes. The second is about improving result quality when a fast first-pass retrieval is not enough.

PostgreSQL-style point-in-time recovery is now available through FerresDB’s WAL and snapshot system. Each snapshot stores its timestamp, and restore logic replays WAL entries up to the chosen time. That means you can recover from a bad bulk upsert or an accidental delete without rolling all the way back to the last full backup.
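The restore logic can be sketched as "pick the newest snapshot at or before the target, then replay later WAL entries up to the target." The types below (`Snapshot`, `WalEntry`, `WalOp`) and the integer timestamps are assumptions for illustration, not FerresDB's on-disk format.

```rust
use std::collections::BTreeMap;

#[derive(Clone, Debug, PartialEq)]
pub enum WalOp {
    Upsert { id: u64, vector: Vec<f32> },
    Delete { id: u64 },
}

#[derive(Clone, Debug)]
pub struct WalEntry {
    pub ts: u64,
    pub op: WalOp,
}

#[derive(Clone, Debug)]
pub struct Snapshot {
    pub ts: u64,
    pub points: BTreeMap<u64, Vec<f32>>,
}

/// Rebuild the collection as it looked at `target_ts`.
/// Assumes `wal` is ordered by timestamp. Returns None when no snapshot
/// is old enough to serve as a base.
pub fn restore_to(
    snapshots: &[Snapshot],
    wal: &[WalEntry],
    target_ts: u64,
) -> Option<BTreeMap<u64, Vec<f32>>> {
    // Newest snapshot taken at or before the target time.
    let base = snapshots
        .iter()
        .filter(|s| s.ts <= target_ts)
        .max_by_key(|s| s.ts)?;
    let mut state = base.points.clone();
    // Replay only entries written after the snapshot and up to the target.
    for entry in wal.iter().filter(|e| e.ts > base.ts && e.ts <= target_ts) {
        match &entry.op {
            WalOp::Upsert { id, vector } => {
                state.insert(*id, vector.clone());
            }
            WalOp::Delete { id } => {
                state.remove(id);
            }
        }
    }
    Some(state)
}
```

If an accidental delete landed at timestamp 120, restoring to 115 replays everything before it and stops, so the deleted point is still present.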

For search quality, FerresDB added native reranking through ONNX Runtime. The flow is straightforward: fetch a larger candidate set from HNSW, score those results with a cross-encoder, then return the top matches. The code can load models like BGE-Reranker when exported to ONNX, and the API reports rerank latency so teams can see the cost.

- PITR restores to a selected timestamp using snapshot plus WAL replay

- /api/v1/admin/restore/points lists recovery points

- Reranking fetches 5× the final limit before scoring

- ONNX Runtime adds model execution without a separate Python service

That 5× over-fetch is a sensible default. It gives the reranker enough candidates to improve ranking quality without turning every query into an expensive full scan. For RAG systems, this is often the difference between “good enough” retrieval and answers that feel grounded.
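The retrieve-then-rerank flow can be sketched without any model runtime by passing the retriever and the scorer in as closures. Everything here is hypothetical: `Candidate`, `search_with_rerank`, and the `OVERFETCH` constant are illustrative names, and the scoring closure merely stands in for a cross-encoder served through ONNX Runtime.

```rust
#[derive(Clone, Debug, PartialEq)]
pub struct Candidate {
    pub id: u64,
    pub text: String,
}

/// How many candidates to fetch per requested result.
pub const OVERFETCH: usize = 5;

/// Fetch `limit * OVERFETCH` candidates, score each against the query,
/// and keep the `limit` best. `retrieve` stands in for the first-pass
/// index search and `score` for the cross-encoder.
pub fn search_with_rerank<R, S>(
    query: &str,
    limit: usize,
    retrieve: R,
    score: S,
) -> Vec<(Candidate, f32)>
where
    R: Fn(&str, usize) -> Vec<Candidate>,
    S: Fn(&str, &str) -> f32,
{
    let candidates = retrieve(query, limit * OVERFETCH);
    let mut scored: Vec<(Candidate, f32)> = candidates
        .into_iter()
        .map(|c| {
            let s = score(query, &c.text);
            (c, s)
        })
        .collect();
    // Highest score first; total_cmp gives a total order even with NaN.
    scored.sort_by(|a, b| b.1.total_cmp(&a.1));
    scored.truncate(limit);
    scored
}
```

The cost model is visible in the signature: the scorer runs once per candidate, so a limit of 10 means 50 cross-encoder evaluations per query under this default.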

Multi-tenant controls, graph traversal, and distributed storage

FerresDB also tightened the multi-tenant story. Namespace allowances let API keys restrict access to specific namespaces, while physical namespace isolation stores each namespace in its own directory when enabled. That split between authorization and storage is a smart one, because security policy and data layout do not always want the same solution.
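The split between authorization and storage can be pictured as two independent functions. This is a sketch under assumptions: the `ApiKey` struct, the meaning of an absent allow-list, and the `namespaces` directory layout are hypothetical. Only the `allowed_namespaces` name comes from FerresDB.

```rust
use std::collections::HashSet;
use std::path::{Path, PathBuf};

/// Hypothetical API key record.
pub struct ApiKey {
    /// None is treated here as "no namespace restriction" (an assumption).
    pub allowed_namespaces: Option<HashSet<String>>,
}

impl ApiKey {
    /// Authorization: may this key touch the given namespace?
    pub fn can_access(&self, namespace: &str) -> bool {
        match &self.allowed_namespaces {
            None => true,
            Some(allowed) => allowed.contains(namespace),
        }
    }
}

/// Storage: where a namespace's data lives on disk.
/// With physical isolation off, all namespaces share the collection directory.
pub fn namespace_dir(
    root: &Path,
    collection: &str,
    namespace: &str,
    physical_isolation: bool,
) -> PathBuf {
    let base = root.join(collection);
    if physical_isolation {
        base.join("namespaces").join(namespace)
    } else {
        base
    }
}
```

Neither function calls the other, which is the point: a deployment can enforce allow-lists without changing its data layout, or isolate directories without issuing scoped keys. A real implementation would also need to validate namespace names before using them as path components.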

The project also added graph traversal on top of vector search. Points can link to other points, those links are persisted in the WAL, and a breadth-first traversal can restrict search to a connected subgraph. In practice, that is useful for citation graphs, related-document exploration, or any dataset where semantic similarity should stay inside a known cluster.
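A rough sketch of that idea: collect the ids reachable from a starting point with breadth-first search, then search only within that set. The function names and the `max_depth` parameter are hypothetical, and a brute-force dot-product scan stands in for the HNSW search.

```rust
use std::collections::{HashMap, HashSet, VecDeque};

/// Breadth-first traversal over point links, up to `max_depth` hops.
/// Returns every reachable id, including `start`.
pub fn reachable(links: &HashMap<u64, Vec<u64>>, start: u64, max_depth: usize) -> HashSet<u64> {
    let mut seen = HashSet::from([start]);
    let mut queue = VecDeque::from([(start, 0usize)]);
    while let Some((id, depth)) = queue.pop_front() {
        if depth == max_depth {
            continue; // do not expand beyond the hop limit
        }
        for &next in links.get(&id).into_iter().flatten() {
            // insert returns true only the first time an id is seen
            if seen.insert(next) {
                queue.push_back((next, depth + 1));
            }
        }
    }
    seen
}

/// Top-k by dot product, restricted to ids in `allowed`.
/// Brute force here; a real engine would filter inside the index search.
pub fn search_in_subgraph(
    points: &HashMap<u64, Vec<f32>>,
    allowed: &HashSet<u64>,
    query: &[f32],
    k: usize,
) -> Vec<(u64, f32)> {
    let mut hits: Vec<(u64, f32)> = points
        .iter()
        .filter(|(id, _)| allowed.contains(*id))
        .map(|(id, v)| (*id, v.iter().zip(query).map(|(a, b)| a * b).sum::<f32>()))
        .collect();
    hits.sort_by(|a, b| b.1.total_cmp(&a.1));
    hits.truncate(k);
    hits
}
```

A point that scores well on similarity but sits outside the connected set never appears in the results, which is the behavior you want when similarity should stay inside a known cluster.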

Then there is the distributed foundation. FerresDB now has a Raft-based layer for replicated state, which is the sort of feature that turns a single-node search engine into something you can actually plan around in a cluster. The implementation details matter less than the signal: the project is preparing for coordination, replication, and failure handling as first-class concerns.

- Namespace access can be limited with allowed_namespaces

- Physical isolation stores each namespace in separate files

- Graph traversal uses BFS over linked points

- Raft support lays the groundwork for distributed state replication

That combination is what makes this update feel serious. Compression saves memory, auto-tuning smooths latency, PITR protects against operator mistakes, reranking improves relevance, and Raft points toward a multi-node future. Those are not flashy demo features. They are the pieces teams ask for after the first month in production.

What this says about Rust databases right now

FerresDB is interesting because it shows how far Rust has moved in infrastructure work. A few years ago, a vector database in Rust might have been an experiment focused on speed alone. This one is trying to act like a real service with recovery, observability, access control, and operational controls.

It also reflects where vector search is headed in practice. The hard part is no longer “can we compute nearest neighbors?” The hard part is “can we keep recall stable, keep memory under control, recover from mistakes, and fit into a multi-tenant system without duct tape?” FerresDB is answering those questions one feature at a time.

My read is simple: if the project keeps shipping at this pace, the next big checkpoint will be cluster operations, not search quality. The real test will be whether Raft-backed replication and namespace isolation hold up under noisy tenants and rolling restarts. That is where a production database earns its reputation.

If you are evaluating a vector store for RAG or semantic search, this update is worth studying closely. The useful question is not whether FerresDB has a long feature list. It is whether those features map to the failure modes you already know you will hit.

For more Rust and AI infrastructure coverage, see our related write-up on Rust AI infrastructure patterns. If FerresDB keeps adding the same kind of operational depth, the next release may matter less for what it searches and more for how confidently it can be run.
