MLOps in 2026: Architecture and Strategy Guide

MLOps in 2026 centers on governance, LLMOps convergence, and cost control as enterprises move AI from pilots to production.

MLOps in 2026 focuses on governed production AI, not just model training.

By 2026, MLOps is no longer a niche discipline for data teams. The article’s headline numbers make the problem plain: 87% of enterprises have AI in production, yet fewer than 40% scale beyond pilots. That gap is where most AI programs lose momentum, money, and trust.

| Metric | Value | Why it matters |
|---|---|---|
| Enterprises with AI in production | 87% | AI is already operational, not experimental |
| Enterprises scaling beyond pilots | <40% | Most teams stall before broad rollout |
| AI Act fine ceiling | 6% of global revenue | Governance is now a financial risk |
| Model sprawl found in one audit | 247 production models | Inventory and ownership matter |
| Documented models in that audit | 89 | Undocumented AI creates compliance exposure |

What MLOps means in 2026


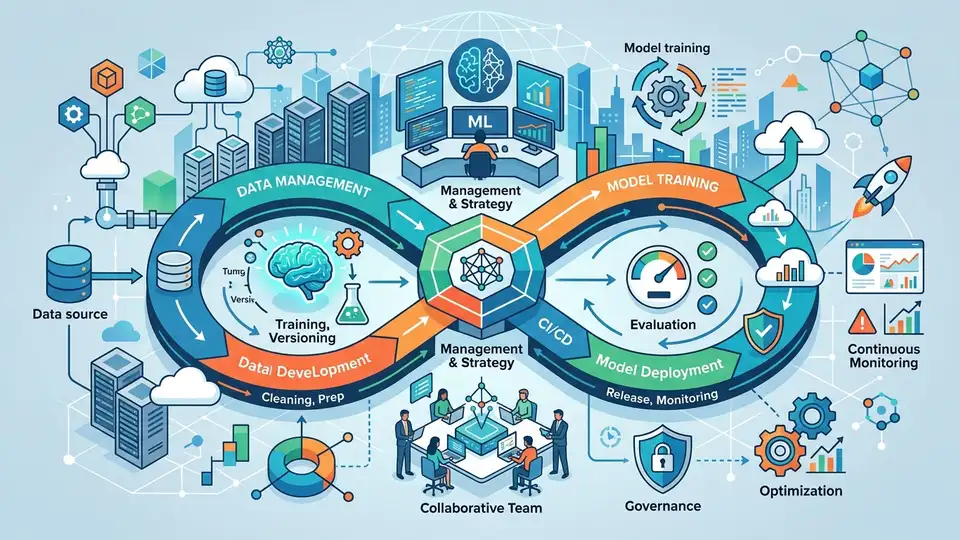

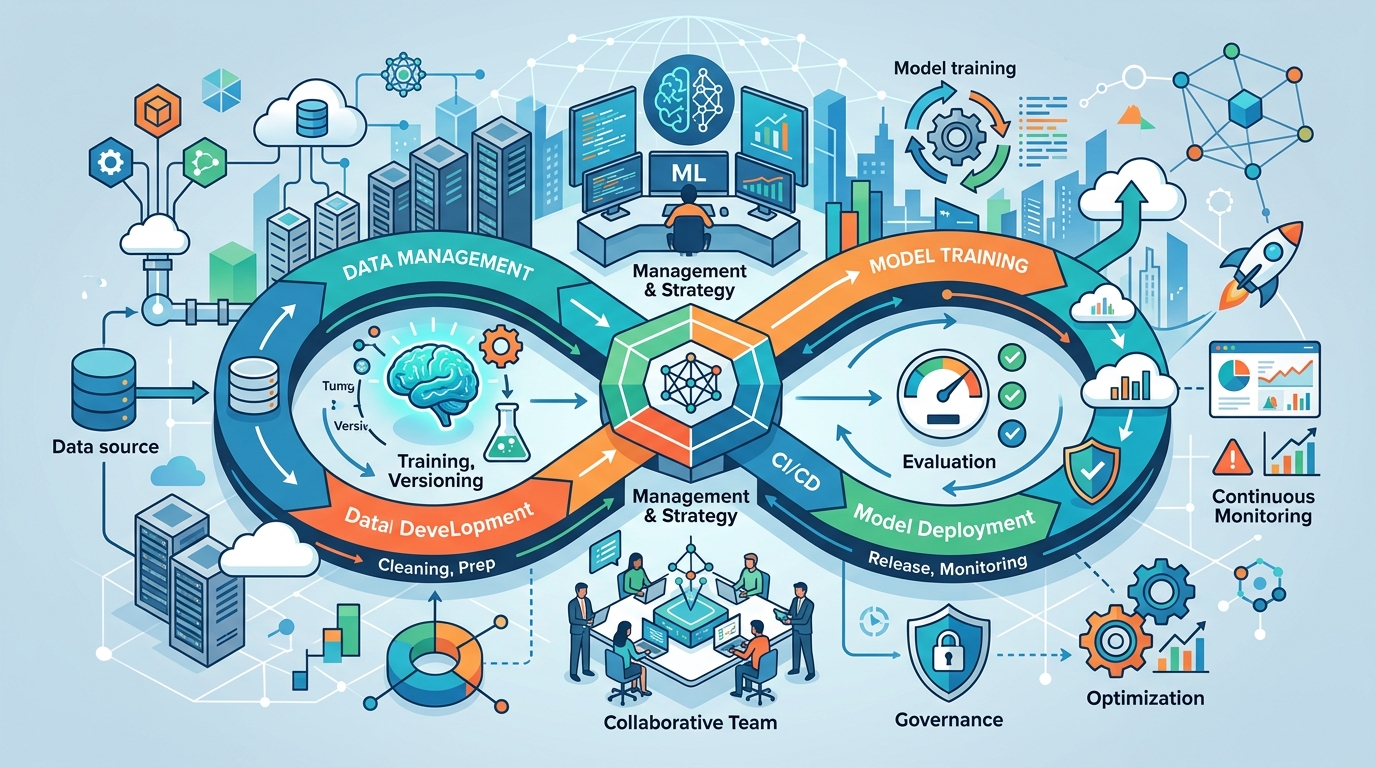

MLOps, or Machine Learning Operations, is the discipline that keeps ML systems reliable after they leave the notebook. It covers building, deploying, monitoring, retraining, and governing models, which is a much bigger job than training a model that scores well in a demo.

The article makes a point that still gets ignored in many teams: the model is only about 5–10% of the system. The rest is data validation, infrastructure, observability, governance, and feedback loops. If those pieces are weak, the model will fail in production even if the benchmark looked great.

That framing matters because it changes how teams budget and hire. A company that treats AI as a modeling problem will underinvest in the parts that keep predictions useful after launch.

- Data quality is continuous, not a one-time cleanup task.

- Monitoring must track infrastructure, data drift, model quality, and business impact.

- Governance needs to be part of the workflow, not a review layer added later.
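One way to make the data drift item concrete is the Population Stability Index (PSI), which compares how a feature is distributed in a reference sample versus live traffic. The sketch below is a minimal pure-Python version, not something the article prescribes. The function name `psi`, the ten equal-width bins, and the 0.25 alert threshold are illustrative assumptions (0.25 is a common rule of thumb for a significant shift).

```python
import math
from typing import List, Sequence


def psi(expected: Sequence[float], actual: Sequence[float], bins: int = 10) -> float:
    """Population Stability Index between a reference and a live sample.

    Assumes both samples are non-empty. Bin edges come from the reference
    sample; live values outside that range are clamped to the edge bins.
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the reference is constant

    def fractions(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # A small floor avoids log(0) and division by zero for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = fractions(expected), fractions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Hypothetical data: a feature whose live values shifted upward by 0.5.
reference = [i / 100 for i in range(100)]
live = [x + 0.5 for x in reference]

DRIFT_THRESHOLD = 0.25  # assumed rule-of-thumb cutoff
if psi(reference, live) > DRIFT_THRESHOLD:
    print("drift alert: investigate before trusting predictions")
```

Running a check like this on a schedule, per feature, is what turns data quality into a continuous task rather than a one-time cleanup.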

How the stack has changed since 2022–2024

The old version of MLOps was mostly pipeline automation. Teams stitched together tools, moved models from training to serving, and hoped the handoff held up. In 2026, that is too narrow.

Modern MLOps looks like end-to-end platform operations. Experiment tracking, feature stores, model registries, drift detection, and policy controls are expected pieces of the stack. The article also points to a bigger shift: one control plane for classical ML, LLMs, and agentic workflows.

That convergence is already visible in the tooling. MLflow tracks experiments and models, Kubeflow handles pipelines, and Databricks blends data engineering with ML workflows. On the serving side, platforms like KServe and Seldon keep models online with less manual glue code.

Why LLMOps and AIOps now overlap with MLOps

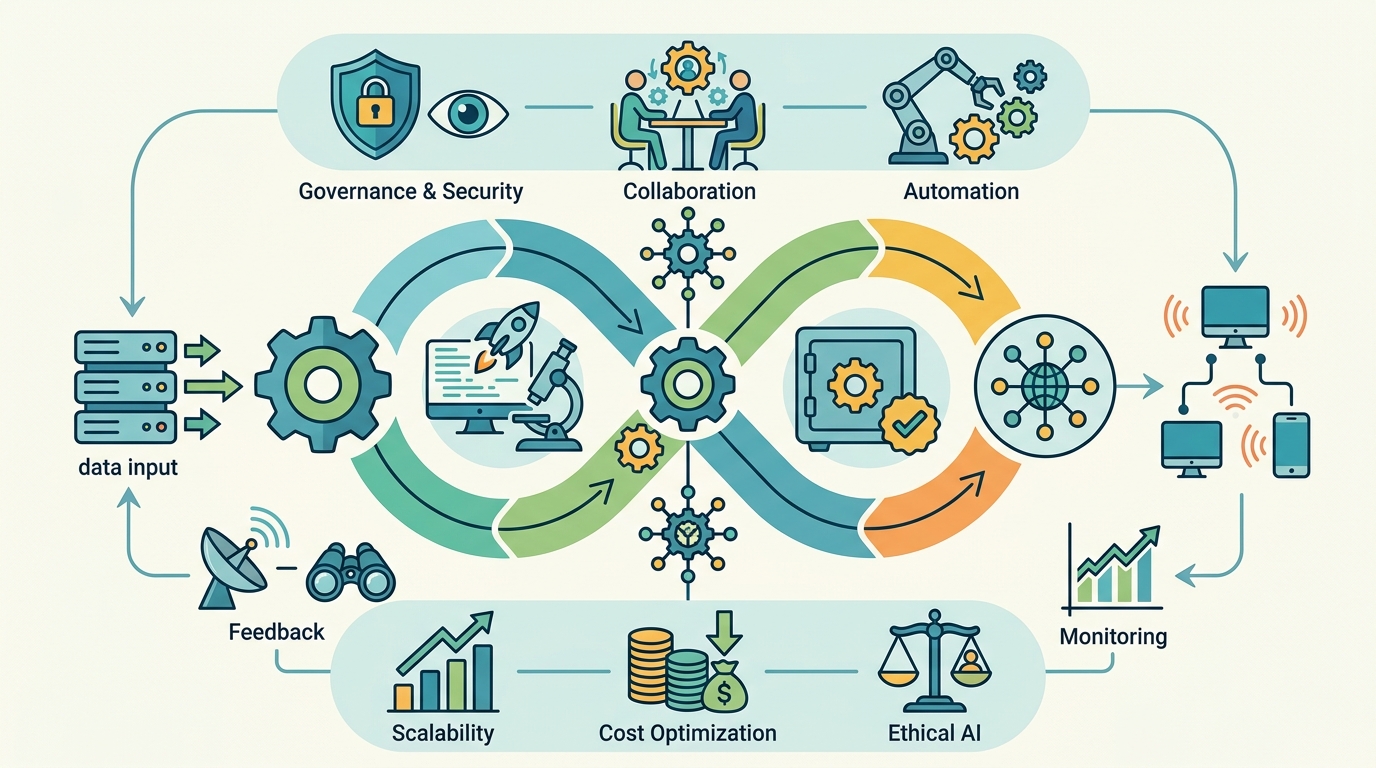

The clean boundaries between MLOps, LLMOps, and AIOps are fading fast. The article’s comparison table is useful because it shows that these domains now share common infrastructure, while still having different failure modes.

MLOps usually deals with structured data, model accuracy, and prediction latency. LLMOps introduces prompt injection, hallucinations, content safety, and retrieval quality. AIOps focuses on logs, traces, incidents, and root-cause analysis. The tools overlap more every quarter, but the risks do not.

“The model is maybe 5–10% of an ML system.”

That line from the article captures the real shift in thinking. In practice, the winning teams are the ones that can run all three disciplines with shared governance and separate controls where needed.

- MLOps cares about drift, accuracy, and inference latency.

- LLMOps cares about safety, prompt leakage, and output quality.

- AIOps cares about incident prediction, anomaly detection, and operational response.

The trends that matter most in 2026

Several trends are reshaping how enterprises build AI systems. Some are technical, while others are about money and regulation. The biggest mistake would be treating them as optional extras.

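The EU AI Act and similar accountability rules are turning auditability into a hard requirement. The article says fines can reach 6% of global revenue (the figure from the Commission's original proposal; the regulation as adopted sets the top tier at 7% of worldwide annual turnover for prohibited practices). Either number is enough to change how legal, security, and platform teams think about AI deployment.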

At the same time, AI spending is becoming a FinOps problem. GPU bills rise fast, especially when teams keep training large models or serving expensive inference workloads without usage controls. The article says disciplined practices like spot instances, distillation, and chargeback can reduce costs by 40–60% versus unmanaged spending.
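To see what the cited 40–60% range and a chargeback model look like in numbers, here is a small arithmetic sketch. The function names, the team names, and the dollar figures are all hypothetical; only the 40–60% band comes from the article. Chargeback here simply splits a shared GPU bill in proportion to GPU-hours used, which is one possible allocation rule among several.

```python
from typing import Dict, Tuple


def savings_band(unmanaged_spend: float, low: float = 0.40, high: float = 0.60) -> Tuple[float, float]:
    """Return (best_case, worst_case) spend after a low..high fractional reduction."""
    return unmanaged_spend * (1 - high), unmanaged_spend * (1 - low)


def chargeback(total_bill: float, gpu_hours_by_team: Dict[str, float]) -> Dict[str, float]:
    """Split a shared bill across teams in proportion to their GPU-hours."""
    total_hours = sum(gpu_hours_by_team.values())
    if total_hours <= 0:
        raise ValueError("no GPU usage recorded; nothing to allocate")
    return {
        team: total_bill * hours / total_hours
        for team, hours in gpu_hours_by_team.items()
    }


# Hypothetical monthly figures for illustration only.
best_case, worst_case = savings_band(100_000)
team_bills = chargeback(60_000, {"search": 300, "fraud": 100})
print(f"managed spend between {best_case:,.0f} and {worst_case:,.0f}")
print(team_bills)
```

The point of the chargeback step is visibility: once each team sees its own share of the bill, usage controls stop being an abstract platform concern.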

- Edge AI is pushing compression, federated learning, and over-the-air updates into the ops stack.

- Autonomous retraining is replacing fixed retraining schedules in some systems.

- Purpose-built observability tools like Arize AI, WhyLabs, and Fiddler AI are filling gaps generic monitoring tools miss.

Where enterprise teams still get stuck

The hardest problems are rarely about model architecture alone. The article points to four recurring blockers: data silos, talent shortages, model sprawl, and inconsistent standards.

Those issues sound familiar because they are. A company may have five definitions of customer lifetime value, a dozen deployment patterns, and no single owner for production inventory. That is how a compliance audit turns up 247 live models when only 89 are documented.

The article also calls out shadow AI, where business teams deploy models on personal accounts because official workflows take too long. That is a process failure, not a policy failure. If compliant paths are slower than workarounds, people will pick the workarounds.

- Data silos need executive sponsorship, not just a new catalog tool.

- Self-service platforms can reduce the need for rare hybrid talent.

- Reference architectures and templates help standardize work without killing experimentation.

What to do if you are building an MLOps strategy

The strategy advice in the article is practical, and it avoids the usual “buy a platform and hope” trap. Start by inventorying all production models, deployment methods, and data sources. If you do not know what is live, you cannot govern it.
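A first inventory pass can be as simple as comparing what is actually serving traffic against what is documented. The sketch below assumes you can export two lists of model identifiers, one from your serving layer and one from your registry or documentation; the function name `audit_inventory` and the ID format are hypothetical. The sample sizes reuse the 247 and 89 figures from the audit mentioned earlier.

```python
from typing import Dict, Iterable, List, Union


def audit_inventory(
    live_ids: Iterable[str], documented_ids: Iterable[str]
) -> Dict[str, Union[List[str], float]]:
    """Compare live model IDs against documented ones."""
    live, documented = set(live_ids), set(documented_ids)
    return {
        # Serving traffic but missing from documentation.
        "undocumented": sorted(live - documented),
        # Documented but no longer live; candidates for cleanup.
        "stale_docs": sorted(documented - live),
        # Share of live models that have documentation.
        "coverage": len(live & documented) / len(live) if live else 1.0,
    }


# Hypothetical IDs sized to match the audit example: 247 live, 89 documented.
live_models = [f"model-{i:03d}" for i in range(247)]
documented_models = live_models[:89]

report = audit_inventory(live_models, documented_models)
print(len(report["undocumented"]), "undocumented models")
print(f"documentation coverage: {report['coverage']:.0%}")
```

With those inputs, 158 of the 247 live models have no documentation, which is the gap a governance program has to close first.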

Next, classify risk. Low-risk analytics models can move faster than systems used for credit, hiring, or medical decisions. That kind of tiering keeps governance proportionate instead of turning every deployment into a committee meeting.
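Risk tiering can start as a small lookup that maps a use case to a tier and a tier to required controls. The sketch below uses only two tiers and takes its high-risk domains from the examples above (credit, hiring, medical). The control names are hypothetical placeholders; a real policy would likely have more tiers and would be set with legal and compliance input.

```python
from enum import Enum
from typing import List


class RiskTier(Enum):
    LOW = "low"
    HIGH = "high"


# Domains named in the text as needing stricter handling.
HIGH_RISK_DOMAINS = {"credit", "hiring", "medical"}

# Hypothetical controls per tier, for illustration only.
CONTROLS = {
    RiskTier.LOW: ["model_card", "basic_monitoring"],
    RiskTier.HIGH: ["model_card", "drift_monitoring", "bias_review", "human_review", "audit_log"],
}


def classify(domain: str) -> RiskTier:
    """Map a use-case domain to a risk tier (anything unlisted defaults to LOW)."""
    return RiskTier.HIGH if domain.strip().lower() in HIGH_RISK_DOMAINS else RiskTier.LOW


def required_controls(tier: RiskTier) -> List[str]:
    """Return a copy of the controls a deployment in this tier must satisfy."""
    return list(CONTROLS[tier])


for domain in ["credit", "marketing analytics"]:
    tier = classify(domain)
    print(domain, "->", tier.value, required_controls(tier))
```

Defaulting unlisted domains to the low tier keeps the fast path fast, but it also means the high-risk list has to be reviewed regularly; some teams may prefer the opposite default.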

Then build around roles, not heroes. Data scientists own model quality. ML engineers own latency and reliability. Platform engineers own developer experience. Data engineers own pipeline quality. One person cannot do all of that well.

For teams choosing tools, the article’s guidance is straightforward: cloud-native platforms work for smaller or single-cloud teams, open-source stacks fit stronger engineering groups, and regulated industries need governance-heavy setups with dedicated monitoring. Most enterprises will end up with a hybrid mix.

If you want a practical benchmark, the article’s maturity model is a good one to copy. Level 3 means productionized ML with CI/CD/CT and drift detection. Level 4 adds a scalable platform with multi-tenant self-service. Level 5 moves toward self-healing pipelines and policy-driven automation.
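The three levels described above can be turned into a rough self-assessment. The sketch below treats each level as a set of capabilities that must all be present, with higher levels building on lower ones. The capability labels are hypothetical names for the items in the paragraph above, and levels 1 and 2 are left out because they are not described here.

```python
from typing import Dict, Optional, Set

# Capabilities each level adds, paraphrased from the maturity model above.
LEVEL_REQUIREMENTS: Dict[int, Set[str]] = {
    3: {"ci_cd_ct", "drift_detection"},
    4: {"multi_tenant_self_service"},
    5: {"self_healing_pipelines", "policy_driven_automation"},
}


def maturity_level(capabilities: Set[str]) -> Optional[int]:
    """Return the highest level whose requirements, and all lower ones, are met.

    Returns None when even Level 3 is not satisfied.
    """
    achieved = None
    for level in sorted(LEVEL_REQUIREMENTS):
        if LEVEL_REQUIREMENTS[level] <= capabilities:
            achieved = level
        else:
            break  # levels are cumulative, so stop at the first gap
    return achieved


# Hypothetical team: has Level 3 basics and a Level 5 feature, but no self-service platform.
team = {"ci_cd_ct", "drift_detection", "self_healing_pipelines", "policy_driven_automation"}
print("maturity level:", maturity_level(team))
```

Because the levels are cumulative, the hypothetical team above is held at Level 3 until it adds multi-tenant self-service, even though it already has Level 5 features.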

What this means for the next 12 months

The clearest takeaway is that MLOps in 2026 is becoming a control system for AI operations, not a set of deployment scripts. The companies that win will treat AI like a product with inventory, ownership, monitoring, and cost controls, not like a one-off data science project.

If your team is still running models through hand-built scripts and checking performance only after users complain, the next step is obvious: inventory what is live, add monitoring before the next deployment, and decide which models need strict governance today. The question is no longer whether your AI works in a notebook, but whether you can prove it works next quarter, under audit, and at a cost the business can keep paying.
