Why Docker’s microVM sandboxes are the right move for AI agents

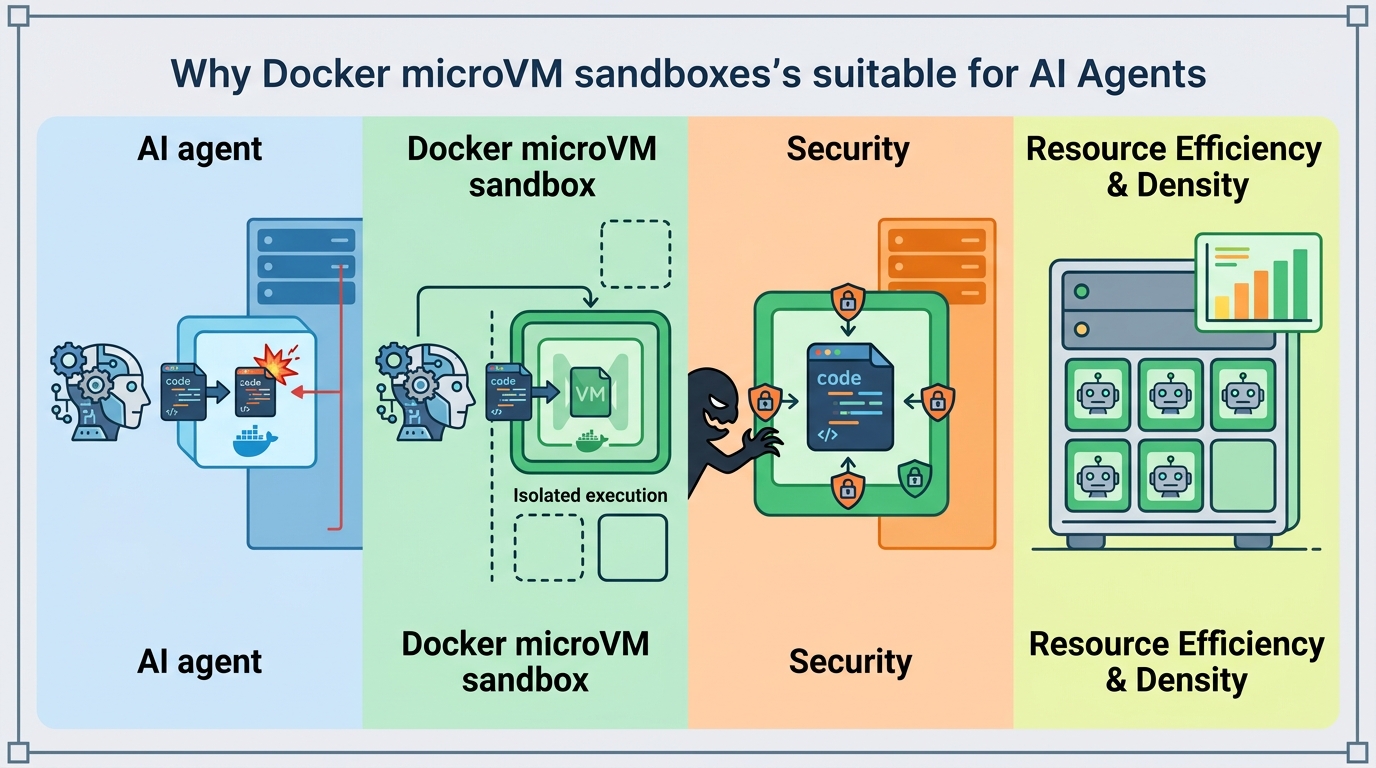

Docker is right to move autonomous AI agents into microVM sandboxes, because containers are not a serious enough boundary for code that runs as root, installs packages, edits files, and spawns more processes on your machine.

That matters now because the new wave of coding agents does more than read code or suggest diffs. Tools like Claude Code and Codex can execute scripts, pull dependencies, touch the filesystem, and even start containers. Once you give an agent that much authority, the old “it’s just a container” comfort blanket stops holding up. A container shares the host kernel. A microVM runs its own guest kernel behind a hypervisor. That is the difference between a bad day in one sandbox and a bad day on the whole machine.

Containers were never the right trust boundary

The first reason Docker’s approach is correct is simple: containers were built for packaging, not for isolating hostile or semi-trusted autonomous code. Namespaces and cgroups isolate processes and limit their resources, but they do not create a separate kernel. If an agent can exploit a kernel bug from inside a container, the whole host is in play. That is an acceptable risk for many app workloads. It is the wrong risk for software that can run arbitrary commands on demand.

We already know where this logic goes in practice. Teams routinely grant agents access to local repos, package managers, and shell commands because that is what makes them useful. The moment you do that, the agent becomes a high-privilege operator, not a passive helper. A dedicated microVM gives each sandbox its own kernel instance, which is the minimum serious boundary for that class of workload. Docker is not overengineering here. It is catching up to the threat model.

Cross-platform support makes the product matter

The second reason this launch matters is that Docker chose a proprietary VMM rather than Firecracker, so the feature works on macOS and Windows as well as Linux. That is not a minor implementation detail. It is the difference between a niche infra trick and a tool developers can actually use on the laptops where agent workflows begin.

Firecracker is a strong fit for server-side isolation, but it depends on Linux KVM and runs only on Linux hosts. Most developers are not working in Linux-only environments. They are on MacBooks and Windows machines, and they want a local sandbox that behaves consistently across those systems. By building its own VMM, Docker is making a deliberate bet that portability beats purity. For a developer tool, that is the correct bet. A security boundary that only works in the data center is not enough when the risky code starts on the desktop.

Sandbox Kits turn isolation into something repeatable

The third reason Docker’s move is smart is that it pairs runtime isolation with declarative setup through Sandbox Kits. YAML that declares tools, environment variables, injected credentials, allowed network domains, dropped-in files, and startup commands is the right abstraction for ephemeral agent environments. It makes the sandbox reproducible instead of handcrafted.
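
To make "declarative setup" concrete, here is a minimal sketch of what a kit-style declaration might look like once parsed, plus a small lint pass a reviewer could run on it. The field names (`tools`, `env`, `credentials`, `allowed_domains`, `files`, `startup`) simply mirror the categories listed above; they are hypothetical and are not Docker's actual Sandbox Kit schema. The sketch assumes the YAML has already been loaded into a plain dict, for example with PyYAML's `yaml.safe_load`.

```python
from dataclasses import dataclass, field

# Hypothetical keys mirroring the categories a kit declares.
# This is NOT Docker's Sandbox Kit schema, just an illustration.
KNOWN_KEYS = {"tools", "env", "credentials", "allowed_domains", "files", "startup"}


@dataclass(frozen=True)
class SandboxSpec:
    tools: tuple[str, ...] = ()
    env: dict[str, str] = field(default_factory=dict)
    credentials: tuple[str, ...] = ()  # names of secrets to inject, never literal values
    allowed_domains: tuple[str, ...] = ()
    files: dict[str, str] = field(default_factory=dict)  # path inside sandbox -> contents
    startup: tuple[str, ...] = ()


def from_dict(raw: dict) -> SandboxSpec:
    """Build a spec from already-parsed config, rejecting unknown keys (likely typos)."""
    unknown = set(raw) - KNOWN_KEYS
    if unknown:
        raise ValueError(f"unknown keys: {sorted(unknown)}")
    return SandboxSpec(
        tools=tuple(raw.get("tools", ())),
        env=dict(raw.get("env", {})),
        credentials=tuple(raw.get("credentials", ())),
        allowed_domains=tuple(raw.get("allowed_domains", ())),
        files=dict(raw.get("files", {})),
        startup=tuple(raw.get("startup", ())),
    )


def lint(spec: SandboxSpec) -> list[str]:
    """Flag settings a reviewer would likely want to question."""
    problems = []
    for domain in spec.allowed_domains:
        if domain.strip() == "*":
            problems.append(f"network allowlist is wide open: {domain!r}")
    for name in spec.credentials:
        if name in spec.env:
            problems.append(f"credential {name!r} is also set as a plain env var")
    return problems
```

Rejecting unknown keys is a deliberate choice in this sketch: a misspelled `allowed_domains` would otherwise silently fall back to an empty allowlist, and the reviewer would never see the mistake.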

That reproducibility matters because agent workflows fail in messy, non-obvious ways. One sandbox has the right CLI tool, another lacks a credential, a third can reach the wrong domain and leak data. A kit turns those details into versioned configuration. In practice, that means engineers can review, share, and standardize agent environments the same way they already do with infrastructure-as-code. The combination of microVM isolation and declarative kits is what makes Docker’s launch more than a security feature. It is a workflow product.
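
Versioned configuration also makes drift cheap to detect. Here is a minimal sketch, assuming a kit has already been parsed into a plain dict. It hashes a canonical JSON encoding, so reordering keys does not register as a change, and it lists which top-level settings differ between two versions. `fingerprint` and `changed_keys` are hypothetical helpers, not part of any Docker tooling.

```python
import hashlib
import json

_MISSING = object()  # sentinel so "absent" and "present but None" are treated as different


def fingerprint(config: dict) -> str:
    """Stable SHA-256 of a config: key order and whitespace do not affect it."""
    canonical = json.dumps(config, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def changed_keys(old: dict, new: dict) -> list[str]:
    """Top-level keys that were added, removed, or modified between two versions."""
    return sorted(
        k for k in old.keys() | new.keys()
        if old.get(k, _MISSING) != new.get(k, _MISSING)
    )
```

Pinning an agent run to a fingerprint lets a team answer "which environment did this run use?" after the fact. `changed_keys` gives a reviewer a short list to focus on, such as a newly added domain in the network allowlist.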

The counter-argument

The strongest objection is that microVMs add overhead, complexity, and operational friction. Containers start fast, use fewer resources, and fit naturally into existing tooling. A team running many short-lived sandboxes may worry that microVMs will slow iteration, increase memory pressure, and complicate debugging. That critique is fair. If the workload is low-risk and tightly controlled, containers remain the cheaper option.

There is also a legitimate concern that stronger isolation can create a false sense of safety. If teams inject credentials too freely, allow broad network access, or treat the sandbox as a license to run anything, the boundary does not solve the real problem. Security is still about policy, secrets hygiene, and least privilege. A microVM does not fix bad governance.

Even so, the counter-argument does not beat the launch. The point of a sandbox for autonomous agents is not to optimize for the cheapest possible runtime. It is to constrain software that can make decisions, execute code, and expand its own activity surface. That is exactly the kind of workload that justifies paying some overhead. Yes, teams still need disciplined secrets handling and network controls. But that is an argument for better configuration, not for weaker isolation.

What to do with this

If you are an engineer, stop treating agent execution like a normal dev script and start treating it as an untrusted workload. Put autonomous agents behind a stronger boundary, define their tools and credentials declaratively, and audit what they can reach over the network. If you are a PM or founder building agent products, design for reproducibility and revocation from day one. The winning platform will not be the one that lets agents do the most. It will be the one that lets teams trust what those agents can do.
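
Auditing network reach can start as simply as comparing the hosts an agent contacted against the declared allowlist. This is a minimal sketch under stated assumptions, not Docker's matching logic. It assumes you can obtain a list of requested hostnames, for example from an egress proxy log. `host_allowed` and `audit` are hypothetical names. The sketch also treats subdomains of an allowed domain as allowed, which is a policy choice some teams will want to tighten.

```python
def host_allowed(host: str, allowed: list[str]) -> bool:
    """True if host equals an allowed domain or is a subdomain of one.

    Matching is on whole DNS labels, so "evilpypi.org" does not match "pypi.org".
    Case and a trailing root dot are normalized away before comparing.
    """
    host = host.lower().rstrip(".")
    for domain in allowed:
        domain = domain.lower().rstrip(".")
        if host == domain or host.endswith("." + domain):
            return True
    return False


def audit(requested_hosts: list[str], allowed: list[str]) -> list[str]:
    """Return the distinct hosts the agent tried to reach outside the allowlist."""
    return sorted({h for h in requested_hosts if not host_allowed(h, allowed)})
```

The leading dot in `"." + domain` is the important detail. A naive `host.endswith("pypi.org")` would also accept `evilpypi.org`, which is exactly the kind of quiet leak an allowlist is supposed to prevent.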