Why Qdrant Cloud’s enterprise push matters for AI retrieval

Qdrant Cloud’s new GPU indexing, Multi-AZ clusters, and audit logs are the right move for production AI.

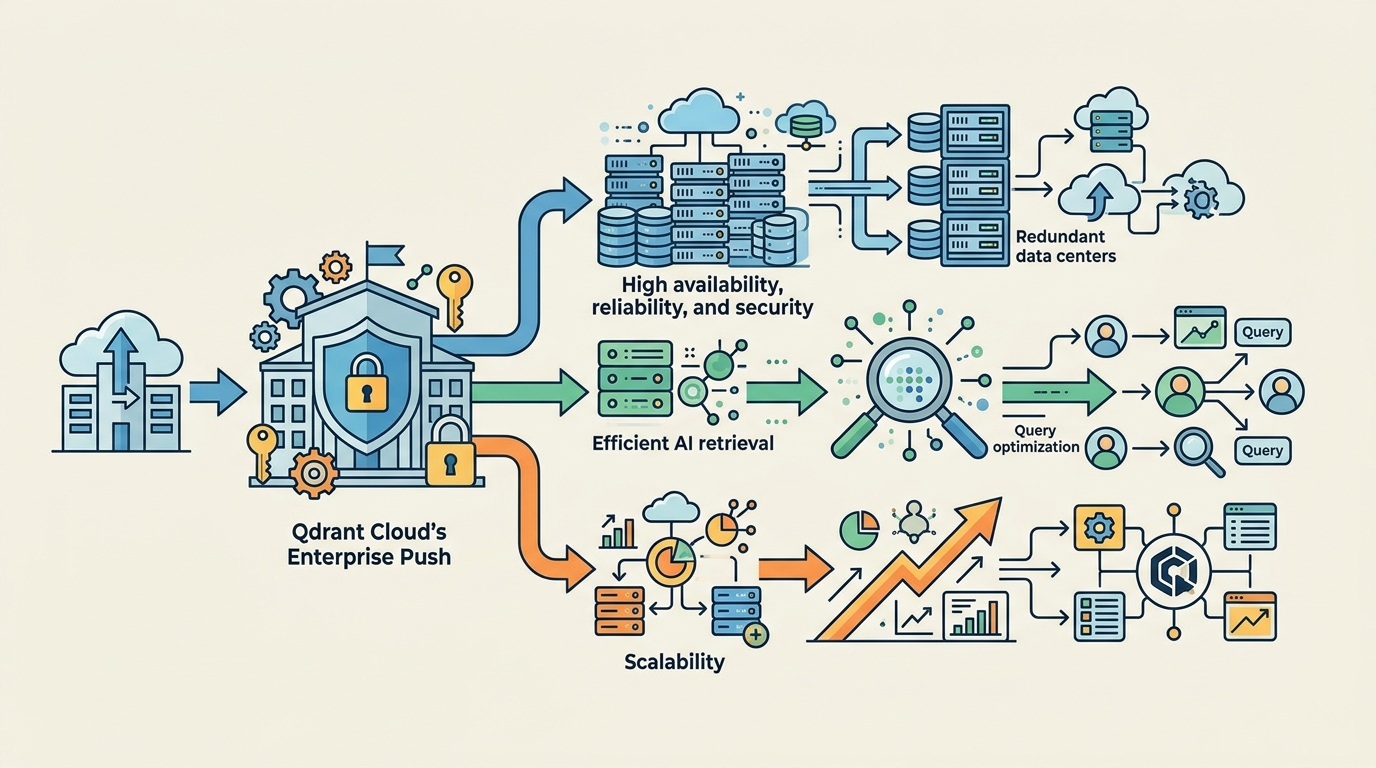

Qdrant Cloud is adding enterprise features that make AI retrieval faster, safer, and easier to govern.

Qdrant Cloud is right to treat vector search like production infrastructure, not a demo feature. The company’s new GPU-accelerated indexing, Multi-AZ clusters, and audit logging are not cosmetic upgrades; they are the minimum set of controls that turn retrieval-augmented generation into something enterprises can trust at scale. If an AI system depends on fresh context, it needs low-latency retrieval, uninterrupted availability, and a paper trail for every query and delete.

First, performance is the product

AI systems do not fail gracefully when retrieval is slow. A chatbot that takes seconds to fetch context feels broken, and an agent that stalls on every lookup becomes unusable. Qdrant’s GPU-accelerated indexing is the right answer because the bottleneck in a retrieval pipeline is not only model inference; it is also how quickly embeddings can be indexed and searched. The company is betting that enterprises will pay for infrastructure that keeps similarity search close to human response time, and that bet is correct.

The evidence is already visible in how RAG systems are being deployed. Teams are wiring vector databases into customer support, internal knowledge search, and agent workflows where every extra 100 milliseconds compounds across a conversation. In those settings, brute-force retrieval, which compares each query against every stored vector, is dead on arrival at scale. By pushing indexing onto GPUs, Qdrant is targeting the part of the stack that actually determines whether AI feels responsive or sluggish. That is where differentiation lives.
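
To make the brute-force point concrete, here is a minimal exact cosine search in plain Python. It scores the query against every stored vector, so the work per query grows as roughly N * d multiply-adds for N vectors of dimension d. Approximate graph indexes such as HNSW, which Qdrant documents as its vector index, exist to avoid that full scan. This is a toy sketch with synthetic data; the function names are illustrative and not part of any Qdrant API.

```python
import math
import random

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def brute_force_top_k(query, vectors, k):
    """Exact search: score the query against every stored vector (O(N * d))."""
    scored = [(cosine(query, v), i) for i, v in enumerate(vectors)]
    scored.sort(reverse=True)  # highest similarity first
    return [i for _, i in scored[:k]]

def multiply_adds_per_query(n_vectors, dim):
    """Rough dot-product work for one brute-force query, ignoring norm computation."""
    return n_vectors * dim

if __name__ == "__main__":
    random.seed(0)
    dim = 8  # toy dimension; production embeddings are usually far larger
    corpus = [[random.gauss(0, 1) for _ in range(dim)] for _ in range(1_000)]
    query = corpus[42]
    print(brute_force_top_k(query, corpus, k=3))  # index 42 ranks first
    # Work grows linearly with corpus size, e.g. 10M vectors of dimension 1536:
    print(multiply_adds_per_query(10_000_000, 1536))  # 15360000000
```

Doubling the corpus doubles the per-query cost, which is why an index that skips most of the comparisons matters more than raw arithmetic speed once collections grow.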

Second, resilience and compliance are now table stakes

Multi-AZ clusters matter because AI retrieval is no longer a sidecar service. When retrieval goes down, the application goes down with it. Qdrant’s design, which keeps copies across three availability zones and avoids manual failover, addresses the real operational expectation of enterprise buyers: reads and writes should continue even if a node fails. That is the standard cloud teams already demand from databases that hold customer records or transaction state, and vector data has reached the same level of importance.
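
One way to see why three zones is a common choice: under a generic majority-quorum model, an operation succeeds once a strict majority of replicas acknowledges it. That model is an assumption used here for illustration, not a description of Qdrant’s internal replication protocol, and the helper names below are hypothetical. With one replica per zone across three zones, losing any single zone still leaves a majority.

```python
def majority(replicas: int) -> int:
    """Smallest number of replicas that forms a strict majority."""
    return replicas // 2 + 1

def survives_zone_loss(replicas_per_zone: dict[str, int], failed_zone: str) -> bool:
    """True if the replicas outside the failed zone still form a majority."""
    total = sum(replicas_per_zone.values())
    remaining = total - replicas_per_zone.get(failed_zone, 0)
    return remaining >= majority(total)

def tolerates_any_single_zone_loss(replicas_per_zone: dict[str, int]) -> bool:
    """Check every zone as the one that fails."""
    return all(survives_zone_loss(replicas_per_zone, z) for z in replicas_per_zone)

if __name__ == "__main__":
    three_zones = {"zone-a": 1, "zone-b": 1, "zone-c": 1}
    two_zones = {"zone-a": 2, "zone-b": 1}
    print(tolerates_any_single_zone_loss(three_zones))  # True: 2 of 3 remain
    print(tolerates_any_single_zone_loss(two_zones))    # False: losing zone-a leaves 1 of 3
```

Under this model two zones are never enough: one zone always holds at least half the replicas, and losing it leaves no strict majority.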

Audit logging is just as important, and too many AI vendors still treat it as an afterthought. Enterprises need to know who queried what, who deleted what, and when collection or snapshot changes happened. Qdrant’s structured JSON logs with user-key attribution and configurable retention give security, legal, and compliance teams the evidence they need. For regulated industries, that is not a nice-to-have. It is the difference between piloting an AI system and approving it for real use.
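
As an illustration of what structured, attributed logs make possible, the sketch below filters JSON-lines audit records by action, user key, and time to answer "who deleted what, and when". The field names (`timestamp`, `user_key`, `action`, `collection`), the action values, and the sample records are all hypothetical; consult Qdrant’s audit logging documentation for the actual schema.

```python
import json
from datetime import datetime

# Hypothetical JSON-lines audit records; the real Qdrant schema may differ.
SAMPLE_LOG = """\
{"timestamp": "2025-01-10T09:15:00Z", "user_key": "key-analytics", "action": "query", "collection": "support_docs"}
{"timestamp": "2025-01-10T09:20:00Z", "user_key": "key-admin", "action": "delete_points", "collection": "support_docs"}
{"timestamp": "2025-01-11T14:02:00Z", "user_key": "key-admin", "action": "create_snapshot", "collection": "support_docs"}
"""

def parse_records(text):
    """Parse one JSON object per non-empty line."""
    return [json.loads(line) for line in text.splitlines() if line.strip()]

def filter_records(records, action=None, user_key=None, since=None):
    """Keep records matching the optional filters; `since` must be timezone-aware."""
    out = []
    for rec in records:
        # Normalize the trailing "Z" so fromisoformat yields an aware UTC datetime.
        ts = datetime.fromisoformat(rec["timestamp"].replace("Z", "+00:00"))
        if action is not None and rec["action"] != action:
            continue
        if user_key is not None and rec["user_key"] != user_key:
            continue
        if since is not None and ts < since:
            continue
        out.append(rec)
    return out

if __name__ == "__main__":
    records = parse_records(SAMPLE_LOG)
    for rec in filter_records(records, action="delete_points"):
        print(rec["timestamp"], rec["user_key"], rec["collection"])
    # prints: 2025-01-10T09:20:00Z key-admin support_docs
```

Because each record is a self-contained JSON object with a user attribution field, this kind of question can be answered with a filter rather than by grepping free-form text.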

The counter-argument

The strongest critique is that Qdrant is dressing up a database with cloud features that every serious infrastructure vendor already offers. GPU acceleration, multi-zone resilience, and audit logs sound like standard platform hygiene, not a breakthrough. There is also a broader objection: the vector database market is crowded, and enterprises may prefer to consolidate on a larger cloud provider rather than adopt a specialized service.

That objection is fair, but it misses the point of specialization. Vector retrieval has unique performance characteristics, and AI workloads punish generic database assumptions. A general-purpose cloud stack can offer availability and logging, but it does not automatically optimize the indexing path for embeddings or the latency profile of semantic search. Qdrant does not need to reinvent enterprise infrastructure to win. It needs to make retrieval fast enough, durable enough, and auditable enough that teams stop treating it as experimental.

What to do with this

If you are an engineer or platform owner, stop evaluating vector databases on demo quality and start testing them under failure, load, and audit pressure. Benchmark retrieval latency with realistic embedding sizes, kill nodes during writes, and verify whether your logs can satisfy security review. If you are a founder or PM, treat retrieval as core product plumbing and budget for the features that keep it trustworthy. The AI stack is moving from prototype to operations, and the winners will be the vendors that make the boring parts fast, durable, and visible.
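
A minimal latency harness along these lines might time many calls to a search function and report tail percentiles rather than an average, since p95 and p99 are what users feel across a multi-step conversation. `fake_search` is a hypothetical stand-in; in a real test you would replace it with a call to your own vector database client, use realistic embedding sizes, and rerun it while nodes are being killed.

```python
import random
import statistics
import time

def measure_latencies_ms(search_fn, queries):
    """Call search_fn once per query and return wall-clock latencies in ms."""
    latencies = []
    for q in queries:
        start = time.perf_counter()
        search_fn(q)
        latencies.append((time.perf_counter() - start) * 1000.0)
    return latencies

def summarize(latencies_ms):
    """Return p50/p95/p99 from statistics.quantiles with 100 buckets (99 cut points)."""
    cuts = statistics.quantiles(latencies_ms, n=100)
    return {"p50": cuts[49], "p95": cuts[94], "p99": cuts[98]}

def fake_search(query):
    """Hypothetical stand-in for a real vector search call: sleeps 1 to 3 ms."""
    time.sleep(random.uniform(0.001, 0.003))
    return []

if __name__ == "__main__":
    dim = 1536  # assumed embedding size; match your production model
    queries = [[random.random() for _ in range(dim)] for _ in range(200)]
    stats = summarize(measure_latencies_ms(fake_search, queries))
    print({k: round(v, 2) for k, v in stats.items()})
```

Reporting percentiles instead of a mean matters because a handful of slow lookups, for example during a failover, can hide behind a healthy-looking average.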
